
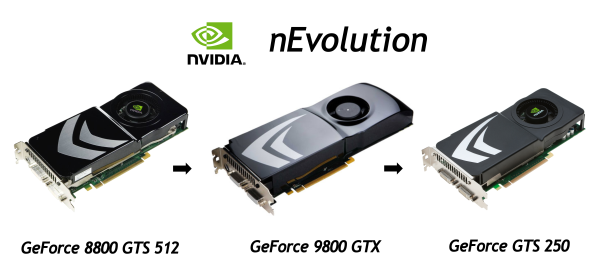

Early in spring, NVIDIA suddenly decided to make another announcement. According to the new naming policy, this model is called GeForce GTS 250. Here GTS stands for a lower performance level than GTX, and 250 is the model number.

Judging by the card's characteristics, this article could have fit into this one picture or a single line: "GeForce GTS 250 = GeForce 9800 GTX+". Or "GeForce GTS 250 = GeForce 9800 GTX+ + 1GB", although this amendment changes nothing. However, being model journalists, we have published almost a full article. It's "almost" because we skipped the theoretical data, which haven't changed for a long time, and the synthetic tests, which make no sense in this case.

So, GeForce GTS 250 is an update of the popular GeForce 9800 GTX+ that competes with RADEON HD 4850. Let's retrace the history of all such "updates".

The first model based on the full-fledged G92 with all stream processors unlocked was GeForce 8800 GTS 512MB, released in late 2007 at a recommended price of $349-399. We wondered why it hadn't got its own name instead of confusing users with the diverse 8800 series. Then came GeForce 9800 GTX: almost the same card, just a tad faster, with a new PCB design. This time we wondered why a good design had to be changed for the sake of a small increase in frequencies. The 9800 GTX was announced in February 2008 at a recommended price of $299-349. In June 2008 it was overhauled again (slightly overclocked) into GeForce 9800 GTX+, and its price went down to $229.

And now the same G92-based card (manufactured by the 55nm process technology) with a simplified PCB design (resembling the good old 8800 GTS 512) has been overhauled one more time. Now it's called GeForce GTS 250. It doesn't matter to potential users what this card is called. What really matters is that the 512MB modification will now cost only $129, and the 1GB card will cost $149.
However, these are recommended prices for the North American market, and prices in our parts will be noticeably higher for several months.

Despite all our gibes, GeForce GTS 250 had a timely rollout for several reasons. The first one has already set our teeth on edge: the notorious world recession. At such times people try to buy only bare necessities, or at least moderate their appetites. So the GTS 250 is a good choice: it offers a very good performance level, sufficient for all multiplatform projects and most PC-exclusive games, for just $130-150. The second reason is the impending spring launch of competing Low-End solutions from AMD (below $100) and another overhauled product from NVIDIA for the same sector, plus a price drop for more expensive products. By the way, AMD has already responded to the lower price of the GTS 250 by decreasing prices for RADEON HD 4850 and HD 4870.

So what's so special about this solution from NVIDIA? What can it offer its users besides the third minor update of the same product (8800 GTS 512 -- 9800 GTX -- 9800 GTX+ -- GTS 250)? Representatives of the company mention the following features (versus the competing product, not the old G92-based cards): support for NVIDIA CUDA, NVIDIA PhysX, and NVIDIA 3D Vision, as well as "readiness" for Microsoft Windows 7. We shall analyze these features a tad later, as we have nothing to add about the new graphics card itself.

You might have already figured out that the theoretical part of the GeForce GTS 250 review will be short. We have already examined the G92 architecture, which is based on G80, and the 55nm G92b is no different. The only differences from GeForce 9800 GTX(+) that matter to common users are lower power requirements and slightly decreased heat release. And don't forget about prices. If you are not familiar with the architecture of G92, you can read about it in our baseline review. This architecture is a development of G8x/G9x with some modifications.
Before you read this article, you should study the baseline theoretical articles -- DX Current, DX Next, and Longhorn. They describe various aspects of modern graphics cards and architectural peculiarities of products from NVIDIA and AMD.

These articles predicted the current situation with GPU architectures, and confirmed many of our assumptions about future solutions. The detailed information about NVIDIA G8x/G9x unified architecture is provided in these articles:

We proceed from the assumption that our readers are already familiar with the G9x architecture. Now we shall examine detailed characteristics of the first card from the GeForce GTS 200 series, based on a 55nm G92.

GeForce GTS 250

GeForce GTS 250 reference specs

There is nothing interesting here: the "new" graphics card with a 55nm G92 chip does not differ from GeForce 9800 GTX+ at all. However, the new model can be partially justified by the 1GB of video memory installed (instead of 512MB as in the 9800 GTX+). It significantly improves performance in heavy modes with maximum graphics quality, high resolutions, and full-screen antialiasing. There are also 2GB modifications, but their advantages have to do with marketing rather than with reality.

So top models of GeForce GTS 250 should really be faster than GeForce 9800 GTX+ owing to their increased memory volume, and some bleeding-edge games will benefit even at moderate resolutions. It's all good, but some manufacturers have already rolled out GeForce 9800 GTX+ cards with 1GB of on-board memory.

The 55nm process technology of G92b and a simplified PCB allowed NVIDIA to design a card similar to GeForce 9800 GTX in characteristics, but at a lower price and with reduced power consumption and heat release. As a result, GeForce GTS 250 now has only one 6-pin PCI-E power connector.

We already wrote about the name of the announced model at the very beginning. In this case NVIDIA just brought the names of its G92-based cards in line with the modern rules. The GeForce title is followed by an index (GTX, GTS, GT, G) and ends in the model number, where the first figure stands for the generation (it means little, though, because Series 200 includes cards based on both GT200 and G92), and the remaining two figures show the position of the model inside the series.

Architecture and features

We have nothing interesting to tell you about the architecture of GeForce GTS 250, because G92 hasn't changed since autumn 2007; it's just smaller and consumes less power. The move to the 55nm process technology makes NVIDIA GeForce GTS 250 cheaper, quieter, and more power efficient than GeForce 9800 GTX+.
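The naming rule described above (an index, then a three-digit model number whose first figure is the generation) can be sketched as a small parser. This is only an illustration of the rule as stated in the text: the `GeForceName` type, the `parse_name` function, and the regular expression are hypothetical names introduced here, not anything published by NVIDIA.

```python
import re
from typing import NamedTuple


class GeForceName(NamedTuple):
    index: str       # performance index: GTX, GTS, GT or G
    generation: int  # first figure of the model number
    position: int    # remaining two figures: place inside the series


# Longer indexes are listed first so that "GTS" is not cut short to "GT" or "G".
_PATTERN = re.compile(r"^GeForce\s+(GTX|GTS|GT|G)\s+(\d)(\d{2})$")


def parse_name(name: str) -> GeForceName:
    """Split a new-style name such as 'GeForce GTS 250' into its parts."""
    match = _PATTERN.match(name.strip())
    if match is None:
        # Old-style names (e.g. 'GeForce 9800 GTX+') do not follow the rule.
        raise ValueError(f"not a new-style GeForce name: {name!r}")
    index, generation, position = match.groups()
    return GeForceName(index, int(generation), int(position))


if __name__ == "__main__":
    print(parse_name("GeForce GTS 250"))
```

As the text notes, the generation figure says little about the chip inside: a name parsed this way cannot tell a G92-based card from a GT200-based one.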
In fact, it would be very interesting to know what NVIDIA's GPU departments are busy with. We haven't seen anything really new for almost three years, only small improvements (OK, GT200 had lots of improvements, but it's still only an improved G8x), or just modified frequencies, designs, and titles. The GT200 architecture, announced last summer, is a modified G8x/G9x, which traces back to G80 (2006). The main differences between G92 and G80 were the 65nm process technology, a video decode unit, and some improvements in texture units. As for GT200, its changes are mostly quantitative. So we are still waiting for a really new architecture (supporting the DirectX 11 API) from NVIDIA, and we hope that the recession won't affect these plans.

NVIDIA CUDA

Like all the other modern solutions from this company, GeForce GTS 250 supports CUDA. This technology was described in two of our articles, so we shall not dwell on its description here. What's important, programs for common users that support CUDA are already appearing on the market. One of the CUDA pioneers is Elemental Badaboom, a video transcoder. Now we can mention other useful applications with CUDA support (we speak of immediate use, so Folding@Home and SETI@Home do not belong here): Pegasys TMPGEnc 4 with GPU-accelerated video post processing, and ArcSoft TotalMedia Theatre with GPU-accelerated video scaling to HD resolutions.

Pegasys TMPGEnc 4 (aka Tsunami MPEG Encoder) is a video encoder for various formats, which also offers several editing features and special post processing filters. TMPGEnc has recently started using CUDA to accelerate video decoding (video encoding acceleration is not supported yet) as well as such filters as color correction, denoise, and smart sharpen. These filters require high computing capacity, so processing a video stream with them enabled takes much more time.
NVIDIA cards supporting CUDA can apply these filters 4-5 times as fast as a CPU. Perhaps it's not very impressive now, but it can still make life easier for some users.

ArcSoft TotalMedia Theatre is a video player for various formats that supports NVIDIA CUDA for high-quality scaling from DVD and other low-res formats to HD resolutions (1280x720, 1920x1080). The ArcSoft SimHD upscaling technology uses CUDA to offload some of the computations to a GPU, so the procedure is performed in real time at 30 frames per second. By comparison, ordinary CPUs cope with this task at only several FPS.

Don't forget that it's just theory so far. We are planning to publish a practical analysis of all such features of GPUs from NVIDIA and AMD. Let's hope that more applications will appear by the time we write that article, especially those that support both solutions.

NVIDIA PhysX

All the features mentioned above are not very important for a gaming graphics card. What really matters is improvements in games. We have written about GPU-assisted physics computing many times. PhysX makes it possible to use such computationally complex effects as liquid and cloth simulation, as well as to compute physics for thousands of objects simultaneously.

Support from the gaming industry is very important here, as it determines the success of any undertaking. And PhysX has this support. Three big game publishers (Electronic Arts, Take-Two Interactive, and THQ) announced PhysX support in their games last year. Considering that games actively using PhysX effects accelerated by NVIDIA cards started appearing as early as last year, we can expect the number of such games to grow in 2009. Anabiosis and Mirror's Edge are two examples. PhysX technology does not affect their gameplay, but it brings a new user experience by raising the interactivity of the game world and adding high-quality cloth, glass, smoke, and liquid simulation. Games with PhysX effects offer higher interactivity and physically correct object behavior.
It goes without saying that all games are also developed to run on systems that cannot accelerate PhysX effects on the hardware level. There are a lot of such systems, and they have to compute everything on their CPUs, which cannot provide high performance in this task. For example, systems without hardware PhysX support slow down below 30 fps on some levels of Mirror's Edge, while GeForce GTS 250 provides comfortable gameplay in this game even with maximum graphics quality at up to 2560x1600.

GeForce 3D Vision

One of the latest technologies from this company supported by GeForce GTS 250 is GeForce 3D Vision. It's a ready solution for viewing stereo images with shutter glasses. Shutter glasses ensure better image quality than passive glasses with polarization filters, both in resolution and in viewing angles.

Another major advantage of GeForce 3D Vision is its excellent compatibility with 3D games. NVIDIA's stereo driver provides native support for stereo rendering in games (over 300 titles already). Other than games, it supports 3D video players (e.g. 3dtv Stereoscopic Player) and viewing stereo photos (with a special bundled utility). What's important, stereo drivers from NVIDIA are based on the same code as the usual drivers, so they do not use software wrappers that lower performance. Stereo support in games is implemented like SLI profiles, with ready settings for each game. NVIDIA employees research games and find optimal settings for each title, so users don't have to do it.

However, the main problem of GeForce 3D Vision is not the $200 you have to pay for the glasses, and not the doubled requirements to video performance (a frame has to be rendered for each eye), but the practically total absence of compatible video output devices in our parts. You actually need a monitor that can receive a 120-Hz signal via DVI.
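The arithmetic behind the 120-Hz requirement can be sketched in a few lines. The 165 MHz limit of a single DVI link comes from the DVI specification; the 1680x1050 resolution is our assumption (a typical 22-inch panel of the time), and the function names are hypothetical. The estimate counts only visible pixels, so it is a lower bound on the real pixel clock.

```python
# Maximum pixel clock of one DVI link, per the DVI 1.0 specification.
SINGLE_LINK_DVI_MHZ = 165.0


def per_eye_rate(display_hz: float) -> float:
    """Shutter glasses alternate between the eyes, so each eye gets half the frames."""
    return display_hz / 2


def active_pixel_rate_mhz(width: int, height: int, refresh_hz: float) -> float:
    """Lower bound on the pixel clock: visible pixels only, blanking ignored."""
    return width * height * refresh_hz / 1e6


def exceeds_single_link(width: int, height: int, refresh_hz: float) -> bool:
    """True means a single link is certainly not enough.

    False is not conclusive, because blanking intervals are left out.
    """
    return active_pixel_rate_mhz(width, height, refresh_hz) > SINGLE_LINK_DVI_MHZ


if __name__ == "__main__":
    width, height = 1680, 1050  # assumed resolution, not taken from the article
    print(per_eye_rate(120))                         # frames per second for each eye
    print(active_pixel_rate_mhz(width, height, 120))
    print(exceeds_single_link(width, height, 120))
```

Under these assumptions a 120-Hz signal needs well over 165 MHz, which is why a single DVI link is not enough, while each eye still sees an ordinary 60 frames per second.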
Only two monitors can accept such a signal so far: SAMSUNG SyncMaster 2233RZ and ViewSonic VX2265wm, and they are not yet available in our stores. Many modern LCD TV sets can do 100 Hz or 120 Hz, but this concerns only the display refresh rate: they cannot receive a 120-Hz signal via HDMI, so they cannot be used for stereo output. It is possible to use CRT monitors that support refresh rates above 100 Hz, but they must have a dual-link DVI input, which is also a very rare feature. You can use Mitsubishi 1080p DLP devices and DepthQ HD 3D projectors, but they will hardly expand the circle of potential users of GeForce 3D Vision.

However, in spite of some problems, this stereo technology is very interesting, and we'll try to examine it in more detail in the nearest future. For now, its main drawback is the very tough requirements to a monitor. The situation should improve as such monitors and TV sets appear on the market. We'll be looking forward to it.

To conclude the theoretical part of the article: although NVIDIA GeForce GTS 250 is a complete copy of GeForce 9800 GTX+ in terms of technical characteristics, it's an excellent market offer, and its 1GB version should outperform the 512MB 9800 GTX+. The GTS 250 promises to become a successful graphics card and a strong competitor to AMD RADEON HD 4850. However, performance in modern 3D games will be covered in the next part of our article. That's where we shall see the real situation with modern solutions. These tests will dot the i's and cross the t's concerning relative rendering performance and, consequently, the expediency of this overhauled solution.

Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.