Longhorn

Microsoft improves graphics by 55%


Remember the ad about some women's cosmetics? "The research showed that the complexion got better by 37% and the skin condition was improved by 70%". Isn't it nice? Let's try to estimate how much the new operating system codenamed Longhorn and its graphics API will enhance developers' options and the quality of games. Of course, we do not aspire to one percent accuracy, and the 55% used in the title has absolutely no grounds. But it looks good, doesn't it?

The new driver architecture and graphics system in Longhorn

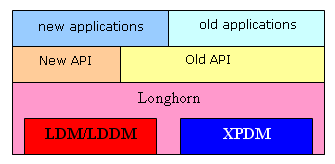

No wonder users get lost in codenames, there are so many of them (Avalon, Longhorn, WGF 1.0 and 2.0, etc). Let's draw a clear picture and arrange everything in orderly pigeonholes.

Longhorn

It's a new operating system that will replace the various Windows XP editions in approximately 2006. Unlike Windows XP and Windows 2000, which are based on different versions of the same kernel and have almost identical driver models, Longhorn promises considerable changes not only in its interface and API, but also in the very heart of the system: the kernel and the memory and resource management architecture. The new operating system will support two driver models: one is retained for compatibility with old drivers (the XP/2000 model), and the other is for new drivers developed specially for Longhorn and later versions of this OS. This new model (a standard for drivers and their interaction with the OS kernel and API) is called LDM (Longhorn Driver Model). Its most important and interesting part is LDDM (Longhorn Display Driver Model), which is responsible for all graphics. All landmark graphics features will be implemented on top of new LDDM drivers; old model drivers will be able to provide only the base level of graphics hardware support (what is available in Win XP).

It goes without saying that new applications can address the old as well as the new APIs, while old applications know only the old ones. APIs interact with the system and hardware via drivers. Old drivers are installed in compatibility mode via the Windows XP driver interface (XPDM), and new drivers in the new Longhorn mode (LDM).

LDM

Interestingly, the new driver model provides two levels: basic and advanced. Basic drivers can be developed for current hardware as well, without requiring the devices to have any functions specifically intended for the new Longhorn features. These drivers will provide the minimum level necessary for the new API and the new driver model, though not always an optimal one in terms of performance or usability. Advanced drivers require special hardware support in some specific areas, first of all resource management, virtual memory, and concurrent command streams from applications to hardware devices. That is, hardware must be designed with regard to LDM specifications and understand some special system data structures. In this case the new functions of the kernel and the Longhorn driver model will be executed in the fastest, most reliable and effective way.

Of course, right after this operating system is released, most drivers will operate in the XP compatibility mode or in the basic LDM mode; it will take time for a variety of Longhorn-designed hardware to appear. But the number of devices with full-fledged advanced LDM support will gradually grow, video cards first of all, since the new system features are especially important to them. Think about it: new drivers will be able to considerably reduce system call latencies (the main scourge of modern 3D hardware acceleration) and raise the efficiency of memory and resource management. It will all be done automatically, requiring no effort from applications or programmers.

The new driver model and the new LDDM will offer the following set of important innovations:

- Virtualization of states. Each application has its own state for all settings of the graphics pipeline; these states do not overlap or interfere with each other.

- Resource virtualization and management (allocating memory, real and virtual, moving to the local memory of an accelerator and back, etc.) is performed automatically; this process is completely transparent to applications. The only reason an allocation of new resources can fail is exhausted virtual memory. Resources in the advanced driver model may be unavailable at any given moment and are created in memory on request, "on the fly": that is, when it's time to render them, they will appear on screen. In the basic driver model, all resources must be loaded and available, but applications may be evicted from main memory into the swap file depending on how frequently they are accessed.

- Execution virtualization. Applications can use hardware accelerators simultaneously and concurrently, without interfering with each other. It's up to the system to distribute execution time among the various command streams.

- Command validation. Parameters and commands are validated (like in OpenGL), which drastically increases application stability. The advanced driver model allows this validation to be performed completely on the hardware level (!) without loading the CPU.

- Considerable reduction of the overhead (delays) of API calls, of changing the accelerator's parameters and settings (states), and of other operations performed via the driver and hardware. In fact, it's the most important innovation for developers, which can significantly increase gaming performance and the variety of visual effects.

- Hot-plugging graphics devices is supported.
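To make state and execution virtualization from the list above a bit more tangible, here is a minimal sketch in Python. It is only an illustration under our own assumptions: the names `AppContext` and `run_round_robin`, the fixed time slice, and the round-robin policy are hypothetical and are not taken from any LDDM specification. Each application keeps a private copy of its pipeline state, and a scheduler alternates between the command streams.

```python
from collections import deque


class AppContext:
    """Hypothetical per-application context: private state plus a command stream."""

    def __init__(self, name):
        self.name = name
        self.state = {}          # private copy of pipeline settings
        self.commands = deque()  # pending command stream

    def set_state(self, key, value):
        self.state[key] = value

    def submit(self, command):
        self.commands.append(command)


def run_round_robin(contexts, slice_size=2):
    """Give each context up to `slice_size` commands per turn until all queues drain.

    Returns a log of (application name, command, state snapshot) tuples.
    """
    log = []
    pending = deque(contexts)
    while pending:
        ctx = pending.popleft()
        for _ in range(min(slice_size, len(ctx.commands))):
            log.append((ctx.name, ctx.commands.popleft(), dict(ctx.state)))
        if ctx.commands:
            pending.append(ctx)  # still has work, so it goes to the back of the line
    return log


game = AppContext("game")
game.set_state("blend", "additive")
for i in range(3):
    game.submit(f"draw_{i}")

player = AppContext("video_player")
player.set_state("blend", "opaque")
player.submit("present_frame")

execution_log = run_round_robin([game, player])
for entry in execution_log:
    print(entry)
```

The two applications take turns on the "accelerator", and each command is executed with the state of its own application, so the game's blending mode never leaks into the video player.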

These and other delights are promised by the new driver model and the new graphics core of Longhorn. Admit it, the prospects are bright. We shall see what it will be like in practice. And now let's take a detailed look at the situation with graphics APIs:

Graphics API in Longhorn

User and system graphics applications are on top of our diagram. Below is the line of various APIs that the applications address. Let's go from left to right one after another:

- WGF 2.0 is the new 3D API developed for Longhorn and future OS, it will replace DX 9 and its modifications (we can say that it's the new name for D3D10). It possesses significant innovations and differences in the graphics pipeline (we'll discuss it later) and thus it offers landmark capacities for 3D hardware acceleration. The new pipeline (under WGF 2.0 on the diagram) requires new drivers, old drivers in compatibility mode cannot provide the WGF 2.0 required functionality. This API is intended for the new hardware, which will be designed with regard to Longhorn and WGF 2.0 — old accelerators, including the current leaders, will not be able to provide necessary functionality, so API WGF 1.0 will be quite enough for them (see below).

- D3D9 and other old 3D APIs are retained for compatibility. They do fine with old drivers and are no different from what we had in Windows 2000/XP as far as applications are concerned. API D3D9 corresponds to the latest 9.0c specification. All older APIs (including D3D5, 6, and 7) are excluded and emulated by being translated into D3D9 calls, all outdated fixed functions are emulated by shaders. Forgotten D3D RM (retained mode) is removed and not supported even for compatibility's sake.

- DX VA (DirectX Video Acceleration) is a new system and API for hardware acceleration of video streams. It's intended for high-performance hardware processing of video streams, up to and including HD-DVD resolutions. It also includes a content protection system that prevents the content from being intercepted or modified while it's processed by the CPU or GPU. Hardware acceleration can use special shaders executed by the GPU. Protected data transferred over general-purpose buses is encrypted and can then be decrypted by video cards on the hardware level. Moreover, it provides an authentication system in which the system checks drivers and drivers check accelerators. I wonder how efficient this system will be and how soon it will be hacked ;-)

- WGF 1.0 is also a new API, functionally based on the D3D9 graphics features with minor changes and innovations (it's sometimes referred to as D3D9.L, but we shall call it D3D9+). From the programmer's point of view, the API differs from D3D9 in many aspects and calls, including considerably easier resource management, but the graphics pipeline remains the same: it provides the flexibility and functionality familiar from D3D9, and nothing more. That is, it's more convenient to develop applications for WGF 1.0 than for the old D3D9, but they will not get access to the landmark features. They will, however, run faster and become more reliable due to the above-mentioned LDDM advantages, which WGF 1.0 tries to use as far as possible. This API is an ideal match for state-of-the-art hardware, provided the drivers are overhauled for the new LDM, which won't take very long: it should happen by the time the operating system is released or even earlier. The key innovations include sharing resources among applications and processes (for example, a single virtual frame buffer for several applications) and automatic memory management, which is limited only by the available virtual memory, not by the physical size of local or system memory.

- DWM/Avalon is a superstructure over WGF 1.0. It's a graphics window API and a new DWM (Desktop Window Manager), which differs significantly from what we had before (including Windows XP) in its approach to window management and rendering. Now, like in Unix (X-Windows), every application is rendered in its own graphics space, a kind of virtual window, which has its own graphics context and state and is isolated from the virtual buffers of other applications. DWM is responsible for the final rendering of these prepared windows, their movement, and their mutual sorting (overlapping windows). A more complex scheme, for example a 3D window manager, can be implemented without any special problems. This approach leads to increased memory consumption, but it delivers us from the headache of constantly redrawing windows when top windows are moved and in other operations. No more nasty jerks, temporarily damaged images at peak load, etc. There is no more such thing as exclusive control of the screen; it's only emulated. There is no chance of the system losing resources when switching between full-screen applications. The new DWM will provide various multi-monitor luxuries, from assigning selected windows to a given monitor and independent desktops on different monitors to the classic multi-display (one desktop split across several displays). Another major innovation is scaling support for fonts and windows, support for displays with non-standard and high DPI, and D3D9 shaders for GUI visual effects and for drawing and animating windows and controls.

- OpenGL: everything is as it used to be. There are two options: either an ICD driver installed from the video card's manufacturer with its own graphics pipeline (the ginger-colored unit), or translation of OpenGL 1.4 into WGF 1.0, followed by the standard path to the XPDM or LDM graphics D3D driver. As is well known, the second option is slow; it will not do for games and just provides compatibility for other applications.
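The automatic memory management mentioned for LDDM and WGF 1.0 (allocation limited only by virtual memory, resources moved into local memory on demand) can be sketched roughly as follows. This is a toy model under our own assumptions: the class `ResourceManager`, its method names, and the least-recently-used eviction policy are hypothetical, since the text does not say which policy the real system uses.

```python
from collections import OrderedDict


class ResourceManager:
    """Toy model: allocations are bounded by virtual memory, residency by local memory."""

    def __init__(self, local_capacity, virtual_capacity):
        self.local_capacity = local_capacity
        self.virtual_capacity = virtual_capacity
        self.sizes = {}                # every allocated resource -> size
        self.resident = OrderedDict()  # resources in local memory, least recently used first

    def allocate(self, name, size):
        # The only way to fail is to run out of virtual memory.
        if sum(self.sizes.values()) + size > self.virtual_capacity:
            raise MemoryError("virtual memory exhausted")
        self.sizes[name] = size

    def use(self, name):
        """Make a resource resident before rendering; return the names evicted for it."""
        evicted = []
        if name in self.resident:
            self.resident.move_to_end(name)  # mark as most recently used
            return evicted
        size = self.sizes[name]
        while self.resident and sum(self.resident.values()) + size > self.local_capacity:
            victim, _ = self.resident.popitem(last=False)  # drop the LRU resource
            evicted.append(victim)
        self.resident[name] = size
        return evicted


manager = ResourceManager(local_capacity=100, virtual_capacity=1000)
manager.allocate("terrain", 60)
manager.allocate("sky", 60)
manager.allocate("hud", 30)

first = manager.use("terrain")   # fits, nothing evicted
second = manager.use("sky")      # terrain has to leave local memory
third = manager.use("hud")       # fits next to sky
fourth = manager.use("terrain")  # sky is now the least recently used
```

The application only calls `allocate` and `use`; which resources actually sit in local memory at any moment is decided behind its back, which is the point of the transparency promised above.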

Now let's trace the route of our calls from applications to hardware. The main innovation is that the graphics driver is split into two parts. The first part (the ginger-colored unit, marked as user mode) is executed on the user level and cannot seriously harm the system even if it's buggy or unstable. Its objective is to provide all driver functions that can be executed without critical, tight (intensive) contact with the hardware. There are plenty of such functions, from checking parameters and compiling and optimizing shaders to assembling settings and converting formats into the internal hardware form. The installed driver with the OpenGL graphics pipeline exists on the same level. Then follows the kernel and its most interesting part, the DXG kernel. Here we deal with the zero (privileged) execution level, and errors in this code may ruin normal system operation. The critical part of the graphics driver is also located here and is executed in privileged mode as well. This separation increases system stability, because the volume of third-party code executed on Level 0, where a glitch may crash the whole system, is reduced manifold. Interestingly, assembling the list of commands to be drawn on the hardware level is carried out completely in the user part of the driver. Thus, most of the time spent in the driver will also fall on the protected user part. We are promised a simpler structure for new LDM drivers, which should lower the chance of errors and cut down the development time for new drivers, especially for future Longhorn-designed hardware.
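The split described above can be pictured with a small sketch. Everything in it is hypothetical (the class names, the command names, the `flush` call); it only illustrates the idea that validation and command list assembly happen in the user-mode half, while the privileged half merely accepts finished batches.

```python
class KernelModeDriver:
    """Hypothetical privileged half: only hands finished batches to the hardware."""

    def __init__(self):
        self.submitted_batches = 0
        self.hardware_queue = []

    def submit(self, batch):
        self.submitted_batches += 1
        self.hardware_queue.extend(batch)
        return len(batch)


class UserModeDriver:
    """Hypothetical user-mode half: validates calls and assembles the command list."""

    VALID_OPS = {"set_shader", "set_texture", "draw"}

    def __init__(self):
        self.command_list = []

    def record(self, op, *args):
        # A bad call fails here, inside the application's process, not in the kernel.
        if op not in self.VALID_OPS:
            raise ValueError(f"unknown command: {op}")
        self.command_list.append((op, args))

    def flush(self, kernel):
        # One transition into privileged code for the whole batch.
        batch, self.command_list = self.command_list, []
        return kernel.submit(batch)


kernel = KernelModeDriver()
user = UserModeDriver()
user.record("set_shader", "water")
user.record("set_texture", 0, "waves")
user.record("draw", 1200)
submitted = user.flush(kernel)

try:
    user.record("format_disk")
    rejected = False
except ValueError:
    rejected = True  # the error never reached the privileged half
```

Three API calls cost a single trip into the privileged part, and the invalid call is stopped before it gets anywhere near Level 0.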

The new graphics pipeline and WGF 2.0

That's the tastiest part. The new graphics pipeline.

- Detailed specifications of accelerator features. No CAPS: all accelerators must support every feature included in the specifications. That is, all API features are standard and mandatory. No more discord, everything is under Microsoft control.

- A common unified software model for all shader types: vertex, pixel, and others (if they exist). A new shader model with more flexibility, fewer restrictions, and almost no limits on shader complexity.

- The GPU is highly independent of the CPU: fully automatic checking of parameters and execution of render queues; automatic creation, distribution, and upload of resources; automatic hardware context switching and operation with several command streams; minimal delays when parameters are passed from applications to the accelerator. All these features are part of the new LDDM.

- New stages of the graphics pipeline — exporting intermediate results for further processing and a geometry shader (it will be described later on).

- New data formats for HDR and normals, custom data structures, and even resource arrays (!). For example, a cubic texture is now represented as an array of six regular textures, other options are also possible.

- The new compression algorithm for normals — it's like 3Dc from ATI.

- Integer texture addressing (it's accessed as an array of constants), several simultaneous samples.
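As a rough illustration of the last item (integer addressing and several simultaneous samples), the sketch below treats a texture as a plain 2D array and builds a custom filter from four neighbouring texels. The function names and the clamp-to-edge behaviour are our own assumptions; real shader code would of course be written in HLSL, not Python.

```python
def fetch(texture, x, y):
    """Read one texel by integer coordinates, clamping to the texture edge."""
    height, width = len(texture), len(texture[0])
    x = max(0, min(width - 1, x))
    y = max(0, min(height - 1, y))
    return texture[y][x]


def gather4(texture, x, y):
    """Fetch the 2x2 block of texels whose top-left corner is (x, y)."""
    return [
        fetch(texture, x, y),
        fetch(texture, x + 1, y),
        fetch(texture, x, y + 1),
        fetch(texture, x + 1, y + 1),
    ]


def custom_filter(texture, x, y, weights=(0.25, 0.25, 0.25, 0.25)):
    """Weighted sum of the four neighbours: a box filter with the default weights."""
    return sum(w * t for w, t in zip(weights, gather4(texture, x, y)))


texture = [
    [0.0, 1.0],
    [2.0, 3.0],
]
box = custom_filter(texture, 0, 0)        # average of all four texels
brightest = max(gather4(texture, 0, 0))   # a non-linear "filter" is just as easy
edge = gather4(texture, 1, 1)             # out-of-range reads are clamped
```

Once the raw neighbours are available, any combination of them (average, maximum, a comparison for shadow maps) is ordinary shader arithmetic.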

That's what it looks like on a flowchart:

Let's discuss the main innovations from left to right. First of all, uniform access to textures (with identical features) is now possible at three pipeline stages: in the vertex shader, then in the geometry shader, and of course in the pixel shader. Samplers are accessible at all stages. They allow several neighbouring values to be fetched from a texture by a single calculated texture coordinate, which may significantly facilitate the implementation of custom filtering algorithms or operations with non-trivial data representations and special maps. The usual vertex shader stage is followed by the geometry shader. What is it? It's a shader that handles triangles (assembled from vertices) as entities before they are drawn. That is, it can manipulate triangles as objects, including some control or additional vertex parameters. It can change these parameters, calculate new parameters specific to the triangle as a whole, and then pass them to a pixel shader. It can mark a triangle with a predicate (so that it's then processed differently depending on the predicate value), or exclude it from the candidates to be drawn. Unfortunately and unexpectedly, this shader cannot create new geometry and new triangles at its output, but it's followed by another new stage: Stream Out.
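The geometry shader as described here can be modelled as a function that receives a whole triangle and returns either nothing or the same triangle with extra per-triangle data. The sketch below is only our illustration of that description: the dictionary layout, the face normal as the "triangle-specific parameter" and the `front_facing` flag as the predicate are hypothetical choices.

```python
def geometry_shader(triangle):
    """Per-triangle stage: return None to discard, else the triangle plus face data."""
    (ax, ay, az), (bx, by, bz), (cx, cy, cz) = triangle
    # Two edge vectors of the triangle.
    ux, uy, uz = bx - ax, by - ay, bz - az
    vx, vy, vz = cx - ax, cy - ay, cz - az
    # Their cross product is a parameter of the triangle as a whole.
    normal = (uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx)
    if normal == (0, 0, 0):
        return None  # degenerate triangle: excluded from drawing
    return {
        "vertices": triangle,
        "face_normal": normal,
        "front_facing": normal[2] > 0,  # predicate for later stages
    }


def run_geometry_stage(triangles):
    """Apply the shader to every triangle; the output never grows."""
    shaded = (geometry_shader(t) for t in triangles)
    return [t for t in shaded if t is not None]


triangles = [
    ((0, 0, 0), (1, 0, 0), (0, 1, 0)),  # counter-clockwise, faces the viewer
    ((0, 0, 0), (0, 1, 0), (1, 0, 0)),  # same triangle, opposite winding
    ((0, 0, 0), (1, 1, 1), (2, 2, 2)),  # all vertices on one line
]
result = run_geometry_stage(triangles)
```

Note that the stage can only keep or drop triangles, so the output is never longer than the input, in line with the limitation lamented above.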

At last it's officially possible to return data that was processed in the vertex part of the pipeline to a buffer (memory) before it's passed on and drawn in the pixel section. Then it can be read again as input. This allows a lot of things that were previously impossible or very hard to implement. For example, you can now generate new geometry and tessellate surfaces into a multiple of the original number of triangles by this or that algorithm. In order to do it, you should use two passes in the vertex section, where the first one exports the data stream and stream division coefficients between them (you may remember that DX 9c allowed setting a divider for vertex indices when they were assembled from several independent streams, one for each stream). It's a tad less natural than just generating new vertices and new geometry in a vertex shader, but at last this option has become available. You can even route the data in an output -> input loop and use predicates to sample and decimate geometry in a loop, to simplify a model, for example.
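The output -> input loop can be imitated on the CPU to show the principle. In this sketch a flat list plays the role of the stream-out buffer, and each pass reads the buffer written by the previous one. The midpoint subdivision scheme and all function names are our own assumptions chosen for simplicity; they say nothing about how a real WGF 2.0 accelerator would split the work between passes.

```python
def midpoint(a, b):
    """Point halfway between two vertices of any dimension."""
    return tuple((p + q) / 2 for p, q in zip(a, b))


def subdivide_pass(stream):
    """One pass over a flat vertex stream (3 vertices per triangle).

    Every triangle is replaced by four smaller ones; the result is the
    buffer that the next pass will read.
    """
    out = []
    for i in range(0, len(stream), 3):
        a, b, c = stream[i:i + 3]
        ab, bc, ca = midpoint(a, b), midpoint(b, c), midpoint(c, a)
        out += [a, ab, ca,
                ab, b, bc,
                ca, bc, c,
                ab, bc, ca]
    return out


def tessellate(stream, passes):
    """Feed the output buffer back to the input `passes` times."""
    for _ in range(passes):
        stream = subdivide_pass(stream)
    return stream


triangle = [(0, 0), (2, 0), (0, 2)]
once = tessellate(triangle, 1)
twice = tessellate(triangle, 2)
print(len(once) // 3, len(twice) // 3)
```

Each trip around the loop multiplies the triangle count by four, which is exactly the kind of geometry amplification that was not possible while the vertex data could only flow forward.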

Thus, the importance of exporting data from the middle of the pipeline can scarcely be exaggerated. Interestingly, this data can then be interpreted not only as vertices, but also as textures or other data structures. It means that procedural textures can be generated fast on the hardware level, and their generated representation can then be used without the extra delay of recalculating them every time.

A very important innovation is HLSL canonization: from now on its compiler will be an inseparable part of the API core instead of a separate unit. Compiling will now be up to WGF 2.0. It will call the driver "for advice" on optimizations and then pass the driver the intermediate byte code, which should be executed almost directly by a WGF 2.0 accelerator. However, this does not rule out another shader optimization stage in the driver, applied to the binary code right before execution.

Let's hope that HLSL will become the main tool for developing shaders and the assembler code will pale into insignificance, as happened with CPUs. HLSL leaves a lot of room for future architecture development and for new performance gains from simple recompilation for new hardware.

We can note two major innovations in the lowest level model of the new shader processors:

- Widespread use of predicates and their optimization in hardware, in order to implement flexible conditions without noticeable performance drops.

- Significant reduction of quantitative limitations: the number of constants and the shader length are practically unlimited now, and the number of temporary registers has grown considerably. Texture sample nesting is not limited, unlike in the basic Model 2.0.
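For readers who have not met predicates before, here is one common way to read the term, shown as a sketch. It is our assumption that WGF 2.0 predicates work along these lines (a flag set by a comparison that selects which result is written, instead of a jump); the function name and the arithmetic are purely illustrative.

```python
def shade_with_predicate(values, threshold):
    """Evaluate both paths for every element and let a predicate pick the result."""
    results = []
    for v in values:
        p = v > threshold  # predicate set by a comparison
        bright = v * 2     # result used when the predicate is true
        dark = v + 10      # result used when the predicate is false
        # Predicated write: no jump in the instruction stream, just a select on p.
        results.append(bright if p else dark)
    return results


shaded = shade_with_predicate([1, 5, 3], threshold=2)
print(shaded)  # [11, 10, 6]
```

All elements execute the same instruction sequence, which is why conditions of this kind are cheap for hardware that processes many pixels or vertices in lockstep.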

In fact, it looks more like a drastically improved Model 3.0 than something fundamentally new. But nothing new is actually necessary; the only possible architectural step (arbitrary access to the data and command memory) is not yet ready to happen for several reasons. The road to it lies through arbitrary generation of new objects (e.g. vertices), which is not yet available in WGF 2.0 either.

Likely terms and scenarios

A tad later, when we have more information, we shall publish a separate article devoted to the features of the new WGF 2.0 shaders (let's say SM 4.0). But now let's draw the bottom line and discuss the prospects. So, even though not everything has come up to our expectations (the geometry shader, for example), we can admit that WGF 2.0 is revolutionary rather than evolutionary. Quantity is transformed into quality. It happens first of all because Microsoft got rid of many annoying vestiges, and because of the harmony of approach that starts to take shape, which is often not typical of this company (OpenGL, beware!). That means parameter checks, centralized compilation, multitasking support, and a window manager that is finally separated from GDI and other immediate graphics APIs. Resource virtualization and the reduction of delays when passing parameters to an accelerator are worth a lot on their own. Outputting data from the pipeline is one of the long-awaited hits. We have no doubts that it's all great; the improvements amount to 85%, not 55%; but the question is when?

Most likely by the end of 2006. First the operating system must come out, then appropriate hardware, and then it's time to write and debug full-fledged advanced LDDM drivers. Only then shall we get a proper tandem of WGF 2.0 + advanced LDDM driver with Longhorn-designed hardware. The first applications to actually use the system's advantages will appear 1-1.5 years after that.

Perhaps, if all goes well and we are lucky, ATI or NVIDIA will manage to implement WGF 2.0 functions as early as the next generation (NV5X and its counterpart from ATI) and even publish some demos. But our sad experience with NV3X and Shaders 3.0 shows that it's not easy to forecast final specifications beforehand. Most likely it will be only the generation after that (NV6X and its counterpart from ATI) that will be true WGF 2.0 hardware. And considering application development, we may just as well speak of the NV7X generation at the end of 2007. That's all right: operating systems have a long life and they don't take over the market right away, and WGF 2.0 will be available only for Longhorn and the subsequent operating systems based on it.

It's interesting to see Microsoft making another step towards total control over the development of the graphics market: from now on accelerators will have to offer almost identical features, and the difference between them will be only in quality and performance. No CAPS, no tyranny of formats: all cards support everything. And "everything" means exactly the features that Microsoft decided to include in the next version of its graphics API. Of course, there will be hacks (vendor-specific features), and one way or another they will be used from D3D applications, even if not in an official way as in OpenGL with its extensions. But they will be harder to implement. Well, it has always been crystal clear who is the ruler that divides and conquers ;-)

However, we won't have to wait for long, as usual. We shall soon see everything with our own eyes!
