

It has not been long since NVIDIA and ATI announced their new High-End products (April 13 and May 4, respectively).

In the latest R420 review, I wrote that we were about to study how the new superpowerful accelerators work in modern games. Older tests proved that all the new accelerators depend heavily on the CPU and on system performance in general, and are limited by them even at maximal AA and anisotropy loads. So the moral is: even those who are ready to pay a lot for a superaccelerator just to play their favourite old games will have to upgrade their whole systems; otherwise, the purchase will be of little use.

In short, what are the basic things we learnt from examining the new products? First of all, RADEON X800 XT has twice as many pipelines as 9800XT and a higher operating frequency. The result is doubled performance at maximal accelerator load (in new games). I emphasise: it's not the 15-20-25 percent we used to see, but a full 100-percent increase, and sometimes even more.

But GeForce 6800 Ultra shows a still more astonishing gain in capabilities and performance over its predecessor, GeForce FX 5950 Ultra. First, the number of pixel pipelines has increased fourfold, and the number of texture units and vertex pipelines has doubled. Besides, NVIDIA engineers have done an excellent job of speeding up the shader units, and the new product really smashes its predecessor, even though it runs at a lower frequency: 400 MHz vs. 475 MHz.

Before, we said that NV38 was equal to R360 only in older games (mostly OpenGL ones, where NVIDIA drivers have supremacy), and only thanks to optimisations and the like. Now the situation is different: both products can compete "within the same weight category". But does that put an end to the war of "optimisations" and speed adjustments at the expense of quality? Hopefully yes, but certain doubts remain. Certainly, useful driver optimisations (e.g. the ones that correct developers' mistakes) will continue to appear, and such optimisations should only be encouraged.

Today, we dwell on three aspects:

Concerning the first point, it is still unclear which cards will go into mass production: the 400 MHz or the 450 MHz ones. Some experts believe that the faster version will be produced on a limited scale, and that two or three vendors will base "special" cards on it, like Gainward's Golden Sample. Other 6800 Ultras will run at 400 MHz (eVGA orders for these products in the US clearly indicate that it is mostly 400 MHz cards that will be produced for sale). As for the second point, we'll have to check whether NV40 gains any performance and, if so, what causes the gain. Besides, we'll also test how safe and stable this version is. And now a brief reminder of the cards we're testing.

Boards

Both of them are reference cards, that is, pre-production samples. Later on, we'll deal with mass-produced cards as well. Both cards have 256 MB of GDDR3 memory with a 1.6ns access time. Memory frequencies are 550 (1100) MHz in NV40 and 575 (1150) MHz in R420. Chip frequencies are 400 and 450 MHz for NV40 and 525 MHz for R420. Each GPU contains 16 pixel pipelines and 6 vertex ones. NV40 also supports shaders version 3.0, and R420 supports 3Dc, the company's own normal map compression technology.
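As a rough illustration of what these figures mean, the sketch below computes a theoretical peak pixel fillrate from the pipeline count and core frequencies quoted above. It assumes each pipeline writes one pixel per clock, which is a simplification and not something measured in this review; the function name `peak_fillrate_mpix` is hypothetical.

```python
# Theoretical peak pixel fillrate, assuming one pixel per pipeline per clock.
# The pipeline count and clocks come from the text; the one-pixel-per-clock
# model is a simplifying assumption, not a measured result.

PIXEL_PIPELINES = 16  # both NV40 and R420, as stated in the text


def peak_fillrate_mpix(pipelines, core_mhz):
    """Return the theoretical peak fillrate in Mpixels/s."""
    return pipelines * core_mhz


CORE_CLOCKS_MHZ = {
    "NV40 (400 MHz)": 400,
    "NV40 (450 MHz)": 450,
    "R420 (525 MHz)": 525,
}

for name, mhz in CORE_CLOCKS_MHZ.items():
    rate = peak_fillrate_mpix(PIXEL_PIPELINES, mhz)
    print(f"{name}: {rate} Mpixels/s theoretical peak")
```

With the same number of pipelines on both chips, this simple model makes the theoretical peak depend on core frequency alone, so the overclocked NV40 would still sit below R420 on paper. Real results also depend on memory bandwidth and drivers.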

To give a rough visual idea of the overclocking potential, here is a photo of both the NV40 and R420 chips:

We see that NV40 has the larger die (which is no surprise considering its 222 mln transistors); however, practice shows that some cards can be overclocked from 400 to 450 MHz. For example, our card, with silver-containing thermal grease, could work at such frequencies once we installed a small additional fan that blew cold air from the videocard to the turbine of the original cooler. Certainly, this doesn't indicate how many GPUs can work at 450 MHz, but at least we see that it's possible. Internet rumours have it that only a few selected chips will work at 450 MHz, but time will tell.

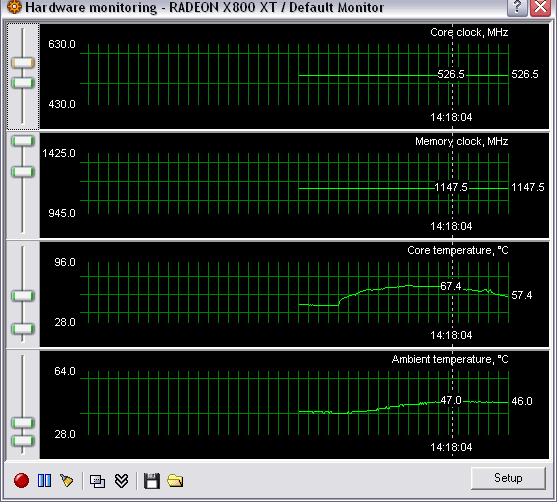

Unfortunately, I can't say anything definite about X800 XT overclocking, as the tested card had a BIOS that did not allow the chip to be overclocked. This issue will certainly be studied later on. It must be said, though, that the latest beta version of RivaTuner (by Alexey Nikolaichuk AKA Unwinder) already fully supports X800: it not only shows the number of pixel pipelines but monitors the temperature as well:

We're now working with the author on a driver patch that would make it possible to change the number of pipelines, including previously disabled ones (as was the case with RADEON 9500/9700; 9800SE/9800). We're facing a lot of problems with protections (an ATI official told the truth when he said it would be much more difficult to change the number of pipelines in software). But we're working on it, so we'll wait and see :-)

Installation and drivers

Configurations of the testbeds:

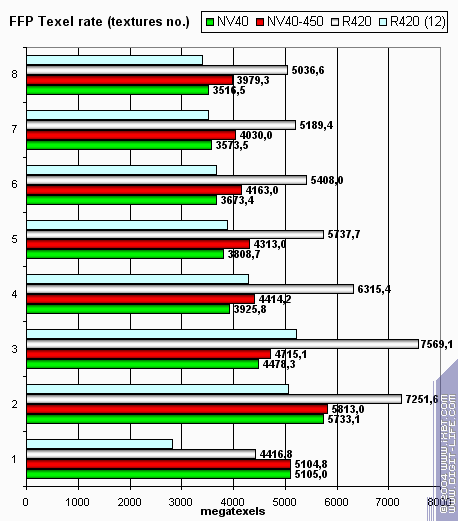

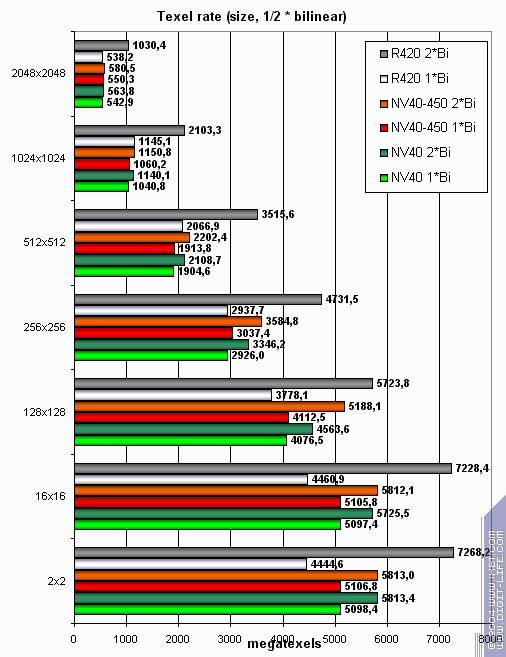

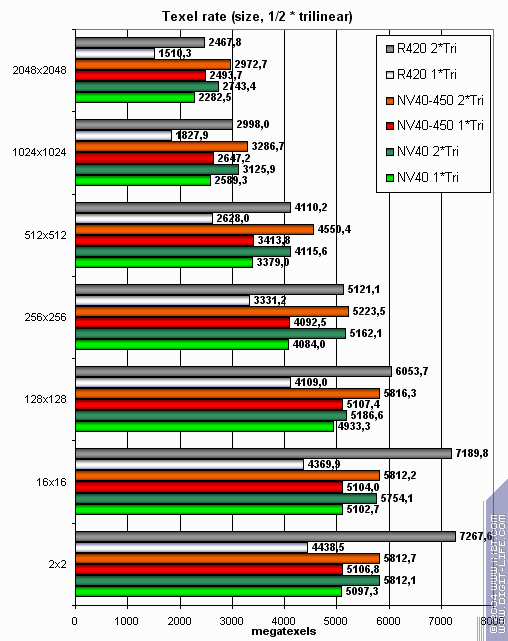

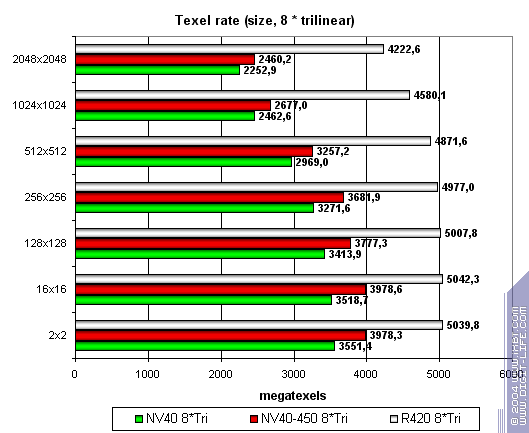

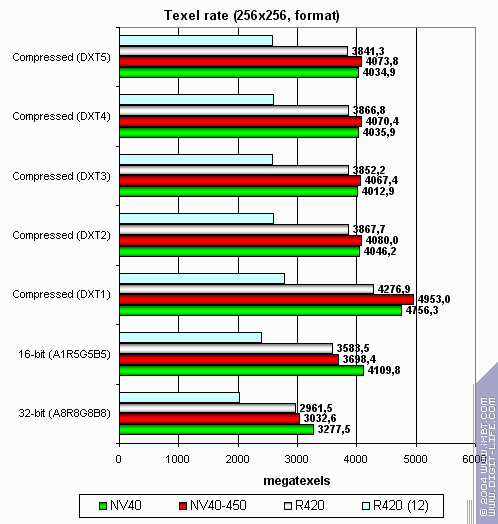

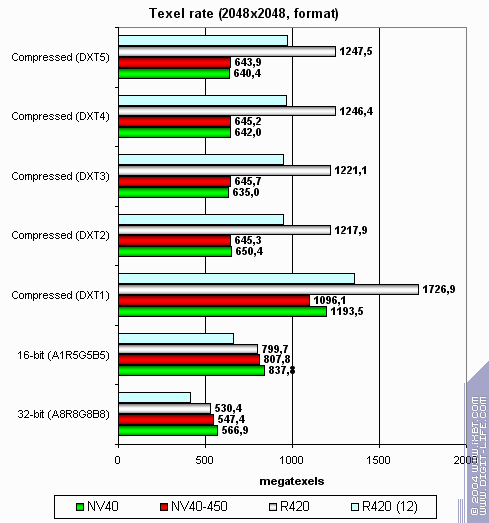

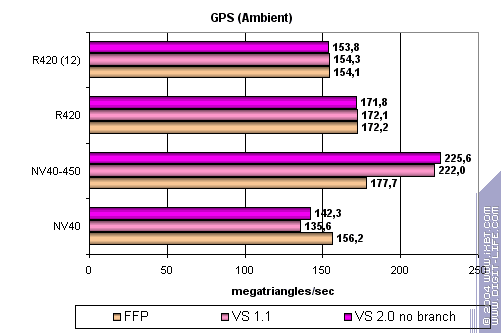

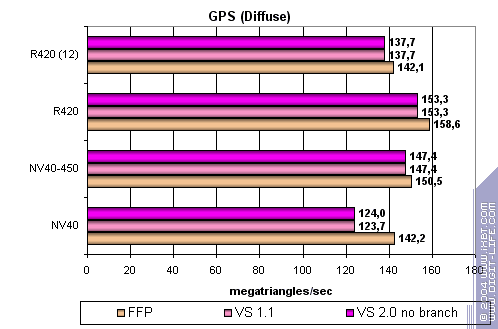

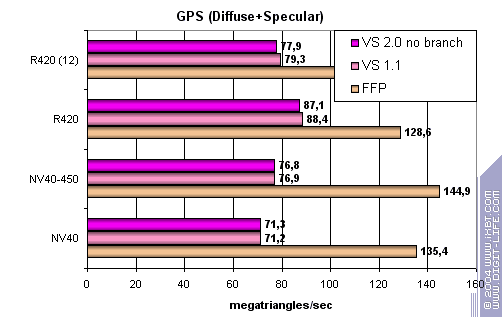

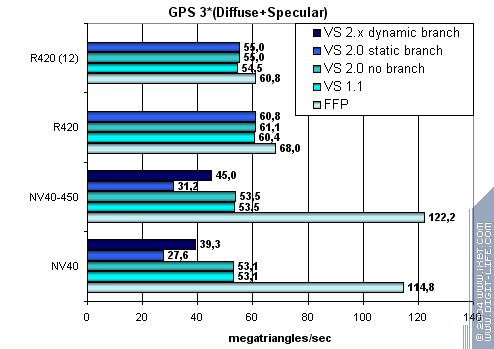

Synthetic tests in D3D RightMark

The version of the D3D RightMark Beta 4 (1050) synthetic benchmark we used, along with its description, is available at http://3d.rightmark.org

I repeat that all RightMark tests were run on a Pentium4-based computer. All tests include the results from the earlier reviews, NV40 at 400MHz and R420, so we'll only comment on the differences and on the behaviour of NV40 with a 450MHz core.

Pixel Filling test

Texelrate, FFP mode, for various numbers of textures overlaid on one pixel:

In the case of one and two textures, NV40-450 is expectedly limited by memory bandwidth or, to be precise, by the speed of work with the frame buffer. As the number of textures grows, the advantage of the higher core frequency becomes more evident but makes no great difference, and R420 remains the leader.

Fillrate (pixelrate), FFP mode, for various numbers of textures overlaid on one pixel:

The situation is the same. Now let's examine how pixelrate depends on the shader version:

Nothing new. So, 450 MHz didn't turn the tables in this test. And that's quite natural, as 450 MHz is still far from 525 MHz. Now let's see how the texture modules cope with caching and bilinear filtering of real textures of various sizes:

The differences are insignificant. Memory bandwidth and the filtering algorithm are more important here than a 50-MHz core increase. Now let's see how texelrate depends on the texture format:

A larger size:

Again, nothing new or surprising. Thus, we can state that there are no significant differences.

Geometry Processing Speed test

The simplest shader, peak triangle bandwidth:

The gain is visible, and NV40-450 becomes the leader. Interestingly, the jump in vertex shaders exceeds the frequency difference (due to new drivers), and it is evident that short vertex shaders are optimised better. Let's see if this jump persists in more complex tasks. A more complex shader, one simple point light source:

No more surprises. The optimisation only concerns peak bandwidth and won't manifest itself so clearly in a more or less standard task. Anyway, the vertex shader result does not depend much on memory bandwidth, which is why it has increased in proportion to the core frequency. Now let's make the task still more complex:

NV40 FFP is leading here, although ATI has a frequency advantage. But the general picture is still favourable for ATI: a higher core frequency and faster vertex shaders. Now we've come to the most complex task: three light sources in variants without jumps and with static and dynamic execution controls:

FFP is strong enough, while NVIDIA chips are badly affected by static jumps. The paradox is that dynamic jumps are better than static ones for NVIDIA chips. The general picture is again favourable for R420 in every aspect that concerns FFP...

The conclusion is that there are no surprises once again. Vertex shaders scale in proportion to the core frequency: the absence of a strong dependence on memory bandwidth comes at a price.
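The reasoning used in these tests, setting a benchmark gain against the 400 to 450 MHz clock increase, can be sketched as follows. This is only an illustration: the function names, the 10-percent tolerance and the scores in the usage lines are hypothetical placeholders, not figures from this review.

```python
# Compare a measured speedup with the core clock increase.
# All names and the tolerance are illustrative assumptions.

def clock_scaling(base_score, oc_score, base_mhz=400.0, oc_mhz=450.0):
    """Return (speedup, clock_ratio, efficiency).

    efficiency is the relative gain in score divided by the relative gain
    in core clock: about 1.0 means the result scales with the core clock.
    """
    speedup = oc_score / base_score
    clock_ratio = oc_mhz / base_mhz
    efficiency = (speedup - 1.0) / (clock_ratio - 1.0)
    return speedup, clock_ratio, efficiency


def describe(efficiency, tolerance=0.1):
    """Give a rough reading of a scaling efficiency value."""
    if efficiency > 1.0 + tolerance:
        return "gain exceeds the clock increase (something else changed, e.g. drivers)"
    if efficiency >= 1.0 - tolerance:
        return "scales with core clock"
    return "limited elsewhere (e.g. memory bandwidth)"


# Placeholder scores in arbitrary units, NOT measurements from the review.
for base, overclocked in ((100.0, 112.5), (100.0, 103.0), (100.0, 125.0)):
    speedup, ratio, eff = clock_scaling(base, overclocked)
    print(f"speedup {speedup:.3f} vs clock ratio {ratio:.3f}: {describe(eff)}")
```

The 450/400 ratio is 1.125, so under this reading a test that gains about 12.5 percent is core-bound, one that gains much less is held back elsewhere, and one that gains more points to another change between the runs.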

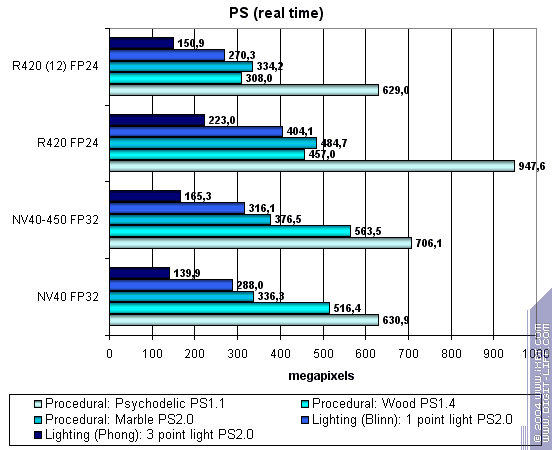

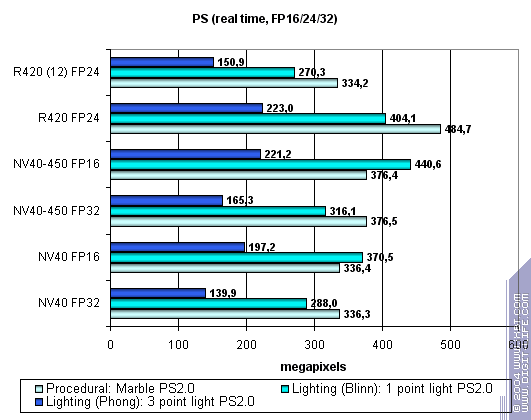

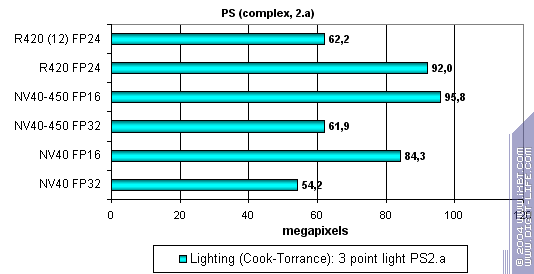

Pixel Shaders test

The first group of shaders comprises quite simple versions for real-time execution: 1.1, 1.4, and 2.0:

As we can see, NV40 works faster at 450 MHz. But that's not enough to beat R420, which still remains the leader in game-complexity pixel shaders. Let's see if the use of 16-bit precision floating-point numbers can save NV40:

16-bit precision brings an advantage to NV40, big in some shaders, small in others. Sometimes NV40 even catches up with R420 (don't forget about low-precision artefacts; see the game section of the review), but it still can't take the lead. And now let's take a look at a really complex "cinema" shader 2.a. Due to a small number of dependent texture reads, it met the restricting requirements of the R420 pixel pipelines:

Proportionally faster. Now NV40-450 overtakes R420, but only if 16-bit precision is used. Thus, the overall results for pixel shaders are as follows: sometimes 450 MHz is enough to match R420's performance, but it doesn't give what could be called clear-cut leadership.
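To give a feel for why 16-bit precision can produce visible artefacts, here is a small sketch that rounds a few values to half precision and to single precision and compares the errors. It assumes the 16-bit format is an IEEE 754-style half float (1 sign, 5 exponent and 10 mantissa bits), emulated on the CPU with Python's `struct` module; it does not run any shader and says nothing about how either GPU behaves internally.

```python
import struct


def to_half(x):
    """Round a Python float to the nearest 16-bit half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_single(x):
    """Round a Python float to the nearest 32-bit single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


# Illustrative inputs: a small fraction, a value just above 1.0, and a
# large coordinate-like value. They are not taken from any test in the review.
for value in (0.1, 1.001, 2049.5):
    half_error = abs(to_half(value) - value)
    single_error = abs(to_single(value) - value)
    print(f"{value}: fp16 error {half_error:.3g}, fp32 error {single_error:.3g}")
```

With only 10 mantissa bits, half precision cannot even tell neighbouring integers apart above 2048, which is the kind of rounding that may show up as banding or blockiness when large values pass through a shader.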

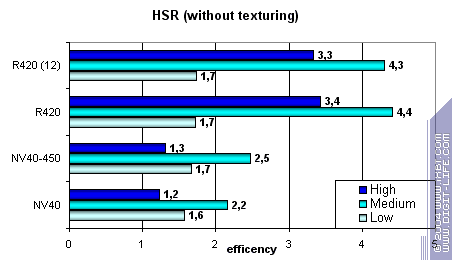

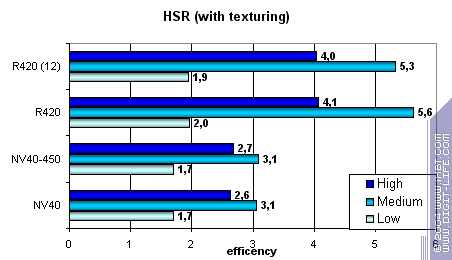

HSR test

Peak efficiency (with/without textures) depending on the geometrical complexity:

The picture is virtually the same, and the small changes in 450 MHz NV40 effectiveness are caused by a different ratio of core/memory frequencies.

Point Sprites test

Interestingly, the new NVIDIA drivers show none of the performance drops that we saw at small sprite sizes.

CONCLUSIONS

In other words, even 450 MHz can't ensure NV40's victory in synthetic tests.

27.05.2004


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.