
Characters:

Popular synthetic actors from completed and upcoming full-length 3D movies also take part in this movie. We will return to the scenario a bit later; for now, here is some general information about what happened at the presentation, as an overture to today's review... Let's sum up what we have added to the original article:

Around the scenario: "Run, Industry, run"!

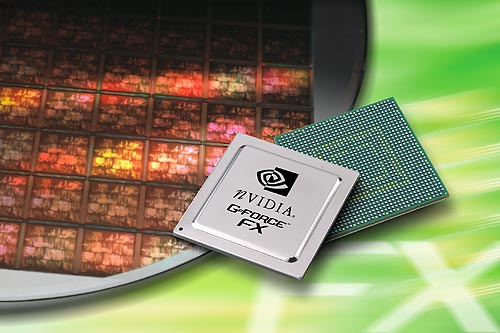

NVIDIA's GPU codenamed NV30, long awaited and one of the most controversial GPUs, was announced on November 18, 2002, together with a new marketing name, GeForce FX. At first NVIDIA intended to introduce an entirely new trademark to emphasize the chip's importance, but it turned out that the company could not give up its existing brand: according to public opinion polls, the GeForce name is known to far more people than the company name NVIDIA. It is the same story as with Pentium. The parallel with Intel, a recognized locomotive of the IT industry, is apt, because at the moment NVIDIA is the flagship on the ocean of 3D graphics, as 3dfx, the creator of the first really successful hardware 3D solution, was in its time. Symbolically, while working on the NV30 the developers used many ideas from the unfinished 3dfx project codenamed Mojo.

It is no secret that the GeForce FX embodies a so-called flexibly programmable graphics architecture, i.e. a graphics processor. The chip therefore deserves to be called a GPU, but this term was already used for the less flexible accelerators of the previous generation (let's call it the DX8 generation: NV2x, R200, etc.). Let's glance at the transition from a fixed architecture to flexibly programmable ones:

This is an "evolutionary revolution" whose current stage is not yet complete. One or perhaps several more stages are coming in the near future:

Well, we'll see. But the last two steps may take far more than two DirectX versions, owing both to Microsoft and to the cunning intentions of graphics chip makers. On the other hand, although the current stage looks expected within this evolutionary scheme, users and programmers may well perceive it as revolutionary, because it prompts a switch to actually using the flexible programmability of accelerators.

Long, flexible pixel shaders, even without flow control or with only simplified predicate-level control, can bring a previously unreachable level of visual quality to the PC. This is a much greater leap than the first attempts of the previous generation, which were constrained by awkward shader assembly code and a limited number of pixel instructions. Quality, rather than quantity, can win this time, and the era of DX9 accelerators may become as significant as the arrival of the 3dfx Voodoo. If you remember, the Voodoo was not conceptually the first. But it did trigger a quantitative leap in accelerators, which then turned into a qualitative leap in the development of games for them. I hope that this time the industry will get a powerful boost from the ability to write complex vertex and pixel shaders in higher-level languages.

Let's leave aside the question of how revolutionary DX9 solutions are, a subject so popular among sales managers. We will only say that while the revolutionary character of the solution as a whole is yet to be proved, the revolutionary character of individual GeForce FX technologies is beyond doubt. Accelerators are gradually approaching ordinary general-purpose processors in several respects:

Accelerators are striding toward CPUs, and they have already overtaken average general-purpose processors in transistor count and peak computational power. Convergence is only a matter of time and flexibility. CPUs are moving toward accelerators as well: their performance is growing, especially in vector operations, and soon they will be able to handle yesterday's graphics acceleration tasks. Moreover, the degree of brute-force parallelism in CPUs is growing too; just remember Hyper-Threading (HT) or multicore CPUs. A direct confrontation is not close, but it will definitely take place, and primarily between the trendsetters of each sphere rather than between classes of devices; the result will be called a CPU, or maybe a CGPU :). For now the fight is for users' wallets, and here graphics does not lose to CPUs.

Before going further, I recommend that you read (if you haven't yet) the following key theoretical materials:

Now we finish the digression and turn to our main hero, the GeForce FX.

GeForce FX: leading role in focus

First, the key specifications of the new GPU:

And now look at the block diagram of the GeForce FX:

Functions of the blocks:

Let's go through the main units of the new chip in approximately the same order as the usual data flow. Along the way we will comment on their functions and architecture (both the baseline required by DirectX 9.0 and the additional capabilities of the GeForce FX) and make small lyrical digressions.

Aleksander Medvedev (unclesam@ixbt.com)


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.