
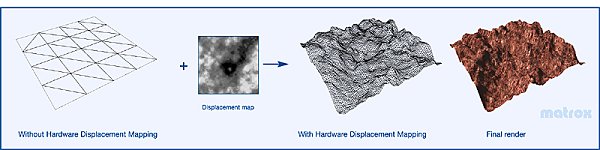

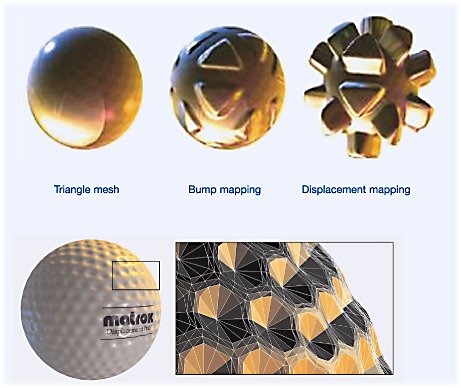

On May 14, 2002, Matrox Graphics Inc., a company 3D enthusiasts had all but forgotten, is to announce the Parhelia-512, a chip that belongs to a new generation of graphics accelerator architectures. I'd call it "Generation X9". The new Matrox chip is the second specimen of this generation: 3Dlabs came first (with some reservations), having already announced its flexibly programmable P10 VPU. Before we take a closer look at Matrox's new brainchild, the main hero of this article, let's discuss another landmark.

The Ninth Mister X (DirectX 9)

Let us list the key features (from the accelerator's point of view) of the upcoming version of the popular API, in the style of a confidential briefing.

Improved data precision

There are new texture and frame buffer formats. Each of the four components (RGBA) can now be stored as a 32-bit or 16-bit floating point value in the standard IEEE F32 and F16 formats. Each pixel then occupies 128 or 64 bits, which considerably increases the demands on accelerator memory capacity. There is still no need to use these formats everywhere, though. Their main purpose is to implement various effects and lighting models (for example, to store tables for pixel shaders). The floating point contents of a frame buffer can't be output directly to the monitor via the RAMDAC or a digital interface; these buffers are for internal use only. For on-screen output there is a new format with improved integer precision: 10:10:10:2 (RGBA), where each color component has 10-bit precision. This exceeds the capabilities of current display devices and considerably extends the dynamic range and the resulting image quality.

Displacement Mapping

The displacement mapping technology, licensed from Matrox Graphics Inc. (and included in the Parhelia-512, of course), considerably increases the realism and detail of bumpy surfaces. Unlike traditional relief techniques, which are applied to triangle surfaces at rendering time and do not affect pixel visibility (traditional bump mapping changes only a pixel's brightness, not its actual position), displacement mapping creates geometrically correct bumps, whose outlines and intersections do not look perfectly straight. In effect, the technology moves triangle vertices along the surface normal by an amount proportional to the value read from a special texture (a displacement map):

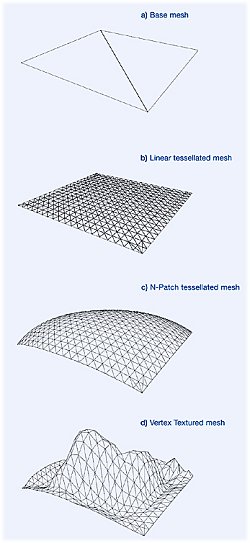

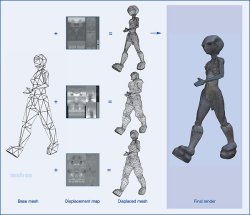
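The vertex displacement described above can be sketched in a few lines. This is only an illustration on the CPU, not how the hardware or DX9 implements it: the function names (`displace`, `sample_nearest`), the nearest-texel lookup, and the `scale` parameter are assumptions made for the example.

```python
from math import sqrt


def normalize(v):
    """Return the vector v scaled to unit length."""
    length = sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def sample_nearest(height_map, u, v):
    """Nearest-texel lookup in a 2D list of heights, with u and v in [0, 1]."""
    rows, cols = len(height_map), len(height_map[0])
    row = min(int(v * rows), rows - 1)
    col = min(int(u * cols), cols - 1)
    return height_map[row][col]


def displace(vertices, normals, uvs, height_map, scale=1.0):
    """Move each vertex along its normal by the height read from the map.

    `scale` is a hypothetical global multiplier for the displacement amount.
    """
    displaced = []
    for position, normal, (u, v) in zip(vertices, normals, uvs):
        n = normalize(normal)
        height = sample_nearest(height_map, u, v) * scale
        displaced.append(tuple(p + height * c for p, c in zip(position, n)))
    return displaced


# A flat quad in the XY plane whose normals point along +Z.
quad = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
normals = [(0.0, 0.0, 2.0)] * 4          # deliberately not unit length
uvs = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
height_map = [[0.0, 0.5],
              [1.0, 0.25]]

displaced = displace(quad, normals, uvs, height_map, scale=2.0)
for vertex in displaced:
    print(vertex)
```

Only the Z coordinate of the quad changes here, because every normal points along +Z. With only four vertices the relief is very coarse, which is why the geometry has to be tessellated first.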

Displacement mapping is responsible for the "coarse" relief, pushing triangle vertices along the surface normal. If needed, a traditional bump map can be used along with the displacement map to add finer per-pixel detail. Of course, there must be enough triangles to show all the nuances of the coarse relief defined by the displacement map. To create these triangles automatically (without burdening programmers, the CPU, or the AGP bus), the already familiar N-Patches are used:

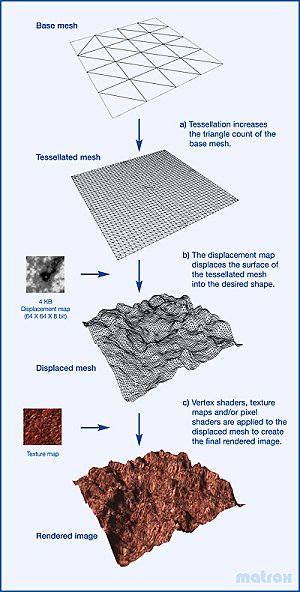

That is, a technology that improves model detail through additional triangle tessellation. Displacement mapping can't be used without hardware N-Patches support: from DX9's point of view it is just an important addition to the N-Patches algorithm:

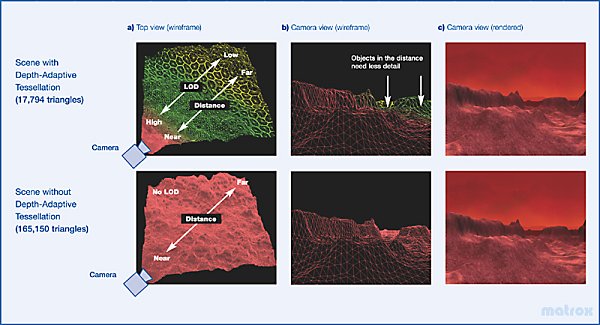

Interestingly, DX9 features considerably improved patches. For example, the level of detail (the tessellation granularity) can now be chosen automatically depending on the distance from a triangle to the observer. The result is a more optimal, close to uniform scene granularity (from the observer's viewpoint), as all triangles now have about the same visible size:

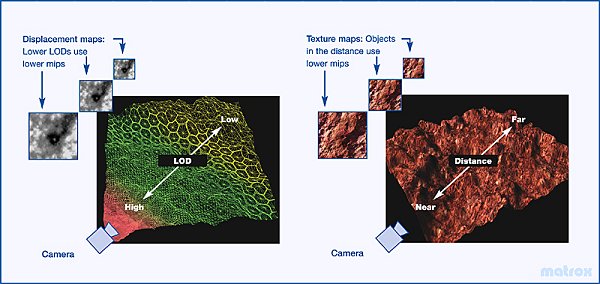
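The idea of distance-dependent granularity can be illustrated with a small sketch. The formula below is an assumption chosen for the example (subdivisions per edge inversely proportional to distance, clamped to a range). It is not the rule DX9 or the Parhelia-512 actually uses, and the names `tessellation_level`, `reference_distance`, `min_level` and `max_level` are hypothetical.

```python
def tessellation_level(distance, reference_distance=1.0, max_level=8, min_level=1):
    """Pick the number of segments per patch edge for a given viewer distance.

    Projected size shrinks roughly as 1/distance, so making the segment count
    proportional to 1/distance keeps the on-screen triangle size about constant.
    """
    if distance <= 0:
        return max_level
    level = round(max_level * reference_distance / distance)
    return max(min_level, min(max_level, level))


def triangles_per_patch(level):
    """Uniformly splitting each triangle edge into `level` segments gives level**2 triangles."""
    return level * level


for distance in (1.0, 2.0, 4.0, 100.0):
    level = tessellation_level(distance)
    print(distance, level, triangles_per_patch(level))
```

Nearby patches get the full subdivision, while distant ones fall back to the original triangle, so the triangle budget is spent where it is visible.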

Besides, you can use mip-mapping for the displacement mapping as well as for usual textures:

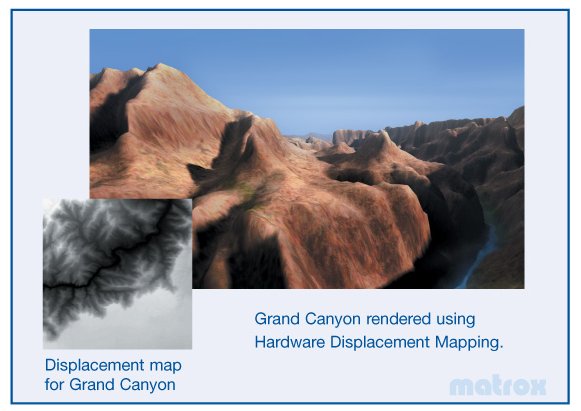

As a result we get something nice "without serious troubles":

Of course, the displacement mapping can be used for 3D models as well as for flat surfaces:

Compared with traditional bump maps (including per-pixel shader bumps), displacement mapping obviously consumes more resources: representing every bump detail with triangles is no joke. But it also produces more realism:

Of course, developers will need to combine these techniques sensibly to get both speed and realism in actual games.

Vertex Shader 2.0

A brief summary of the new vertex program features:

Pixel Shader 2.0

A brief summary of the new pixel program features:

Besides, there's the very important DXVC format for 3D texture compression, licensed from NVIDIA.

The bottom line. Much is staked on accelerator programmability (as is the case with OpenGL 2.0). A higher-level language and considerably more flexible programmable units will do their part. The dream of many graphics chip developers, to shift most of the difficulties (the algorithms) from hardware onto programmers, is coming true faster than anyone could have imagined. Now all data inside the accelerator pipelines has high precision thanks to the floating point format. A stable MS DX9 is expected at the end of summer, and its official release looks set to be a Christmas surprise.

And now let me introduce the new chip from Matrox Graphics in all its beauty:

Parhelia-512 GPU

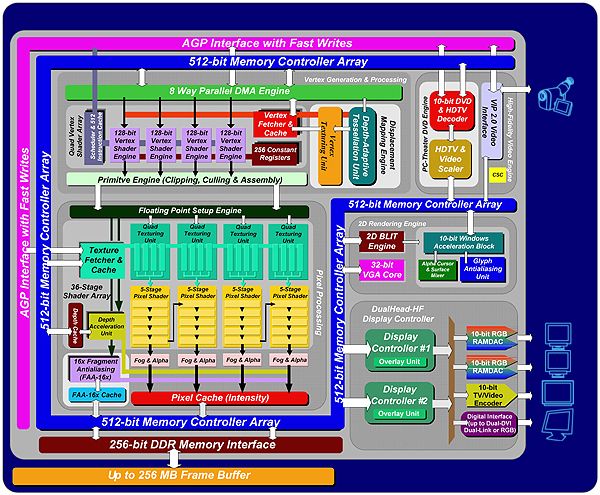

Flowchart:

Specifications:

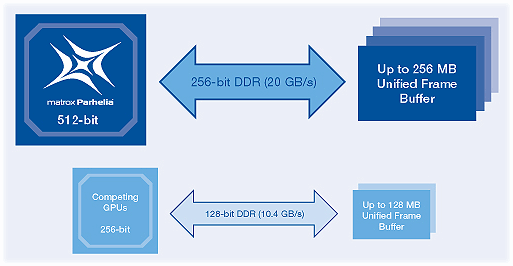

The large number of transistors on such a manufacturing process means both great capabilities and a high production cost for the Parhelia-512. It's strange that this complex GPU will be manufactured on a 0.15-micron process; there's still no data about heat dissipation. The full 256-bit DDR memory bus is an even more expensive feature, one shared by all latest-generation GPUs (P10, R300, NV30).
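To see why bus width matters so much, here is a rough peak-bandwidth calculation. The formula (bytes per transfer, times clock rate, times two transfers per clock for DDR) is standard, but the clock value is a hypothetical placeholder: the text above does not state the memory clock.

```python
def peak_bandwidth_bytes_per_s(bus_width_bits, clock_hz, transfers_per_clock=2):
    """Theoretical peak memory bandwidth; DDR memory makes 2 transfers per clock."""
    return bus_width_bits // 8 * clock_hz * transfers_per_clock


# Hypothetical memory clock, used only for illustration.
CLOCK_HZ = 300_000_000

wide = peak_bandwidth_bytes_per_s(256, CLOCK_HZ)
narrow = peak_bandwidth_bytes_per_s(128, CLOCK_HZ)

print(f"256-bit bus: {wide / 1e9:.1f} GB/s")
print(f"128-bit bus: {narrow / 1e9:.1f} GB/s")
print(f"ratio: {wide / narrow:.1f}")
```

At any given clock the 256-bit bus delivers exactly twice the peak bandwidth of a 128-bit one. Real-world throughput is lower and depends on access patterns.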

Theoretically, such a technological jump improves GPU performance by a factor of two, and does so purely at the expense of a higher cost, without any new architectural techniques. It seems that such a solution is now economically preferable to architectural tricks, as the prices of memory and circuit boards make a "wide bus" widely usable. Besides, DX9, OpenGL 2.0 and later API versions will store more and more data in GPU memory in floating point formats, which are hard to compress. Still more of that data will represent geometry and other non-graphical information. Having adopted such a wide memory bus, one needn't invest in developing and debugging complex tile rendering and compression technologies, which save local memory bandwidth but considerably increase the cost and development time of the chip and its drivers.

Speaking of the Parhelia-512's development cost, it's time to think over the reasons that made Matrox release this GPU. No one has expected anything like this from the company for a year already. Surprise! According to one Matrox engineer: "We've made this chip to show everybody that we are still capable of developing the most up-to-date solution". Another employee said something less serious: "Just for fun". Obviously this expensive solution won't affect the market in the near future, but it will surely improve Matrox's reputation, even if the company sells fewer than 10,000 Parhelia-512 cards. The announcement of this unique chip alone can draw lasting attention.

So, the local video memory bandwidth is twice that of previous-generation GPUs. But speed is not the main feature of Matrox products. Surprisingly, the Parhelia-512 doesn't support any memory bandwidth saving technologies. This is strange, as such solutions are present in all the latest products from ATI and NVIDIA. But it seems that Matrox decided to speed up chip development and reduce its cost, the chip itself being more of a symbol than an attempt to take over the market. So, unlike ATI and NVIDIA, Matrox hasn't equipped its latest chip with Z-buffer compression or Z-occlusion culling. It features fast Z-clear only.

The chip is very complex anyway: its transistor count calls for the finer 0.13-micron process, but that process doesn't seem to be available to Matrox at an acceptable cost. One might forecast the release of an updated 0.13-micron version of the chip, fully compatible with DX9, some time later (in early 2003 or after). Tests of engineering samples with very raw drivers show only a 20-30% advantage for the Parhelia-512 over the NVIDIA GeForce4 Ti4600. Even with successful driver tuning, a twofold advantage is unlikely; 1.5x is the limit. Obviously, ordinary consumers should buy cards based on the new Matrox GPU for the quality and unique features, not for the speed. On the other hand, Matrox can't hope to succeed in the professional market without certified OpenGL drivers (they might appear along with the planned professional line of Parhelia-512 cards). And the card is very expensive for the "just nice 2D" niche. So initially this is a product for enthusiasts, semi-professionals, brand fans and us, video card specialists :-).

Interestingly, the GPU is only "partially" DX9-compatible. The Parhelia-512 supports Vertex Shaders 2.0, but it doesn't meet the requirements of the second generation of Pixel Shaders! Version 1.3 (up to 4 textures per pass) is the chip's maximum. Let's look at the detailed flowchart of the chip's pixel pipelines:

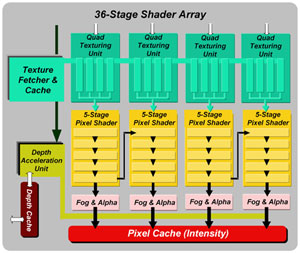

Pixel Shaders 2.0 are impossible because of the clearly insufficient number of pixel pipeline stages and the absence of texture loopback (you can't use more than 4 texture values per pass). Interestingly, Matrox's papers state 36 stages, but that is the total for all 4 pixel pipelines. Two configurations are possible:

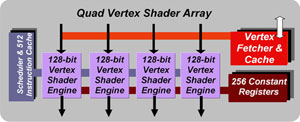

As a result, pixel shaders with 5 instructions and more will run at half speed. In fairness I'd note that typical Pixel Shaders 1.3 (and lower) often consist of 5 or fewer instructions. In fact, Matrox's marketing people want to confuse potential buyers with the impressive figure of 36 stages, which are useless for a GPU limited to 4 textures per pass. The actual maximum is 4 texture stages and 10 pixel stages. Applications will use only 8 of the 10, which corresponds exactly to the Shaders 1.3 limits. The same Matrox papers mention 64 (!) texture samples per cycle for the Parhelia-512, but on closer inspection this is obviously the total for all 16 texture units again. Unpleasant, isn't it? They try to confuse us with large numbers that sidestep the generally accepted conventions :-(.

So, the Parhelia-512 is not a complete DX9 GPU. There's no sense in hoping that developers will support its unique features (those that fall between DX8 and DX9): the Matrox GPU simply won't win enough of the market. On the other hand, the Parhelia-512 fully supports the displacement mapping and adaptive N-Patches tessellation described earlier, both strictly according to DX9. The chip works with the 10-bit frame buffer introduced with DX9 and has some other DX9-specific features. It also includes a Vertex Shaders 2.0 unit capable of handling 4 vertices simultaneously:

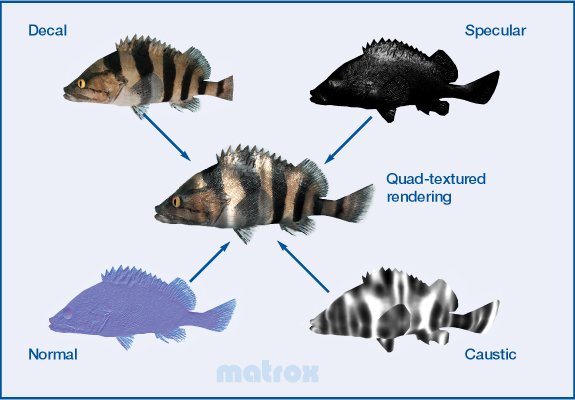
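As an aside on the 10-bit frame buffer and the floating point formats mentioned in the DX9 overview, the sketch below packs a 10:10:10:2 pixel into a single 32-bit word and compares buffer sizes for the different formats. The bit layout (alpha in the top two bits, then red, green, blue) is an assumption made for the example, and the 1024x768 resolution is arbitrary.

```python
def pack_10_10_10_2(r, g, b, a):
    """Pack 10-bit R, G, B and 2-bit A into one 32-bit word (assumed layout: A|R|G|B)."""
    for value, bits in ((r, 10), (g, 10), (b, 10), (a, 2)):
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{value} does not fit in {bits} bits")
    return (a << 30) | (r << 20) | (g << 10) | b


def unpack_10_10_10_2(word):
    """Inverse of pack_10_10_10_2; returns (r, g, b, a)."""
    return ((word >> 20) & 0x3FF, (word >> 10) & 0x3FF, word & 0x3FF, word >> 30)


# Bits per pixel for the formats discussed in the text.
BITS_PER_PIXEL = {
    "8:8:8:8 integer": 32,
    "10:10:10:2 integer": 32,
    "4 x F16": 64,
    "4 x F32": 128,
}


def buffer_bytes(width, height, bits_per_pixel):
    """Size of a single buffer of the given resolution and pixel format."""
    return width * height * bits_per_pixel // 8


word = pack_10_10_10_2(1023, 0, 512, 3)
print(hex(word), unpack_10_10_10_2(word))

for name, bits in BITS_PER_PIXEL.items():
    print(name, buffer_bytes(1024, 768, bits) / 2**20, "MiB")
```

The 10:10:10:2 format costs no more memory than ordinary 32-bit color, while a full F32 buffer is four times larger. That is why the floating point formats are meant for selected internal buffers rather than for everything.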

It's not the first time a chip has been born at the turn of API generations: hardware evolves almost twice as fast as software. Let's hope that one of this chip's purposes is to create and debug a core for future mainstream Matrox products, possibly fully DX9-compatible, with a "floating point" Pixel Shaders 2.0 unit.

Besides the wide bus and improved data precision, new-generation chips are bound to offer high-quality antialiasing (AA) and anisotropic filtering at a low performance cost. The Parhelia-512 is good at this, the marketing trick with the number 64 aside. What we actually have is four bilinear sampling units and a flexibly programmable interpolator per pixel pipeline (or rather a single flexibly configurable texture unit capable of handling 4 bilinear samples per cycle, possibly from different textures). Each of these units can fetch and interpolate 16 discrete samples per cycle (a total of 64 for all four pixel pipelines). Below is an example of actual single-pass four-texture mapping:

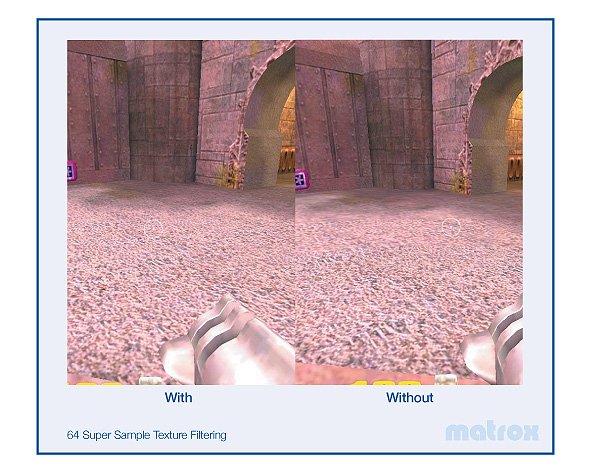

Depending on the filtering mode, we get four bilinear-filtered textures, two trilinear- or anisotropic-filtered (8 samples) textures, or one anisotropic-filtered (16 samples) texture per cycle. So old "dual-texture" applications will get 8-tap anisotropic filtering without a performance loss. The anisotropic filtering technology is close to NVIDIA's, so we won't get it almost for free, as we do with the RIP-mapping-based approach in ATI products. Below is a screenshot of Quake III with anisotropic filtering:

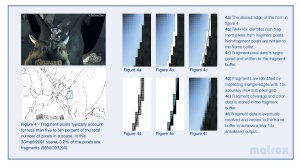
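The per-cycle figures quoted above follow from a fixed budget of 16 samples per pipeline per cycle. The sketch below works through that arithmetic. It is an idealized model based only on the numbers in the text, and names such as `textures_per_cycle` and `cycles_per_pixel` are made up for the example.

```python
SAMPLES_PER_PIPELINE = 16   # discrete samples one pipeline can fetch per cycle
PIPELINES = 4

# Discrete texel samples needed to filter one texture in each mode.
SAMPLES_PER_TEXTURE = {
    "bilinear": 4,
    "trilinear": 8,
    "anisotropic-8": 8,
    "anisotropic-16": 16,
}


def textures_per_cycle(mode):
    """How many textures one pipeline can filter in a single cycle."""
    return SAMPLES_PER_PIPELINE // SAMPLES_PER_TEXTURE[mode]


def cycles_per_pixel(mode, textures):
    """Cycles one pipeline needs to filter `textures` layers for a pixel."""
    per_cycle = textures_per_cycle(mode)
    return -(-textures // per_cycle)   # ceiling division


print("total samples per cycle:", SAMPLES_PER_PIPELINE * PIPELINES)
for mode in SAMPLES_PER_TEXTURE:
    print(mode, textures_per_cycle(mode),
          cycles_per_pixel(mode, 2), cycles_per_pixel(mode, 4))
```

In this model a dual-texture application with 8-sample anisotropic filtering still fits into one cycle, while a four-texture application needs two cycles.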

We'll discuss anisotropic filtering quality later. For now, let's note that the performance drop will be softened by the doubled amount of texture data sampled per cycle, though only in older applications that don't use 4 textures per cycle.

The antialiasing technology of the Parhelia-512 is currently unique and deserves praise, being close to the ideal. Essentially it is supersampling with up to 16 (4x4) samples per screen pixel, but performed ONLY (!) for polygon edge pixels (3-5% of a typical scene):

Let's compare with popular FSAA methods:

The main advantage is obvious: unlike multisampling, the surplus data isn't stored in memory or sent over the bus! The total frame buffer size increases only slightly, by no more than a factor of two even at the maximum 16x setting. A special fast rendering pass is used to find the edge pixels: the GPU marks the edge pixels of polygons in a separate buffer without calculating texture values or rendering intermediate texels. Besides, there's no loss of texture sharpness, which is typical of FSAA and some hybrid MSAA techniques.
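A quick estimate shows why supersampling only the edge pixels is so economical. This is a simplified model that counts color samples only and ignores the edge-detection pass and the way the hardware actually stores fragments. The function name and defaults are assumptions for the example.

```python
def average_samples_per_pixel(edge_fraction, samples_per_edge_pixel=16):
    """Average number of color samples per screen pixel.

    Interior pixels get one sample; edge pixels get the full supersampling grid.
    """
    return (1.0 - edge_fraction) + edge_fraction * samples_per_edge_pixel


FULL_SUPERSAMPLING = 16  # every pixel rendered with a 4x4 sample grid

for edge_fraction in (0.03, 0.05):
    average = average_samples_per_pixel(edge_fraction)
    print(f"{edge_fraction:.0%} edge pixels: {average:.2f} samples per pixel, "
          f"{FULL_SUPERSAMPLING / average:.1f}x fewer than full 16x supersampling")
```

With 3-5% of pixels on polygon edges, the average stays below two samples per pixel, which agrees with the claim that the frame buffer grows by no more than a factor of two.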

Let's compare the screenshots:

On the left: 3D Mark 2001 - Without FAA-16x; On the right: 3D Mark 2001 - With FAA-16x

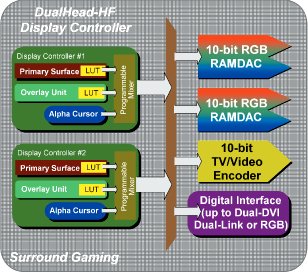

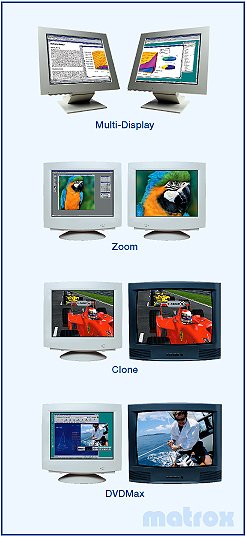

On the left: Seaplane - Without FAA-16x; On the right: Seaplane - With FAA-16x

However, this very intelligent AA technique may occasionally cause artifacts. Besides, it can't correctly antialias edges overlaid by semi-transparent polygons (clouds, fog, glass, fire). In such cases a user might want to fall back on the familiar classical 4x (2x2) MSAA, which the chip supports as well.

The richness of its interfaces is an undoubted advantage of the chip. There are two full-featured, traditionally high-quality 400 MHz RAMDACs, two TMDS transmitters, an integrated TV-Out and two CRTCs, which make simultaneous dual-screen output possible:

There's also the complete set of dual-display features supported:

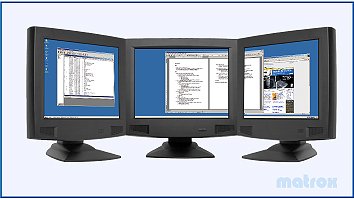

There is also a new feature: splitting the image across multiple (!) display devices simultaneously, for example across three monitors using a third, external RAMDAC:

We expect many games to be shown off at E3 this year in the wide (180-degree view) mode on three monitors at once and, as you might have guessed, on Parhelia-512-based cards. The software bundle includes a special utility that lets many current games run in the wide dual- or triple-screen mode.

Feel the difference as they say (the usual mode to the left, Surround Gaming to the right):

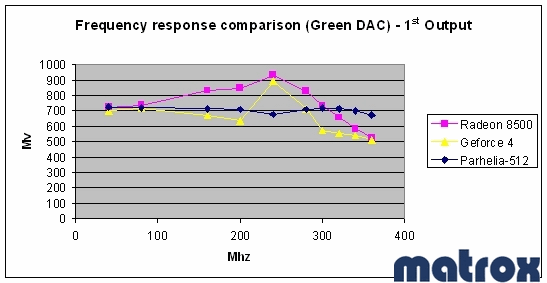

So, there's only one simple problem left to solve: where would an ordinary player get three monitors? And where would he put them? :-)

And about RAMDAC quality, by the way. Matrox has always delivered high-quality 2D; the company even publishes the results (!) of comparing the frequency response of its RAMDACs with those of its main rivals. The primary:

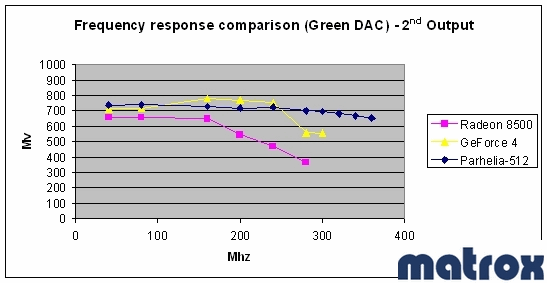

And the secondary RAMDAC:

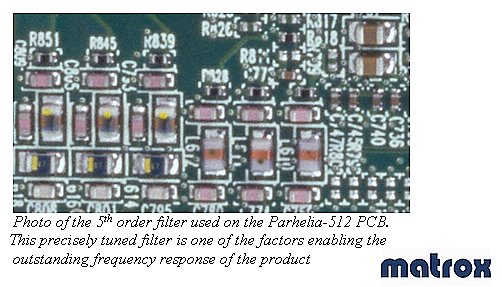

The board features a high-quality 5th-order output filter:

Besides, the Parhelia-512 features hardware support for DVD and HDTV decoding. You can now watch DVD at 10-bit quality. The only question is how useful that is for a signal that was originally stored and compressed at 8 bits.

Bright! Warm?

Finally, let's present a rather useless table:

It's useless because there's no sense in drawing premature conclusions about the Parhelia-512's technological leadership: let's wait for the announcements and samples of the rivals' new-generation gaming chips, the DX9-class NVIDIA NV30 and ATI R300. I wonder whether the story of the GF2 and Radeon pixel shaders, "cancelled" by Microsoft as a result of the long evolution of DX8's key features (which continued after the chips had been designed), will repeat itself. Will these main rivals be fully compatible with DX9? Their creators say yes, but we'll only be able to check that in the fall.

We also shouldn't forget the professional P10 chip announced by 3Dlabs. It is the physical incarnation of the not yet adopted OpenGL 2.0 standard (as 3Dlabs sees it), which is evolving similarly to DX9: more slowly, but more sensibly and consistently. An architecture designed for OpenGL 2.0 will potentially (possibly) meet DX9 requirements as well. Not vice versa.

So, 64, 128, and 256 MB Parhelia-512 cards are to be announced soon (available in July). The top model will cost about $500, the 128 MB one about $400. All of them will feature the full 256-bit memory bus; the difference is in memory clock rate and capacity only. Parhelia-512 cards will be released only by Matrox itself. The partnership with Gigabyte was a big mistake, according to company representatives. We should also expect a professional line of Parhelia-512 cards. They'll go on sale at the end of summer, at about the same time as NV30/R300 cards. The Matrox product will obviously be competing with them, not with the previous-generation GeForce4 Ti4600, let alone the RADEON 8500. This changes the picture somewhat, doesn't it?

The Parhelia will shine on the 14th of May. Will it be warm?


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.