
General Information

It would be banal to start a new review by saying that spring has come and new accelerators are making their way in life. Clearly, every new product is accompanied by dozens of beautiful words, lofty talk of 3D functionality and screenshots from perfect-looking games... from the future.

Actually, I'm very sorry for those who spent their money on the GeForce FX 5800 Ultra, which is often priced at over $500. NVIDIA has let them down again. Or has it? The NV35 was much talked about, but the company never promised it officially, and it never said that the NV30 wasn't worth buying because improved versions were expected soon. So, just as the GeForce FX 5800/Ultra based cards, promised before the New Year, appeared on the market, the company made one more announcement. In a speech a week ago NVIDIA's President promised the release of the NV35 and called the NV30 a mistake. But what about those who have already bought this poor product? No one will replace it with an NV35 for free. That is why I feel very sorry for those who were ensnared by the elf girl.

The NV35 is again a whole line. Rumor has it that the line will have three cards, but we have trustworthy information about only two:

The specs of the GF FX 5900 may change, but the GeForce FX 5900 Ultra, earlier codenamed NV35, is NVIDIA's fastest accelerator. The number 5900 looks similar to 5800, which suggests that not many changes were made. But judging by the frequencies it is not simply an overclocked part; moreover, the clock speeds are even lower than those of the NV30. Is a 256bit bus the only addition? Well, we'll see.

One thing is clear: the NV35 is not simply a new line dethroning the previous products, it replaces the NV30! This is the key difference from the previous tandems: NV10-NV15 (GeForce256-GeForce2) and NV20-NV25 (GeForce3-GeForce4 Ti). Back then NVIDIA also aimed to replace the GeForce256 and GeForce3, but the process was gradual, and delivery and sales were normal. Now, for the first time, a new product is announced only a month later. Certainly, the manufacturers were well aware of it, and the cards were in very short supply. Hence the high prices of the GF FX 5800/Ultra.

Here is the list of the reviews of GeForce FX based cards where we discussed all these subjects:

Specification

Now let's have a closer look at the list items.

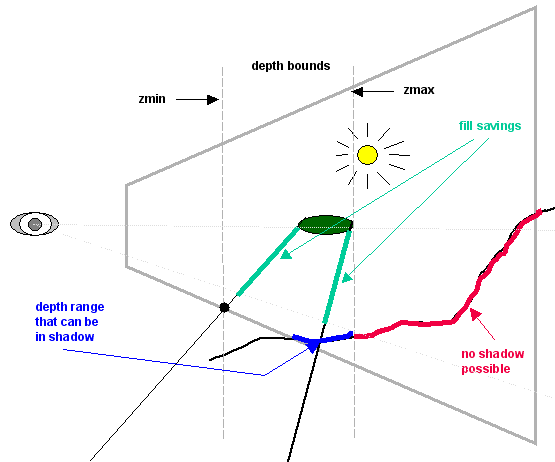

If the pixel depth value does not fall into the range specified when shadows are rendered, the stencil buffer for this pixel is not updated. This can save a lot of fillrate. It seems that the unit controlling early Z-cull has been improved, and now it compares the value stored in the Z buffer not only with the current value interpolated from the triangle coordinates but also with two additional values. As a result, the gain will be at least twofold compared to other chips with the same number of pipelines (8 Z-buffer values against 4 color values), and fourfold considering that early Z-cull can cull up to 16 pixels per clock, even if we assume that the bus load is not lowered. Below you can see how large the area covered by the rendered shadow buffers is.

The technology, however, has downsides as well.
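The depth range check described above can be sketched in a few lines. This is only an illustration of the idea, not NVIDIA's implementation: the function name, the sample depths and the bounds are all hypothetical, and we assume that the value being compared is the depth already stored for the pixel.

```python
def stencil_updates(stored_depths, z_min, z_max):
    """Count pixels whose stencil value would still be updated.

    A pixel whose stored depth lies outside [z_min, z_max] is skipped,
    so no stencil write is spent on it.
    """
    return sum(1 for z in stored_depths if z_min <= z <= z_max)


# Hypothetical stored depths for eight pixels covered by a shadow volume.
stored_depths = [0.10, 0.25, 0.40, 0.55, 0.60, 0.75, 0.90, 0.95]

# Hypothetical depth range in which the shadow can actually fall.
updated = stencil_updates(stored_depths, z_min=0.35, z_max=0.65)
skipped = len(stored_depths) - updated

print(f"stencil writes: {updated}, skipped: {skipped}")
```

With these made-up numbers only three of the eight pixels cost a stencil write; the real saving depends entirely on how tightly the application sets the range.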

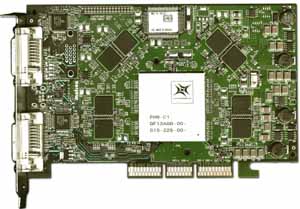

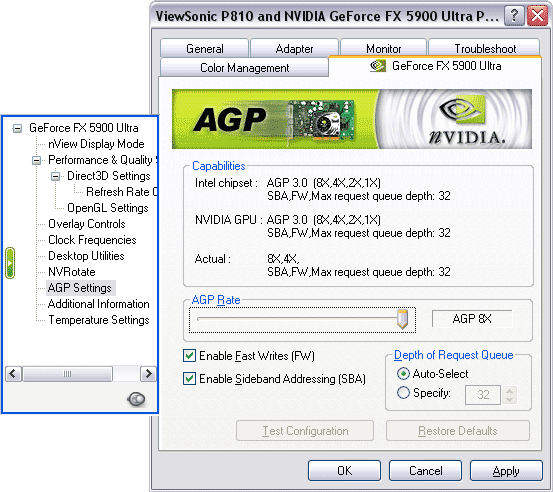

Card

The card has an AGP 2x/4x/8x interface and 256 MB of DDR SDRAM in 16(!) chips on both sides of the PCB.

The 256 MB takes twice as many chips. Their data widths sum to 512 bits, i.e. if the chip and PCB had a hardware 512bit memory bus, the card could have a correspondingly high throughput. But the bus is 256 bits wide, which is why interleaving is used. The memory uses a two-bank scheme, which raises the exchange rate a little. That is why we can say that the 128 MB GeForce FX 5900 card will be a little less efficient than the 256 MB models, just like the 64 MB and 128 MB RADEON 8500.
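The chip arithmetic behind the two-bank scheme is easy to check. A minimal sketch using only the figures given above (16 chips, 512 bits in total, a 256bit bus); the variable names are ours, and equal-width chips are an assumption:

```python
CHIPS = 16                  # memory chips on the 256 MB card
TOTAL_CHIP_WIDTH_BITS = 512 # combined data width of all chips
BUS_WIDTH_BITS = 256        # actual memory bus width of the NV35

# Assumption: all chips have the same data width.
bits_per_chip = TOTAL_CHIP_WIDTH_BITS // CHIPS

# Chips that can sit on the bus at the same time form one bank.
chips_per_bank = BUS_WIDTH_BITS // bits_per_chip
banks = CHIPS // chips_per_bank

print(f"{bits_per_chip} bits per chip, {chips_per_bank} chips per bank, {banks} banks")
```

Under the same assumption, a 128 MB card with half as many chips would fill the 256bit bus with a single bank, which matches the remark about its slightly lower efficiency.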

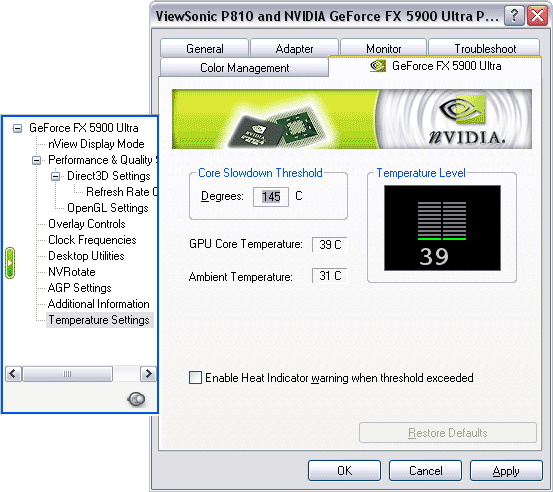

The card is very long, almost equal to the PCB of the Voodoo5 5500. The chip arrangement is reminiscent of the Matrox Parhelia:

The PCB is quite sophisticated, but there are only 8 layers plus shielding (against 12 on the NV30):

The card runs cooler than the NV30. You can't touch the GeForce FX 5800 Ultra (even the PCB) after an hour of operation in 3D, while this one gets only a little hotter than the pain threshold.

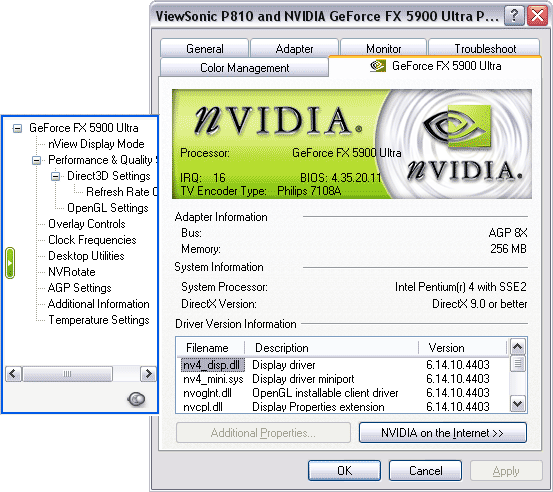

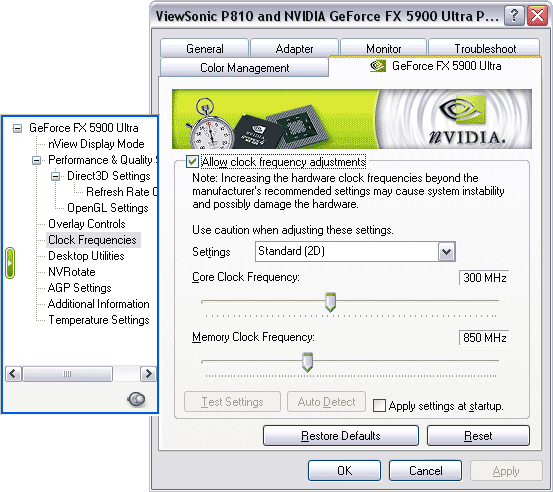

One side of the card is used entirely for the power supply system; there is also an external power supply connector.

As for the TV-out, the card incorporates the Philips 7108 codec, which potentially supports VIVO. See the review by Aleksei Samsonov and Dmitry Dorofeev on TV-out operation with this codec.

Overclocking

Overclocking is locked in the drivers: you can change the frequencies, but the new settings are then reset. Low-level overclocking is not possible either, because the latest versions of RivaTuner do not support the NV35.

Installation and drivers

Testbed:

NVIDIA drivers v44.03, VSync off, texture compression off in applications. DirectX 9.0a installed. Cards used for comparison:

Driver Settings

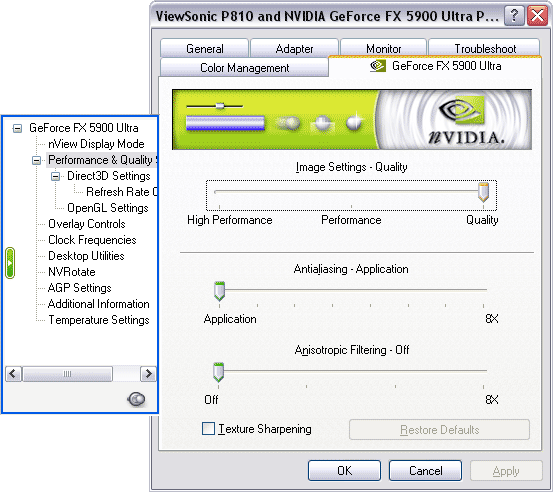

The IntelliSample modes are renamed again: Quality instead of Application, Performance instead of Balanced, High Performance instead of Aggressive and Performance. AA 8x was added (not 8xS for D3D only, but a mode supporting both D3D and OGL). All the other parameters are the same as before.

Test Results

2D graphics

In spite of the 400 MHz RAMDAC and other quality components, 2D quality can depend on everything but the specs. Moreover, it can change from sample to sample, even if they come from the same factory. Besides, monitors do not work equally well with all video cards. That is why our estimation of 2D quality is subjective. The 2D quality was tested on the ViewSonic P817-E monitor with a Bargo BNC cable. In my opinion, the card has excellent 2D quality! No visual problems at all in 1600x1200 at 85Hz and in 1280x1024 at 120Hz.

RightMark 3D (DirectX 9) Synthetic Tests

The test suite from RightMark 3D (which is under development now) currently includes the following synthetic tests:

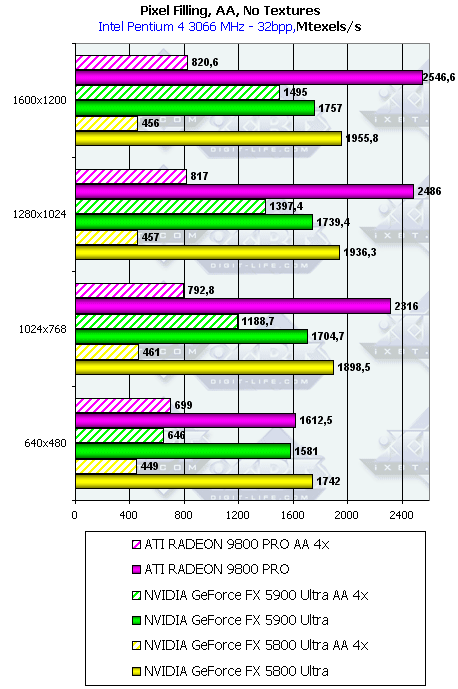

The philosophy of these synthetic tests and their description are given in the NV30 Review. Those who are eager to try the RightMark 3D synthetic tests can download the "command-line" test versions, which record the final XLS file in the XML format accepted by Microsoft Office XP:

Each archive contains a description of the test parameters and an example of a .bat file used for benchmarking accelerators. We welcome your comments and ideas as well as information on errors or improper operation of the tests. The first beta COMPLEX version of the package of the above RightMark 3D synthetic tests is available at http://www.rightmark3d.org/d3drmsyn/. This site is entirely dedicated to the RightMark 3D package. There you can also find the beta version of the RightMark Video Analyzer v0.4 (14.8Mb) package we are currently using for video card tests. Mailto: unclesam@ixbt.com.

Practical Tests

The accelerators we are going to test now are, in the manufacturers' opinion, meant for enthusiasts: NVIDIA GeForce FX 5900 Ultra (today's hero), NVIDIA GeForce FX 5800 Ultra and ATI RADEON 9800 PRO. The NVIDIA GeForce FX 5800 Ultra, whose architecture is the closest to that of the GeForce FX 5900 Ultra, is used as the reference model. The scores of the ATI RADEON 9800 PRO are given to find out whether NVIDIA is able to outpace ATI's flagship.

Pixel Filling

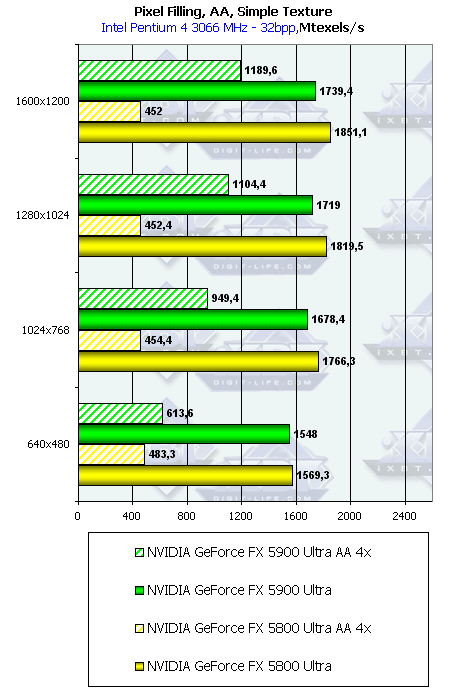

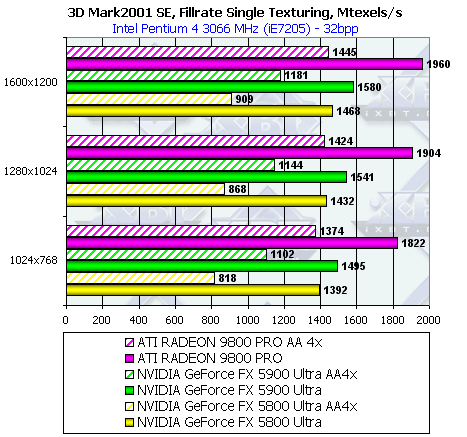

Without AA, the fillrate difference corresponds to the frequency difference between the GeForce FX 5900 Ultra and the GeForce FX 5800 Ultra (450 vs 500 MHz). In spite of the higher clock speed of the GFFX chips, the GeForce FX 5900 Ultra loses to ATI in all cases. For the GFFX chips the bus throughput is not a limiting factor in ordinary filling. The Radeon 9800 Pro takes the lead thanks to its 8 pixel pipelines, which can write up to 8 color and depth values per clock, and the maximum values demonstrated by ATI are limited exactly by this throughput. The FX can write only 4 full pixels per clock (color + depth + stencil buffer when needed). But the FX's 4 pixel processors have an interesting capability: if, while a shader is processed or a triangle is filled, no color value is saved and only the Z or stencil buffer values change, each pixel processor can produce two results per clock. That is, the chip can write 8 Z or stencil buffer values per clock. NVIDIA openly declares this advantage of the GeForce FX 5900 Ultra as a part of the UltraShadow technology. Such optimization will be of much help in games with stencil shadows, like DOOM III, where it can accelerate scene rendering almost 1.5 times. But our test deals with color values as well, which is why the results demonstrate 4 pixels rendered per clock.

In the AA mode the fillrate picture changes. Compared to the GeForce FX 5800 Ultra the 5900 card goes far ahead, and ATI's card can compete only in low resolutions. In higher resolutions the GeForce FX 5900 Ultra is the unrivaled leader in spite of having half as many pixel pipelines.

In general the situation is the same, but the peak values are lower. Let's see whether reality agrees with the theoretical limits based on the core clock speed and the number of pipelines:

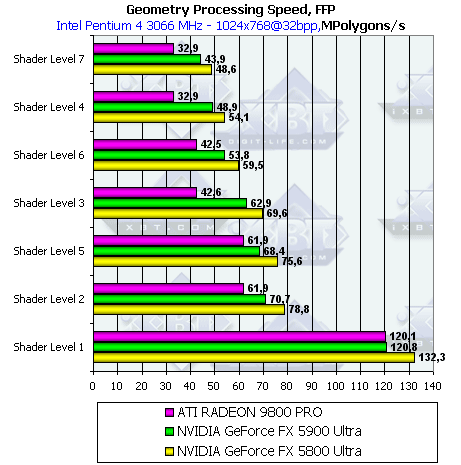

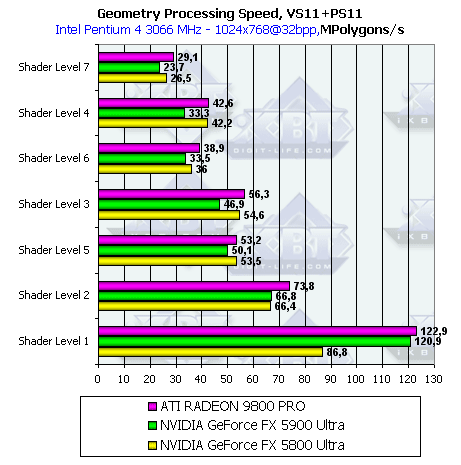

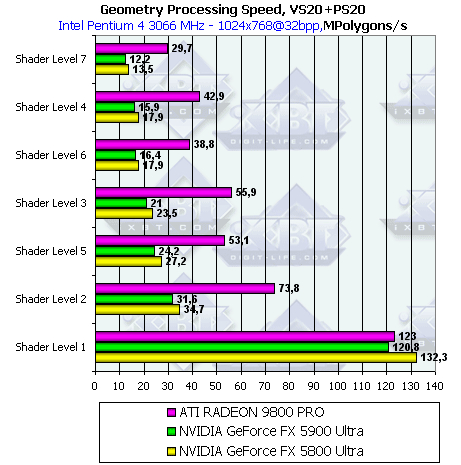

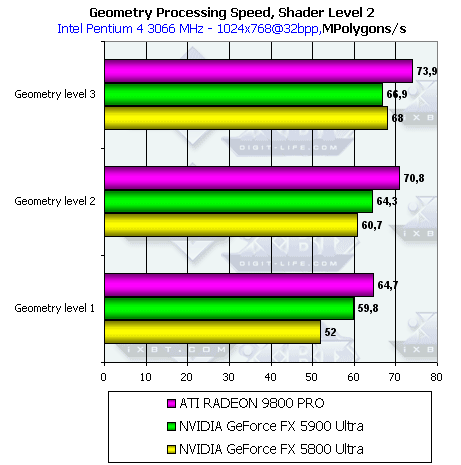

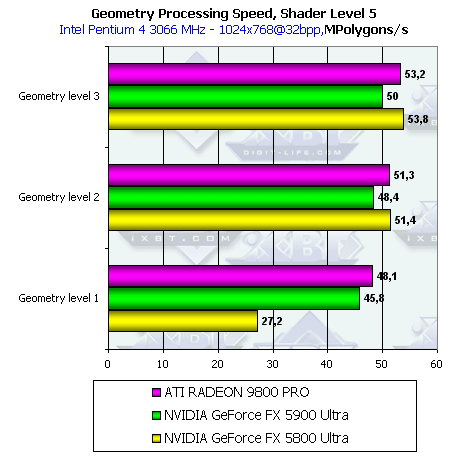
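The theoretical limits mentioned above follow from simple arithmetic: the core clock multiplied by the number of values written per clock. A minimal sketch using only the clock speeds and pipeline counts quoted in this review (the function and dictionary names are ours, for illustration):

```python
# Core clock (MHz) and full pixels written per clock, as quoted in the review.
CARDS = {
    "GeForce FX 5900 Ultra": (450, 4),
    "GeForce FX 5800 Ultra": (500, 4),
    "RADEON 9800 PRO": (380, 8),
}


def peak_fillrate(core_mhz, values_per_clock):
    """Theoretical peak, in millions of values written per second."""
    return core_mhz * values_per_clock


color_fill = {name: peak_fillrate(mhz, ppc) for name, (mhz, ppc) in CARDS.items()}

# Z/stencil-only passes on the FX: 8 values per clock instead of 4.
fx5900_z_only = peak_fillrate(450, 8)

for name, rate in color_fill.items():
    print(f"{name}: {rate} Mpixels/s")
print(f"GeForce FX 5900 Ultra, Z/stencil only: {fx5900_z_only} Mvalues/s")
```

These figures are ceilings only; the measured results are expected to stay below them, and how closely they approach the ceiling is what the charts show.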

Geometry Processing Speed

The results are sorted by the complexity of the lighting model used. The lower group represents the simplest variant, which corresponds to the accelerator's peak vertex throughput.

The difference in scores equals the frequency difference: the GeForce FX 5900 Ultra is 10% slower than the GeForce FX 5800 Ultra (450 vs 500 MHz).

In the simplest case (Shader Level 1) the GeForce FX 5800 Ultra scores much lower than with the functionally equivalent fixed TCL settings. The GeForce FX 5900 Ultra shows comparable results with the fixed TCL and VS 1.1; since the driver version is the same, the microcode can't have been optimized. With the more complex shader the difference is 10% again.

Again, the difference between the NV30 and the NV35 equals the frequency difference. When Shader Level 1 is compiled for VS 2.0, the NV30 shows its true results, as opposed to the lowered ones with VS 1.1. The ATI RADEON 9800 PRO processes VS 2.0 with loops as efficiently as VS 1.1, though earlier the Radeon 9700 Pro was slower. Probably, the driver unrolls the loops into one big shader program when compiling the shader into microcode. The NV3x series chips have to emulate loops with dynamic branches, using microcode developed for dynamic execution (dependent on the data of the current vertex). That is why the loop overhead is considerable for the NV3x! Dynamic control of the instruction flow is bound to cause noticeable delays in loop execution. Speed is the price of flexibility. But if it's true that the ATI RADEON 9800 PRO unrolls shaders, this optimization has a downside. The microcode of such a shader takes much more space than a loop does, so the driver can keep fewer shaders in the chip's cache, and the time taken to load a new shader into the chip can bring all the optimizations to naught. And if the constant that sets the number of loop iterations is changed, the driver has to regenerate and reload the shader's microcode.

When the detail level is low (i.e. the geometry unit is less loaded than the pixel pipelines) the GeForce FX 5900 Ultra outdoes the 5800 Ultra, probably due to the much higher bus throughput and the optimized frame buffer cache. The 5800 Ultra takes the lead when the geometry unit gets more load.

Hidden Surface Removal

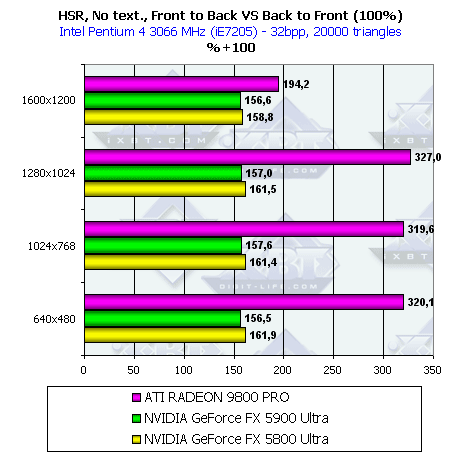

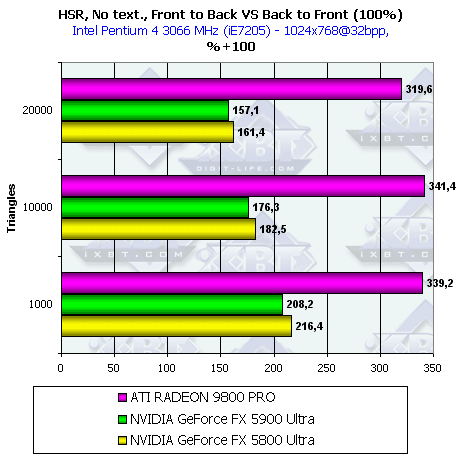

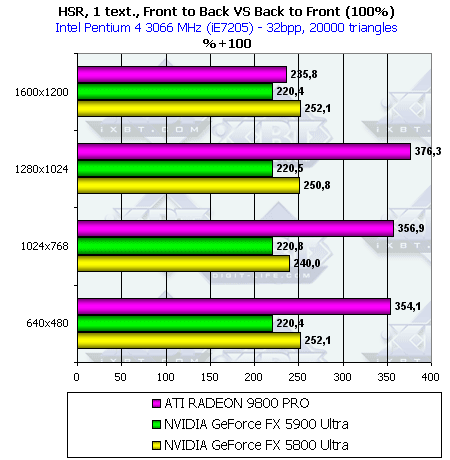

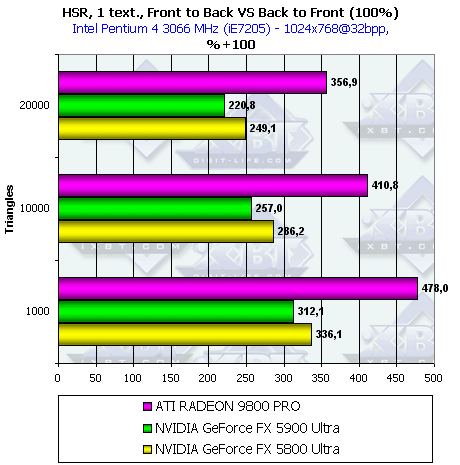

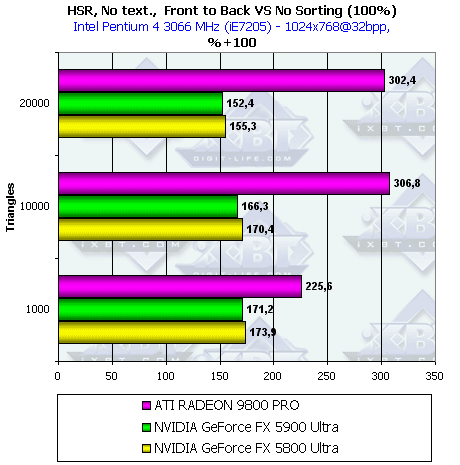

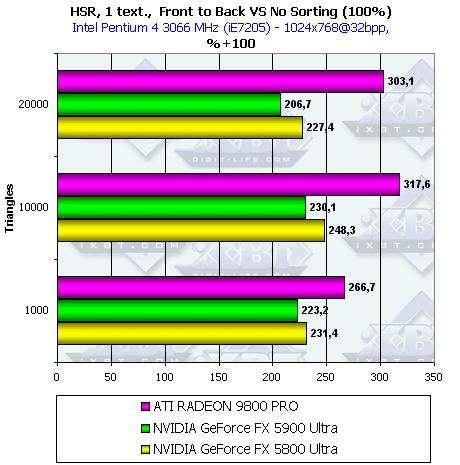
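Hidden surface removal pays off most when geometry arrives front to back, because the depth test can then reject everything behind the first surface. A minimal sketch for a single pixel, assuming a plain less-than depth test; the function name and the layer depths are made up for illustration and are not taken from the tests:

```python
def shaded_layers(layer_depths):
    """Count layers that pass a less-than depth test, in drawing order.

    Each passing layer costs a full pixel (shading plus a buffer write);
    each failing layer is rejected before that work is done.
    """
    z_buffer = float("inf")
    shaded = 0
    for z in layer_depths:
        if z < z_buffer:
            z_buffer = z
            shaded += 1
    return shaded


# Hypothetical depths of five surfaces covering the same pixel.
layers = [0.9, 0.7, 0.5, 0.3, 0.1]

back_to_front = shaded_layers(sorted(layers, reverse=True))  # worst case
front_to_back = shaded_layers(sorted(layers))                # best case

print(f"back to front: {back_to_front} layers shaded")
print(f"front to back: {front_to_back} layer shaded")
```

In the worst case every layer is shaded, in the best case only the nearest one; an unsorted scene falls somewhere in between.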

The HSR is less effective on the NV35 than on the NV30 because of the higher bus throughput: even in the worst case (back-to-front sorting) the bus is able to pump through the increased data volume. But the R300 has the best HSR effectiveness anyway, since it uses a hierarchical structure and often rejects geometry at higher levels, while the NV30 has only one decision-making level combined with the tiles used for depth information compression. In 1600x1200 the HSR becomes much less effective on the R300, probably because of the limited cache of the hierarchical buffer, which is not used in this resolution; decisions are then made only at the lowest level, combined with the compressed blocks in the depth buffer, as in the NV3x.

HSR effectiveness vs. scene complexity

In the NV3x, with only one tile level, the fewer the polygons, the more effective the HSR. The R300 keeps to the golden mean in this respect: its HSR only starts to spread its wings on scenes of average complexity.

All the chips operate more effectively thanks to the early Z cull. With textures taken into account, all the chips prefer scenes with fewer polygons. In all other respects, the situation is similar to the one above.

The relative HSR effectiveness of the different chips is the same, but the gain from the HSR is lower. Still, the gain is more than twofold when textures are used. So, even if the scene is originally unsorted, the gain is great. If you want to benefit from the HSR you should sort scenes before rendering. The speed will rise several times!

Pixel Shading

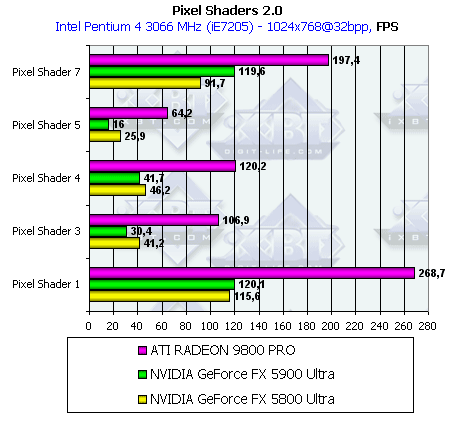

The test using PS 2.0 demonstrates odd results for NVIDIA's chips, especially since DirectX 9 pixel shader performance is claimed to be doubled in the NV35. Taking into account that the clock speed of the GeForce FX 5900 Ultra is 10% lower, we have the following performance gap at the same frequency in different tests:

If you look into the shader code you will see that shaders 1 and 7 (as well as 6) use far more texture samples relative to arithmetic shader instructions than the other tests do (moreover, shaders 6 and 7 also sample data from 3D textures). So, the performance grows due to the higher bus throughput and improved caching algorithms. But why does performance drop in the other tests if the announced growth is twofold? The point is that the GeForce FX 5900 Ultra executes floating-point operations in PS 2.0 with true 32bit precision (128 bits per register), contrary to the GeForce FX 5800 Ultra, where the driver limits the precision to 16 bits (64 bits per register). Later we will test the GeForce FX 5900 Ultra chip in PS 2.0 more carefully. As to the Radeon 9800 Pro, it remains the leader.

Point Sprites
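On the PS 2.0 precision point above: the register sizes follow from four components per register, and the cost of dropping to 16 bits can be seen by round-tripping a value through both formats. A minimal sketch, assuming four-component registers (which is what 128 bits at 32 bits per component implies) and using the standard IEEE half and single formats from Python's `struct` module as stand-ins for the hardware formats:

```python
import struct

COMPONENTS = 4  # assumption: x, y, z, w per shader register


def register_bits(bits_per_component):
    """Size of one shader register in bits."""
    return COMPONENTS * bits_per_component


def round_trip(value, fmt):
    """Store a Python float in the given binary format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


value = 0.1  # arbitrary test value
err16 = abs(round_trip(value, "<e") - value)  # 16bit half precision
err32 = abs(round_trip(value, "<f") - value)  # 32bit single precision

print(f"16bit: {register_bits(16)} bits per register, error {err16:.2e}")
print(f"32bit: {register_bits(32)} bits per register, error {err32:.2e}")
```

The 16bit format halves the register size at the price of a much larger rounding error, which is the trade-off behind the 5800 Ultra's higher scores in the arithmetic-heavy shaders.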

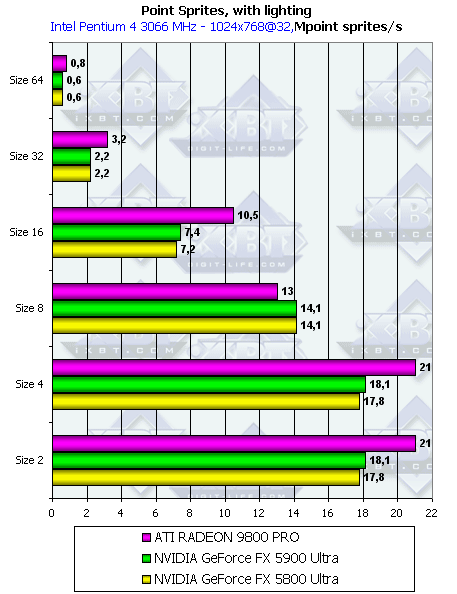

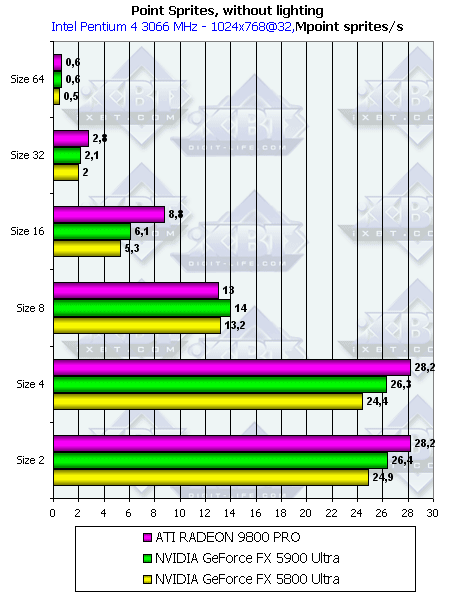

As expected, lighting influences only small sprites; as they grow, the performance becomes limited by the fillrate (at a size of approximately 8). So, for rendering systems consisting of a large number of particles, the sprite size should be less than 8. By the way, size 8 is optimal for NVIDIA's chips, as they edge out the Radeon 9800 Pro there. This is probably connected with the size of the tiles cached in NVIDIA's chips. The GeForce FX 5900 Ultra also works better with the frame buffer: with lighting (i.e. when the load on the geometry unit is increased) it runs on a par with the 5800 Ultra, and it takes the lead when the load is mostly on the frame buffer unit. Still, the Radeon 9800 Pro is ahead in most modes thanks to its 8 pixel pipelines, each rendering 1 pixel per clock with one texture applied.

3D graphics, 3DMark2001 SE, 3DMark03 synthetic tests

All measurements in 3D are taken at 32bit color depth.

Fillrate in 3DMark2001 SE

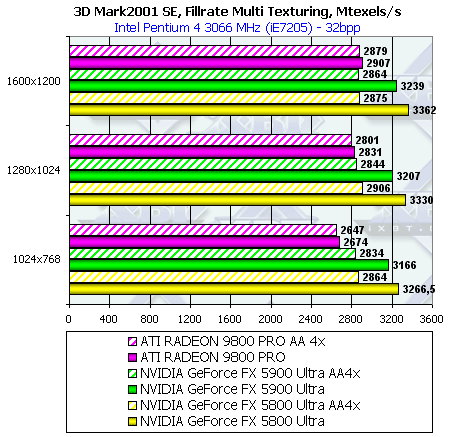

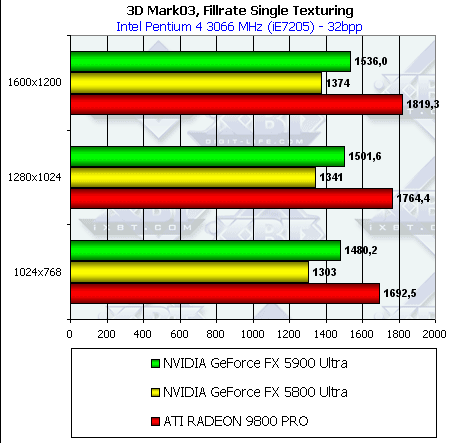

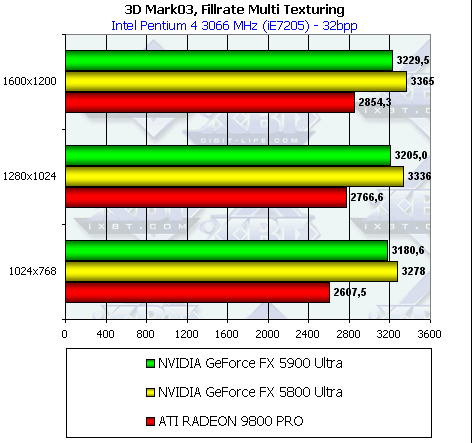

The GeForce FX 5900 Ultra performs better in the AA mode, though the Radeon 9800 is still more efficient in spite of its lower bus bandwidth. Contrary to RightMark 3D, in 3DMark 2001 the GeForce FX 5900 Ultra outruns the 5800 Ultra even without AA, since 3DMark 2001 needs a higher bus throughput.

Multitexturing:

With multitexturing the NVIDIA chips are faster than the Radeon 9800 Pro. As the number of textures grows, texture sampling and filtering become more important, and they depend mostly on the core clock speed rather than on the number of pixel pipelines or the bus bandwidth. In this case the chips have 8 texture units each, and the chip's clock speed becomes the determining factor. That's why the NV30 (500 MHz) is the best, followed by the NV35 (450 MHz) and then the R350 (380 MHz). In the AA mode the performance of the NV3x chips falls more than that of the R300, in spite of the frame buffer compression used with MSAA in all the chips and the greater memory bandwidth of the NV35.

Fillrate in 3DMark2003

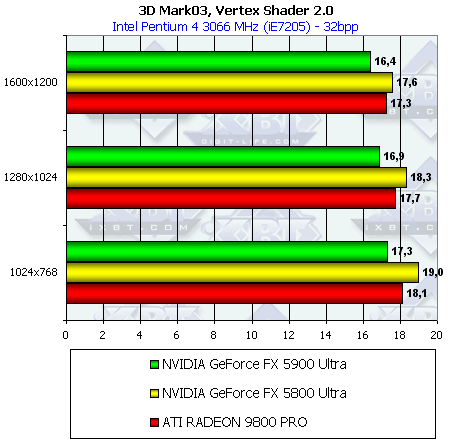

The picture is similar to 3DMark 2001; the absolute values are a little lower: the test is less precise, and the figures are farther from the limiting values.

Multitexturing:

Similar to 3DMark 2001.

Pixel Shader 2.0

Simple variant: In this test the GeForce FX 5900 Ultra outdoes the 5800 Ultra, and in higher resolutions it outpaces the Radeon 9800 Pro. This test is functionally close to Shader Levels 6 and 7 of the RightMark 3D Pixel Shading test, which also use procedural textures. That is probably why it depends heavily on the texture sampling speed, and why the GeForce FX 5900 Ultra runs faster than its predecessor. If we continue the comparison with Shader Levels 6 and 7 of RightMark 3D, we see that in RightMark 3D the Radeon 9800 Pro beats both NVIDIA chips, while it has no such advantage in 3DMark03. Well, the optimization is obvious, especially if you look at the GeForce FX 5800 Ultra with driver v43.45.

Vertex Shaders 1.1

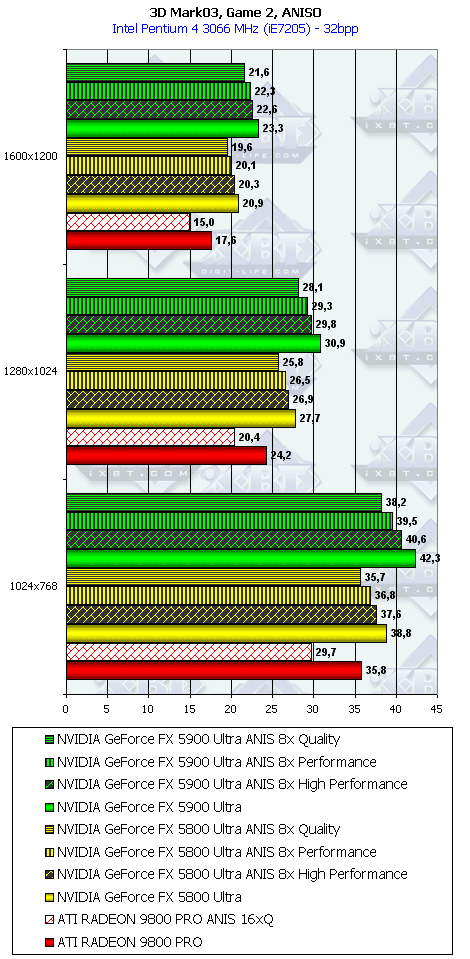

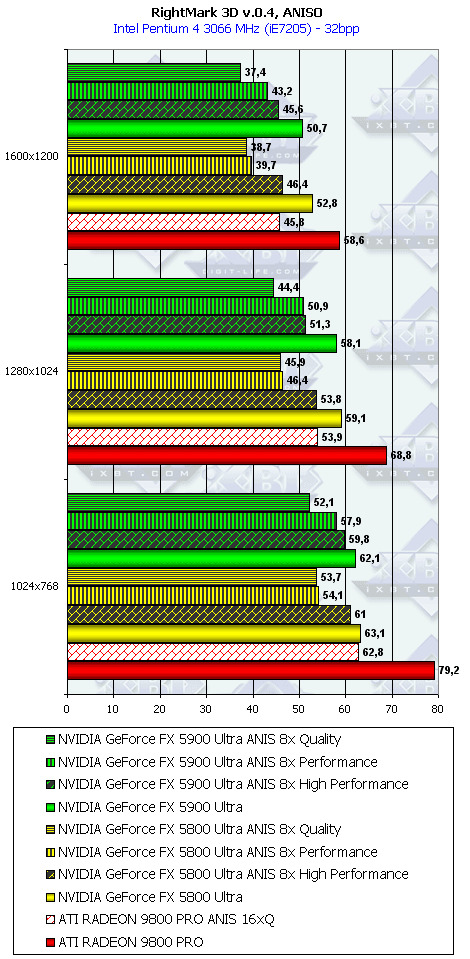

Although the VS test used in 3DMark03 differs a lot from the one in RightMark 3D, the scores are similar: the GeForce FX 5900 Ultra loses about 10% to the 5800 Ultra, and the Radeon 9800 Pro stays on the same level as NVIDIA's chips.

Anisotropic Filtering

The anisotropy algorithms haven't changed. There are the same three modes mentioned above (see the driver settings). The screenshots will be shown a bit later, in the quality section.

Summary on synthetic tests

Let's sum up the estimation of various units of the NV35 in the synthetic tests.

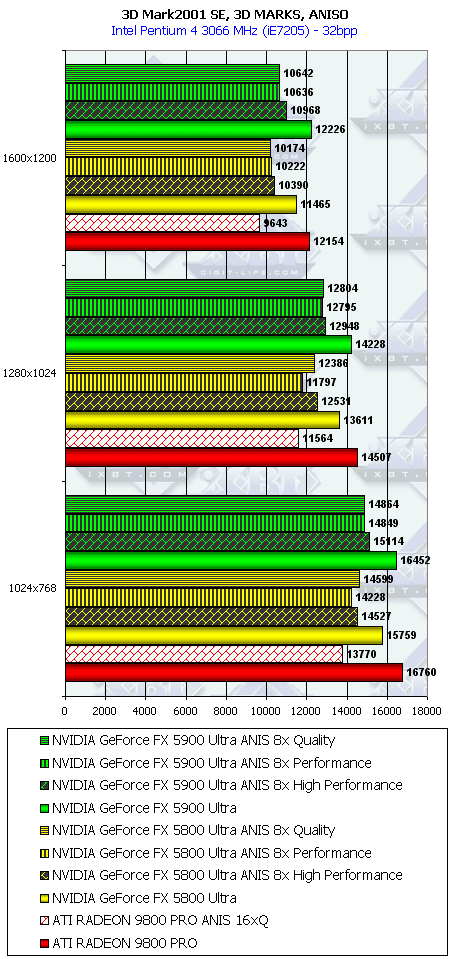

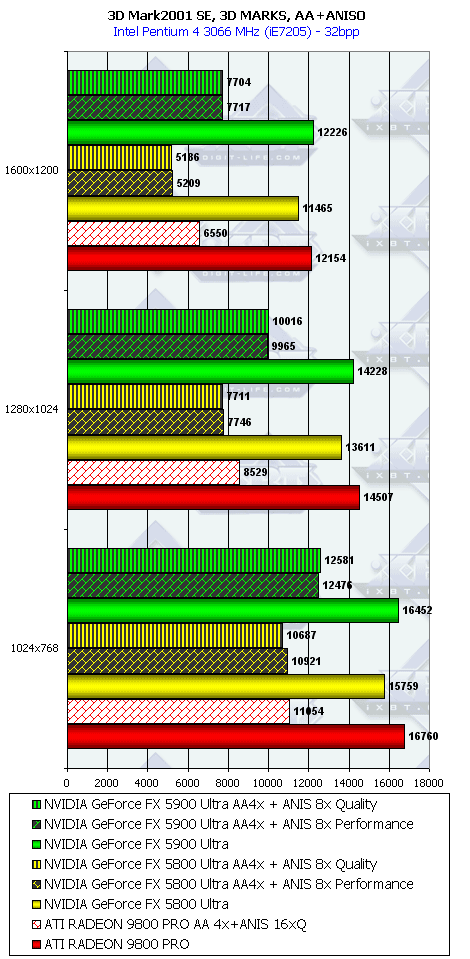

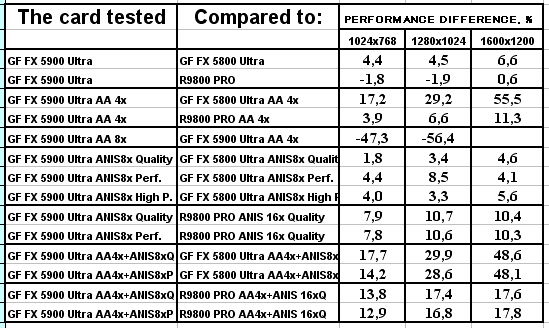

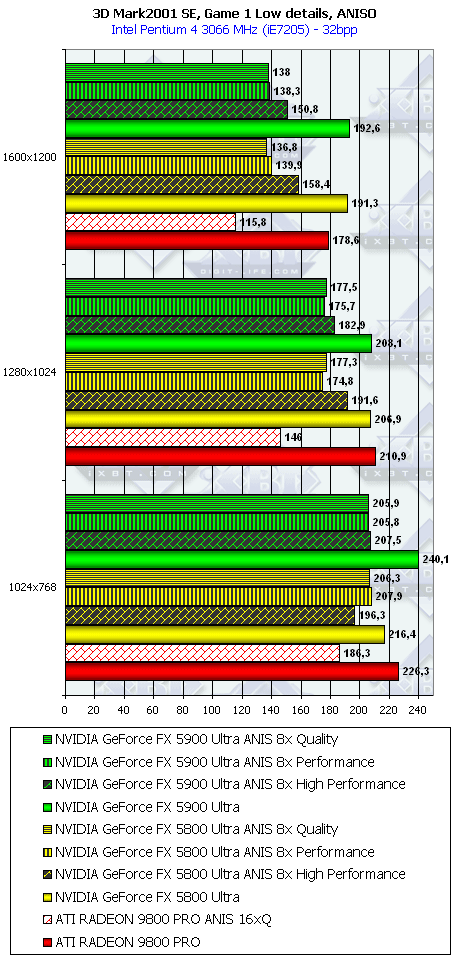

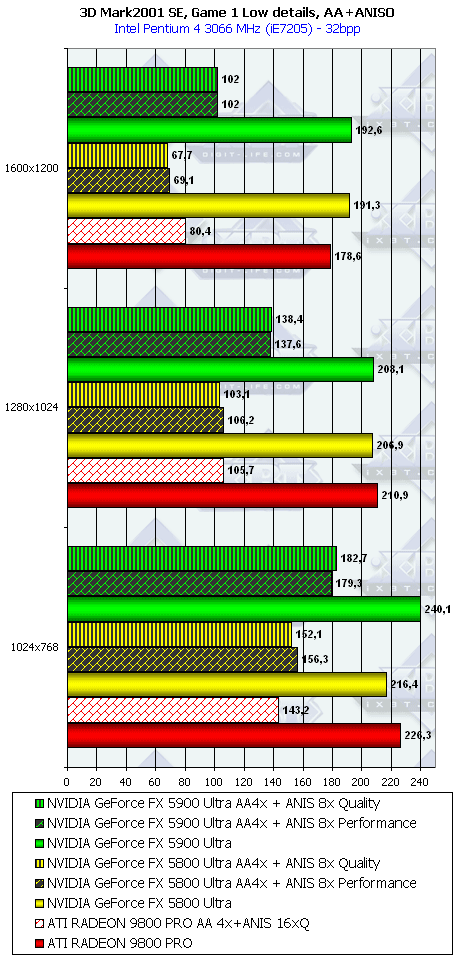

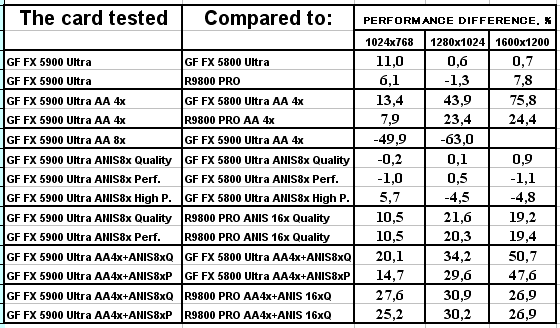

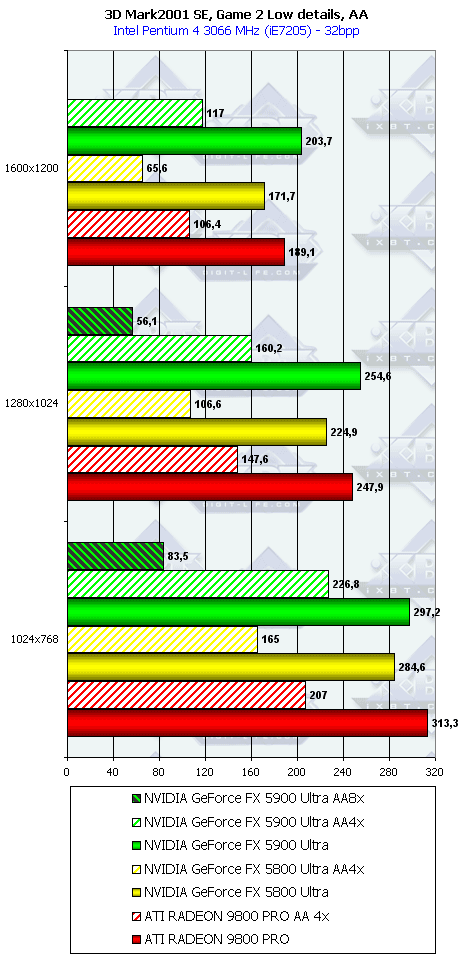

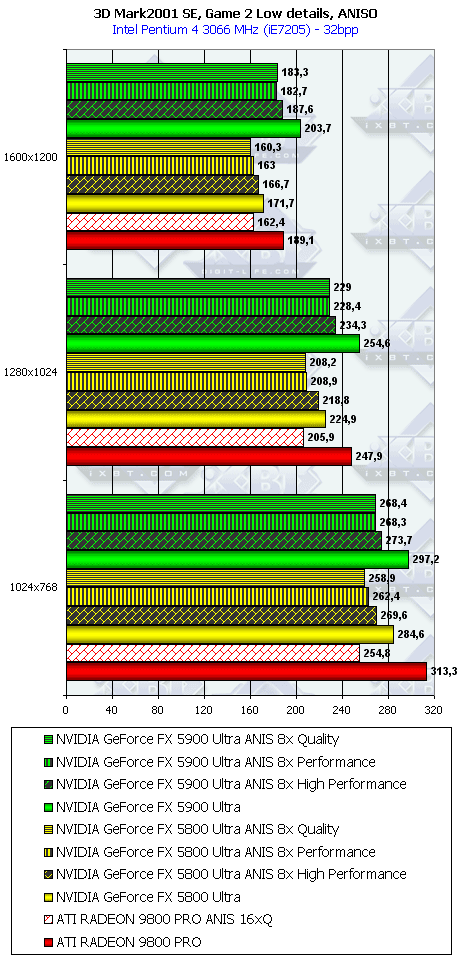

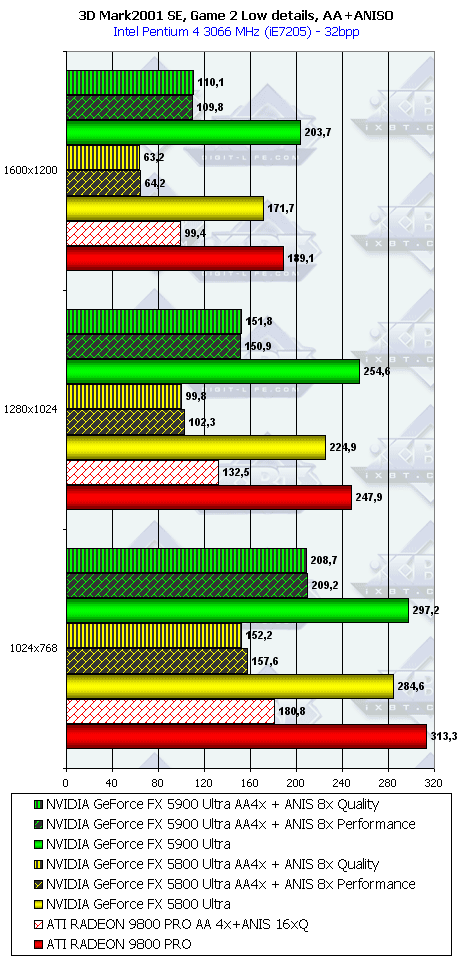

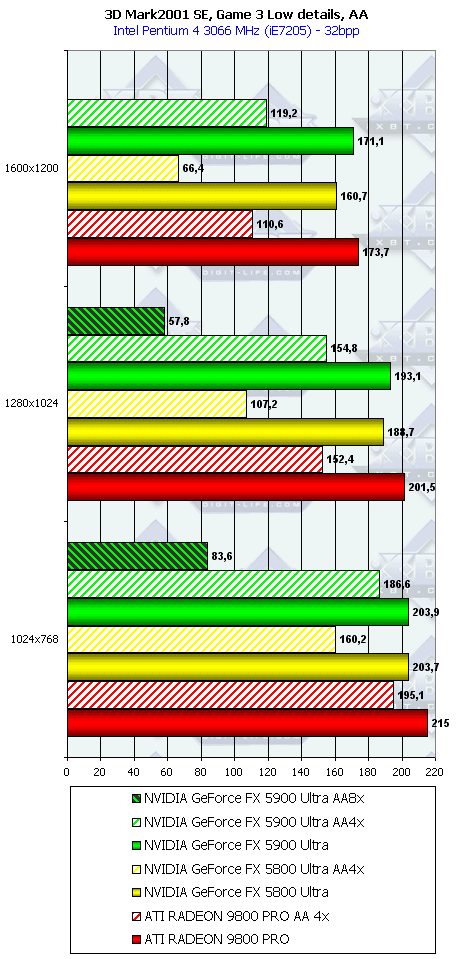

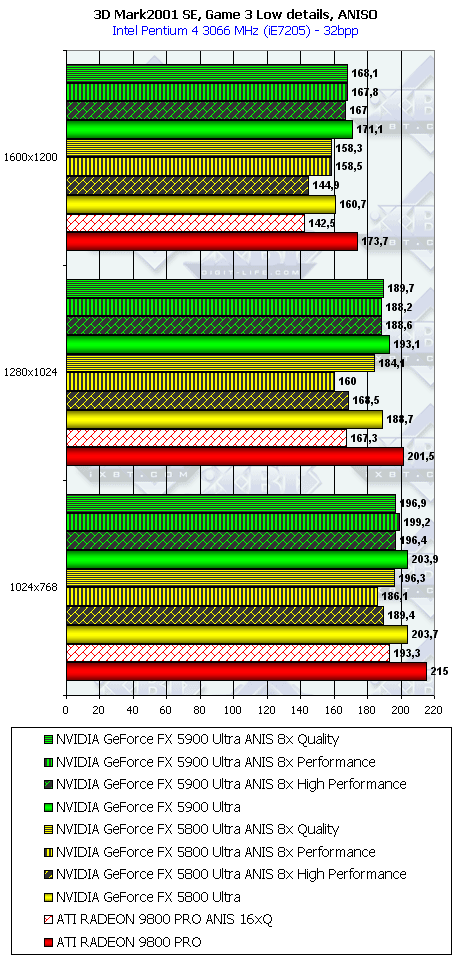

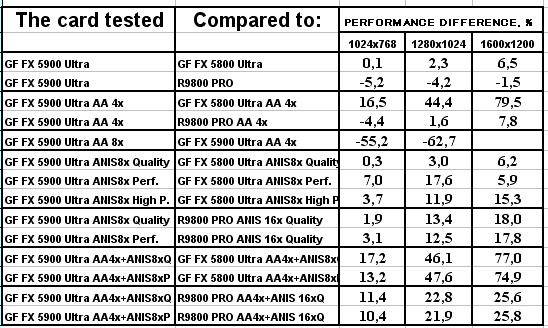

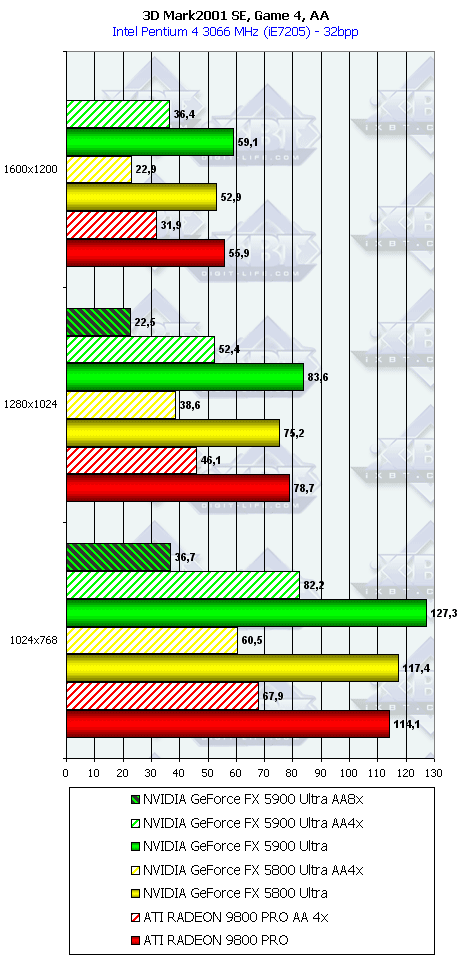

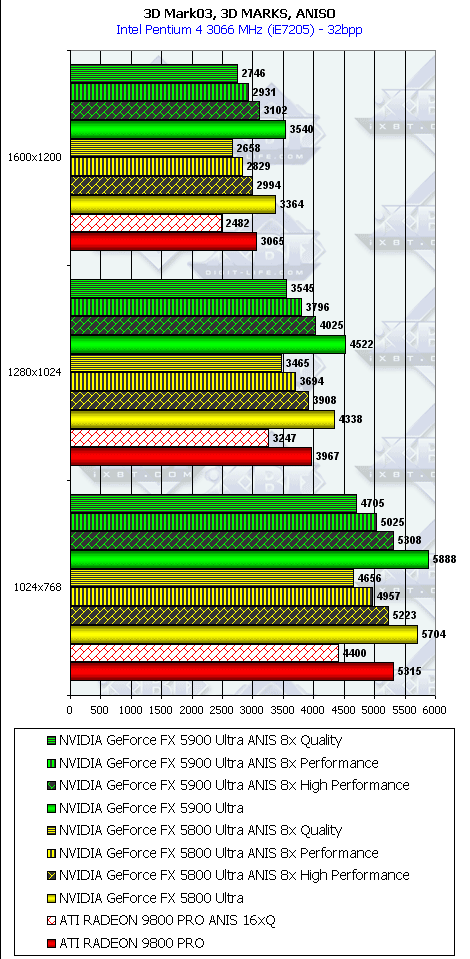

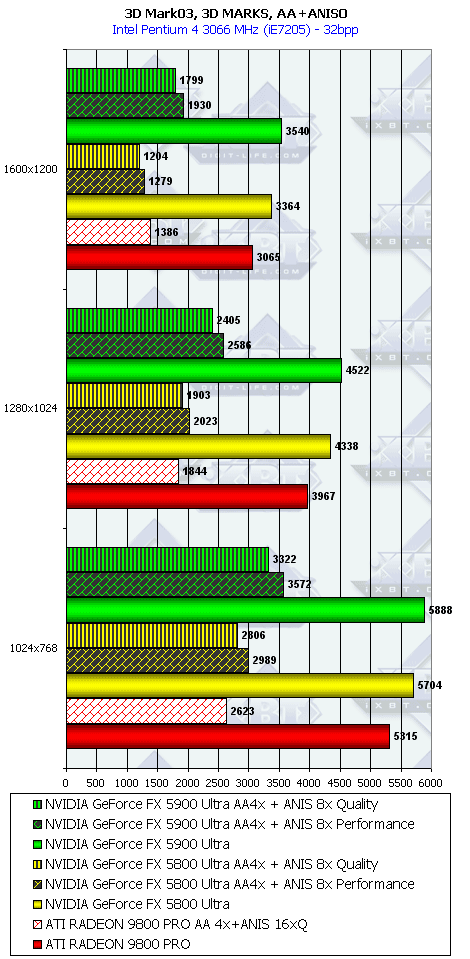

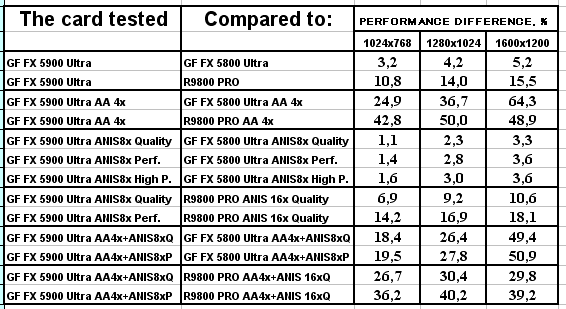

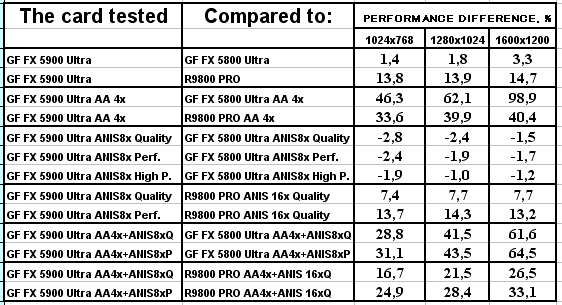

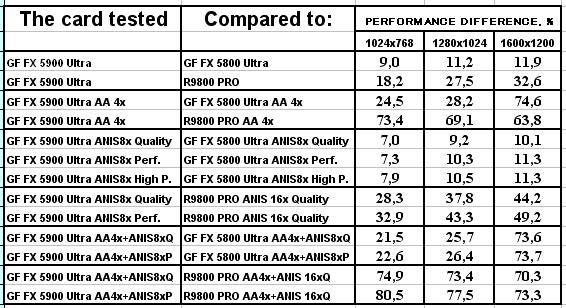

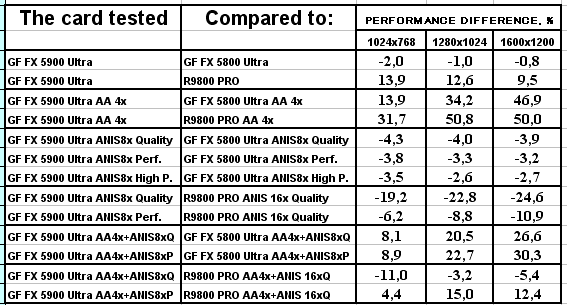

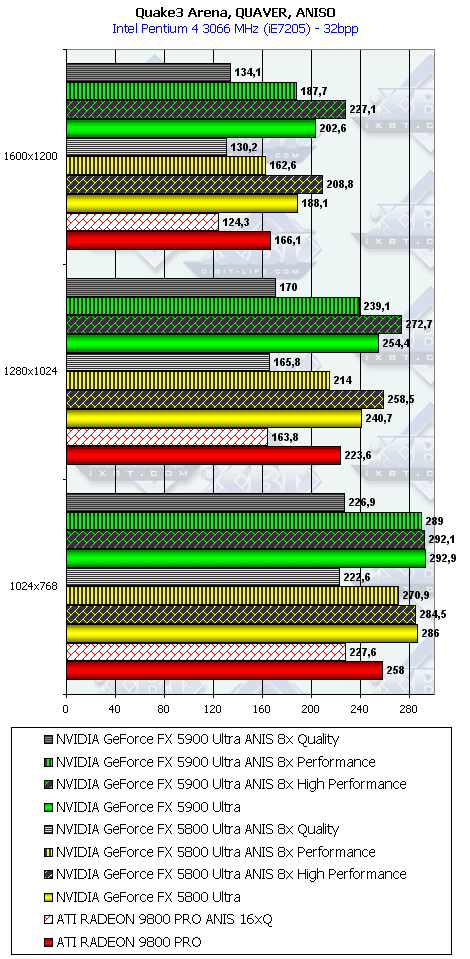

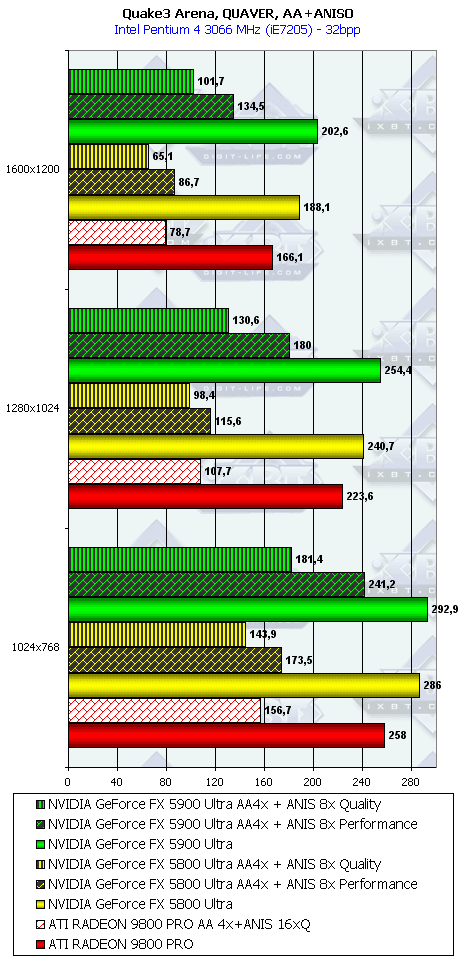

3D graphics, 3DMark2001 game tests

Anisotropy was set to 16x for ATI's cards and to 8x for NVIDIA's because the algorithms of this function differ considerably (we discussed this in the NV30 Review). There is just one criterion: maximum quality. The screenshots were shown several times earlier. Besides, it's interesting to compare NVIDIA's different anisotropy modes (Application, Balanced, Aggressive) with ATI's high-quality mode; our readers can estimate how speed and quality correlate from the screenshots in the NV30 Review that demonstrate anisotropic quality.

3DMark2001, 3DMARKS

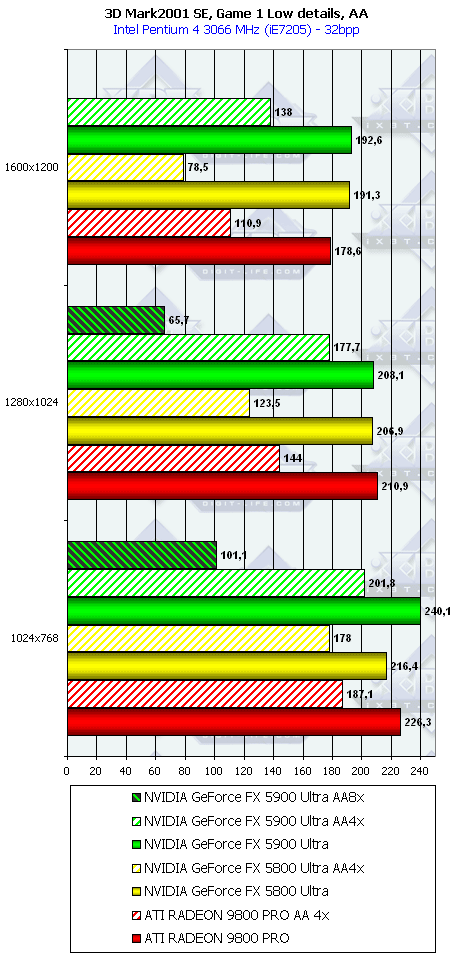

3DMark2001, Game1 Low details

3DMark2001, Game2 Low details

3DMark2001, Game3 Low details

3DMark2001, Game4

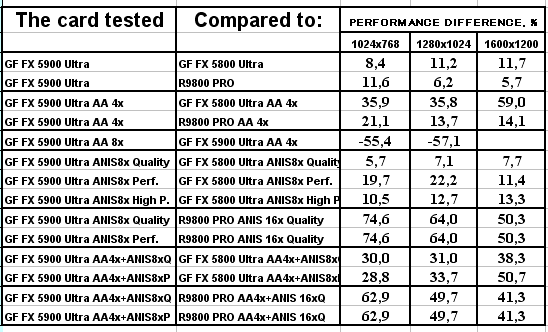

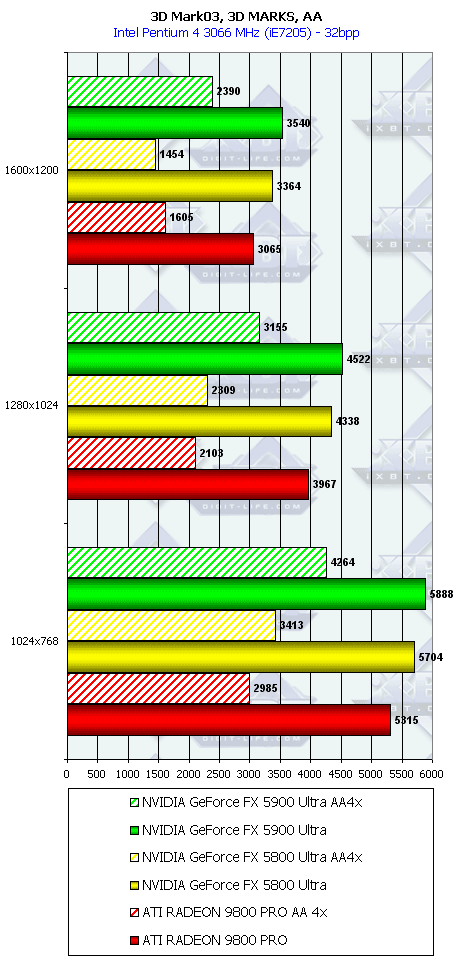

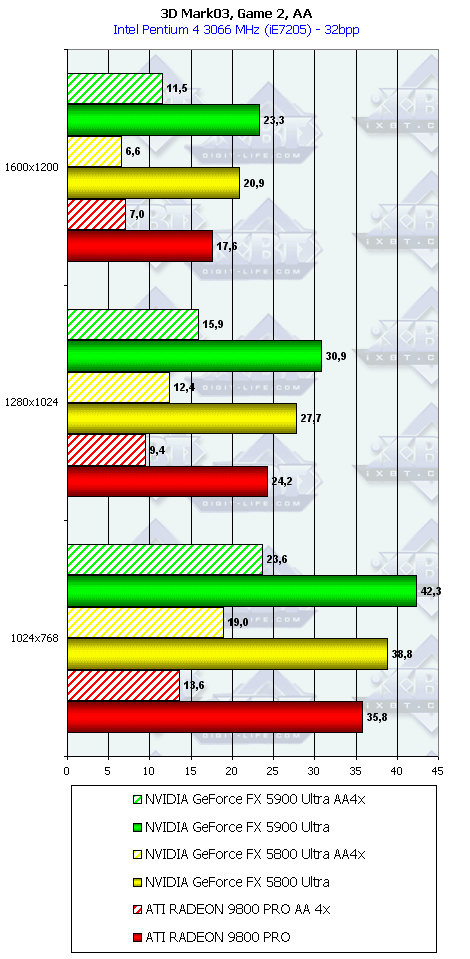

3D graphics, 3DMark03 game tests

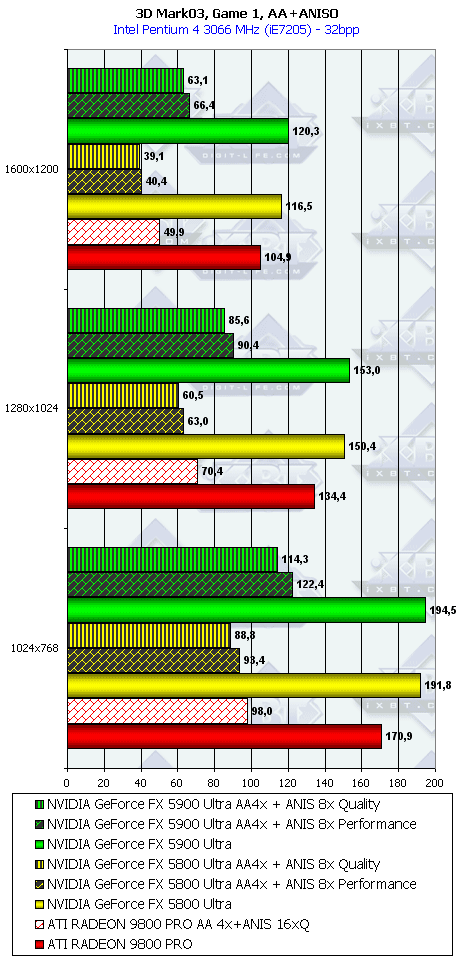

3DMark03, 3DMARKS

3DMark03, Game1 Wings of Fury:

3DMark03, Game2 Battle of Proxycon:

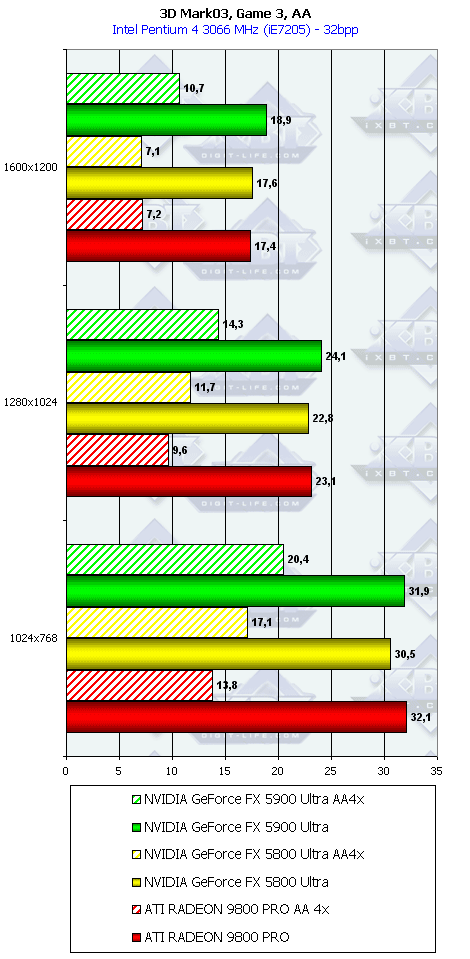

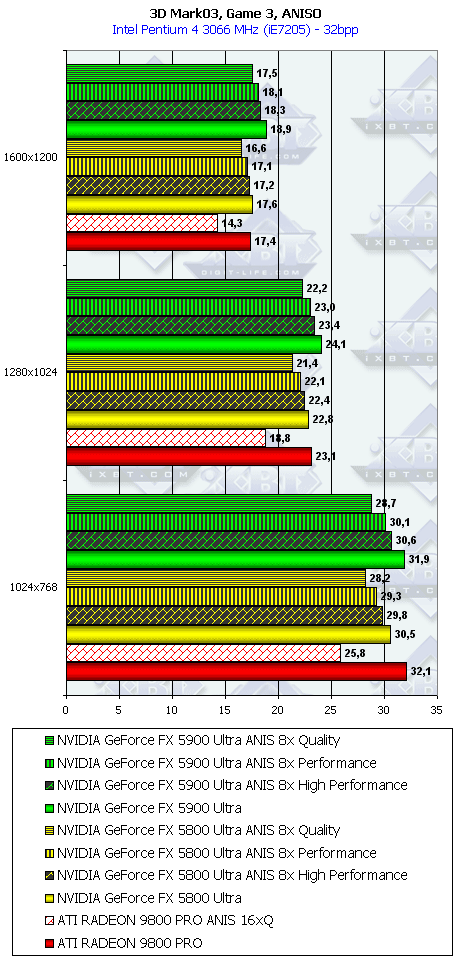

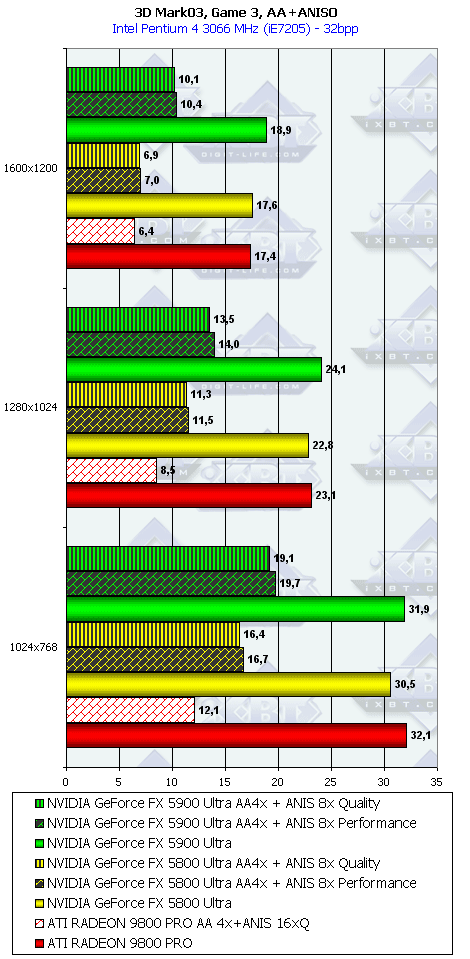

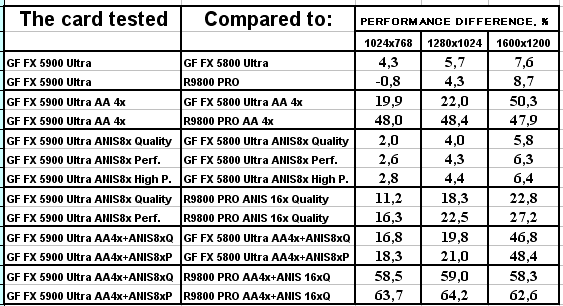

3DMark03, Game3 Trolls' Lair:

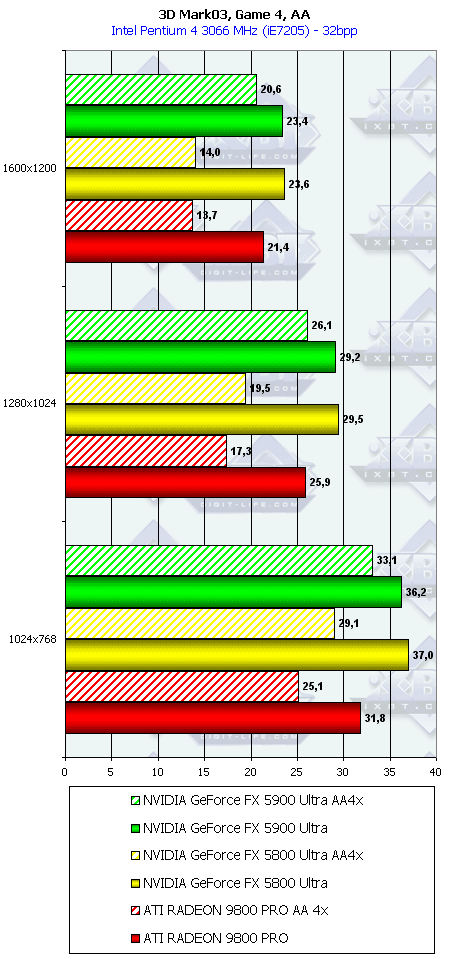

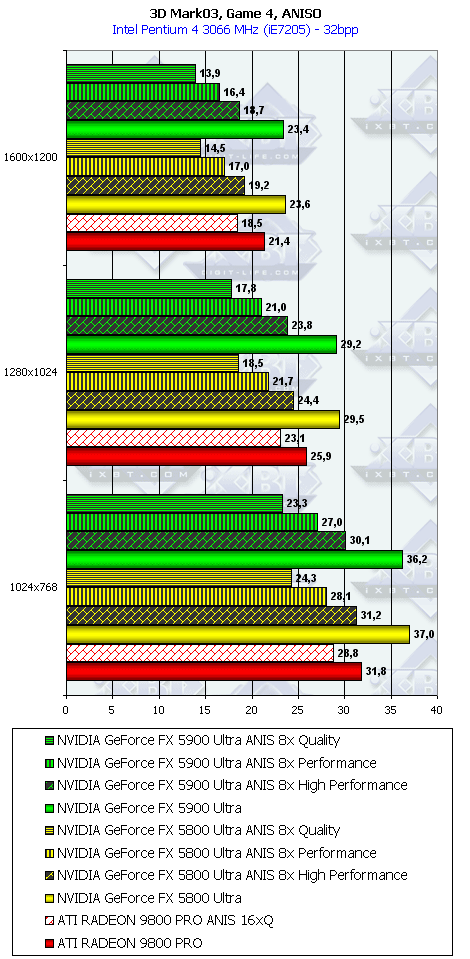

3DMark03, Game4 Mother Nature:

3D graphics, game tests

3D games used to estimate 3D performance:

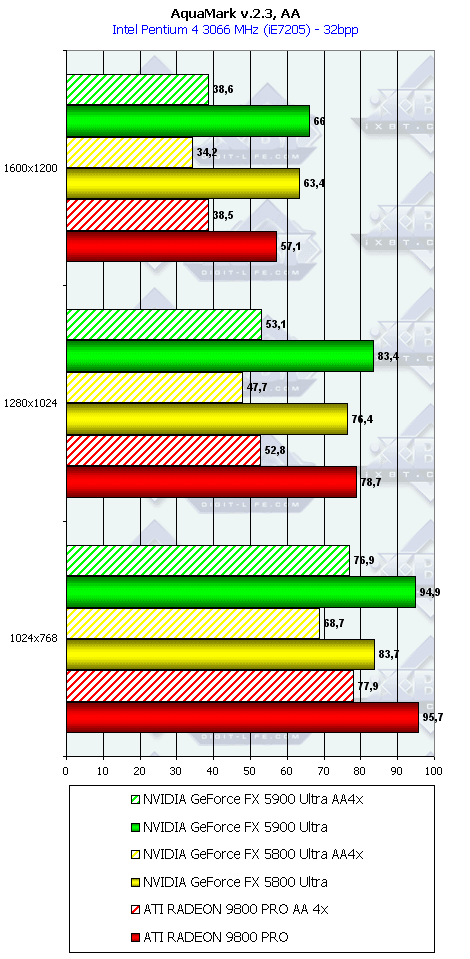

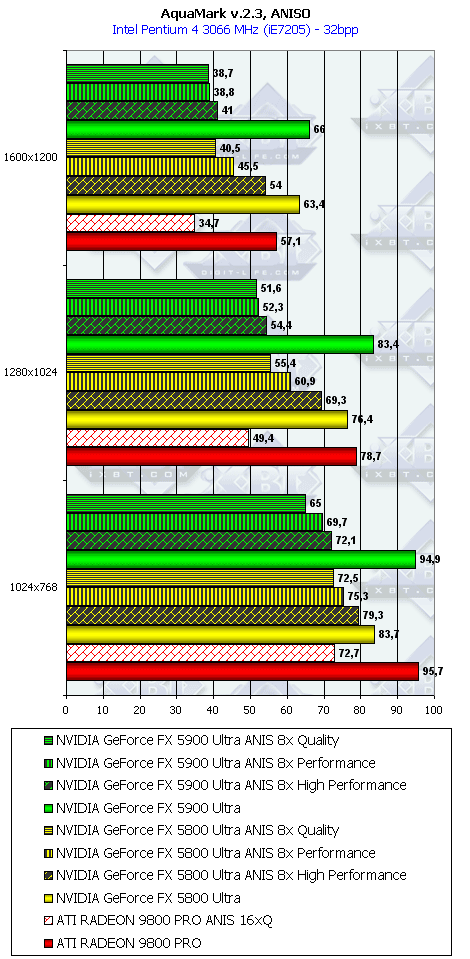

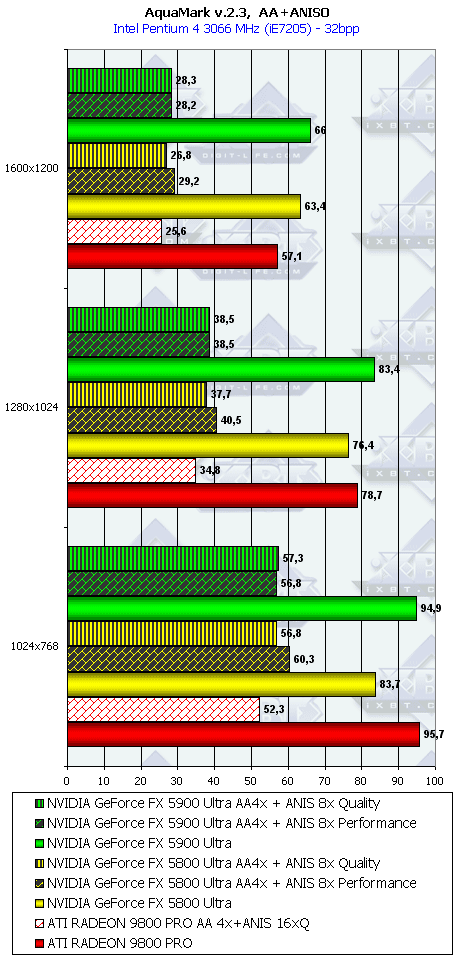

The AquaMark (Massive Development) test demonstrates how the cards operate with DirectX 8.1, shaders and HW T&L.

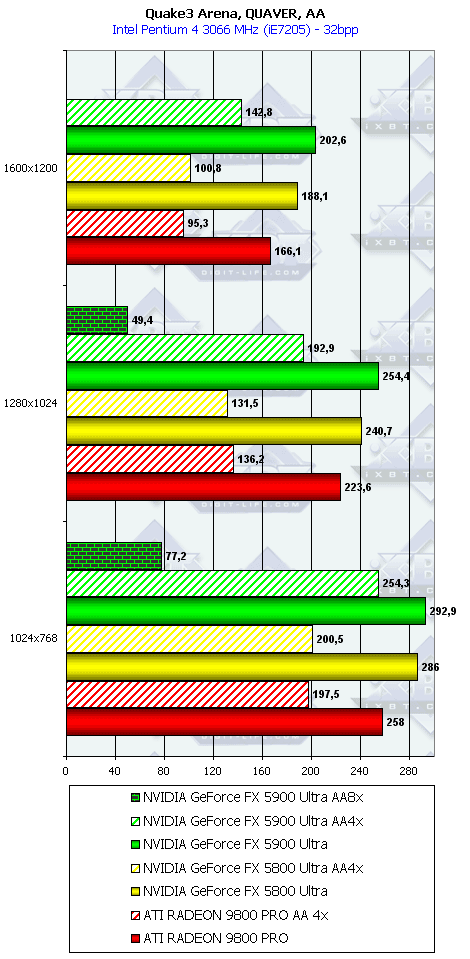

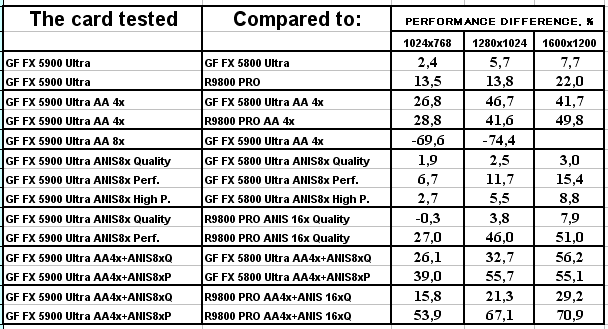

Quake3 Arena, Quaver

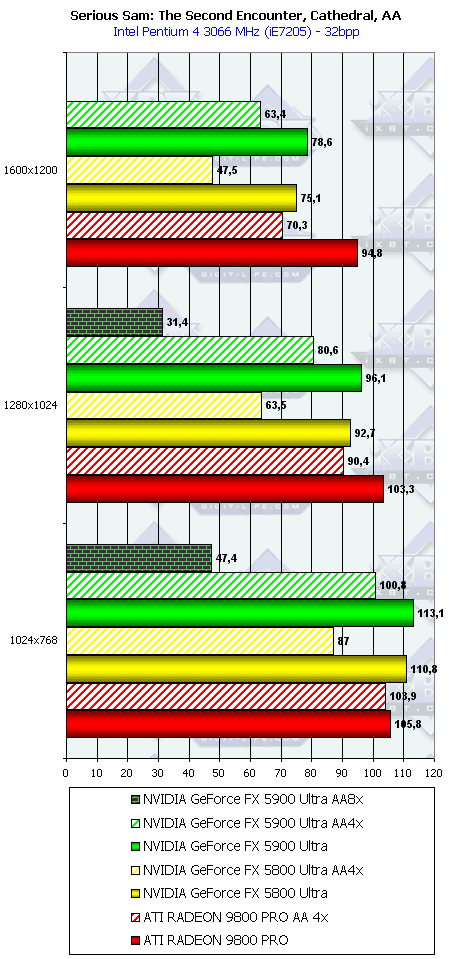

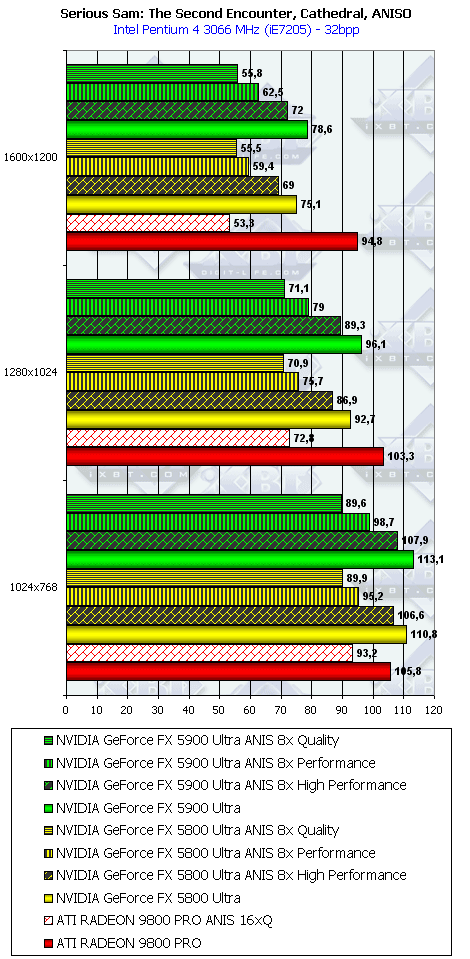

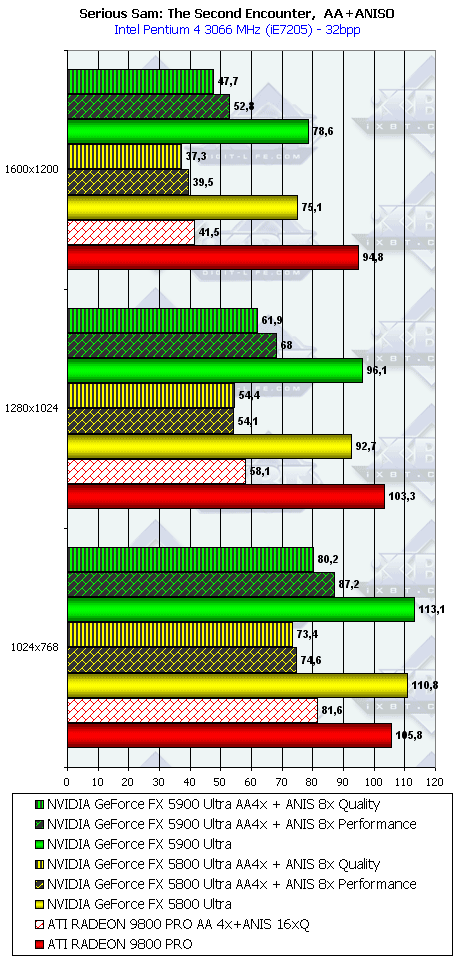

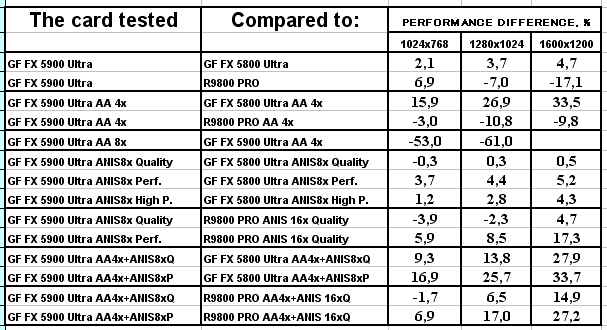

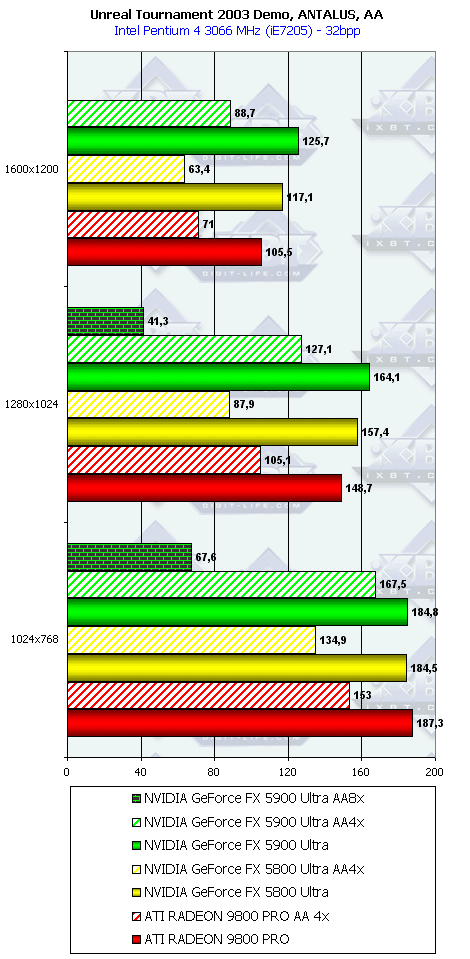

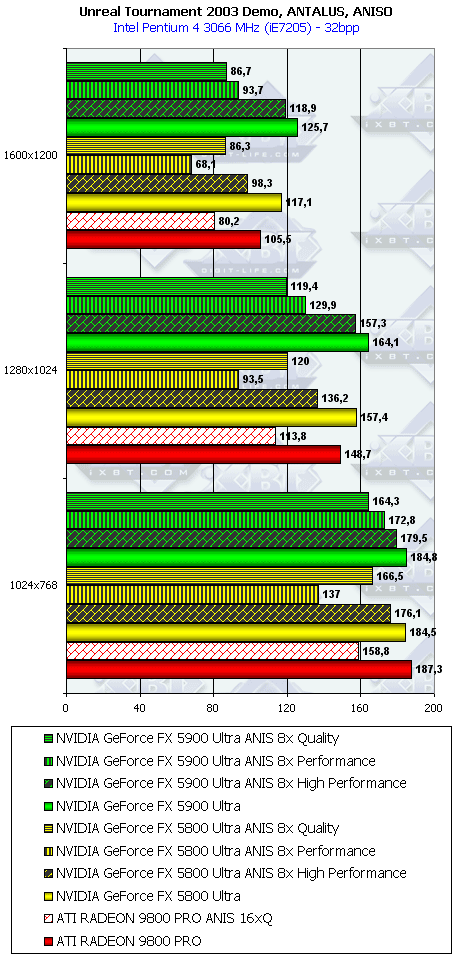

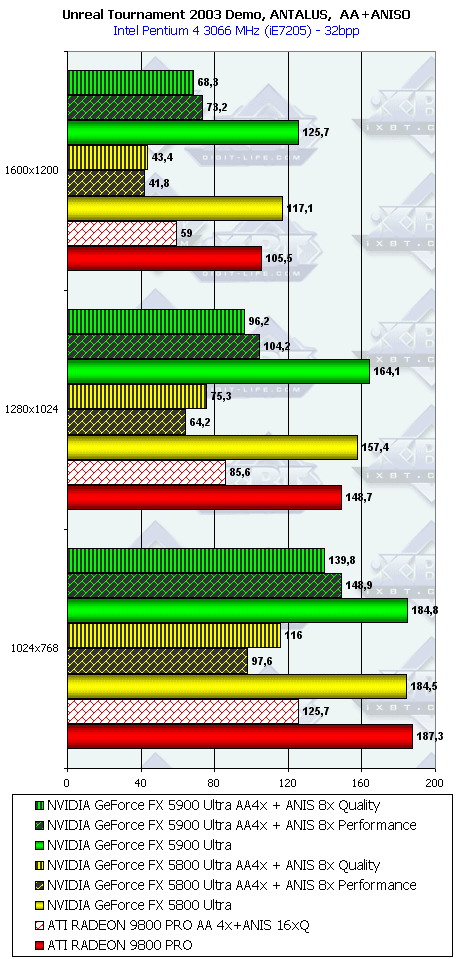

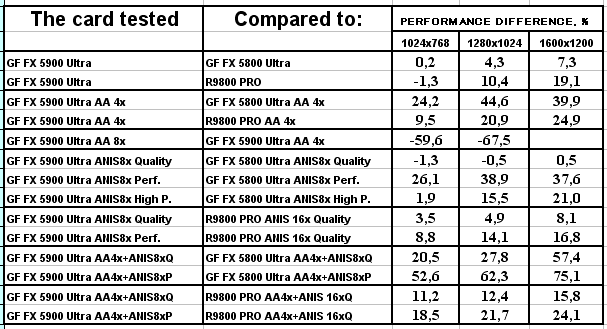

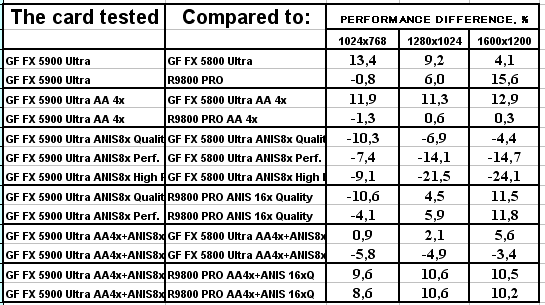

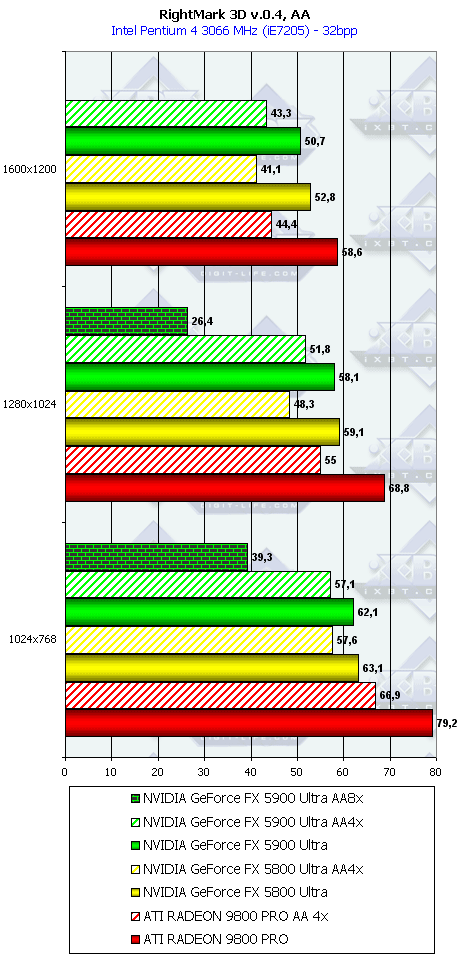

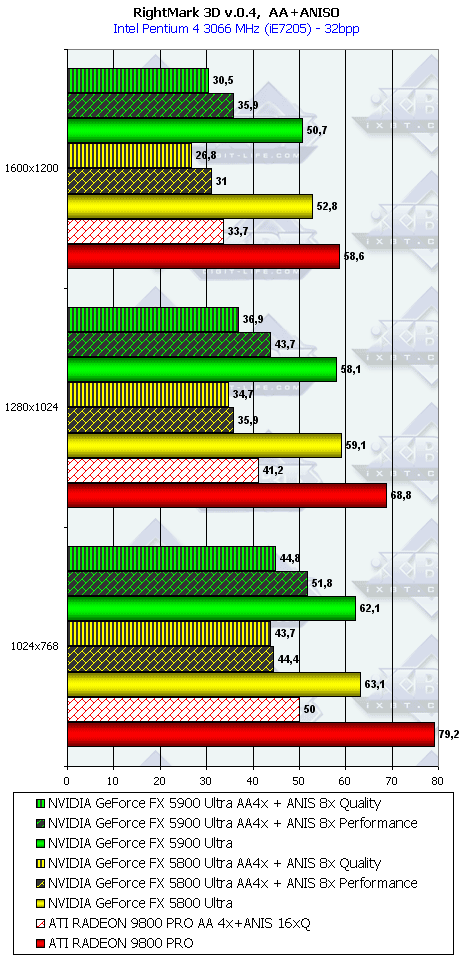

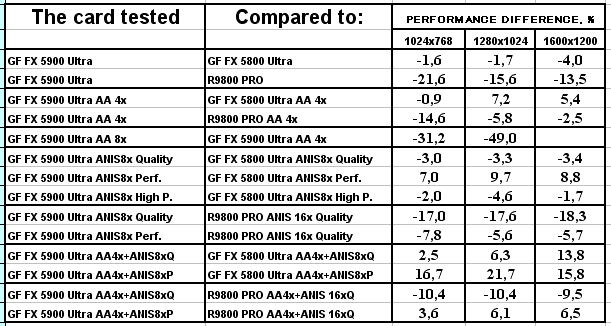

NVIDIA's promise to outscore the RADEON 9800 PRO by 50-80% in the modes with AA and anisotropy has become a reality. The 256bit bus helps the NV35 succeed in AA. The anisotropy is good as well: compared with the NV30, the NV35 has lower frequencies and yet higher speed. Remember the review by Aleksei Nikolaichuk.

Serious Sam: The Second Encounter, Grand Cathedral

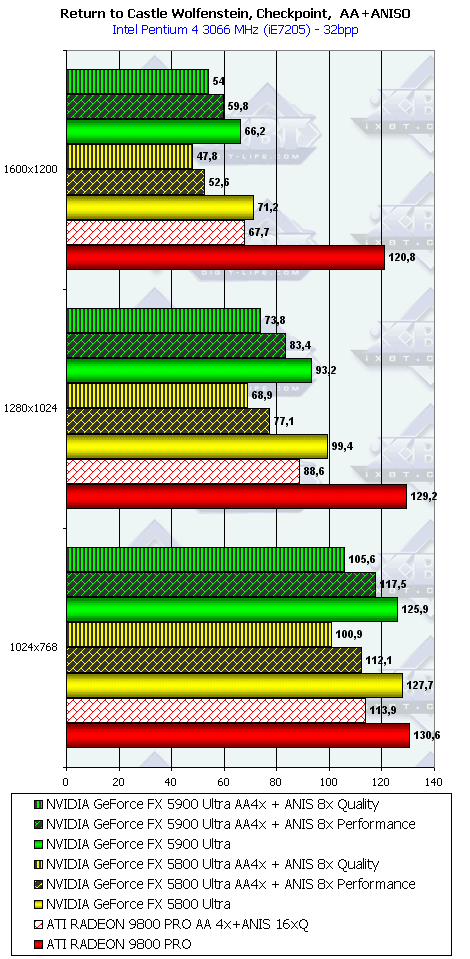

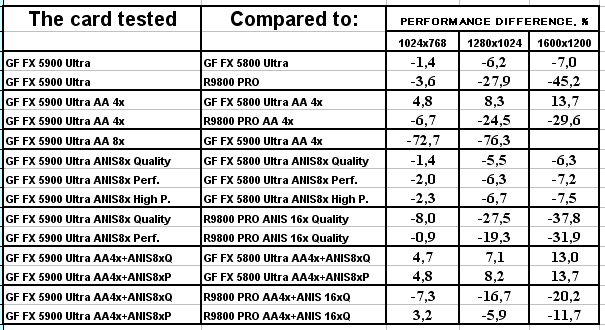

ATI optimized its drivers long ago, but NVIDIA ignores this popular benchmark for some reason. The NV35 loses to the RADEON 9800 PRO in the modes without AA and anisotropy (except 1024x768), and even AA can't help the NV35. Only the fast anisotropy can serve as a lifebuoy.

Return to Castle Wolfenstein (Multiplayer), Checkpoint

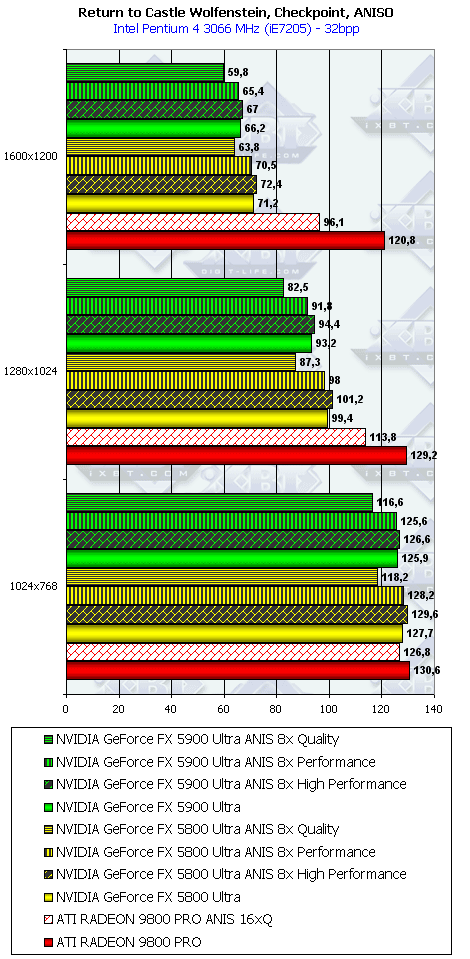

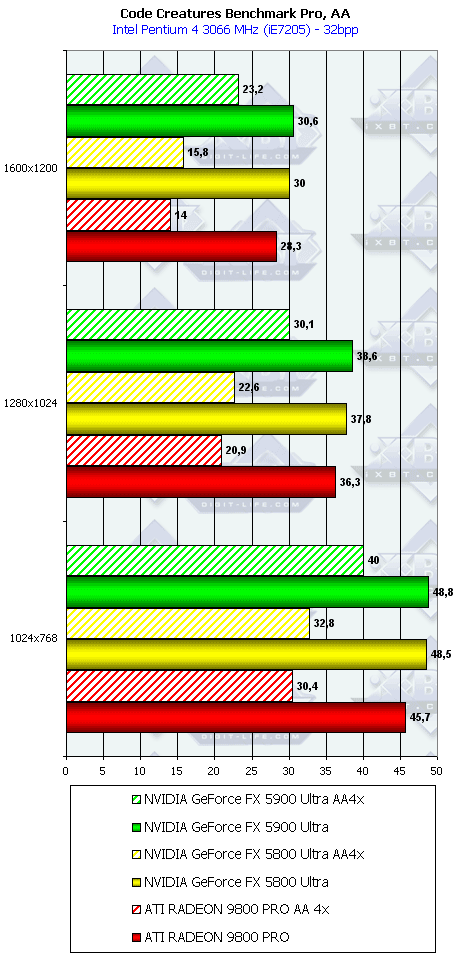

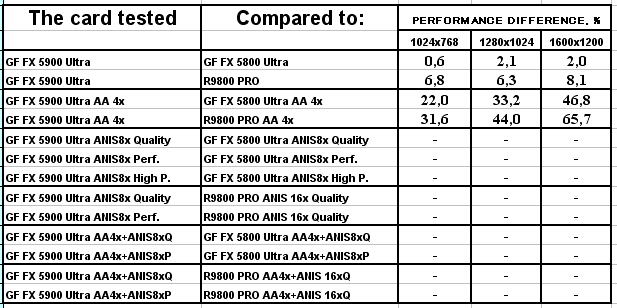

After driver version 42.82 the performance dropped considerably, already on the NV30. NVIDIA has done nothing so far to cure it! The NV30 could have had over 120 fps in 1600x1200, and now it has only 70! That's not just 8-10 fps! Obviously, the NV30 and NV35 work incorrectly in this game: the driver wrongly configures the rendering architecture of such a flexible processor. It's strange, given that this game was developed on the Quake3 engine together with id Software, a company for whose products NVIDIA's programmers have long been optimizing the drivers.

Code Creatures

Unfortunately, the anisotropic filtering of the NV30/NV35 does not work in this test, so we could not test these modes or the combined modes with AA and anisotropy. This is also NVIDIA's fault, as in the case of The Elder Scrolls III: Morrowind, where AA was not supported (only the 4xS mode worked!).

Unreal Tournament 2003 DEMO

Here everything is OK, though the advantage of the NV35 over its rival is not great.

AquaMark

In this test the NV35 works slower in the anisotropy mode than even the NV30 (probably the drivers were not optimized for this test), the advantage with AA is not great, and the overall lead of the NV35 is negligible.

RightMark 3D

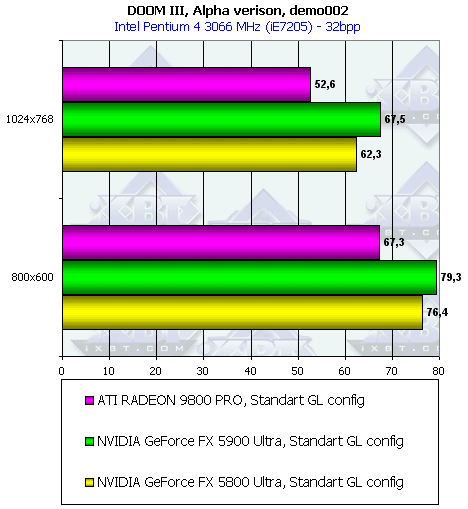

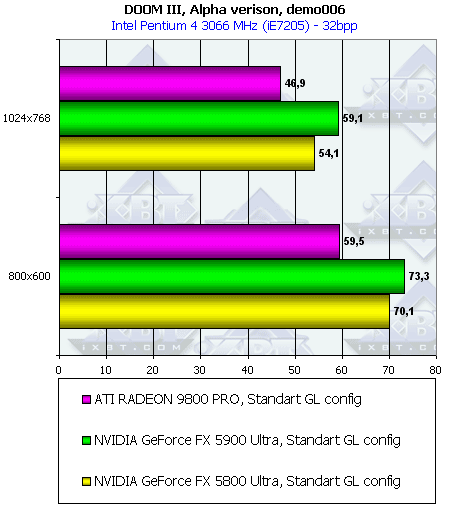

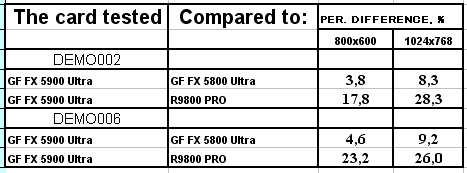

The drivers were not optimized for this test because the benchmark itself was not available on the Net. The pixel shaders are very important here, and the NV35 loses to the NV30 (the core clock speeds are again the determining factor).

DOOM III Alpha

Since this test utilizes all the innovations of the NV3x, in particular the accelerated shadow processing, the results are obvious. The great amount of data pumped through the memory requires high throughput, which is why the 256bit bus has come in handy.

3D graphics quality

Since the NV35 is actually a copy of the NV30 with some optimizations, they do not differ in games support. That is why I'm not going to show again the screenshots that demonstrate anisotropy quality in the IntelliSample modes and at different AA levels. NVIDIA's and ATI's AA and anisotropy modes were already examined closely in the GeForce FX 5800 Ultra Review (theory, tests) and in the GeForce FX 5600/5200 Review (practical anisotropy comparison). In the RADEON 9800 PRO review you can find some interesting facts as well. Now we will focus on some peculiarities that appeared with the drivers v44.03.

First of all, the trilinear filtering has new presets. If you remember, the Quality, Performance and High Performance modes differ in the degree of trilinear filtering optimization:

If the scene consists of a great many curved surfaces and colorful textures, there is almost no difference between the IntelliSample modes. If so, why pay more by setting the Quality mode?

Conclusion

So, what can we say about the fastest game accelerator, the NVIDIA GeForce FX 5900 Ultra?

Andrey Vorobiev (anvakams@ixbt.com)

Aleksei Barkovoi (clootie@ixbt.com)
