
Ask an average gamer which GPU feature matters most for image quality in modern games, and the answer will most probably be anisotropic filtering. Unfortunately, anisotropic filtering, a high-quality but resource-hungry feature (because of its universal, direct implementation), is the weakest point of the NV25 graphics processors. No wonder the NV25's successor, the NV30, was expected to bring fast anisotropic filtering that would keep frame rates playable at high resolutions. NVIDIA understood this. To eliminate that weak point and prevent the severe performance drop caused by anisotropic filtering, the company introduced the Intellisample technology, announced together with the NV30 family.

To all appearances, the term Intellisample comes from Intelligent Sampling: NVIDIA hints at scene analysis technologies that reduce the cost of anisotropic filtering by simplifying its algorithms where image quality does not suffer much. As you may know, the OpenGL driver already had, as early as v28.90, scene geometry analysis algorithms that could texture certain polygons without anisotropy and gave a considerable performance gain in multitexturing mode. By determining the texture stage indices and applying the algorithm only to stages with nonzero indices, NVIDIA made these polygon culling algorithms unnoticeable in image quality tests, because it was mostly secondary textures (such as light maps), which do not determine image sharpness, that were simplified. The OpenGL driver enabled this algorithm in the Balanced and Aggressive modes when anisotropic filtering was forced, but it was the only component of the Intellisample technology available to the whole GeForce family in OpenGL.
Today we are not going to touch upon the code specific to NV3x processors (optimizations of trilinear filtering, LOD bias etc.); instead we will deal with the anisotropy optimization algorithms common to the whole GeForce family. The recently released Detonator 43.45 and 43.51 drivers raised the level of anisotropic filtering in OpenGL and added support for radically new optimization algorithms for all GPUs of the GeForce family. These optimizations are today's subject.

So, with the latest Detonator drivers both image quality and speed have noticeably increased with anisotropic filtering enabled in OpenGL. The names of the Intellisample modes have changed several times (first Application / Balanced / Aggressive became Application / Quality / Performance, then Quality / Balanced / Performance), which suggests that the Intellisample modes are a high priority for the guys at NVIDIA. However, no one has yet given a clear answer as to which optimization methods in the filtering algorithms of the latest drivers boost performance so much. Let's try to answer this question today.

To find out, I opened the OpenGL driver to see how it interprets the OGL_QualityEnhancements key that controls the Intellisample modes. Depending on the Intellisample mode selected, the driver uses one of four pregenerated anisotropy level reduction tables:

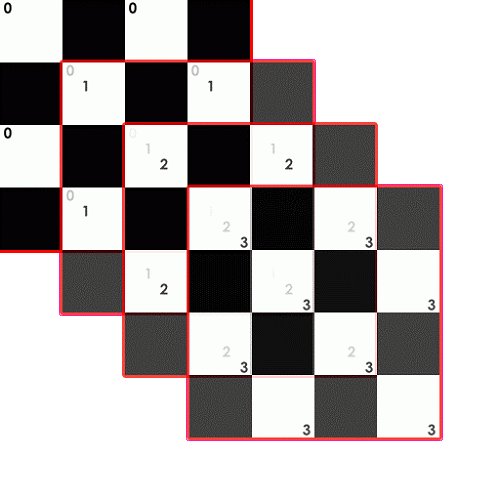

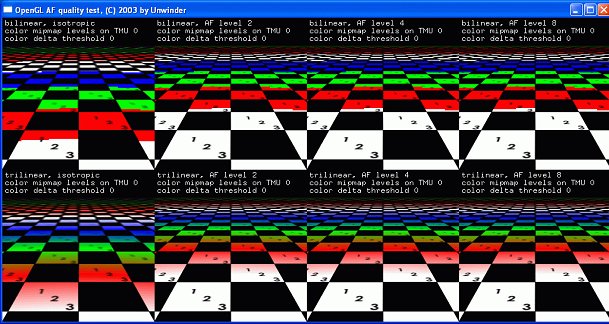

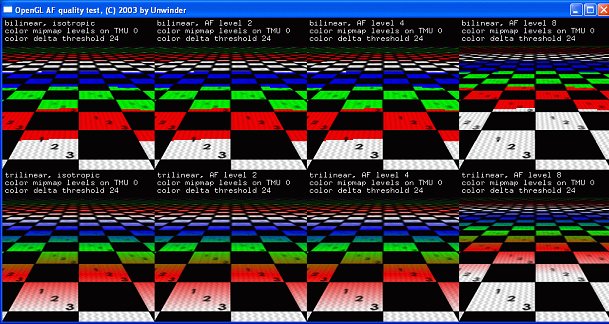

As you can see, the top-quality Level 8 can be replaced with Level 1 or Level 2 in the Performance mode and with Level 2 or Level 4 in the Balanced mode. Before we knew the criterion for selecting a table index, we assumed that the driver used the texture stage index as such and simply lowered the anisotropy level at the first and second texture stages. To check this we developed OpenGL anisotropic filtering quality test v1.0 (160 KB), which applies up to 4 textures per pass to a single surface in multitexturing mode.

The test application also lets you select a current texture stage and bind to it a uniform white texture with colored mipmap levels (alternating white, red, green and blue). Selecting the current stage with such a texture, together with simultaneous display of the same scene under different combinations of bilinear (isotropic), anisotropic and trilinear filtering, is meant to prove or disprove that the anisotropy level is lowered at particular texture stages.

Fortunately, the anisotropic filtering quality does not depend on the texture stage index. Moreover, the anisotropy tables were not used at all until we forced anisotropy in the driver settings. After that the result came closer to the expected one, though it still did not depend on the stage.

[Screenshots: Quality mode, Balanced mode, Performance mode]

So, the screenshots show that the table with index 2 is used in our application:

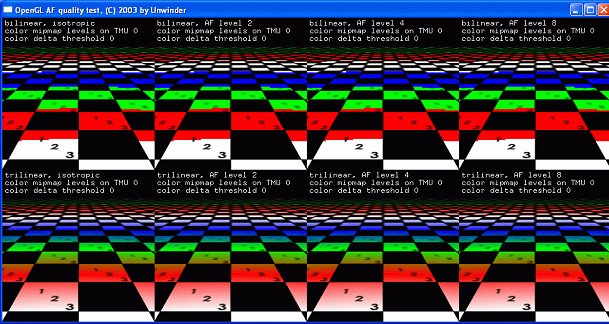

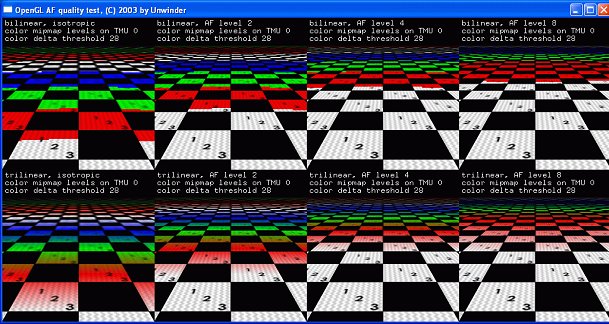

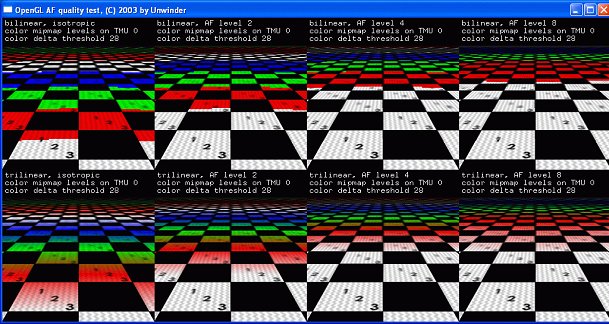

But how is a table index selected? It does not depend on the texture stage. Moreover, the OpenGL driver chose table 2 for our application, and the choice did not change with the rotation angle or size of the scene, i.e. it does not depend on geometry either. To find out, we had to analyze the OpenGL code more carefully.

So, what makes the driver use the coarsest table for our application? The peculiarity of our test is that it uses a uniform texture. It turns out that the maximum anisotropy level now depends on the contents of the texture being filtered. Well, the programmers are right: why make the GPU average the colors of 32 texels to form the final color if all the texel colors are the same and averaging the 4 texels of standard bilinear sampling gives the same result? Now the Intellisample algorithms in OpenGL can indeed be considered intelligent: the driver calculates a sharpness degree for each texture when the texture is created, and this sharpness degree is used as an index into the anisotropy optimization tables.

To demonstrate how the Intellisample algorithms analyze a texture's sharpness and select a table accordingly, we added a texture sharpness adjustment feature to our test. The colored mipmap levels of the current texture are now filled with a pattern that changes according to the following formula:

color = 255 - threshold + threshold * sin(tx + cos(ty))

Here, color is the texel color, tx and ty are its non-normalized texture coordinates, and threshold determines the maximum difference between the colors of the texture's texels, i.e. its sharpness level. The threshold can be changed with the [w] and [s] keys, and it determines which anisotropy optimization table the OpenGL driver picks.
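The test pattern formula can be tried out with a short Python sketch. It only reproduces the arithmetic of the formula; the function names, the texture size and the use of the max-min color spread as a stand-in for "sharpness" are assumptions made for illustration, since the article does not describe the metric the driver actually computes.

```python
import math


def pattern_color(tx, ty, threshold):
    """Texel intensity according to the article's pattern formula."""
    return 255 - threshold + threshold * math.sin(tx + math.cos(ty))


def make_pattern(size, threshold):
    """Build a size x size grid of intensities (hypothetical helper).

    tx and ty are integer, non-normalized texel coordinates.
    """
    return [[pattern_color(tx, ty, threshold) for tx in range(size)]
            for ty in range(size)]


def color_spread(texels):
    """Max-min intensity difference, used here as an ASSUMED sharpness proxy."""
    flat = [c for row in texels for c in row]
    return max(flat) - min(flat)


# threshold = 0 gives the uniform white texture of the first test version
uniform = make_pattern(64, 0)
# a larger threshold widens the range to [255 - 2*threshold, 255]
sharp = make_pattern(64, 64)
```

Because the sine term stays within [-1, 1], the intensities stay within [255 - 2*threshold, 255], so the spread can never exceed twice the threshold, and the threshold has to stay at or below 127.5 for the values to remain non-negative.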
Now have a look at the test results obtained with different threshold values.

[Screenshots: Balanced mode, nonuniform texture of average sharpness; Balanced mode, nonuniform texture of high sharpness; Performance mode, nonuniform texture of average sharpness; Performance mode, nonuniform texture of high sharpness]

As you can see, when the texture sharpness crosses a certain threshold, the driver switches to table 3 and then to table 4, which disables the optimization altogether.

So, now we know how the anisotropy optimization methods that appeared in OpenGL with one of the latest Detonator versions work. Using sharpness analysis to lower the anisotropy level for uniform textures is a very original solution, but I'd like to draw your attention to two aspects that can nullify the effect.

First, the anisotropy optimization algorithms based on texture sharpness analysis were not enabled until we forced anisotropic filtering in the driver settings. We studied the OpenGL ICD code and concluded that the driver calculates texture sharpness at texture creation only if the anisotropy level is set to a value greater than 1 before the texture is created. This approach guarantees that the sharpness analysis works when anisotropy is forced in the driver, but not when anisotropy is controlled by the OpenGL application itself. To force the sharpness analysis in our application, we added code that sets the anisotropy level to 2 before the texture is created with glTexImage2D. But I can't promise that all developers of OpenGL applications will do the same, and it is possible that the sharpness analysis will not work in some applications that control anisotropy themselves.

Second, the sharpness analysis algorithms do not support compressed textures. If an application uses such textures, the OpenGL driver will not enable these algorithms.
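The conditions described above can be summarized as a small decision model in Python. This is only a sketch of the behavior observed in the tests, not the driver's code: the function name, the numeric sharpness scale and both cut-off constants are hypothetical, and `None` is used here to mean "sharpness analysis was skipped, so no table-based reduction is applied".

```python
from typing import Optional

# HYPOTHETICAL cut-offs on an assumed 0..255 sharpness scale; the article only
# says that the driver switches tables once sharpness crosses "a certain threshold".
MEDIUM_SHARPNESS = 64
HIGH_SHARPNESS = 160


def select_table(sharpness: float,
                 aniso_at_creation: float,
                 compressed: bool) -> Optional[int]:
    """Model which anisotropy reduction table would be picked for a texture."""
    # Sharpness is analyzed only if anisotropy > 1 was set before the texture
    # was created, and never for compressed textures.
    if aniso_at_creation <= 1 or compressed:
        return None
    if sharpness >= HIGH_SHARPNESS:
        return 4  # table 4: optimization effectively disabled
    if sharpness >= MEDIUM_SHARPNESS:
        return 3
    return 2      # uniform texture: table 2, as observed in the test


# Uniform texture created after anisotropy was set to 2
table_uniform = select_table(0, 2, False)
# Same texture, but anisotropy still at 1 when it was created
table_late = select_table(0, 1, False)
# Same texture stored as DXT1
table_dxt1 = select_table(0, 2, True)
```

In a real OpenGL application the workaround presumably amounts to setting the texture's maximum anisotropy (for example `GL_TEXTURE_MAX_ANISOTROPY_EXT` via `glTexParameterf`) before the `glTexImage2D` call; the article does not say which call the test application actually uses.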
To demonstrate this limitation of compressed textures, we added texture compression support to our application for the GL_COMPRESSED_RGB_S3TC_DXT1_EXT format (command line option -tc).

[Screenshot: Performance mode, uniform DXT1 texture]

It is clear that when the texture is compressed, its sharpness has no effect on the anisotropy quality, and the driver does not activate the optimization. Fortunately, these restrictions are easy to work around once we know their nature.

Now that we know how the algorithms work and what problems may occur, let's have a look at real games. Here is the testbed:

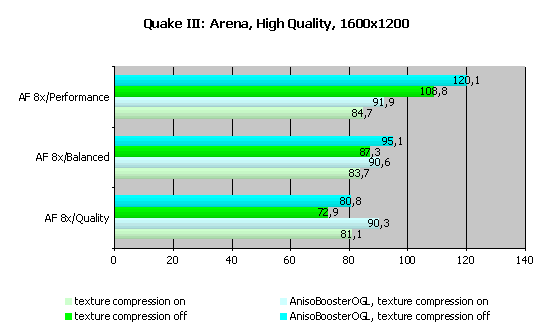

The testbed ran Windows XP Professional and Detonator 43.51.

Quake III: Arena is one of the applications that do not take advantage of the texture sharpness analysis optimization, because texture compression is enabled in it by default. The test results are given with texture compression enabled and disabled so that the performance gain can be estimated. Besides, you can look at the results for our AnisoBoosterOGL script included in the RivaTuner utility, which makes polygon culling more effective by locking the texture stage index check: performance grows because the polygon culling algorithms are then applied to texture stage zero as well.

The Intellisample results are almost identical when texture compression is used (81.1 FPS in the Quality mode against 84.7 FPS in the Performance mode, a difference of only about 4.4%), whereas with compression disabled the difference reaches almost 50% (72.9 FPS in the Quality mode against 108.8 FPS in the Performance mode). Moreover, the AnisoBoosterOGL script raises performance by a further 10%, so we now have 120 FPS at most. If you remember, when we ran the same tests a short while ago under the same conditions but without the polygon culling algorithms and texture sharpness analysis, we got only 50 FPS. I hope that the Intellisample algorithms will keep developing rapidly and without strong quality degradation.

Conclusion

Undoubtedly, the anisotropic filtering optimization algorithms based on texture sharpness analysis are praiseworthy. But they are supported only in the OpenGL driver. I hope that the situation will soon change and Direct3D will get them as well. Besides, I hope NVIDIA will integrate the AnisoBoosterOGL script into the driver in the near future, relieving us of the need to support it ourselves. The 10% gain is not that bad after all.
Taking into account how the anisotropy optimization algorithms based on texture sharpness analysis work, I can give the following recommendation to those who want maximum performance in OpenGL applications with anisotropy enabled: switch off texture compression in the Balanced and Performance anisotropy modes. The OpenGL ICD cannot analyze compressed data, so if compression is enabled at the application level, the texture analysis algorithms and therefore the optimizations will not work. Note that the driver applies texture compression by default in the Performance mode, so a shortage of video memory caused by disabling compression in the application should not worry you. Moreover, the OpenGL driver allows the forced texture compression to be controlled, and if forced compression in the Performance mode irritates you, or you want to control this function independently of the Intellisample settings, do not miss the next version of RivaTuner.

Aleksei Nikolaichuk aka Unwinder (unwinder@ixbt.com)

