
Attention! Before reading this article, we recommend that you first read our Parhelia-512 preview published in mid-May.

The Canadian company Matrox Graphics (hereafter Matrox), a subdivision of Matrox Electronic Systems Ltd, has unexpectedly released a unique product. What makes it unique? You can find more details in the preview mentioned above; here I will only say that the main peculiarity of this solution is that the company spent several years (!) developing it. After 1999 we heard almost nothing from the Canadian company (the G450 and G550 were just weak attempts to modify the G400, since they brought neither considerable improvements in capabilities nor any growth in 3D speed). There were only rumors that a G800 and a G1000 were to appear. In fact, even after the news that Matrox was about to enter the market with something new, the product was still referred to as the G1000. So now we have it: the Parhelia-512. I repeat that the theoretical aspects and peculiarities of this product are best studied in our review of the chip.

Line of products

The company promised to release several cards based on the Parhelia-512 with different memory sizes and, probably, frequencies. In addition, the already available Matrox Parhelia 128 MBytes comes in two revisions:

Let's look at the differences between the characteristics of the real product and what was announced earlier (in parentheses we indicate whether they correspond to the specs):

Theory

Given the remarks above, I should warn you that you had better read the Parhelia-512 preview if you haven't done so yet, because here we won't analyze the card's real capabilities but will just give a comparison table.

Here is a list of OpenGL extensions supported in the current drivers:

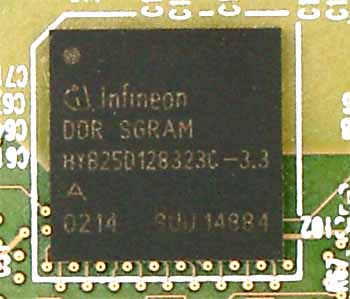

Compared with previous Matrox products, this is a super-revolution! It makes no sense even to list the specs of the G400/450/550. But compared with the current solutions from ATI (RADEON 8500) and NVIDIA (GeForce4 Ti), the Parhelia looks quite modest. Although 16 texture units place this chip on a higher level, our tests will show that almost no applications can work in the "4 pipelines x 4 texture units" multitexturing mode.

Such rich texture-unit capabilities allow for fast bilinear and anisotropic filtering, but only in theory. Each texture unit can fetch 4 samples per clock to form a bilinear-filtered texel, which is standard. Four texture units give 16 samples, and four pipelines give 64. That is why Matrox's marketers quote 64 texture samples per clock. Thus each pipeline gets either 4 bilinear-filtered textures, or 2 trilinear- or anisotropic-filtered textures (8 samples each), or 1 anisotropic-filtered texture (16 samples) per clock. For old two-texture applications (which amount to 99.9%), anisotropic filtering based on 8 samples should therefore cost no speed. But in practice it isn't so, as will be shown later.

The Parhelia has, in fact, only one function from the DirectX 9.0 suite: Displacement Mapping, a technology that makes relief surfaces more realistic and detailed. Unlike typical relief techniques, which are applied to triangle surfaces during shading and do not affect the visibility of particular pixels (bump maps model only a pixel's illumination, not its real position), displacement maps create geometrically correct relief, so the line where two such surfaces intersect does not look like an ideal straight line. Here is a striking example:

This demo program uses all 16 texture units and shows the effect of displacement maps. The cards will be bundled with a luxurious demo program that simulates part of the wildlife of a reef. There you can also notice the effect of displacement mapping.
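
The texture-sample arithmetic quoted in this section (4 samples per unit, 4 units per pipeline, 4 pipelines) can be checked with a few lines of Python. This is only an illustrative sketch: the constant and function names are ours, and it models the theoretical budget, not what the chip achieves in the tests.

```python
# Theoretical texture-sampling budget per clock, using the article's figures.
PIPELINES = 4            # pixel pipelines
UNITS_PER_PIPELINE = 4   # texture units on each pipeline
SAMPLES_PER_UNIT = 4     # samples one unit fetches per clock (one bilinear texel)


def samples_per_clock():
    """Total texture samples the whole chip can fetch in one clock."""
    return PIPELINES * UNITS_PER_PIPELINE * SAMPLES_PER_UNIT


def textures_per_pipeline(samples_per_texture):
    """How many textures one pipeline can filter per clock at a given sample cost."""
    budget = UNITS_PER_PIPELINE * SAMPLES_PER_UNIT  # 16 samples per pipeline
    return budget // samples_per_texture


if __name__ == "__main__":
    print("samples per clock:", samples_per_clock())
    for name, cost in [("bilinear", 4),
                       ("trilinear or 8-sample anisotropic", 8),
                       ("16-sample anisotropic", 16)]:
        print(name, "->", textures_per_pipeline(cost), "texture(s) per pipeline")
```

Under these assumptions a two-texture application with 8-sample anisotropy fits exactly into one clock, which is where the expectation of "free" anisotropy comes from.
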
I must say that Vertex Shaders 2.0 should be expected only with DirectX 9.0. It is possible that the 4 vertex shader units will start working at full capacity only under DX 9.0. So, let's start examining the card itself.

Card

Remember that we are examining not a preproduction sample but a production card based on the Matrox Parhelia-512. The card has an AGP 2x/4x interface and 128 MBytes of DDR SDRAM (8 chips located on the front side of the PCB).

The memory chips are made by Infineon and come in a BGA package. Their 3.3 ns access time corresponds to 300 (600) MHz, but the memory runs at 275 (550) MHz.

Memory chips in the new BGA package appeared not long ago, but they have already become quite popular with manufacturers (some companies, such as ASUSTeK and SUMA, produce cards of their own design with such chips). This package provides better cooling for the chip, so the memory does not overheat at its rated frequency (though the memory on the Parhelia 128 MBytes works below the frequency rated for 3.3 ns chips). Here are the frequencies of the production cards (core/memory):

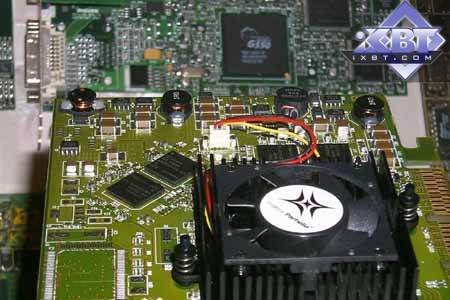

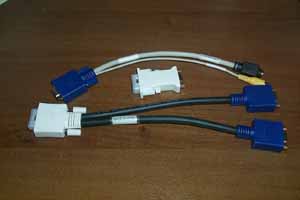

As you can see, Matrox follows ATI in setting different frequencies for cards based on the same GPU but sold in different packages (OEM or Retail), while the chipset keeps a single name. But since Matrox produces all the cards itself, there should be no fraud, though "dealers" who would like to profit from this won't waste their time.

The card has an original design, and the 256-bit high-speed bus has clearly left its mark. First of all, there is a shield protecting against interference:

There are also a lot of components that keep the card stable. The developers have done their best to prevent any interference that such a complicated layout could cause (as you remember, the first high-speed cards on the GeForce2 Ultra looked terrible: ripples were clearly noticeable on the screen).

The card has two DVI outputs, so you need an adapter to connect ordinary CRT monitors. Very careful attention is paid to the quality of the output filters (probably Matrox is afraid that third parties could simplify the design and therefore doesn't hand production over to them). On the whole, the PCB is quite expensive, which will certainly affect the production cost.

The memory chips are located around the GPU, which looks unusual but is logical (it shortens the distance between them and the chip). By the way, look at the GPU's size:

This is a real giant compared with others! It also looks unusual: the core is entirely covered with a metal lid. The Parhelia-512 heats up considerably even at 220 MHz, so I think it will be almost impossible to overclock. However, the cooler has an ordinary shape (probably the engineers fought overheating for quite a long time by reducing the chip's frequency until they found a compromise between a still acceptable speed and an inexpensive cooler).

The DVI connectors appear to be universal rather than pure DVI, because each serves several functions.
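
The memory figures quoted for this card (3.3 ns chips, a 275 MHz DDR clock, a 256-bit bus) can be tied together with some simple arithmetic. The sketch below is ours and rests on two assumptions: that the rated clock is the reciprocal of the access time, and that DDR moves two transfers per clock across the full bus width. The resulting bandwidth is a theoretical peak, not a measured value.

```python
def rated_clock_mhz(access_time_ns):
    """Rated clock implied by a chip's access time (1 / period)."""
    return 1000.0 / access_time_ns


def ddr_bandwidth_gb_s(clock_mhz, bus_width_bits):
    """Peak DDR bandwidth in GB/s (10^9 bytes), two transfers per clock."""
    transfers_per_second = clock_mhz * 1e6 * 2
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


if __name__ == "__main__":
    print("rated clock for 3.3 ns chips: %.0f MHz" % rated_clock_mhz(3.3))
    print("peak bandwidth at 275 MHz, 256-bit: %.1f GB/s"
          % ddr_bandwidth_gb_s(275, 256))
```

With these inputs the rated clock comes out at about 303 MHz, which the article rounds to 300 (600) MHz, and the actual 275 (550) MHz clock gives a peak of 17.6 GB/s.
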
Let's take a look at the accessories the card is bundled with:

Let's try to connect three monitors:

After activating the respective mode in the drivers (the settings are covered later), the desktop extends across three monitors:

It looks really unusual :-) Especially when installing something :-)

Unfortunately, we found out too late how to enable the similar Surround Games mode in Quake3 (we had already returned the card by then), and we failed to find out how to do it for UT2003. That is why we tested only one strategy game, in which each monitor showed the view from a different camera:

Here is the above-mentioned Matrox demo, which shows the life of a coral reef:

It looks undoubtedly impressive!

The TV-out works excellently! Apart from the driver settings, which include everything necessary for optimizing the TV image, the Parhelia offers the DVDMax technology, which displays movies on a TV in full-screen mode and thus leaves the monitor's desktop free. Everyone else has a long way to go to reach the quality and capabilities of Matrox's TV-out! This applies first of all to NVIDIA and its nView, which still has some bugs in the drivers. Here is the box the card will ship in:

Overclocking

When we started testing the card at its rated frequencies, we concluded that with such heavy heating overclocking would be almost impossible. It turned out to be completely impossible, because the latest version (3.20) of PowerStrip can't yet work with the Parhelia.

Test system and drivers

Testbed:

The test system was paired with ViewSonic P810 (21") and ViewSonic P817 (21") monitors. In the tests we used Matrox drivers version 2.26. VSync was off. For comparison we used the following cards:

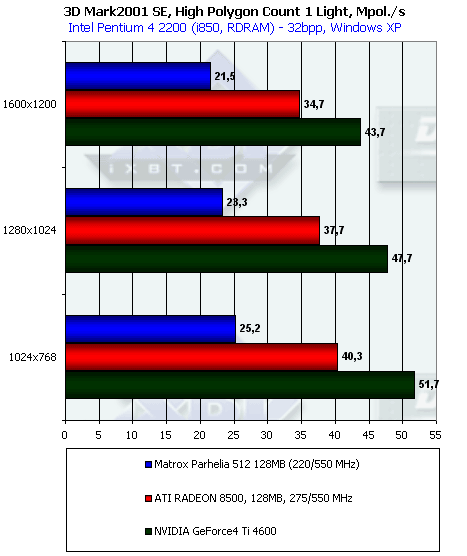

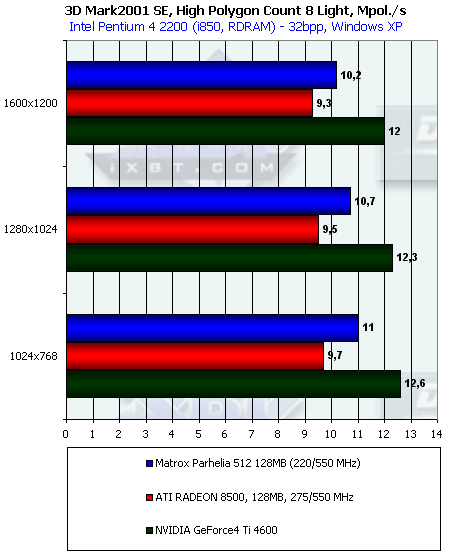

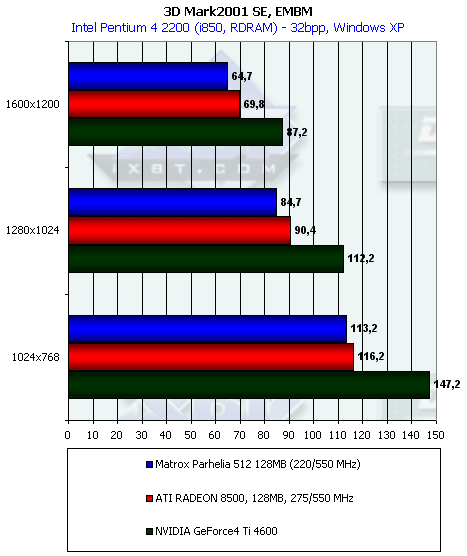

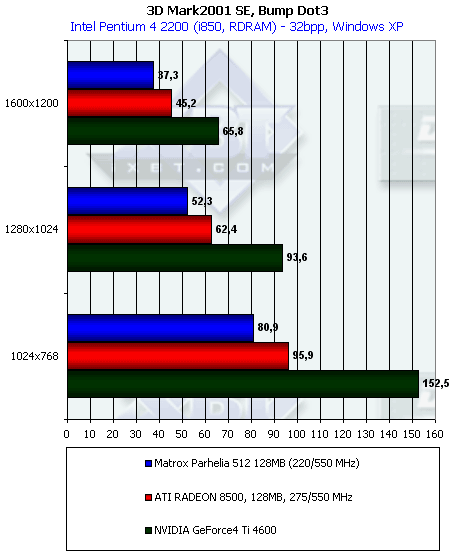

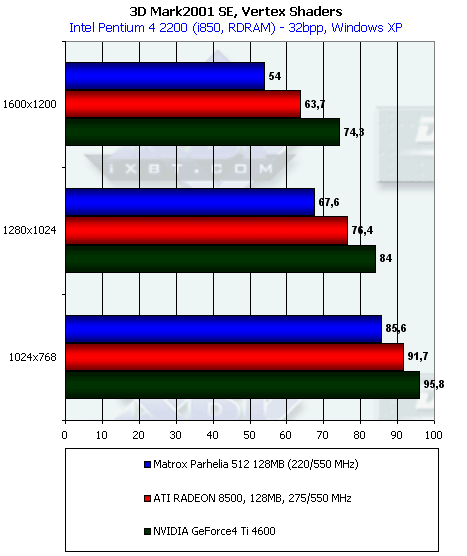

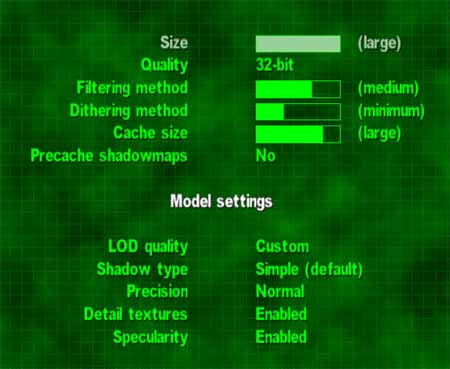

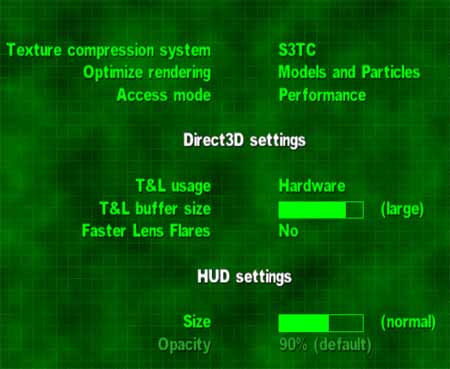

Driver settings

You can download this animated GIF file (850K) to see a summary of the driver settings. We will examine all the settings in turn. Note:

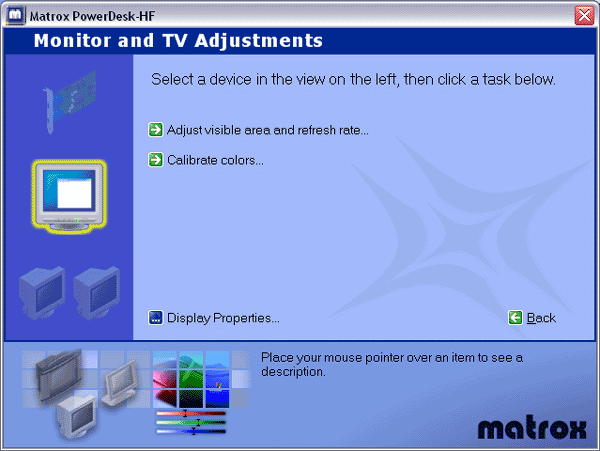

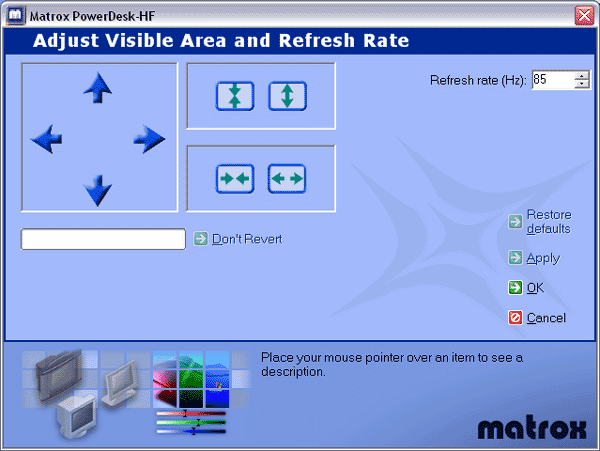

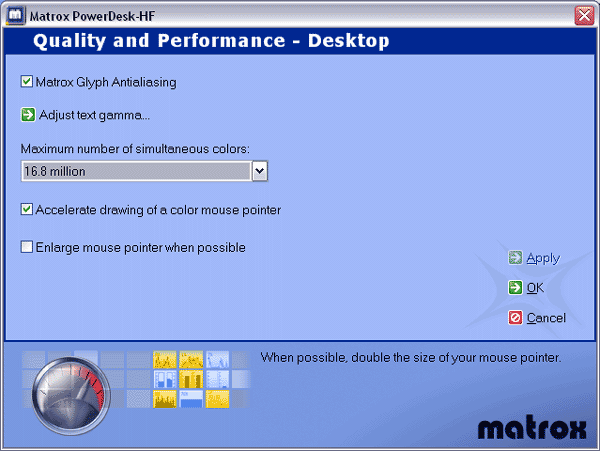

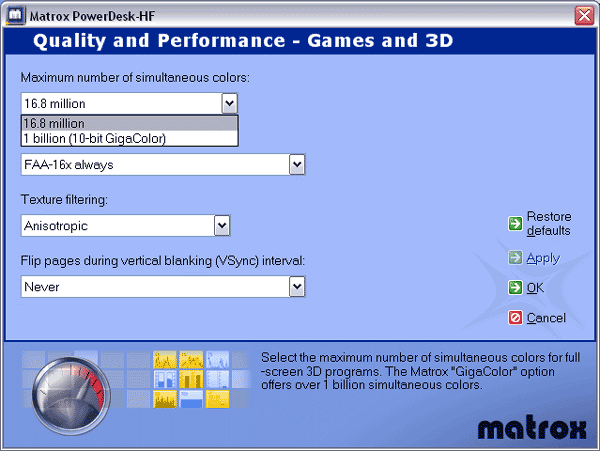

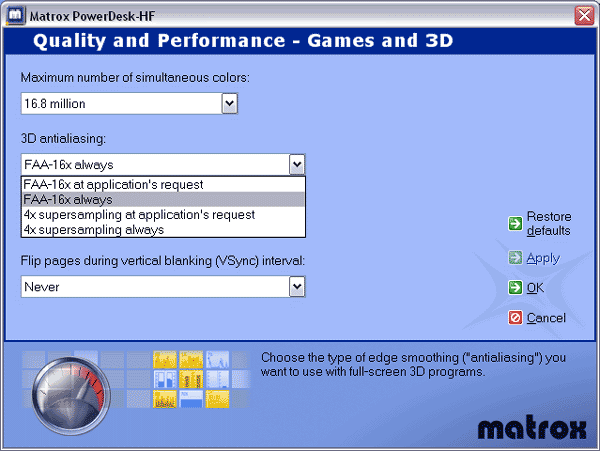

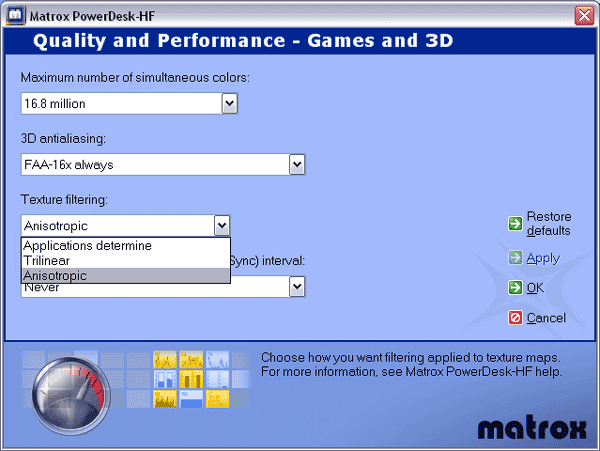

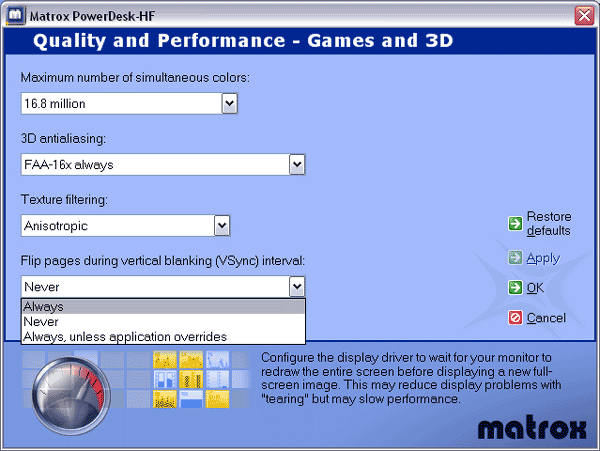

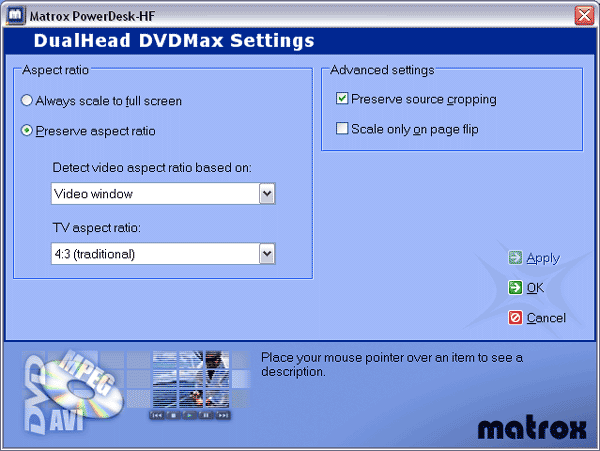

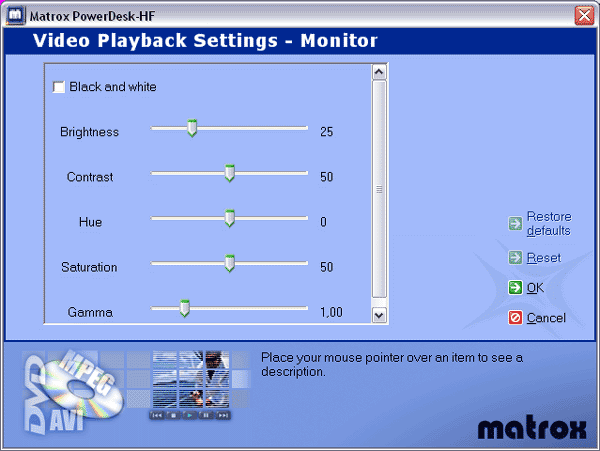

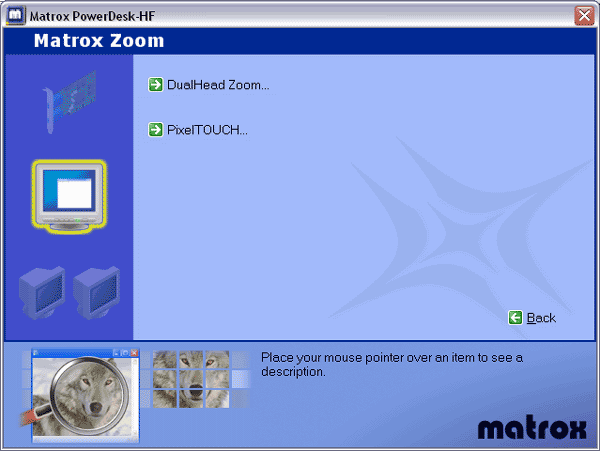

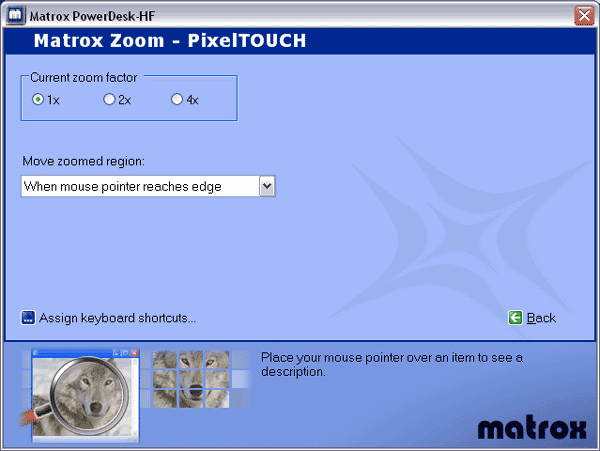

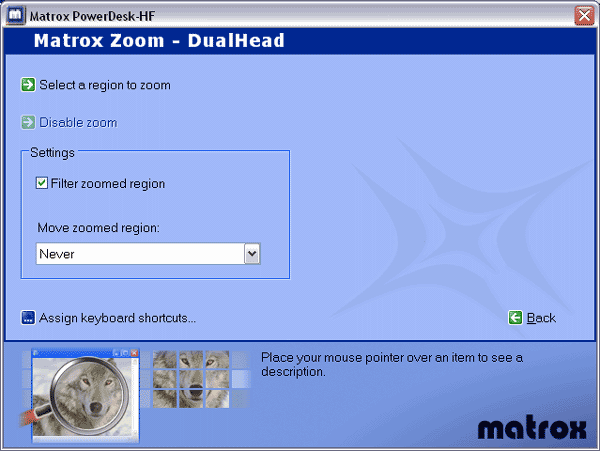

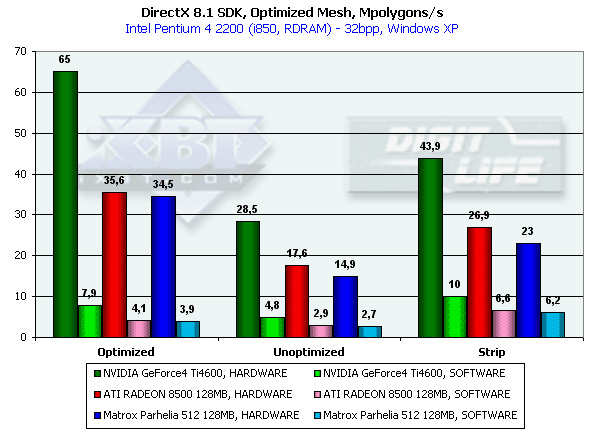

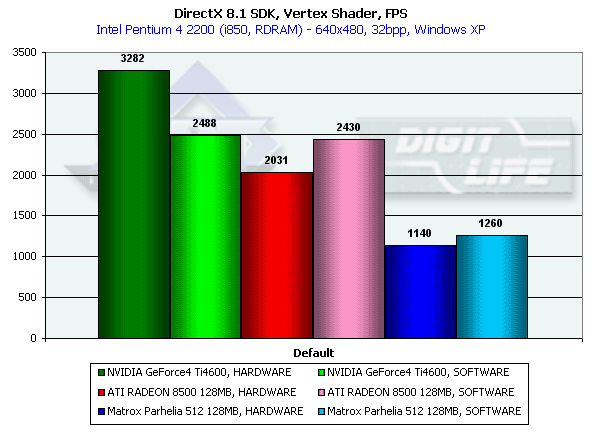

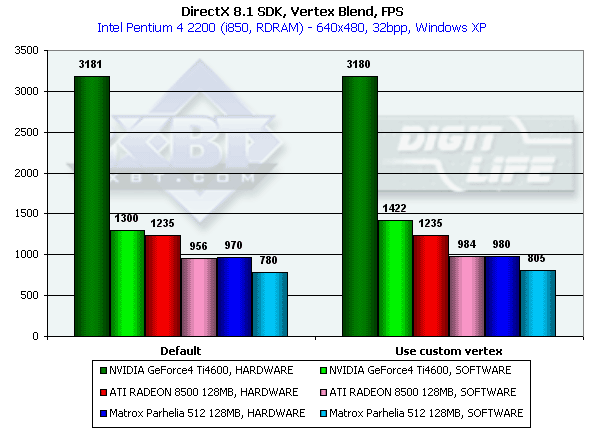

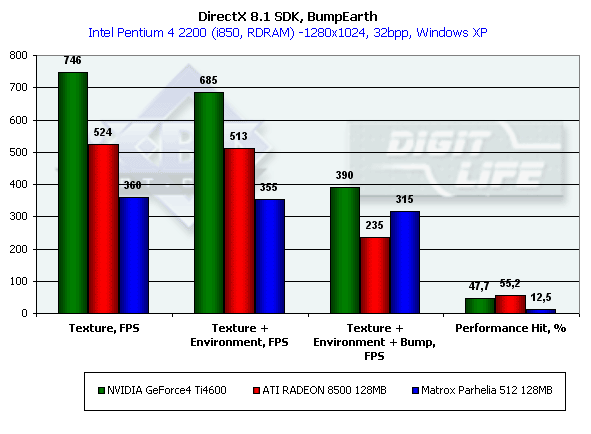

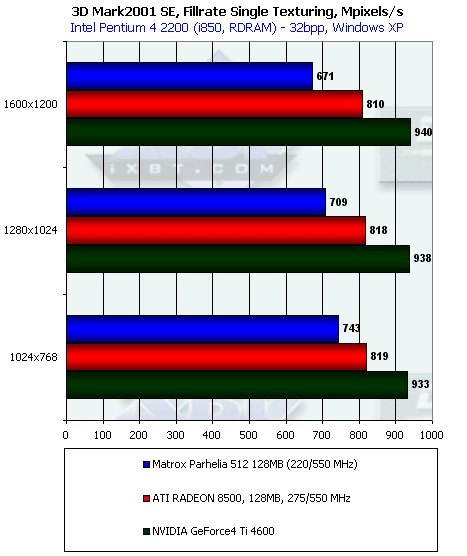

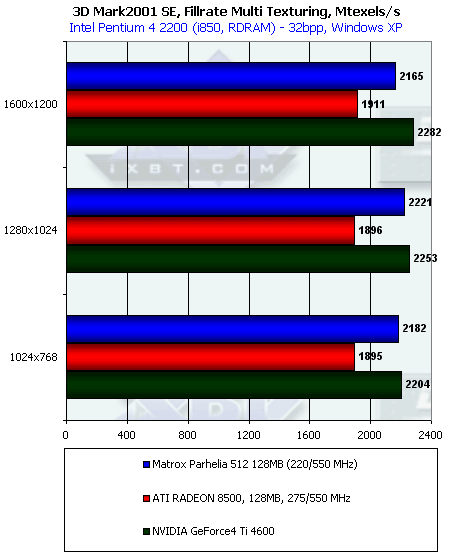

This is the main menu, which offers a rich set of settings and adjustments.

Here you can choose a display mode.

Here you can adjust colors, geometry, etc. for monitors or a TV. On the left you can select either a monitor or a TV.

Here are the geometry and display settings. Interestingly, there are more of them for the TV: you can change its dimensions in 8 directions (including diagonals).

Here are the 2D settings. We can enable Matrox's Glyph antialiasing technology and select the card's color mode (32-bit as 24+8, or Gigacolor as 30+2). What are the advantages of the 10+10+10 (30-bit) mode over 8+8+8 (24-bit) in 2D? None at present; this is a matter for the future. For now all "old" 24-bit images will look identical, but some editors already work with colors at higher precision. And in creating 3D images, which usually contain a great many very fine color gradations, Matrox's Gigacolor will help a lot. Of course, such 30-bit images can be viewed properly only with the Parhelia, though future products from ATI or NVIDIA may also offer modes with more bits per channel.

But there is also the other side of the coin, and it concerns 3D. The additional 8 bits of the alpha channel were almost never used when 16-bit textures reigned, so it was justified to sacrifice alpha precision to increase the rendering accuracy of the basic graphics (textures). But that was then. Today many developers use a 32-bit format, and those 8 bits of alpha can be useful. Besides, does it make sense to use the 10+10+10 mode to improve graphics quality (which would require either purpose-made 30-bit textures or 30-bit rather than 24-bit precision in effect calculations)? I doubt it. This is not a standard, and it's well known that things become standard only if most accelerators support them.
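
The trade-off between the 24+8 and 30+2 modes is easy to see if both are packed into a 32-bit word. The sketch below is only an illustration: the function names and the channel order (alpha in the top bits, then red, green, blue) are our assumptions, not the Parhelia's documented memory layout.

```python
def _check(value, bits):
    """Reject values that do not fit into the given number of bits."""
    if not 0 <= value < (1 << bits):
        raise ValueError(f"{value} does not fit in {bits} bits")
    return value


def pack_rgba8888(r, g, b, a):
    """24+8 mode: three 8-bit color channels plus 8 bits of alpha."""
    return ((_check(a, 8) << 24) | (_check(r, 8) << 16)
            | (_check(g, 8) << 8) | _check(b, 8))


def pack_rgb10a2(r, g, b, a):
    """30+2 mode: three 10-bit color channels plus 2 bits of alpha."""
    return ((_check(a, 2) << 30) | (_check(r, 10) << 20)
            | (_check(g, 10) << 10) | _check(b, 10))


def levels(bits):
    """Number of distinct values a channel of the given width can hold."""
    return 1 << bits


if __name__ == "__main__":
    print("color levels per channel:", levels(8), "vs", levels(10))
    print("alpha levels:", levels(8), "vs", levels(2))
    print(hex(pack_rgb10a2(1023, 512, 0, 3)))
```

Each color channel gains a factor of four in gradations (256 to 1024 levels), while alpha shrinks from 256 levels to just 4, which is exactly the sacrifice discussed above.
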
Meanwhile, the overwhelming majority of accelerators can work only with the "old" 32-bit color. According to forecasts, ATI and NVIDIA won't bother with this kind of 32-bit color in 3D and will go straight to 64-bit or even 128-bit color. That is why Matrox is too late with this innovation, and it's unlikely that anyone will use it in 3D graphics.

How can you view this 30-bit color? If you don't have a Parhelia, you won't see anything. It's like stereo effects: without special equipment you can't see them. Matrox uses banding demonstrations to distinguish 24-bit from 30-bit images. Frankly speaking, that's unfair.

Here we have the 3D settings. There aren't many, but all the main functions are provided.

First of all, we can choose the 32-bit color mode discussed above.

Then we can enable Matrox's proprietary Fragment AA 16x (or 4x supersampling).

After that we can select a filtering mode and adjust VSync. Next come the settings for MPEG2 playback and overlays in general.

These panels provide settings for DVDMax, the technology that uses DualHead to play movies on a TV or a second monitor.

And here it's possible to adjust the Overlay mode. The rest of the settings control convenience and additional desktop-management functions:

The Multidesktop technology is also supported. We can arrange virtual desktops and adjust them:

That's all we had to say about the peculiarities of Matrox's drivers.

Test results

2D graphics

Traditionally, 2D quality is very high: at 1600x1200 at 100 Hz it looks superb on both heads. As you know, estimating 2D quality is subjective. In this case the cards are produced only by Matrox, so quality doesn't depend on a particular sample, only on how well the monitor gets along with the card and on the quality of the monitor and cable. I think the 2D speed of the Parhelia needs no measurement, as it is very high on all cards of the latest generation.
3D graphics, MS DirectX 8.1 SDK - extreme tests

To test various extreme characteristics of the chips we used examples from the latest version of the DirectX SDK (8.1, release), modified for greater convenience and control. Let's carry out the tests that are well known to our readers.

Optimized Mesh

This test measures an accelerator's real maximum triangle throughput. For this purpose it uses several simultaneously displayed models, each consisting of 50,000 triangles. There is no texturing, and the dimensions are minimal: each triangle takes just one pixel. Note that the results of this test are unachievable in real applications, where triangles are much larger and textures and lighting are used. The results are given for three rendering methods: Optimized (a model optimized for maximum output speed, taking into account the size of the chip's internal vertex cache), Unoptimized (the original model), and Strip (the unoptimized model displayed as a single triangle strip):

With the optimized model, when the memory subsystem has minimal effect, we measure almost pure triangle transform and setup performance. The Ti 4600 is the leader: 65M triangles/sec is almost twice the result of the RADEON 8500 and the Parhelia. I understand that against the RADEON 8500 this is the effect of the Ti 4600's second T&L unit, but why did the Parhelia with its 4 vertex shader units fall so far behind? It seems that just one pipeline works. Besides, all GPUs gain considerably when software geometry calculation is forced. The chip has received a considerable gain thanks to the FastWrites mechanism, which allows geometry data to be transferred directly from the processor to the accelerator, bypassing system memory. In the Strip case the gain is zero because the volume of data transferred is half as large.
Vertex shader unit performance

This test determines the maximum performance of the vertex shader unit. It uses a complex shader that performs both type conversions and geometric functions. The test is run at the minimum resolution in order to minimize the effect of shading:

Again the "double" T&L of the Ti 4600 is far ahead, and the Matrox Parhelia loses again. It's interesting that since our last big review of the NV25 the RADEON 8500's vertex shader speed hasn't grown at all, while the increased CPU power of the testbed allowed software emulation to outrun hardware processing. The same applies to Matrox. Probably both the ATI and the Matrox cards are limited by their GPU frequencies, and the 2.2 GHz Pentium 4 works faster here.

Vertex matrix blending

This T&L feature is used for lifelike animation and model skinning. We tested blending with two matrices both in the "hardware" version and with a vertex shader that implements the same function. We also obtained results in the software T&L emulation mode:

This time software emulation loses to the hardware implementation everywhere, being limited by the speed of geometry transfer over AGP (note how similar the results with and without the shader are). With the hardware implementation we again see the Ti 4600 gain from its 2 vertex shader pipelines, and an insignificant gain for the RADEON 8500 and the Parhelia. It's interesting that hardware blending is as fast as shader blending on the RADEON 8500 and slower on the Parhelia, though the difference is not significant.

EMBM

In this test we measure the performance drop caused by environment mapping and EMBM (Environment Mapped Bump Mapping).
We set 1280x1024 because at exactly this resolution the difference between the cards and between texturing modes is most discernible:

The Ti 4600 takes the lead again, outscoring all the rest in effective fillrate in all three modes. EMBM has the greatest effect on the R200, though the difference is not considerable; however, the general shading efficiency of the R200 (even without EMBM) is still too low. The Matrox chip has a lower fillrate, but at full load it is more efficient than the R200.

Pixel Shader performance

Again we used a modified MFCPixelShader example and measured the cards' performance at high resolution with 5 shaders of differing complexity, using bilinear-filtered textures:

The Ti 4600 thrives: its dependence on shader complexity and on the number of textures looks like a stable horizontal line with some drop at the beginning. The same goes for the Parhelia. With the same number of pixel pipelines in the Ti 4600 and the Parhelia and a difference only in frequency, the gap between these cards stays constant. The R200 performs weakly, especially on complex tasks; reusing texture units costs it much more than it costs the Matrox and NVIDIA solutions.

So, in the DX 8.1 SDK tests the Matrox Parhelia 128 MBytes ranks very low. We didn't expect that: it has 4 vertex shader units but seems to use just one, which may be caused by imperfect drivers or errors in the chip. The Parhelia's pixel shader operation is superb, though still slower than that of the Ti 4600. We will return to these tests later (maybe in August), when we have an opportunity to check VS 2.0 under DirectX 9.0.

3D graphics, 3DMark2001 SE - synthetic tests

All measurements in all 3D tests were made in 32-bit color.

Fillrate

The theoretical limit in this test is 880M pixels/sec for the Parhelia, 1100M for the RADEON 8500 and 1200M for the Ti 4600. The Parhelia comes closest to its peak value.
Remember that the peak values for this test are 3520 (1760) M texels/sec for the Parhelia (the second value, in parentheses, is for 4 pipelines with two texture units each), 2200M for the RADEON 8500 and 2400M for the Ti 4600. Now the Ti 4600 is the closest to its peak value, with the RADEON 8500 second. As for the Parhelia, it seems to have used 4 x 2 instead of 4 x 4, and even so the real fillrate turned out much lower than the peak. This proves again that the chip doesn't reach its full potential. Why? Better ask Matrox's engineers (or programmers).

Scene with a large number of polygons

In this test you should pay most attention to the minimum resolution, where fillrate has almost no effect:

With one light source the Ti 4600 is the absolute leader. It performs almost twice as well as the Parhelia and almost reaches the maximum triangle throughput obtained with the Optimized Mesh test from the DX 8.1 SDK, which shows again how powerful the double T&L of the Ti 4600 is. The RADEON 8500 is also near its peak value from the SDK test. The Parhelia's speed with one light source, however, is far below its polygon limit. Drivers again?

With 8 light sources the Parhelia's performance falls more slowly than that of the RADEON 8500; on the whole, the Matrox solution easily outdoes the R200. But the Ti 4600 is still the leader.

Bump mapping

Look at the result of the synthetic EMBM scene:

Although in the extreme tests the Parhelia ran bump mapping faster than the RADEON 8500, here it falls behind. And now the DP3 relief:

The situation is similar.

Vertex shaders

This test proves that only one T&L pipeline of the Parhelia works, because it lags behind the RADEON 8500 in proportion to their frequencies. By the way, as the resolution grows, both the RADEON 8500 and the Parhelia perform worse, being limited by fillrate, while the speed of the Ti 4600 falls more slowly.
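
The peak pixel and texel fillrates quoted for the 3DMark2001 SE fillrate tests follow from core clock, pipeline count and texture units per pipeline. The sketch below reproduces them; the 220 MHz Parhelia clock comes from the article, while the 275 MHz and 300 MHz clocks and the 4 x 2 layouts for the other two cards are assumptions chosen so that the products match the quoted peaks.

```python
# (core clock in MHz, pixel pipelines, texture units per pipeline)
# Only the Parhelia's 220 MHz is stated in the article; the other entries
# are assumptions that reproduce the peak values quoted in the text.
CARDS = {
    "Parhelia (4 x 4)": (220, 4, 4),
    "Parhelia (4 x 2)": (220, 4, 2),
    "RADEON 8500": (275, 4, 2),
    "GeForce4 Ti 4600": (300, 4, 2),
}


def pixel_fillrate(core_mhz, pipelines):
    """Peak pixel fillrate in millions of pixels per second."""
    return core_mhz * pipelines


def texel_fillrate(core_mhz, pipelines, units_per_pipeline):
    """Peak texel fillrate in millions of texels per second."""
    return core_mhz * pipelines * units_per_pipeline


if __name__ == "__main__":
    for name, (mhz, pipes, units) in CARDS.items():
        print(f"{name}: {pixel_fillrate(mhz, pipes)}M pixels/sec, "
              f"{texel_fillrate(mhz, pipes, units)}M texels/sec")
```

The two Parhelia rows make the point of the article explicit: if only two of the four texture units per pipeline are used, the theoretical texel peak halves from 3520M to 1760M.
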
Pixel shaderTaking into account that too low resolutions are limited by geometry and too high ones by the memory bandwidth let's take a look at 1024x768 and 1280x1024: In the tests of pixel shaders in the SDK the Parhelia bested the RADEON 8500. And here it loses. Taking into consideration that the 3DMark2001 SE test is easily adjustable for cards, I think we will soon see a patch which will increase the speed of the Parhelia. Now let's carry out the Advanced Pixel Shader test. The situation is similar. Note that in this case the cards from Matrox and NVIDIA fulfill the task in two passes using the Shaders 1.1, while the RADEON 8500 takes only one pass with the Shaders 1.4, but it doesn't help it. Sprites In this field the Parhelia feels really awful. So, in the synthetic tests the Matrox Parhelia 128 MBytes loses to its competitors (except operation with a large number of light sources). However, it was expected to some degree because its frequency is much lower than that of the others, despite a twice greater number of texture units. Besides, all of 4 vertex pipelines are not enabled. I hope its operation will improve with new drivers (if it's because of them) or with improved specs (if it's because of the chip). But remember that only results of real applications will let us estimate overall performance and balanced operation of the Parhelia. 3D graphics, 3DMark2001 - game tests3DMark2001, 3DMARKS In general, the Parhelia falls behind its competitors, even

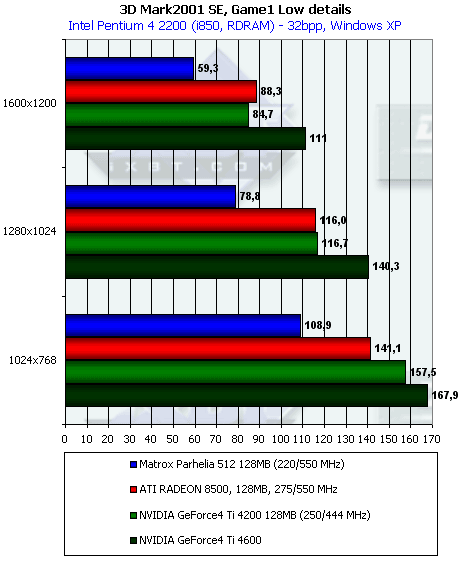

the Ti 4200. And now let's carry out more detailed tests. 3DMark2001, Game1 Low details   Test characteristics:

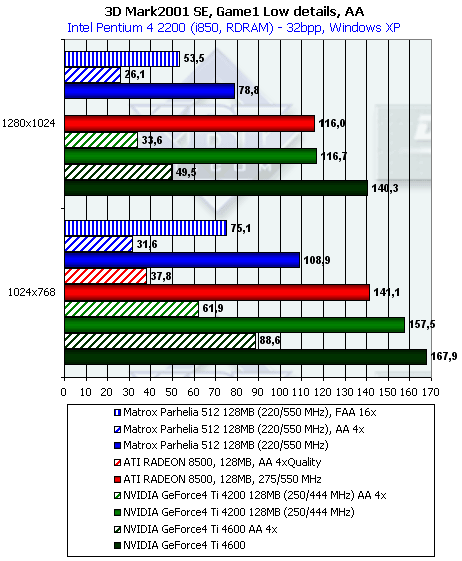

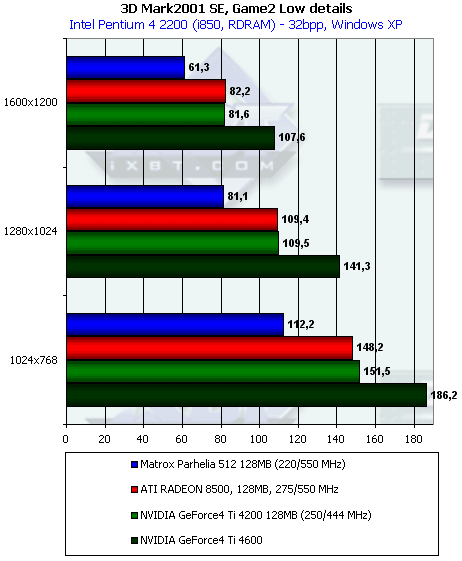

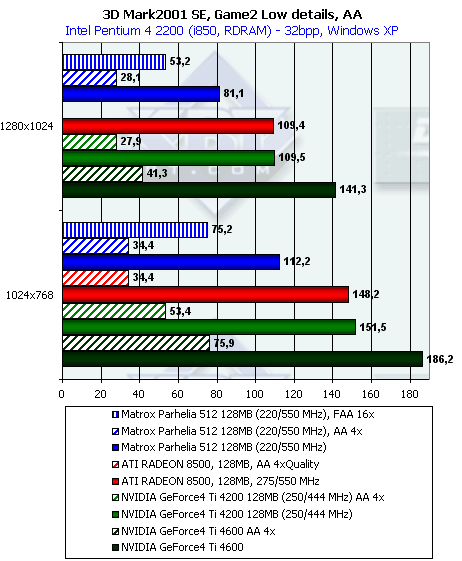

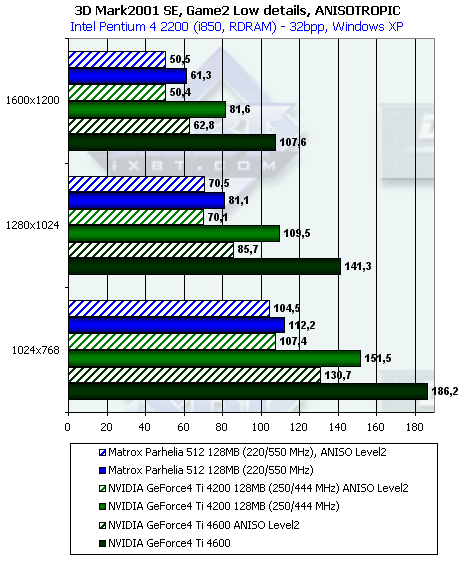

At 1600x1200x32 the Parhelia trails the others as follows: it falls behind the Ti 4600 by 46.7%, the Ti 4200 by 30% and the RADEON 8500 by 32.8%. As far as AA is concerned, Matrox's FAA 16x looks much better than NVIDIA's 4x; the quality will be examined later. Despite the supposedly free anisotropy at 8 samples, performance drops, though not as much as on the GeForce4 Ti. Again, some texture units are not working.

3DMark2001, Game2 Low details

Test characteristics:

In 1600x1200x32 the Parhelia falls behind the Ti 4600 by 43%, behind the Ti 4200 by 24.8% and behind the RADEON 8500 by 25.4%. In case of the AA the FAA 16x from Matrox again bests the 4x from NVIDIA, not to mention the 4x from ATI. In this test the performance drop in case of the AA enabled

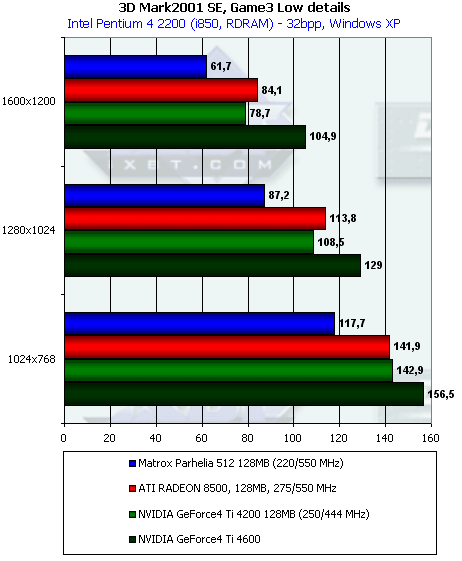

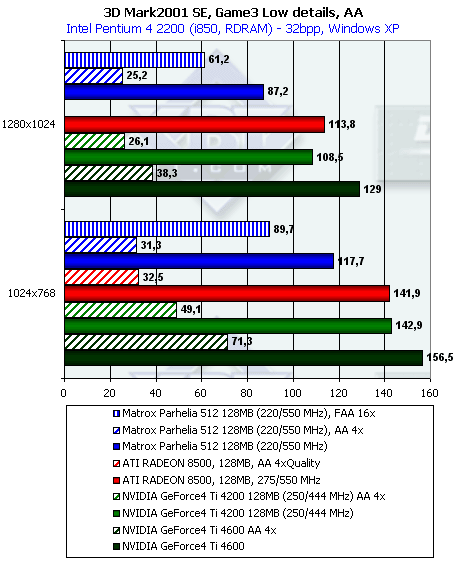

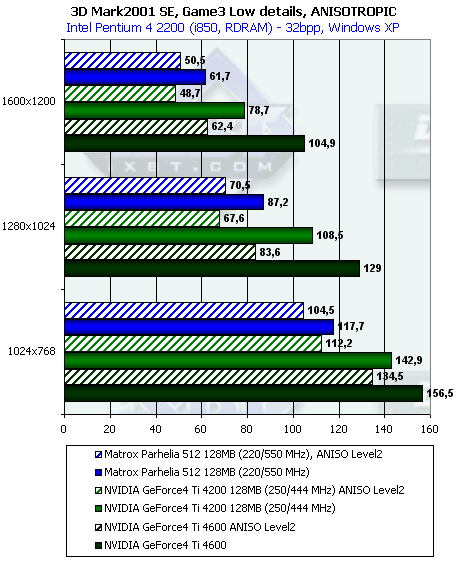

is much lower for the Parhelia than for the GeForce4 Ti. 3DMark2001, Game3 Low details   Test characteristics:

In 1600x1200x32 the Parhelia falls behind the Ti 4600 by 41%, behind the Ti 4200 by 21.6% and behind the RADEON 8500 by 26.6%. When the FAA 16x of the Parhelia is enabled its performance comes out very good, but what about quality? We will check it later... The performance drop of the Parhelia in case of the AA is almost

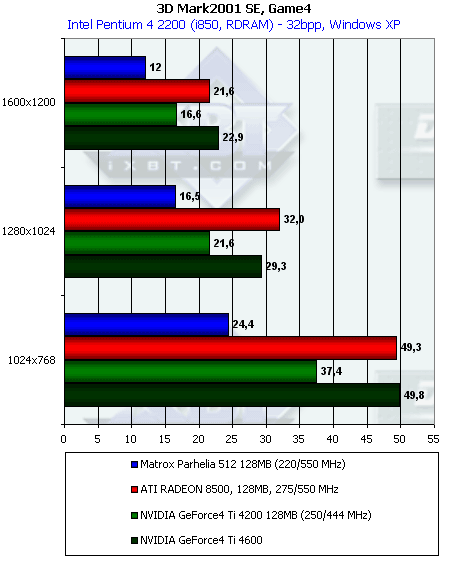

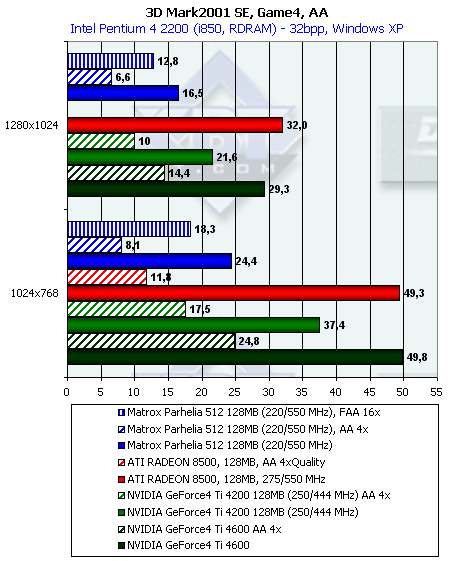

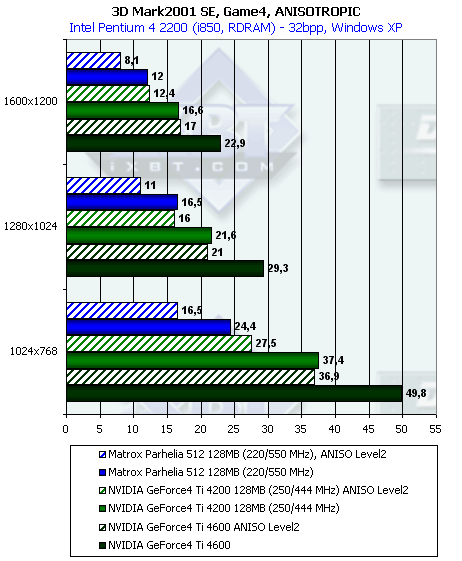

equal to that of its competitors. 3DMark2001, Game4   Test characteristics:

At 1600x1200x32 the Parhelia falls behind the Ti 4600 by 47.6%, the Ti 4200 by 27.6% and the RADEON 8500 by 44%. In this test even FAA 16x didn't help the Parhelia, because the speed is too low. This test is very demanding, though; in its time ATI managed to optimize its cards for Game4, and the RADEON 8500 has been thriving there ever since. The Parhelia's performance drop with anisotropy is really huge! Evidently, some of the Matrox solution's texture units do not work.

On the whole, in 3DMark2001 the Parhelia loses badly to competitors that cost much less and have lower potential power. The "4 x 4" scheme doesn't work, so we have "4 x 2" at a chip frequency of 220 MHz. Even the enormous bandwidth of the memory bus can't help unlock the GPU's potential. Besides, the promised 4 T&L pipelines are missing.

3D graphics, game tests

To estimate 3D performance in games we used the following tests:

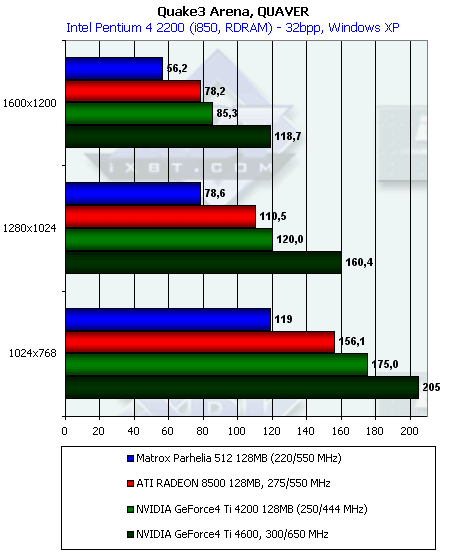

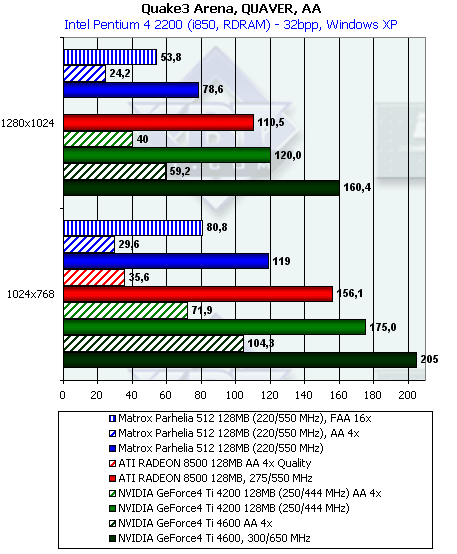

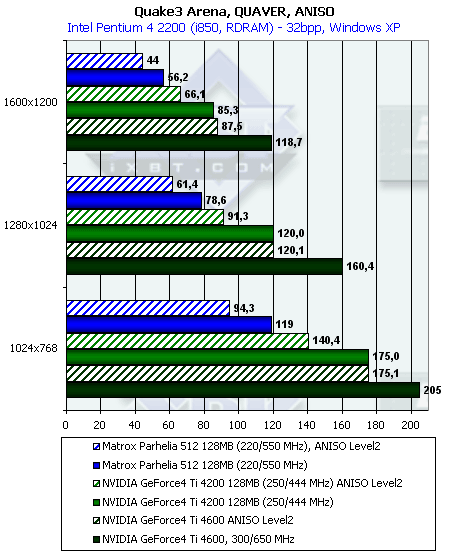

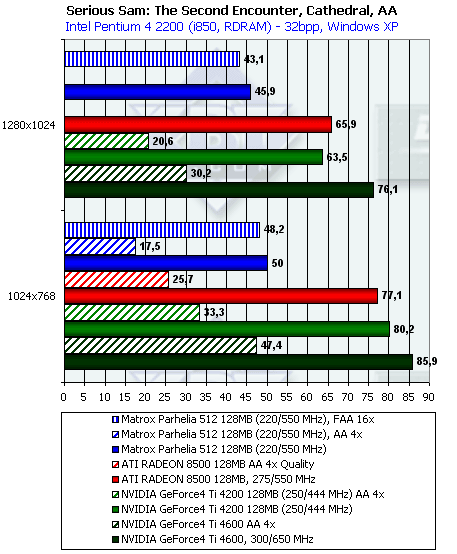

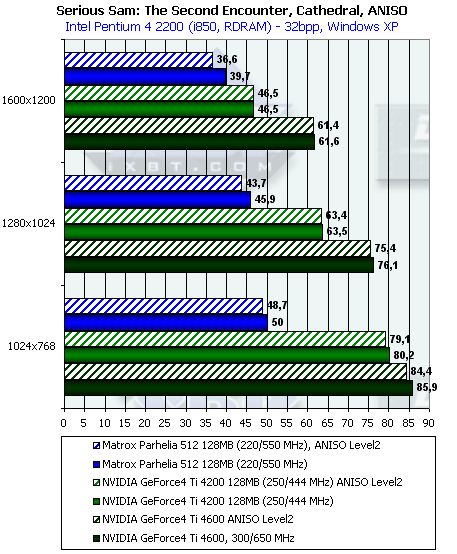

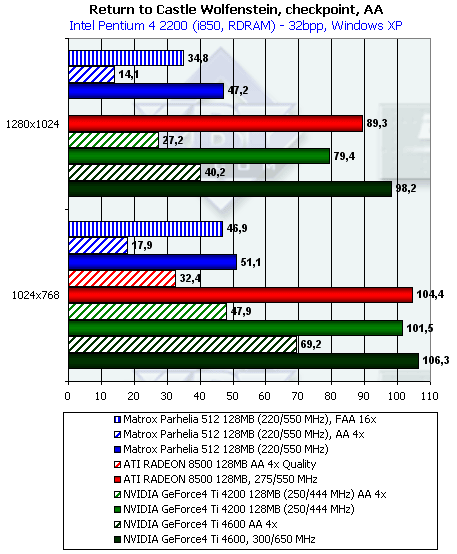

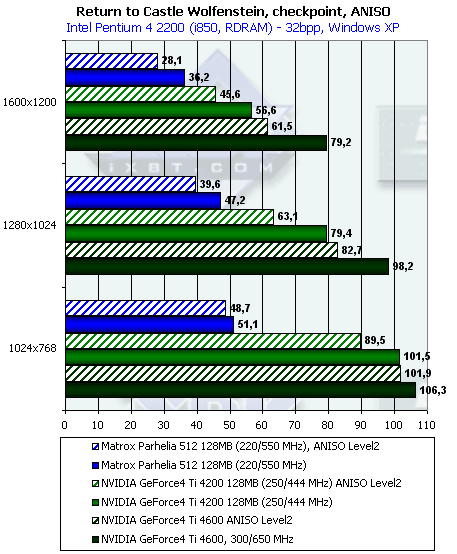

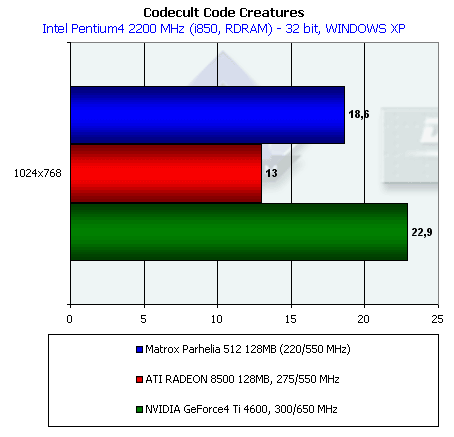

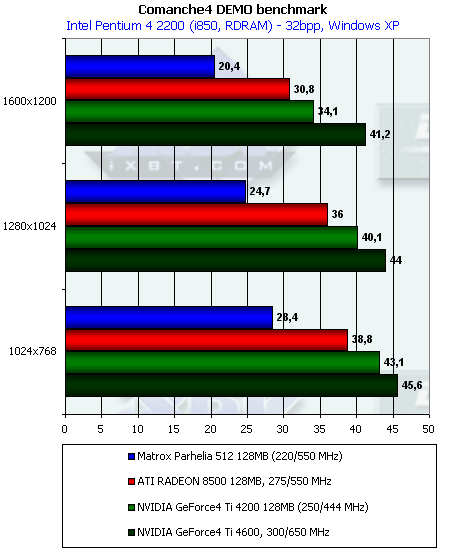

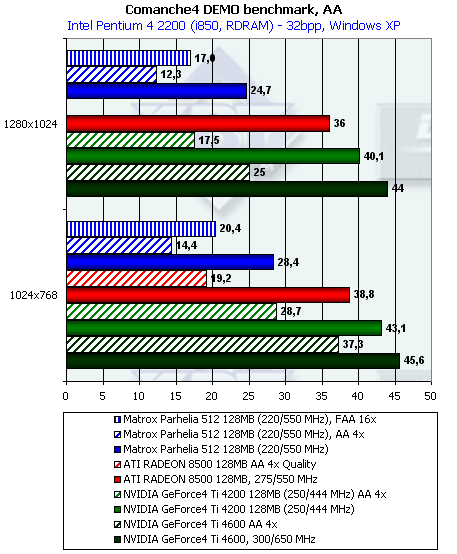

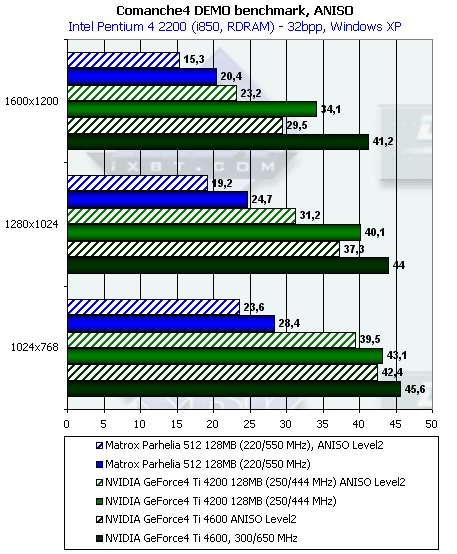

Quake3 Arena, Quaver

Nothing good for the Parhelia. The 220 MHz clock and 4 x 2 instead of 4 x 4 take their toll. Even FAA can't help here.

Serious Sam: The Second Encounter, Grand Cathedral

Here are screenshots with the test settings:

Here are the results:

Despite lagging in this test, the Parhelia kept its performance at the same level as the resolution grew. In this game it is finally possible to use all 4 texture units on each pipeline. However, the low GPU frequency and the savings on caching didn't let the card catch up with its competitors despite full utilization of the pipelines' potential. With FAA, however, the Parhelia thrives.

Return to Castle Wolfenstein (Multiplayer), Checkpoint

The Parhelia's scores are awful! Even FAA doesn't help it catch up with the leader. The Ti 4200 with AA 4x does lose at 1280x1024, but the gap is insignificant. The scores of the RADEON 8500 with AA 4x Quality are so low that this mode is unacceptable for practical use.

Code Creatures

This test is based on the CodeCult engine, which serves as the basis for several games. The engine uses almost all the modern capabilities of the latest generation of video cards. The demo contains very complex scenes in terms of texture size, geometry and effects.

Because the test is so tough, we ran it only at 1024x768; even in this mode the performance of the most powerful cards falls sharply. The Matrox solution is not in last place. The Parhelia's pixel shaders run at quite a high speed here (in this test pixel shaders form the water surface and the sky), yet their performance drops in 3DMark2001, which means that program doesn't know this GPU. The gigantic memory throughput also helps a lot. Performance is limited only by the low frequency of the graphics processor.

Comanche4 DEMO

This test is also very tough on accelerators, and it depends heavily on the CPU.
Unfortunately, it doesn't bring out the Parhelia's capabilities: half of the texture units are idle again, and the Canadian creation has some problems with vertex shaders.

Unreal Tournament 2003 DEMO b.927

Given the peculiarities of the RADEON 8500's operation in this test (see 3Digest), we ran it on a VIA KT333 (Athlon XP) based platform. This application is known to use vertex shaders quite actively. Well, the Parhelia again loses everywhere.

3D graphics quality

ANISOTROPIC FILTERING

The operation of this function is described in the section of our 3Digest devoted to the subject. We know that different manufacturers implement it differently, and the speed characteristics of anisotropy from, for example, ATI and NVIDIA differ quite a lot; only the resulting quality is similar. The screenshot gallery in that 3Digest section has been supplemented with samples of the Matrox Parhelia's anisotropy quality. You will see for yourselves that its anisotropic filtering quality is on the level of the GeForce4 Ti's Level 2 mode.

ANTI-ALIASING (AA)

This function fights the jaggies on object edges known as aliasing artifacts. AA has a bigger appetite than anisotropy (at least at the high AA levels that improve image quality considerably). I again recommend reading our analytical review of the Matrox Parhelia, where Matrox's new AA method is described in simple terms. It is, in fact, supersampling with up to 16 samples per pixel, but applied only to pixels on polygon edges (only 3..5% of a typical scene):

The main advantage is obvious: unlike multisampling, redundant data are not stored in memory or transferred over the bus! The total size of the frame buffer doesn't grow considerably, by no more than a factor of two, even at 16x.
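
The claim that the frame buffer grows by no more than a factor of two can be illustrated with a rough model. The sketch below assumes, purely for illustration, that every interior pixel stores one sample and every edge pixel stores 16; the real Fragment AA storage scheme may differ, and the function name is ours.

```python
def relative_sample_cost(edge_fraction, samples_per_edge_pixel=16):
    """Average stored samples per pixel when only edge pixels are supersampled.

    edge_fraction is the share of pixels lying on polygon edges (0.0 to 1.0).
    Interior pixels are assumed to store a single sample.
    """
    if not 0.0 <= edge_fraction <= 1.0:
        raise ValueError("edge_fraction must be between 0 and 1")
    interior = 1.0 - edge_fraction
    return interior * 1 + edge_fraction * samples_per_edge_pixel


if __name__ == "__main__":
    for fraction in (0.03, 0.05):
        print(f"{fraction:.0%} edge pixels: "
              f"{relative_sample_cost(fraction):.2f}x samples, "
              f"versus 16x for full-scene 16x supersampling")
```

With 3..5% of pixels on edges, this model gives roughly 1.45x to 1.75x the samples of a frame without AA, which is consistent with the "not more than twice" figure and far below the 16x of full-scene supersampling.
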
A special rendering pass is used to find edge pixels: the accelerator records only the border pixels of polygons in a special buffer, without calculating texture values or shading interior pixels. Besides, since only polygon edges are processed, textures do not lose sharpness, as they typically do with FSAA and some hybrid MSAA techniques. However, such an intelligent AA method can introduce artifacts in some cases. It also can't correctly smooth edges that are mixed with semitransparent polygons (for example, clouds, fog, glass or fire in games). The user can switch to the well-known classical 4x (2x2) MSAA mode, which the chip also supports. Let's take a look at the quality.

In AA 4x the Parhelia degrades textures less than the GeForce4 Ti does, and there is no difference between FAA 16x and AA 4x. Besides, this mode doesn't cause a significant performance drop. Have you noticed that at the bottom left the edge of the pistol's handle is not processed in FAA 16x? Such artifacts are typical not only of this test. Well, it reminds me of ATI's anisotropy.

3D quality on the whole

In general, Matrox's programmers have done their best. I don't know who is to blame for only one vertex pipeline working instead of 4 (maybe all of them work, but very weakly), or why instead of the promised shaders 2.0 we've got just version 1.1. Probably this is an error in the drivers. However, in the first driver release WE FOUND NO ARTIFACTS IN 3D GAMES! Even in Warcraft III and Morrowind:

We ran 14 games and noticed no flaws. And one last thing: the Parhelia's drivers allow forced trilinear filtering. I must say it works in all games. Even if an application manages filtering itself, we still get correct trilinear filtering:

Conclusion

The new card from Matrox will be an excellent upgrade for professional designers who sometimes feel like playing a little, and for aesthetes who believe that better 2D quality is impossible. However, both will have to pay a lot of money for such an upgrade ($400). Unfortunately, Matrox hasn't yet offered a cheaper Parhelia-512 based solution, which is why we can't recommend this card to average users. But time flies, and prices will probably go down swiftly. In any case, if the Matrox Parhelia 128 MBytes were available at $300, it would be a serious competitor for the GeForce4 Ti 4600 despite its lower 3D speed (because it is the best compromise between dependable, rock-solid 2D quality and capabilities and modern 3D speed and features). Remember that the card's currently low performance may rise considerably with DirectX 9.0, which is why we will return to this subject a bit later.

Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.