
Part 3: Performance in games

Benchmarks

You can look up the testbed configuration here. We used the following test applications:

You can download test results in EXCEL format: RAR and ZIP.

If you are familiar with 3D graphics, you will understand our diagrams and draw your own conclusions. However, if you are interested in our comments on test results, you can read them after each test. Anything that is important to beginners and those who are new to the world of video cards will be explained in detail in the comments.

First of all, you should look through our reference materials on modern graphics cards and their GPUs. Be sure to note the operating frequencies, support for modern technologies (shaders), and the pipeline architecture. Note that the 8800 family of accelerators abolished the notion of a pipeline. These cards have streaming processors, which execute the shader instructions of a game, while texture units process pixels (applying textures, filtering) on their own. That is, the two processes are fully separated. Data is written into a memory buffer as always, but only after texture operations. The old procedure (take a triangle, calculate it with shader instructions, apply texture(s), filter it, and send it along) is not used anymore. It's up to the driver and the thread processor to distribute tasks among stream processors, and the same applies to texture units.

ATI RADEON X1300-1600-1800-1900 Reference
NVIDIA GeForce 7300-7600-7800-7900-8800 Reference

Secondly, if you have just begun realizing how large the selection of graphics cards is, don't worry: our 3D Graphics section offers articles about 3D basics as well as new product reviews. Currently there are just two companies that manufacture GPUs: ATI (the graphics department of AMD) and NVIDIA, so most of the information is divided into these two sections. We also publish the monthly i3D-Speed (formerly 3Digest), which sums up all graphics card comparisons for various price ranges. In the February 2007 issue we analyzed how modern graphics cards depend on processors without antialiasing and anisotropic filtering.
We did the same for AA and AF in the March 2007 issue.

Thirdly, we'll have a look at the tests.

S.T.A.L.K.E.R.
Test results (charts): S.T.A.L.K.E.R.

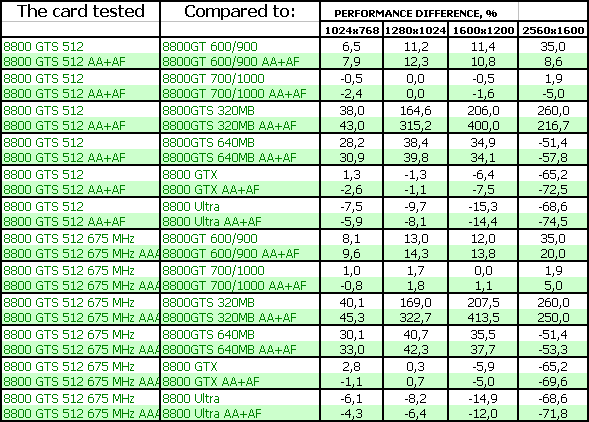

We know that this game places high demands on texturing units and, consequently, on memory bandwidth. So we expected the new card to lose the battle with the 8800 GTX/Ultra, because those cards have more ROPs and higher memory bandwidth, despite their lower core clock rates. That's exactly what happens. The overclocked modification of the 8800 GT and the 8800 GTS 512 demonstrate practically identical results. Perhaps the much higher core clock rate has made up for the 16 shader processors locked in the 8800 GT. The card from BFG is overclocked by just 25 MHz (core only), so its results are only slightly higher: its memory bandwidth hasn't changed.

CRYSIS (demo), DX9
Test results (charts): CRYSIS (demo), DX9

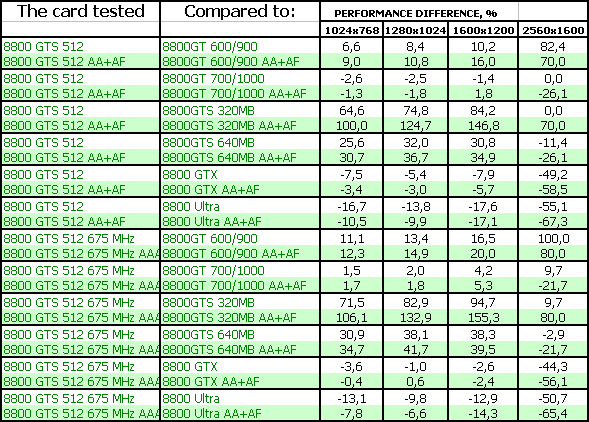
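The CRYSIS commentary for this chart rests on simple arithmetic: 620-650 MB of textures cannot fit into 512 MB of local memory, so the remainder has to travel over the bus. The rough Python sketch below puts numbers on that. The bus speeds (4 GB/s per direction for PCI Express 1.x x16, 8 GB/s for PCI Express 2.0 x16) and the ~62 GB/s local bandwidth of the 8800 GTS 512 are assumed reference figures, not values measured in this review, and the helper names are hypothetical.

```python
# Rough sketch: how much of the CRYSIS texture set spills out of 512 MB of
# local video memory, and how long moving that spill takes over the bus
# versus from local memory.
# Assumptions (not from the review): PCIe 1.x x16 = 4 GB/s, PCIe 2.0 x16 =
# 8 GB/s per direction, 8800 GTS 512 local bandwidth ~62.08 GB/s.
# Frame buffers also occupy video memory, so the real spill is likely larger.


def spill_mb(texture_mb: float, vram_mb: float) -> float:
    """Texture data (MB) that cannot fit into local video memory."""
    return max(0.0, float(texture_mb) - float(vram_mb))


def transfer_ms(size_mb: float, bandwidth_gb_s: float) -> float:
    """Milliseconds to move size_mb at bandwidth_gb_s (1 GB = 1000 MB)."""
    return size_mb / (bandwidth_gb_s * 1000.0) * 1000.0


if __name__ == "__main__":
    links = [
        ("PCIe 1.x x16 (assumed)", 4.0),
        ("PCIe 2.0 x16 (assumed)", 8.0),
        ("local GDDR3 (assumed)", 62.08),
    ]
    for textures in (620, 650):  # range quoted in the review
        spill = spill_mb(textures, 512)
        print(f"{textures} MB of textures -> {spill:.0f} MB spill")
        for label, bw in links:
            print(f"  {label}: {transfer_ms(spill, bw):.1f} ms")
```

The absolute times matter less than the ratio: even under the more generous PCI Express 2.0 assumption, spilled data moves several times slower than it would from local memory.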

This game is much more complex. It places high demands on GPU characteristics (and speed) as well as on memory size; memory bandwidth comes third in importance here. We know that the textures used in the test scene take up 620-650 MB, so even a 512 MB card cannot accommodate them all, and some textures have to be stored in system memory. This reduces overall performance. No matter how fast the bus is, its transfer rates cannot compare with those of local video memory, which works almost twice as fast as system memory. That's why the new card is outperformed by the 8800 Ultra, although it's on a par with the 8800 GTX (the higher core clock rate did its part). The GTX pulls ahead only in 2560x1600, where the video memory shortage becomes pressing. However, you cannot play the game in this resolution anyway: it's too slow. We can also see that the overclocked 8800 GT is practically on a par with the 8800 GTS 512 (700 MHz and 112 processors again perform on a par with 650 MHz and 128 processors).

CRYSIS (demo), DX10
Test results (charts): CRYSIS (demo), DX10

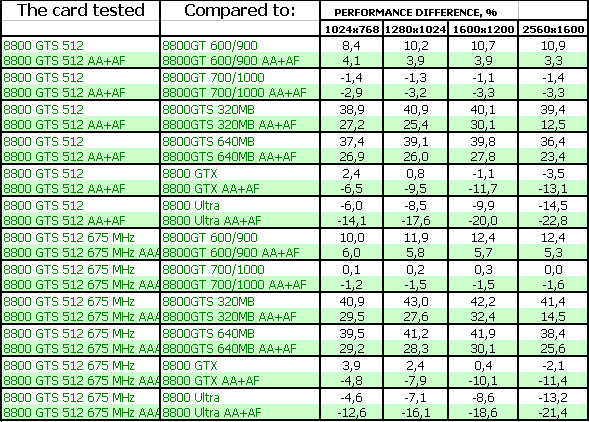

It's a similar situation, only the gap between the 8800 GTS 512 and the 8800 GTX/Ultra has become more pronounced. Strange as it may seem, the new product performs worse in DX10 despite its core clock rate and number of processors, while the older cards have improved their positions. That's one more proof that DX10 was added to this game artificially, probably at the last moment (we can even guess under whose pressure and for what purposes). It mostly increases the load on texturing units and memory bandwidth instead of using the shader potential of the core.

Splinter Cell Chaos Theory (HDR)
Test results (charts): SCCT (HDR)

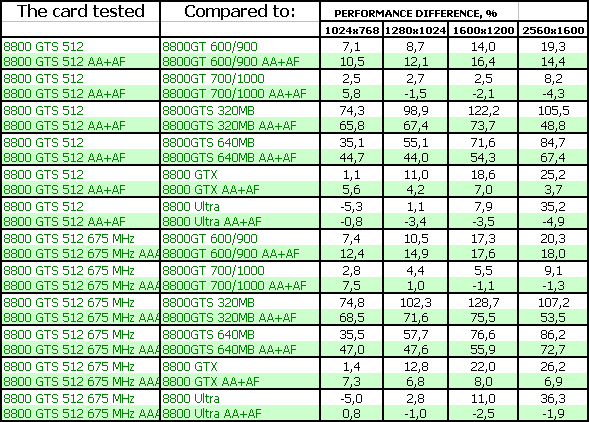

It's no surprise that the situation is almost the same here: this game has similar hardware requirements. All requirements are much higher in CRYSIS than in SCCT, but the proportions are almost the same. That's why the 8800 GTS 512 performs on a par with the 8800 GTX. The GTX pulls ahead only in heavy modes and high resolutions, where the new card's lower memory bandwidth becomes insufficient. The 8800 GT operating at increased frequencies (700 MHz core) is even a tad faster, although these 3% can be written off as measurement error.

Call Of Juarez

This game is similar to the latest Lara Croft games as far as requirements to shader units are concerned. The new card from NVIDIA wins all tests here (though it's on a par with the overclocked 8800 GT). This is how shader units perform when the load on memory bandwidth is low: differences in memory bandwidth matter little.

Company Of Heroes

This game places higher demands on texturing units and, consequently, on memory bandwidth. That's why the 8800 Ultra wins in heavy modes, especially at high resolutions. On the whole, the 8800 GTS 512 performs very well here. Surprisingly, the different memory bandwidth of the overclocked 8800 GT makes itself felt: this card takes the lead in AA mode at high resolutions.

Serious Sam II (HDR)
Test results (charts): SS2 (HDR)
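The Serious Sam II commentary explains the flat results at low resolutions by a system-side limit. A toy bottleneck model makes that reasoning explicit: the frame rate is capped by the slowest stage, and only the GPU-side cost grows with pixel count. This is an illustrative sketch, not the review's methodology; the class name is hypothetical and the numbers in the usage are made-up placeholders, not measurements.

```python
# Toy bottleneck model (illustration only, not the review's test methodology).
# The frame rate is capped by the slowest stage: a CPU/system limit that does
# not depend on resolution, and a GPU limit that falls as pixel count grows.
from dataclasses import dataclass


@dataclass
class BottleneckModel:  # hypothetical helper, not from the review
    cpu_fps: float           # cap set by CPU, driver, and the rest of the system
    gpu_fps_at_1mpix: float  # made-up GPU frame rate at one megapixel

    def fps(self, width: int, height: int) -> float:
        megapixels = width * height / 1e6
        # GPU cost assumed to scale linearly with pixel count
        gpu_fps = self.gpu_fps_at_1mpix / megapixels
        return min(self.cpu_fps, gpu_fps)


if __name__ == "__main__":
    # Placeholder numbers chosen only to show the shape of the curve.
    model = BottleneckModel(cpu_fps=100.0, gpu_fps_at_1mpix=200.0)
    for w, h in [(1024, 768), (1280, 1024), (1600, 1200), (2560, 1600)]:
        print(f"{w}x{h}: {model.fps(w, h):.1f} fps")
```

With these placeholder values the first three resolutions all hit the 100 fps system cap and only 2560x1600 drops, which mirrors the flat 1024x768 to 1280x1024 results described for this game. Real GPU cost is not purely linear in pixels, so the model is a reasoning aid only.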

This game is just the opposite of Call Of Juarez: it places much higher demands on texturing units and memory bandwidth. The load on shader units is not very high, so at low resolutions performance is limited by system resources, and the graphics card cannot reveal its potential. That's why the FPS difference between 1024x768 and 1280x1024 is very small or non-existent. As a result, the new product is outperformed by the 8800 GTX/Ultra, because these cards have more ROPs and higher memory bandwidth. Even the memory bandwidth of the 8800 GTS 640 allows this card to compete with the 8800 GTS 512.

Prey

This game loads shaders even less, but its load on texturing units is greater. So the new product is outperformed even by the old 8800 GTS modifications, which have weaker cores (as far as shaders are concerned) but higher memory bandwidth owing to a wider memory bus. That's logical, but you shouldn't be disappointed: such games are gradually becoming a thing of the past, and more games now appear with high requirements to shader units.

3DMark05: MARKS
Test results (charts): 3DMark05 MARKS

Synthetic tests that actively use the shader features of the new graphics cards show the advantage of the 8800 GTS 512: the new card outperforms all its competitors.

3DMark06: SHADER 2.0 MARKS
Test results (charts): 3DMark06 SM2.0 MARKS

A similar situation. Some dips at certain resolutions can be written off to driver quality.

3DMark06: SHADER 3.0 MARKS
Test results (charts): 3DMark06 SM3.0 MARKS

On the whole, it's a similar situation. This test places slightly higher demands on memory bandwidth, which lets the 8800 Ultra take the lead.

Conclusions

This comparison does not include competing products from AMD (ATI), because this company hasn't launched a high-end product to oppose the 8800 GTX/Ultra. All new cards from AMD belong to cheaper segments, so they cannot participate in this comparison (cheaper products would surely be outperformed by more expensive ones).

NVIDIA GeForce 8800 GTS 512MB PCI-E is a very efficient card of relatively small size (the lengthy 8800 GTX cards are a thing of the past) with a quiet two-slot cooling system (at last!). Besides, this cooler exhausts hot air out of the system unit, which is very good. This graphics card is no worse than the 8800 GTX in most tests. Sometimes it performs on a par with the 8800 Ultra, although the new card has a narrower memory bus and less local memory. Should these cards be equipped with 1 GB of memory? I don't think it's necessary, despite the situation in CRYSIS. However, if NVIDIA allows it, some of its partners may launch an 8800 GTS 1 GB.

Now about the negative side. Once again, manufacturers try to confuse users with their product names. We had the 8800 GTX and GTS, and everything was clear: these cards differ in cores, frequencies, memory sizes, and buses. Now we have a hybrid with a GTX core (with some exceptions), a narrower bus (compared to the GTS), and higher frequencies than both older products. Why not give it a new name, invent a new suffix or a number? But no... It's called the 8800 GTS. Our tests show a huge difference between the new card and the old GTS model! Inexperienced users may decide that the 8800 GTS 640MB is better than the 8800 GTS 512MB because the former has more memory, and retailers will have a chance to cash in on such buyers as they get rid of the remaining stock of old 8800 GTS cards. I don't understand why NVIDIA is doing that.
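The conclusions weigh the new card's narrower bus against its higher clocks. The Python sketch below turns that into two rough figures per card: peak memory bandwidth (bus width in bytes times the effective data rate) and the crude "core clock times processors" proxy the review itself uses in the CRYSIS test. The clocks, bus widths, and memory data rates are assumed NVIDIA reference specifications, not values from this review (partner cards such as the BFG model differ), and the class and field names are hypothetical.

```python
# Back-of-the-envelope comparison of the cards discussed in the conclusions.
# All specifications below are ASSUMED reference values, not taken from the
# review; partner cards may run at different clocks.
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:  # hypothetical helper, not from the review
    name: str
    core_mhz: int           # core clock (shaders run in a separate, faster clock domain)
    stream_processors: int
    bus_bits: int           # memory bus width
    mem_mts: int            # effective memory data rate in MT/s (GDDR3: 2x memory clock)

    def bandwidth_gb_s(self) -> float:
        # bytes per transfer times transfers per second
        return self.bus_bits / 8 * self.mem_mts / 1000

    def shader_proxy(self) -> int:
        # crude "clock x processors" figure, the heuristic used in the review
        return self.core_mhz * self.stream_processors


CARDS = [
    Card("8800 GTS 512", 650, 128, 256, 1940),
    Card("8800 GTS 640", 500, 96, 320, 1600),
    Card("8800 GTX", 575, 128, 384, 1800),
    Card("8800 Ultra", 612, 128, 384, 2160),
]

if __name__ == "__main__":
    for card in CARDS:
        print(f"{card.name:13} {card.bandwidth_gb_s():6.1f} GB/s  "
              f"proxy {card.shader_proxy()}")
    # Overclocked 8800 GT (700 MHz, 112 SPs) relative to the 8800 GTS 512
    print(f"GT OC / GTS 512 proxy ratio: {700 * 112 / (650 * 128):.3f}")
```

Under these assumptions the 8800 GTS 640 ends up with slightly more bandwidth than the GTS 512 (64.0 vs. about 62.1 GB/s) despite its weaker core, which fits the Serious Sam II and Prey results, while the GTX and Ultra keep a large bandwidth lead. The same proxy puts the overclocked 8800 GT at about 94% of the GTS 512; since it ignores the separate shader clock, it is only a rough guide.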
Now it should be said that all old 8800 GTX/GTS/Ultra cards were manufactured at two plants by NVIDIA's orders, so partners had no access to the production process: they bought ready cards from NVIDIA and just applied their stickers. It's different with the 8800 GT: NVIDIA sells ready cards, but it also sells GPUs, so some partners have already started making such cards on their own. We should take into account that the 8800 GTS 512 is based on the same core and has a similar PCB. We can assume that only the first lots of these cards will be complete copies of the reference design, that is, bought from NVIDIA, so there will be no differences between them. Later on, partners will manufacture these products on their own. That's why both cards from Zotac and BFG are actually copies of the same graphics card with different stickers and different frequencies (the core of the BFG card is slightly overclocked). So we have nothing to add about these cards.

I want to repeat that BFG now pays much less attention to its boxes (I don't like the design, or the "noname" approach to bundled components). Only six months ago we admired the bundles, boxes, and designs from this company. On the positive side, there is a 5-year warranty. As for Zotac, this company is apparently improving in this respect, although the bundle is very poor (the "noname" approach again). Besides, the company shouldn't have abandoned the dragon theme on its boxes.

And another thing that we never tire of repeating from article to article. Having decided to choose a graphics card by yourself, you have to realize that you are going to change one of the fundamental PC parts, which might require additional tuning to improve performance or enable some quality features. This is not a finished product, but a component part.
So, you must understand that in order to get the most from a new graphics card, you will have to acquire some basic knowledge of 3D graphics and graphics in general. If you are not ready for this, you should not perform upgrades by yourself. In this case it would be better to purchase a ready-made system unit with preinstalled software (along with the vendor's technical support) or a gaming console that doesn't require any adjustments. To find more information on the current graphics card market and the performance of various cards, feel free to read our monthly i3D-Speed (formerly 3Digest).

We'd like to thank Zotac and Carsten Berger, and BFG Russia and Mikhail Proshletsov, for providing the graphics cards.

Andrey Vorobiev (anvakams@ixbt.com)

December 20, 2007


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.