Professional software

In our test procedure, we define professional software as programs that are typically used in large organizations rather than at home or in small offices. As a rule, such software is large, offers a wide range of functions, is designed for industrial production, and, finally, is expensive. Non-professionals can use it too, of course, but only those with very demanding requirements are likely to need it.

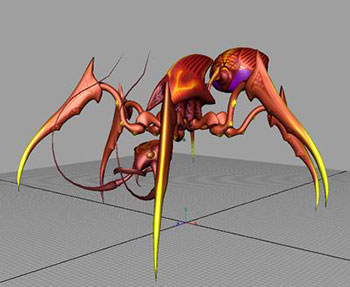

3D Modeling and Rendering

In this group of tests we are traditionally interested in two performance aspects affected by a processor: the speed of processing dynamically changing scenes as a user works in a given application, and the speed of rendering static scenes. Tests in 3ds max 9 and Maya 2008 cover both aspects, while the Lightwave test benchmarks only the rendering speed. When analyzing test results, we traditionally discard I/O performance results, as they depend mostly on HDD performance and have nothing to do with the main object of our tests (the CPU). On the other hand, we do take the graphics speed scores into account, because the performance of a graphics system, at least in modern computers, may depend heavily on CPU performance (probably because some functions are implemented not purely in hardware (GPU), but also in software, in video drivers).

We have been using SPEC tests for 3ds max for a long time, across many versions of this application and its benchmarks. In our previous test procedure we had to use SPECapc for 3ds max 8 with 3ds max 9 (the temptation to include only 64-bit applications in this group of tests was too strong). Fortunately, by the time we decided to update our test procedure, SPEC had already released SPECapc for 3ds max 9.

A small note about rendering tests: as most users do not use the built-in renderer of 3ds max (scanline) :), we risked "improving" the SPEC test by installing V-Ray 1.5 SP1 and setting it as the default renderer in 3ds max. So the SPEC test benchmarks a different renderer. Theoretically, such results should be more interesting.

SPECapc for Maya hasn't been updated for a long time, so this was bound to happen: the benchmark failed after the latest update of the application (an error message popped up while the test script was running). Fortunately, it was not as fatal as it looked: we modified one of the test scenes, and the error message disappeared. So if you want to test Maya 2008 x64 with SPECapc for Maya 6.5, you may download the benchmark from the SPEC website (see above) and the modified file (overwrite the original file) here.

As the SPEC benchmark lacks a rendering speed test, we have to run an additional test. This time we decided to use Mental Ray instead of the standard renderer (Maya Software) and added various effects to complicate the task: raytracing, caustics, shadows, etc. That's why the scene we used in our previous test procedure takes much longer to render now.

Nothing has changed in the Lightwave test for a long time, apart from program versions. Unfortunately, the rendering speed test is all we can offer to users of this application: we haven't heard of any interactive benchmarks (tests that imitate a user's operations) for Lightwave.

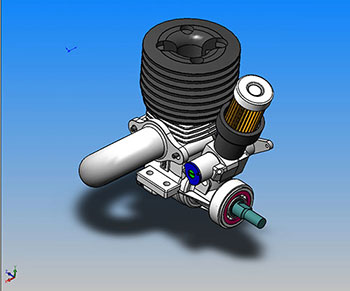

CAD/CAM/CAE

This group of tests includes pure CAD (Computer-Aided Design) applications, such as SolidWorks, as well as programs that combine features of CAD, CAM (Computer-Aided Manufacturing), and CAE (Computer-Aided Engineering), such as Pro/ENGINEER and UGS NX. In general terms, it's an old, conservative class of programs that adapts slowly to innovations in the world of computer hardware: such software is so complex that its authors have no time to add minor SSE optimizations or the like. :) Still, this class of software has high hardware requirements, so it's of great interest to us.

We've been using SolidWorks with the SPEC benchmark for many years. In the new test procedure we've updated both the application and the benchmark, and it's the first time that we use the 64-bit version of SolidWorks. Moreover, judging by the CPU usage graphs, this application has finally added multiprocessing support to its list of features. It's not optimal yet (the additional core is used only by some tasks, and only for several seconds). Nevertheless, we are pleased that the developers have finally started to move in this direction.

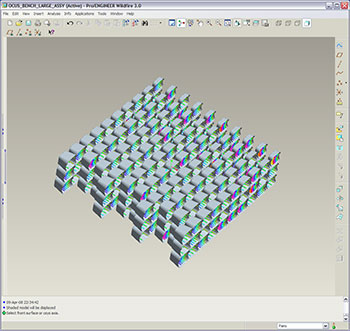

We already used Pro/ENGINEER in the previous version of our test procedure, but this is a new version (3.0 versus 2.0), and it's finally a 64-bit build. Besides, we were forced to change the benchmark, because SPEC did not release a new version of its benchmark for Pro/ENGINEER Wildfire 3.0, so now we use OCUS Benchmark. Unfortunately, we still don't see this application use more than one processor (CPU core) effectively, even though it's a new 64-bit version. However, there is a small chance that the problem lies in the benchmark, which may be unable to use the multiprocessing features of the new Pro/ENGINEER.

In this version of our test procedure we included tests in UGS NX, one of the main competitors of the previous application (Pro/ENGINEER). Like Pro/ENGINEER, it's widely used in the aircraft and automotive industries. However, such companies as LEGO also use it. :) Like the previous applications in this group of tests, it's a 64-bit version. Judging by CPU usage graphs, it has limited support for multiprocessing. On a quad-core system, a 100% load on one core is shown as 25% of the total CPU load (100%/4). The load on our quad-core testbed never exceeded 50%, which is indirect evidence that the application can use two cores, but not more. At the same time, the total CPU load very often rose above 25%, so the second core really is put to work. On the whole, it's very good news, because this group of tests used to contain only single-core programs: neither dual- nor quad-core processors got any advantage here (which runs counter to the general tendency).
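The load arithmetic used in this reasoning can be written out as a minimal Python sketch. The function names below are hypothetical and chosen only for illustration; the sketch assumes that the total CPU load is simply the average load of all cores, which is how the percentages in the text are interpreted. It is not part of the test procedure itself.

```python
def per_core_share(cores: int) -> float:
    """Share of total CPU load (in percent) contributed by one fully loaded core."""
    return 100.0 / cores


def estimated_busy_cores(total_load_percent: float, cores: int) -> float:
    """Rough number of fully loaded cores implied by a total CPU load reading.

    Assumption: total load is the plain average of per-core loads.
    """
    return total_load_percent / per_core_share(cores)


if __name__ == "__main__":
    # One saturated core on a quad-core CPU shows up as 25% total load.
    print(per_core_share(4))
    # A 50% ceiling on a quad-core CPU corresponds to about two busy cores.
    print(estimated_busy_cores(50.0, 4))
```

Such an estimate is only indirect: a 50% reading could also come from four cores loaded at half capacity, which is why the usage graphs serve as a hint rather than proof.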
