
We have recently taken a look at NVIDIA's new cards, GeForce 7900 GS and GeForce 7950 GT, and found that at the moment the competing ATI and NVIDIA series line up as follows:

Two new names appeared in the table above: RADEON X1650 XT and RADEON X1950 PRO. Both feature new-generation GPUs made using the 0.08 µm process technology. We'll provide the technical specifications later.

RV560/570 GPUs were long expected on the market, but their release was repeatedly delayed. Perhaps it had to do with the 0.08 µm process technology, still new to both ATI and NVIDIA. But there were other reasons as well. The features of RV570 are close to those of the already selling RADEON X1900 GT, which, in turn, is a pared-down R580. It is logical to assume that the Canadian vendor had accumulated enough rejects of this GPU that it had to sell them somehow. This was done with the X1900 GT, in the same way as with the X1800 GTO, which got rid of the R520 stock after paring it down. But while in the case of R520 they sometimes locked pipelines and ALUs on fully functional chips as well (to sell them at any cost), in the case of R580 they most likely used GPUs with defects that didn't prevent normal operation in the pared-down state. This was the reason for 36 pixel shader units instead of 48, 12 texture units instead of 16, and 12 ROPs instead of 16. The vertex pipelines were not touched, nor was the 256-bit bus. The PCB also generally remained the same. They just installed lower-capacity memory chips totalling 256 MB and changed the cooling to that of the X1800 XL.

The latter was rather questionable, because at 575 MHz even a pared-down core ran very hot, and that weak cooler could not cool it adequately without increasing fan speed and, therefore, noise. I think that was the reason why ATI decided to abruptly reduce the core clock rate and increase the memory clock rate. I personally was indignant about that totally inadequate decision, because one day the vendor claims that modern applications require more and more GPU power, so they are increasing the number of pixel shader units.
And the next day they easily underclock the X1900 GT by more than 60 MHz, promising that the increased memory clock rate will compensate. They contradict themselves, saying whatever is favorable at the moment and changing aims with ease. I personally believe that any significant clock rate change is a reason to change the card's name as well. You can't have the 575/1200 MHz and 512/1320 MHz cards named the same. But that's completely on their conscience. Besides, the X1900 GT is leaving the market anyway, making room for a more up-to-date and cheaper solution.

Indeed, let's remember, for instance, the GeForce 6800 on a pared-down NV40. It came at such a high cost! So, while moving to PCI-E, NVIDIA decided not to pare down the very expensive NV45 GPUs, but released the cheaper NV41/42 with fewer pipelines. Same here: the pared-down R580 is being replaced by RV570. Yes, it has the same 36 pixel shader units, 12 texture units, etc. Everything's the same. But the chip itself has a smaller footprint and is generally cheaper. The core clock rate is nearly the same: 580 MHz versus 575 MHz. Only the memory clock rate was raised, from 1200 MHz to 1400 MHz. The 1.4 ns memory is widely available now, so why not use it. Add to this a simpler PCB and you get a $199 solution that can please everyone. At the same time, its core supports all the innovations and DX9 technologies.

But why name the novelty "X1950 PRO", not "X1900 PRO"? It's less powerful than the X1900 XT, after all! Marketing specialists interfered and claimed that since there's already a "1950" product (X1950 XTX), there's no sense in releasing something with a lower index. It's just the same as with NVIDIA's GeForce 7950 GT, which is less powerful than the 7900 GTX. Everyone is trying to trick end users with numbering these days.

Now about the X1650 XT. Now we know why the old X1600 XT was renamed to X1650 PRO. It was done to free the XT suffix for the new mid-range favorite.
So the X1650 PRO and the X1650 XT are not the same processor working at different clock rates, as we have grown used to. They are absolutely different chips. The first chip (RV530) has 12 pixel shader units, 5 vertex units, 4 texture units, and the same number of ROPs. The second chip (RV560) contains 24 pixel shader units, 8 vertex units, 8 texture units, and 8 ROPs. That is, some characteristics are doubled. At the same time, the operating frequencies of the cards are practically the same: 590/1380 MHz in the X1650 PRO and 600/1400 MHz in the X1650 XT. The bus hasn't been changed either, so memory bandwidth remains practically the same, which may have a negative effect on performance in general.

There is obviously no need to design a new PCB; there are just some CrossFire modifications. Both cards, based on RV560 and RV570, now have connectors for special cables to build CrossFire. Read the details below. By the way, the GPU itself now supports CrossFire without any add-in cards or chips. It's one of the innovations.

Now let's take a closer look at the cards.
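To put numbers on the bandwidth remark above, here is a minimal sketch of the usual peak-bandwidth estimate (bus width in bytes multiplied by the effective memory clock). The memory clocks and pixel shader unit counts are the ones quoted in the text; the 128-bit bus of the X1650 cards is taken from the package description later in the article, and the function and table names are purely illustrative.

```python
# Theoretical peak memory bandwidth = (bus width in bytes) x (effective memory clock).
# Memory clocks are the effective figures quoted in the article.
# Assumption: both X1650 cards use a 128-bit bus (see the package description later on).


def peak_bandwidth_gbs(bus_width_bits: int, effective_clock_mhz: float) -> float:
    """Return theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_clock_mhz * 1e6 / 1e9


CARDS = {
    # name: (bus width in bits, effective memory clock in MHz, pixel shader units)
    "X1650 PRO (RV530)": (128, 1380, 12),
    "X1650 XT (RV560)": (128, 1400, 24),
    "X1900 GT (R580, original 575/1200 revision)": (256, 1200, 36),
    "X1950 PRO (RV570)": (256, 1400, 36),
}

if __name__ == "__main__":
    for name, (bus, clock, shaders) in CARDS.items():
        bw = peak_bandwidth_gbs(bus, clock)
        print(f"{name}: {bw:.2f} GB/s total, "
              f"{bw / shaders:.2f} GB/s per pixel shader unit")
```

Under these assumptions the X1650 XT gets about 22.4 GB/s against 22.08 GB/s for the X1650 PRO, while the share available to each pixel shader unit roughly halves. These are theoretical peaks only; real throughput depends on the memory controller and the workload.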

Video Cards

Obviously, both new cards use unique PCBs, though based on earlier designs.

The X1950 PRO's PCB is similar to the expensive and complex X1900-series PCB, but it is significantly simpler. Memory chips are rotated 90°, though they are still arranged in a semicircle. Considering the 256-bit bus and the high memory chip clock rate, this makes sense: you need to maintain an identical (or nearly identical) distance from the core to each memory chip. The RAGE Theater unit providing VIVO functionality is in its usual place. It features TV-in, which requires a special adapter. But the external power connector was moved to the center of the card's "tail". The power supply circuitry was also simplified and now has fewer analog elements. It became more "digital", so to say. Overclockers might like that.

Each card has a TV-Out with a unique interface, so for both S-Video and RCA output one needs a special adapter supplied in the bundle. You can read about the TV-Out in more detail here. As for MPEG2 playback features (DVD-Video), we analyzed this issue in 2002. Little has changed since that time. CPU load during video playback on all modern video cards does not exceed 25%.

Analog monitors with the d-Sub (VGA) interface are connected via special DVI-to-d-Sub adapters. Maximum resolutions and frequencies:

Now about the X1650 XT. Its PCB differs from the usual X1600/1650 cards in the CrossFire layout, as the RV560 supports this mode without installing a master card. Everything else (except for some nuances in the power circuits) is almost the same.

Let's pause on the CrossFire connection. Finally, you can buy two identical cards and install them just like NVIDIA's SLI. From the very beginning we said that [hard-to-buy] master cards would not live up to expectations and would only discredit the idea of multi-GPU operation. That is exactly what happened. Judging by Steam statistics, 99% of dual-card systems belong to SLI and only 1% to CrossFire.

We can see that the card design provides for either two usual adapters or one wide dual adapter. Why so many pins? SLI makes do with considerably fewer. The point is that SLI can transmit signals only one way at a time, while the new CrossFire is able to send them both ways simultaneously. Besides, the adapters are actually flexible cables, so the distance between the cards doesn't matter. SLI, by contrast, uses rigid adapters of fixed length (although they always ship with motherboards and therefore fit the distance between the two PCI-E x16 slots).

Now about the cooling systems. When I was writing this article, I didn't have reference samples in my possession, so I couldn't describe the reference coolers bound to be on the majority of such cards. But PowerColor equipped its products with something special.

You can clearly see that the X1950 PRO features a solution from the famous Arctic Cooling, which is both very effective and nearly noiseless.

So here's the result! :)

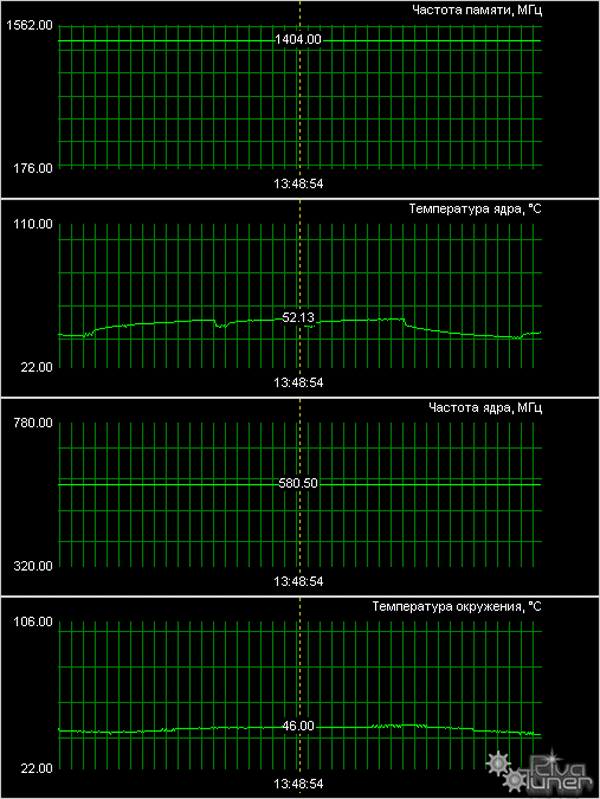

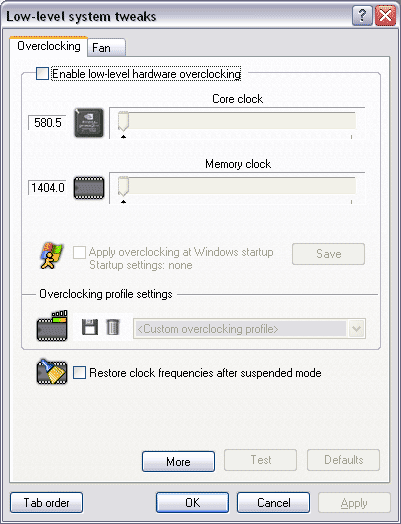

By the way, as you might have already guessed, the new beta of RivaTuner by A. Nikolaychuk AKA Unwinder already supports these cards.

Now for the cooler on the X1650 XT. It's also a third-party solution, offered by PowerColor engineers.

The device consists of a thick heatsink with a turbine that pumps the air in. It's also quiet.

As the core (in this case it's the only element to be cooled) does not get very hot, the temperature does not rise much even at the low rotational speed of the turbine.

Since we detached the coolers, let's have a look at the cores.

Note an interesting fact. Even though the chips have different packages, the dice are absolutely the same in form and size (both dice are 15x18 mm).

What is it? Why are the dice, made by the same process technology and featuring absolutely different characteristics, identical? Of course, we can assume it's the effect of various caches, etc. But I doubt it. I guess that RV560 and RV570 are physically THE SAME CHIPS! They use THE SAME DIE! Some of them are put into 128-bit FC-PGA packages, the others into 256-bit packages with protective frames. In the first case, they are pared down by 1/3 of the pixel units, etc. And no, unlocking the X1650 XT to the level of the X1950 PRO is out of the question! Different packages mean physically different buses. Besides, ATI is very skilled at locking various sectors of a die at the hardware level so that they can never be unlocked.

Since these are samples, there are no bundles or boxes to describe.
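The "pared down by 1/3" figure for the shared die can be checked against the unit counts quoted earlier in the article. The sketch below is only that arithmetic; the tuple layout and function name are illustrative, and the texture unit and ROP counts for RV570 are inferred from the statement that it matches the X1900 GT.

```python
from fractions import Fraction

# Unit counts as quoted in the article: (pixel shader units, texture units, ROPs).
R580_FULL = (48, 16, 16)
X1900_GT = (36, 12, 12)   # pared-down R580
RV570 = (36, 12, 12)      # X1950 PRO; assumed equal to X1900 GT per the text
RV560 = (24, 8, 8)        # X1650 XT


def disabled_fraction(full, cut):
    """Fraction of each unit type switched off when going from `full` to `cut`."""
    return [Fraction(f - c, f) for f, c in zip(full, cut)]


if __name__ == "__main__":
    print("R580 -> X1900 GT:", disabled_fraction(R580_FULL, X1900_GT))
    print("RV570 -> RV560:  ", disabled_fraction(RV570, RV560))
```

With these counts, the X1900 GT loses a quarter of each unit type relative to the full R580, and RV560 loses a third of each relative to RV570, which is consistent with the same-die guess but does not prove it.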

Installation and Drivers

Testbed configuration:

VSync is disabled.

Test results: performance comparison

We used the following test applications:

Summary performance diagrams

Note that we ran two test sessions: a single card and the SLI mode (two accelerators).

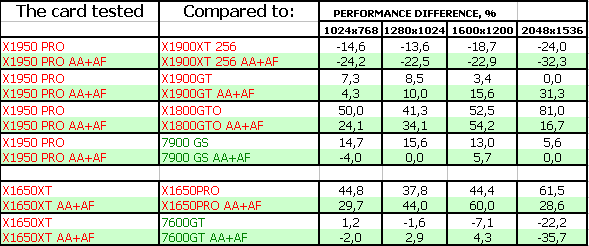

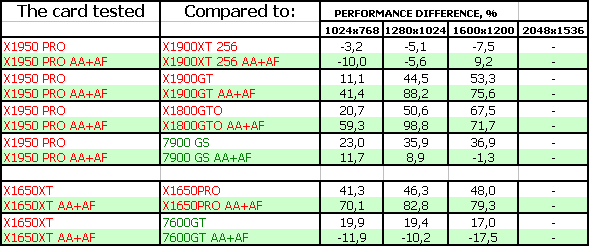

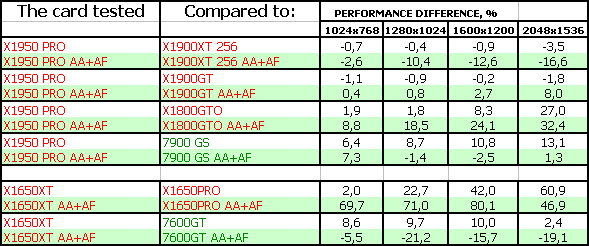

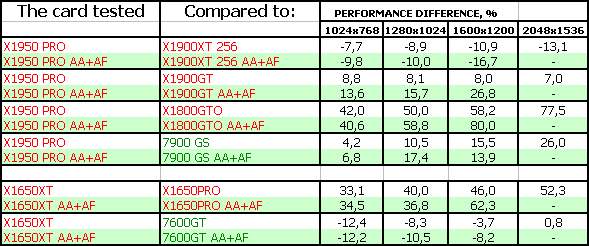

Game tests that heavily load vertex shaders, mixed pixel shaders 1.1 and 2.0, active multitexturing

FarCry, Research

First of all, you can see that the X1950 PRO outperformed the 7900 GS. It's also interesting that higher resolution in AA+AF mode increases its advantage over the X1900 GT. This can be explained only by the different memory bandwidths. The X1650 XT does not fare as well so far: it is outperformed, though its advantage over the X1650 PRO is impressive.

Game tests that heavily load vertex shaders, pixel shaders 2.0, active multitexturing

F.E.A.R.

The situation is similar to the previous one, but the X1650 XT and the 7600 GT are on a par (we can ignore the defeat in 2048x1536, as the cards' performance is too low to play). The X1950 PRO performs well.

Splinter Cell Chaos Theory

In this game the new products are victorious on all fronts. The RV560 wins only narrowly, and only in AA+AF mode, but it's still a victory.

Call Of Duty 2 DEMO

Considering that it's almost impossible to play this game in AA+AF mode with X1650-series cards, the new cards from ATI are again victorious.

Half-Life2: ixbt01 demo

In this game, on the contrary, AA+AF mode is quite playable even on mid-range cards, so the X1950 PRO and the X1650 XT are slightly outperformed. In the first case the defeat is insignificant, almost parity.

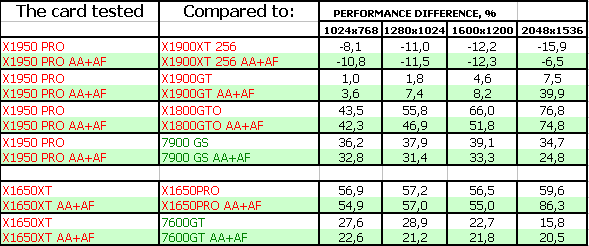

Game tests that heavily load pixel pipelines with texturing, active operations of the stencil buffer and shader units

DOOM III High mode

Chronicles of Riddick, demo 44

Naturally, OGL games radically favor NVIDIA cards. We have been seeing this for several years already. There is nothing more to add, except that the share of such games is relatively small.

Synthetic tests that heavily load shader units

3DMark05: MARKS

When 99% of the load falls on shader calculations, the new cards win unconditionally thanks to their large number of pixel shader units.

3DMark06: Shader 2.0 MARKS

Here the load is mixed with complex texturing, so the X1950 PRO's winning margin is lower. The X1650 XT is defeated.

3DMark06: Shader 3.0 MARKS

In this case the load on the shader units grew again, so the new cards were victorious, especially considering that the rival doesn't support HDR+AA.

Conclusions

ATI RADEON X1950 PRO 256MB PCI-E (RV570) (PowerColor) is a very good, even an excellent product! It lives up to all our expectations for its price. Add VIVO as a bonus. Of course, initial prices will exceed $200, but the GeForce 7900 GS wasn't initially sold for this price either. In other words, it's the best choice for this price tag. The card itself worked great without any problems.

ATI RADEON X1650 XT 256MB PCI-E (RV560) (PowerColor). This accelerator is better than its predecessor, but it goes head to head with the GeForce 7600 GT, so its price will be the main criterion here. However, we should note that ATI's 3D quality is higher, considering the better anisotropic filtering and the overall quality at default driver settings (we have already written about it). Performance of the card is evidently limited by memory bandwidth, which didn't grow much since the X1650 PRO, while the number of shader units and TMUs was doubled. I guess that if manufacturers equip these cards with faster memory or overclock it, performance will grow considerably. This product is generally very good for its price of $150.

The other parameters, such as operating stability and 2D quality, are all up to the mark.

You can find more detailed comparisons of various video cards in our 3Digest.

ATI RADEON X1300-1600-1800-1900 Reference

NVIDIA GeForce 7300-7600-7800-7900 Reference


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.