
A much cheaper and a tad slower 2900 XT

Almost two years have passed since ATI lost its image as the leading GPU manufacturer. The company then began to lose market share and ran into financial problems. It all ended in a merger with AMD, after which almost all top managers of the former ATI Technologies were fired. That is natural and unnatural at the same time. What a shame! The trademark was lost because of the talentless management of the former ATI.

Yes, the problems started with the launch of the X1xxx family in October 2005. While the mid-range solutions were quite acceptable (although these cards were in short supply), the top products were plainly weak. On top of that, the cards ran very hot and suffered from other problems. NVIDIA easily launched overclocked modifications of its high-end cards to counter ATI products that had been designed with so much difficulty. The age of ATI came to an inglorious end, and the nervous reactions of ATI Russia to any criticism of the RADEON X1xxx were solid proof of that. If only they had known how little time their office had left to remain independent... It's history now.

We sometimes receive e-mails from readers who wonder why we constantly criticize AMD, while NVIDIA seems to get more favorable treatment. First of all, only very inattentive readers can write that: our articles at the beginning of 2007 were critical of the Californian company and its terrible drivers. Secondly, if the HD family from AMD has real problems, why should we hush them up? And if the company, even as part of AMD, carries on with its ugly habit of poor planning (when there are occasionally no new products on the shelves, and partners are groaning from the lack of GPUs or cards), should we praise it? We don't think so. And so on. We will not repeat all our critical remarks about recent AMD products. But they are not lies. We criticize real blunders.
But the clouds parted and the sun shone on a new product (even if not for long). We were surprised to see a RADEON HD 2900 XT modification at $249 instead of $449. The GPU clock was reduced by 150 MHz, and the memory was left almost untouched. And voila: meet the 2900 PRO, which is cheaper by $200. It's clear even without tests that such a product can be extremely popular! However, the life cycle of this card is limited to just a couple of months, until the RV670 is released. And it won't bring much profit, as its production cost is similar to that of the 2900 XT.

This GPU is not cut down; only its clock rate is lower. I suspect that the clock is reduced artificially and on purpose. That is, these cards are probably based on ready 2900 XT cards, not on R600 rejects. Our sample worked well at the 2900 XT clock. So owners of the 2900 PRO might have a chance to get a 2900 XT (they should just try to raise the clock rate manually or flash the BIOS from the 2900 XT), and they will get it for $250.

I think there is no point in repeating that all these cards are manufactured at third-party plants to AMD's orders. So partners, including HIS, just buy ready 2900-series cards from AMD. In fact, vendors only add the trimmings: bundle, package, labels, and warranty for dealers or distributors.

Graphics card

It's clear at first sight that the card is a copy of the reference 2900 XT. Given what we said above, we shouldn't expect anything else.

Remember that the R600 requires a much more complex PCB than the R580 (X1950 XTX) because its memory bus is twice as wide. Nevertheless, the manufacturer tried to keep the card no longer than previous solutions and to equip it with a proper cooler of normal dimensions. Owing to very high power consumption (above 200 W), the card has two power connectors. One of them is an 8-pin connector (PCI-E 2.0) instead of the usual 6-pin one. There are presently no adapters for this connector. That's OK, because a usual 6-pin cable from a PSU can be plugged into it. The remaining two pins are responsible for unlocking overclocking: the driver checks whether these pins are powered, and if they are not, it blocks any attempts to raise frequencies.

The card has TV-Out with a proprietary connector. You will need a special adapter (usually bundled) to output video to a TV set via S-Video or RCA. TV-Out is covered in a separate article.

We should also note that all top cards from ATI are traditionally equipped with VIVO (including Video In, to convert analog video to digital form). Here this function is based on the RAGE Theater 200 instead of the traditional Theater.

We should mention full-fledged HDMI support. The card is equipped with its own audio codec, whose signal is routed to the DVI output. So a complete video and audio signal goes through a special bundled DVI-to-HDMI adapter to an HDMI receiver.

The card is equipped with a couple of DVI connectors. Dual-link DVI allows resolutions above 1600x1200 via the digital interface. Analog monitors with a D-Sub (VGA) interface are connected through special DVI-to-D-Sub adapters.

Maximum resolutions and frequencies:

We will not repeat our description of the cooling system for the third time; it is published in the baseline article. Since CATALYST 7.8, the cooler is not noisy in 2D mode: its speed was reduced, which had a positive effect on the noise level of the system unit. However, the airflow in 3D mode is still intense.

But there are some changes as well: a third heat pipe has been added to channel heat from the base to the heatsink. It made the cooling system a tad more efficient. We cannot really say that these modified coolers (their exterior does not differ from the previous modification) will be used in the 2900 PRO only. They may already be used in the 2900 XT, but we haven't seen them.

The baseline article mentioned that the cooler usually works at 37% of the fan's potential. However, the noise is noticeable even in this mode. The core temperature does not exceed 80°C. This card dissipates a tad less heat than the 2900 XT. You can control fan speed in large steps only: it's either 31% or 37% (all new versions of RivaTuner can do that). Hence the conclusion that 37% is the required minimum that helps avoid GPU overheating. You must not reduce the speed below this limit. That is, the engineers were not playing it safe here; they determined the real minimum speed for 3D mode that avoids overheating. And this minimum is not quiet. That's a big problem of the new cards. Here is a little consolation: the noise is generated by the airflow, not by the fan, so its frequency is not very irritating.

As this card is actually a modification of the 2900 XT, its package and bundle (DVI-to-VGA, DVI-to-HDMI, and VIVO adapters, TV cords, CDs with drivers and demos, a STEAM coupon) are identical to those described in the article about the similar HIS 2900 XT card.

Installation and Drivers

Testbed configuration:

Test results: performance comparison

We used the following test applications:

Graphics card performance

If you are familiar with 3D graphics, you will understand our diagrams and draw your own conclusions. However, if you are interested in our comments on test results, you may read them after each test. Anything that is important to beginners and those who are new to the world of video cards will be explained in detail in the comments.

First of all, you should look through our reference materials on modern graphics cards and their GPUs. Be sure to note the operating frequencies, support for modern technologies (shaders), and the pipeline architecture.

ATI RADEON X1300-1600-1800-1900 Reference
NVIDIA GeForce 7300-7600-7800-7900 Reference

Secondly, if you have only just begun to realize how large the selection of video cards is, don't worry: our 3D Graphics section offers articles about 3D basics (you will still have to understand them, because when you run a game and open its options, you will see such notions as textures, lighting, etc.) as well as reviews of new products. There are presently two companies that manufacture popular GPUs: AMD (its ATI department is responsible for graphics cards) and NVIDIA. We can also mention Matrox and S3. However, their share of the discrete graphics market is less than 1%, so we can disregard them. So most of the information is divided into these two sections. We also publish monthly 3Digests that sum up all comparisons of graphics cards for various price segments. The February 2007 issue analyzed the dependence of modern graphics cards on processors without antialiasing and anisotropic filtering. The March 2007 issue did the same with AA and AF.

Thirdly, have a look at the test results. We are not going to analyze each test in this article, primarily because it makes more sense to draw a bottom line at the end of the article. We will, however, make our readers aware of any special circumstances or extraordinary results.

S.T.A.L.K.E.R.
World In Conflict (beta), DX10, Vista

Splinter Cell Chaos Theory (HDR)

Call Of Juarez

Company Of Heroes

AA stopped working in this game with all AMD graphics cards starting from driver version 7.8. So in this case AA+AF for the 2900 = AF only!

Serious Sam II (HDR)

Prey

3DMark05: MARKS

3DMark06: SHADER 2.0 MARKS

3DMark06: SHADER 3.0 MARKS

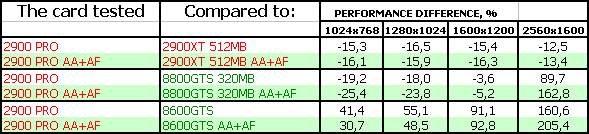

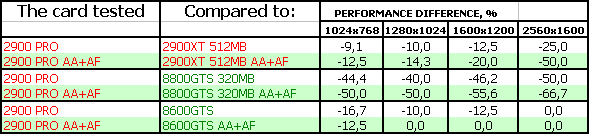

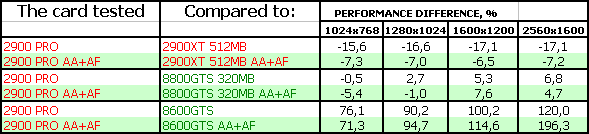

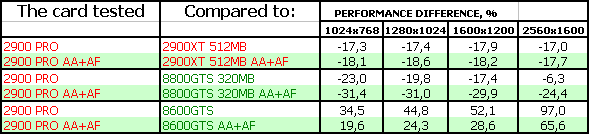

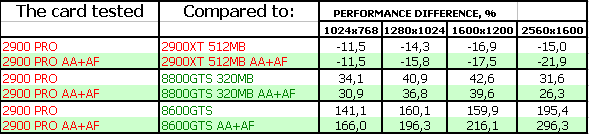

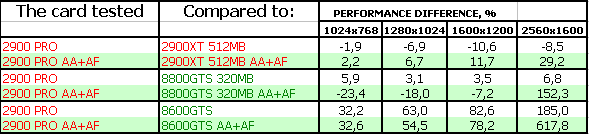

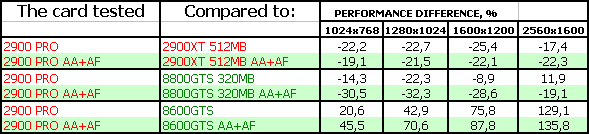

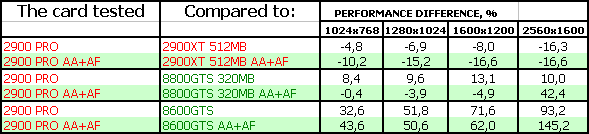

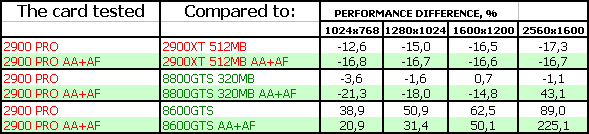

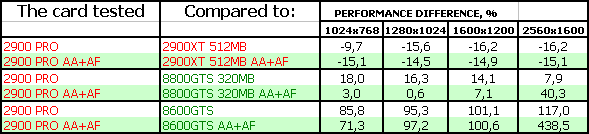

Conclusions

This situation is more difficult to analyze than usual, because NVIDIA does not offer anything in the $250 segment. The 8800 GTS 320MB is more expensive, to say nothing of the 640 MB product. The 8600 GTS is cheaper. So we have compared the card under review with both competitors. If the 2900 PRO performs somewhere in between the 8600 GTS and the 8800 GTS (that is, the red curve on the diagrams lies between the two light-green curves), that's a good result for the new product. To say nothing of situations where the 2900 PRO may demonstrate higher results.

According to our tests, the 2900 PRO is outperformed by its elder brother in direct proportion to the difference in clock rates, because the memory frequencies differ very little. But there are some exceptions. We have noticed some driver optimizations for the 2900 PRO. For example, in the notorious AA mode, where the 2900 XT does not perform well, the 2900 PRO demonstrates better results and sometimes even outperforms the more expensive card. Yep, GPU errors fixed in drivers may give such surprises. Another exception: AA does not work in CoH, so the performance drop caused by AF alone is minimal or non-existent there.

As for the main question (competition with NVIDIA), the 2900 cards perform weakly only in WiC and sometimes in STALKER. OK, it's a painful blow to the image of this DX10 card, which copes with such a modern game no better than a mid-range card. We'll see what happens in other DX10 tests, which will be released soon.

The HIS RADEON HD 2900 PRO 512MB PCI-E produces a very nice impression owing to its low price. Indeed, $250 for such a product makes you forget about many problems, because this card is better than mid-range cards of the previous and current generations anyway. Remember that the card works well at the 2900 XT frequencies, so it's actually a downclocked 2900 XT. Let's hope that the driver problems will be fixed soon. The value of this product fades, of course, if the price is raised too high.
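The observation that the 2900 PRO trails the 2900 XT in proportion to the clock difference can be turned into a quick sanity check for any single benchmark. The sketch below is only an illustration under a simple assumption (performance scales linearly with the GPU core clock and the near-identical memory clock is ignored). The function names are hypothetical, and the 750 MHz base clock and the frame rates in the usage lines are placeholders chosen for round numbers, not figures from this review. Only the 150 MHz reduction comes from the text.

```python
def clock_scaling_estimate(xt_clock_mhz: float, clock_cut_mhz: float = 150.0) -> float:
    """Expected PRO performance as a fraction of the XT's.

    Assumes performance is directly proportional to the GPU core clock.
    xt_clock_mhz is supplied by the caller; it is not taken from the article.
    """
    if xt_clock_mhz <= clock_cut_mhz:
        raise ValueError("XT clock must be larger than the clock reduction")
    return (xt_clock_mhz - clock_cut_mhz) / xt_clock_mhz


def deviation_from_estimate(pro_fps: float, xt_fps: float, expected_ratio: float) -> float:
    """Measured PRO/XT ratio minus the clock-based estimate.

    A clearly positive value means the PRO does better than the clock
    difference alone would suggest (for example, thanks to driver changes).
    """
    if xt_fps <= 0:
        raise ValueError("XT frame rate must be positive")
    return pro_fps / xt_fps - expected_ratio


# Placeholder inputs, for illustration only.
expected = clock_scaling_estimate(750.0)                  # 600 / 750
in_line = deviation_from_estimate(48.0, 60.0, expected)   # PRO matches the estimate
outlier = deviation_from_estimate(54.0, 60.0, expected)   # PRO beats the estimate
print(f"expected ratio: {expected:.2f}")
print(f"deviation (in line): {in_line:+.2f}, deviation (outlier): {outlier:+.2f}")
```

A test where the deviation is close to zero fits the proportional rule. A clearly positive deviation marks the kind of exception described above, such as the AA modes where the PRO comes close to or overtakes the XT.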
As always, the final choice is up to the reader. We can only inform you about products and their performance, but we cannot make the buying decision for you. In our opinion, that should be solely in the hands of the reader, and possibly their budget. ;)

And another thing that we never tire of repeating from article to article. Having decided to choose a graphics card by yourself, you have to realize that you are about to change one of the fundamental PC parts, which might require additional tuning to improve performance or enable certain quality features. This is not a finished product but a component. So you must understand that in order to get the most from a new graphics card, you will have to acquire some basic knowledge of 3D graphics and of graphics in general. If you are not ready for this, you should not perform upgrades by yourself. In that case it would be better to purchase a prebuilt system with preinstalled software (along with the vendor's technical support) or a gaming console that doesn't require any adjustments.

To find more information regarding the current graphics card market and the performance of various cards, feel free to read our monthly special 3Digest.

Andrey Vorobiev (anvakams@ixbt.com)

October 23, 2007

Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.