
Part 3: Performance in Game Tests


Our mood after the ATI maritime party, when we could take a picture of the RADEON X1800XT under a palm tree or on the seashore, is becoming more and more business-like; jokes and lyricism fade into the background. ATI has recently published its quarterly financial results, reporting a $100 million loss, so the launch of the new series of accelerators gives the company hope for recovery (discrete graphics accounts for a very large share of ATI's revenue). Will the new products live up to hopes and expectations? Judging from the first part of our article, on theory and architecture, it is quite possible. Part II of our X1000 saga confirmed that we can expect many interesting things from the new products. However, it also gave some cause for concern, considering that the new products will initially be offered at sky-high prices. The company will have to drop prices again to promote its products, which will certainly have a negative effect on revenues. But we still feel optimistic. Who knows, perhaps Christmas sales will reach mass-market volumes.

The main part of this series, however, is published in this article: game tests. Here we can estimate the probability of success or failure. We still cannot be 100% sure, though, as the quality aspect is gaining importance in modern 3D graphics: how well old and new 3D functions work, what glitches reveal themselves in games, and so on. We shall cover this issue later, in the fourth part.

Test results: performance comparison

We used the following test applications:

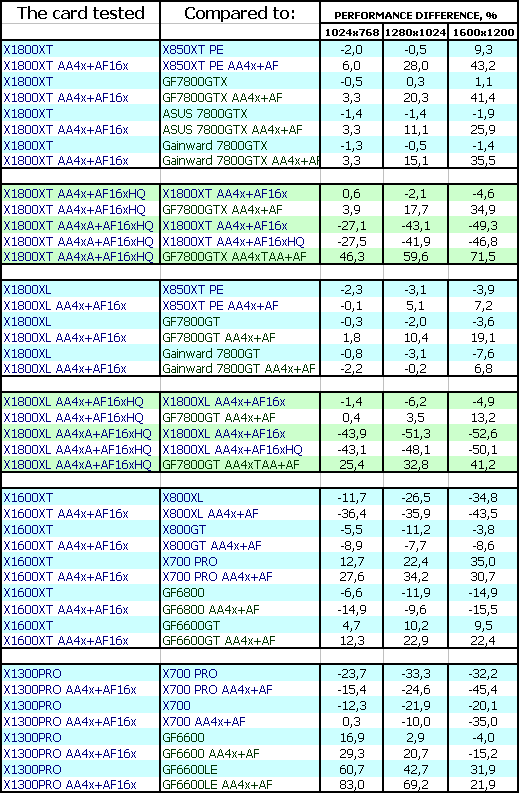

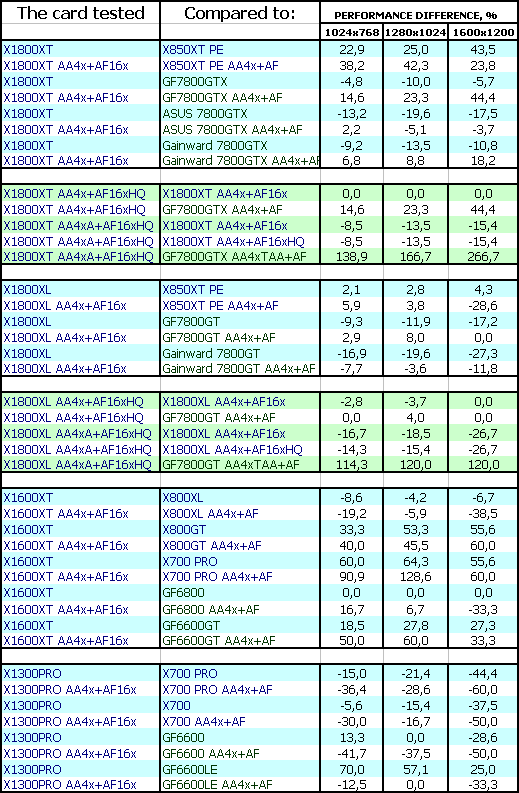

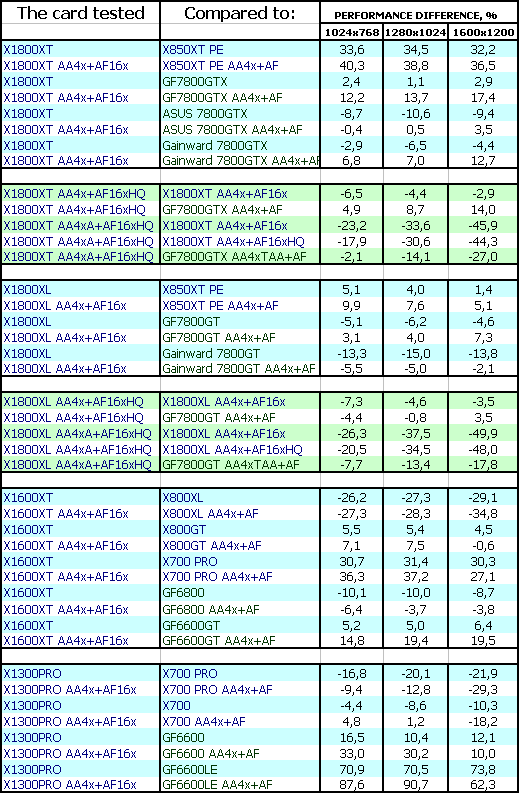

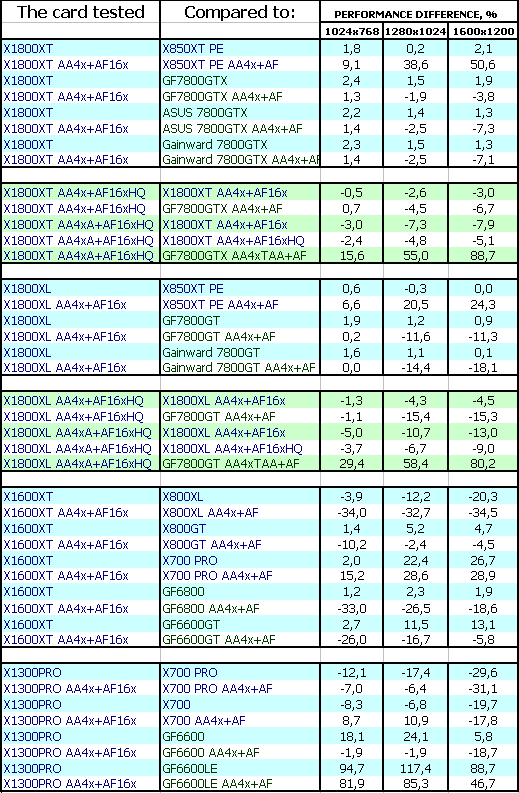

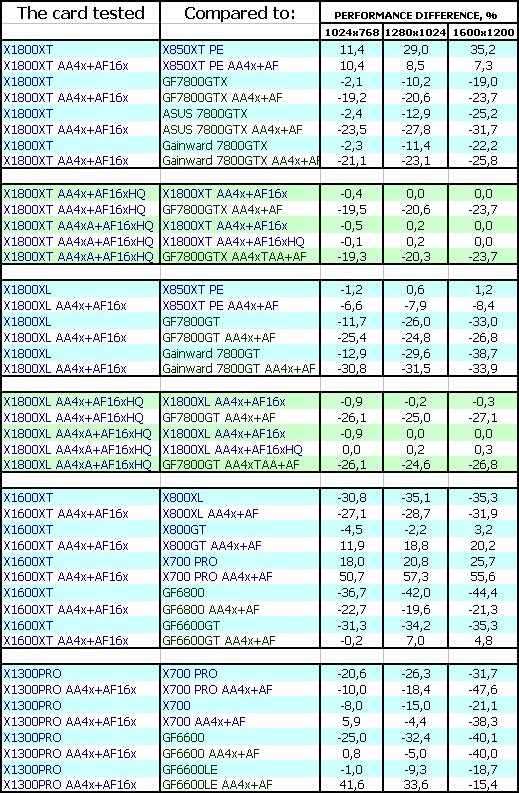

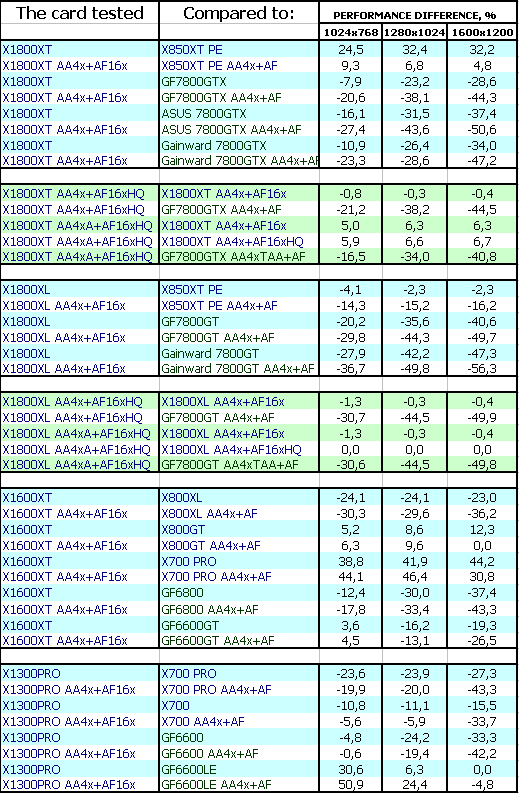

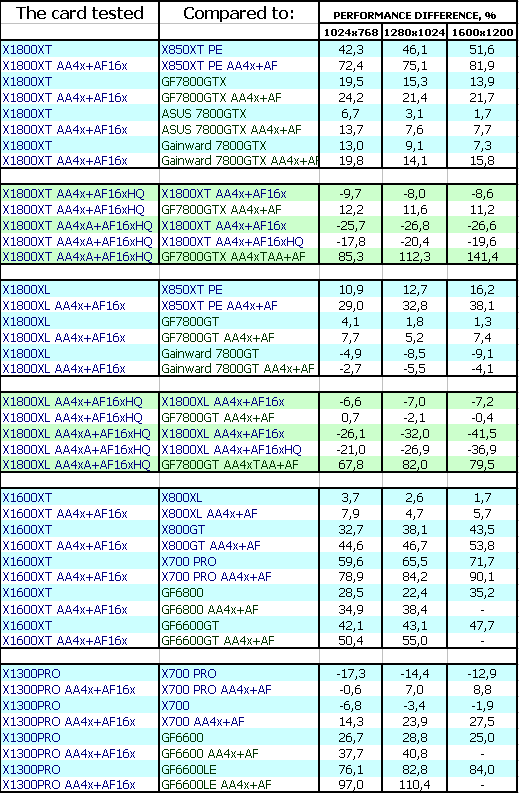

Considering the capacity of the latest generation of accelerators, performance analysis should be carried out only under AA+AF load. Otherwise, it would be a competition between processors or whole systems rather than video cards. Of course, in heavy applications, where powerful video cards demonstrate mediocre performance even without AA+AF, we shall analyze those modes as well.

Game tests that heavily load vertex shaders, mixed pixel shaders 1.1 and 2.0, active multitexturing

FarCry, Research

It goes without saying that the X1800XT is victorious everywhere in this case, though the AF HQ and AAA modes cost it dearly. However, the NVIDIA 7800GTX dropped even lower because of a glitch in its drivers (TAA+GC+AF). The X1800XL also takes the lead (considering modes with AA+AF); only the overclocked 7800GT managed to reach parity with the new product from ATI.

Because of its controversial architecture, the X1600XT managed to outperform only the 6600GT. The 6800 turned out faster, even though it is close in price to the new product. At the same time, the X700 PRO turned out much weaker than the X1600XT. Only the "king for a day" X800GT demonstrated higher speed than the new product from ATI.

The X1300 PRO is victorious not only over the 6600LE (which is much cheaper); the 6600 is left behind as well. Everything will come down to the price.

In order to highlight the above-mentioned architectural differences, let's digress to the synthetic tests. Let's see the architectural difference between the R420 and the R520 under equal conditions (16 pipelines in both cases, equalized core and memory clocks). Besides, let's add the G70, overclocked to 486 MHz (close to 500 MHz), which demonstrates the potential of this chip in an Ultra modification (if it is ever launched). Here are two synthetic tests - fillrate and geometry performance:

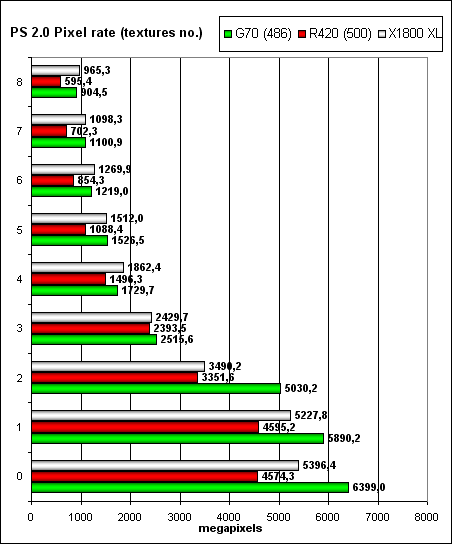

You can clearly see that NVIDIA's 24 pipelines defeat ATI, even despite the advanced pixel path of the R520 in its XL modification. There is one exception: faster memory in the XT card provides a noticeable advantage in fillrate. The R420 is less efficient, especially in the case of a single texture (an effect of the difference in frame buffer compression). Now let's proceed to geometry performance:

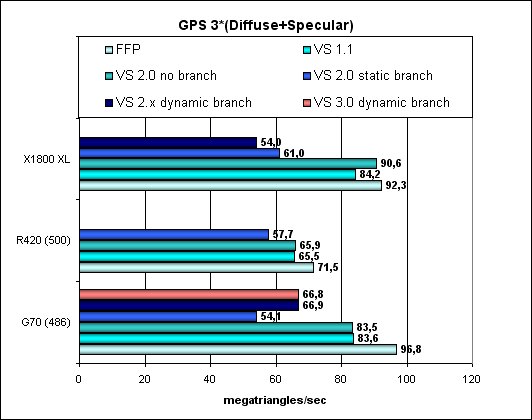
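As a rough cross-check of the two synthetic charts, one can multiply unit counts by core clocks. The sketch below is only an illustration: it uses the unit counts and clocks quoted in this article (24 and 16 pixel pipelines; 8, 8 and 6 vertex units; 486 MHz and 500 MHz), assumes one operation per unit per clock, and ignores memory bandwidth and everything else a real test depends on. The function and dictionary names are hypothetical.

```python
# First-order estimate only: peak rate = number of units * core clock.
# Assumption (not from the article): every unit completes one operation per clock.

def peak_rate_mops(units: int, core_mhz: float) -> float:
    """Return a theoretical peak in millions of operations per second."""
    return units * core_mhz


# Configurations as described for the article's synthetic comparison.
CHIPS = {
    "G70 @ 486 MHz": {"pixel_pipes": 24, "vertex_units": 8, "core_mhz": 486},
    "R520 @ 500 MHz": {"pixel_pipes": 16, "vertex_units": 8, "core_mhz": 500},
    "R420 @ 500 MHz": {"pixel_pipes": 16, "vertex_units": 6, "core_mhz": 500},
}


def summarize(chips: dict) -> dict:
    """Map each chip name to (pixel peak, vertex peak) in Mops/s."""
    return {
        name: (
            peak_rate_mops(c["pixel_pipes"], c["core_mhz"]),
            peak_rate_mops(c["vertex_units"], c["core_mhz"]),
        )
        for name, c in chips.items()
    }


if __name__ == "__main__":
    for name, (pixel, vertex) in summarize(CHIPS).items():
        print(f"{name}: pixel {pixel:.0f} Mops/s, vertex {vertex:.0f} Mops/s")
```

Under these simplifying assumptions the paper peaks are 11664 vs 8000 for the pixel pipelines and 3888 vs 4000 vs 3000 for the vertex units. Measured results depend on far more than these products, so the numbers serve only as a sanity check against the charts.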

NVIDIA has 8 vertex units here, like the R520, while the R420 has only 6, and the difference is easy to see. You can also see that NVIDIA's vertex units are no worse; to be more exact, they are a tad better, given that the core clock is 486 MHz instead of 500 MHz. Now we have clearly seen the reasons for the game results described above. Let's get back to Far Cry.

The game depends heavily on the processor, so the advantages of the new architecture are somewhat neutralized. Still, gaining an extra 15-18 percent over the X850XT at equal frequencies is not bad.

Game tests that heavily load vertex shaders, pixel shaders 2.0, active multitexturing

F.E.A.R. (MP)

The X1800XT defeats the reference 7800GTX. It can even compete easily with the overclocked 7800GTX represented by a card from Gainward. But the heavily overclocked 7800GTX (ASUS) snatches the victory. Enabling high-quality anisotropy slows performance down a little, but not critically. Adaptive AA works, but its quality leaves much to be desired. NVIDIA has a different problem: when TAA (in MSAA mode) and gamma correction in AA are activated simultaneously, performance drops too low (SSAA kicks in). This is a problem of Drivers 81.84, as we have never experienced it before. That is the programmers' fault, and it explains the current situation.

The X1800XL fares a tad better than the previous flagship, the X850XT PE. However, there is parity between this card and the 7800GT (if we take AA+AF modes into account). It is even defeated by the faster 7800GT from Gainward.

On the one hand, the X1600XT heavily outperforms the cheaper X800GT and X700PRO, which should have happened anyway. On the other hand, despite the brilliant victory over the 6600GT, it is outperformed by the 6800, whose price is currently on a level with the X1600XT. So the situation is controversial, and there is an element of uncertainty.

The X1300 PRO outperformed only the cheapest 6600LE; the other cards beat it. And the new card is much more expensive than the 6600LE.

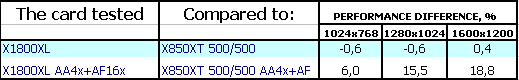

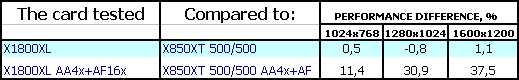

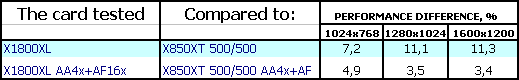

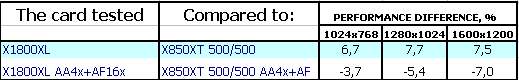

What we said above about the X1800XL outperforming the X850XT PE is backed up by the fact that the architecture of the new product is more advanced. We get an extra 20-25% of performance at equal frequencies and with the same number of pixel pipelines (two extra vertex processors would hardly have provided such a gain).
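For readers who want to reproduce this kind of equal-clock comparison on their own results, here is a minimal sketch of the percentage calculation. The frame rates in it are hypothetical placeholders, not measurements from this review, and the function name is ours.

```python
def relative_gain_percent(new_fps: float, old_fps: float) -> float:
    """Percentage by which new_fps exceeds old_fps (negative if it is slower)."""
    if old_fps <= 0:
        raise ValueError("old_fps must be positive")
    return (new_fps / old_fps - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical example values, NOT taken from the charts in this article.
    example_old_fps = 40.0  # older architecture at a given core/memory clock
    example_new_fps = 49.0  # newer architecture at the same clocks
    gain = relative_gain_percent(example_new_fps, example_old_fps)
    print(f"Gain at equal clocks: {gain:.1f}%")
```

Because both cards run at the same frequencies and have the same number of pixel pipelines, whatever gain this calculation shows can be attributed mostly to the architecture rather than to clock speed.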

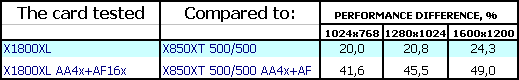

Splinter Cell Chaos Theory

The X1800XT is victorious here as well. Even the overclocked 7800GTX cards failed to overcome the new flagship from the Canadian company.

The situation with the X1800XL is not as rosy. The new card loses everywhere without AA+AF; in heavy modes it all but snatches the victory from the 7800GT, but only if the 7800GT runs at its standard frequencies.

The X1600XT demonstrates a picture similar to the previous case: it is outperformed by the 6800, but it defeats the 6600GT (which is easily explained by the difference between their memory buses and by the very high core clock).

The X1300PRO is doing quite well: this card outperforms the GF6600, to say nothing of the 6600LE. But the X700, offered at the same price level, turns out faster. The price of this product should be reduced as well. I can tell you that manufacturing 4-pipeline chips at the end of 2005 and trying to sell them for more than $100 is a sacrilege. The proof is the remaining piles of 9800 and 9700 PRO cards, and even the X700 at a reduced price - all of them are 8-pipeline products below $100 (or $150 for the 9800 due to its 256-bit bus).

We can see again that the improved R520 architecture pays dividends (+15-17%) versus the X850XT running at the same frequencies.

Half-Life2: ixbt01 demo

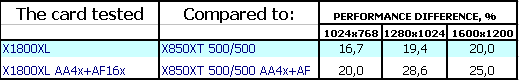

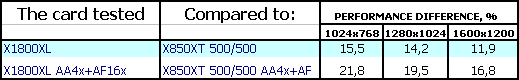

This game depends heavily on the processor and is rather easy for modern 3D flagships; even the heaviest modes cannot load them fully. That is why we are witnessing approximate parity between the current leaders, the X1800XT and the 7800GTX. But the X1800XL lost the battle: the 7800GT turns out faster even at nominal frequencies. The situation with the X1600XT is gloomy as well. It outperforms only the X700 PRO, which is already below $200. The X1300PRO also managed to overcome only the weakest card, the 6600LE, which is much cheaper. Alas, that is the limit of its capabilities.

Comparison with the X850XT at 500/500 MHz still demonstrates the pronounced advantage of the new architecture.

Game tests that heavily load pixel pipelines with texturing, active operations of the stencil buffer and shader units

DOOM III High mode

Chronicles of Riddick, demo 44

Alas, no matter how bright our hopes were that the shadow bottlenecks had been removed and the OpenGL driver improved, they were all in vain. There is no point in reviewing the results card by card - the entire line from ATI is utterly defeated. Alas!

Synthetic tests that heavily load shader units

3DMark05: MARKS

This is where everything is magical and rosy, and you need not even ask where: in 3DMark, of course. Let the cards work so-so in games, let them have bugs when various modes are activated, but everything is perfectly streamlined for 3DMark! The results are self-explanatory: if the new cards had won such victories in games as well, we would not have questioned their prices, and we would not have advised dropping them.

It goes without saying that the optimizations of the new architecture look much better in this test as well.

Performance conclusions

The most controversial product is the ATI RADEON X1600 XT. My impressions are ambivalent. On the one hand, it obviously fares on a level with the GeForce 6600GT (sometimes a tad better), but that card is already below $200 and approaching the $150 mark, while the X1600 XT is offered at $200, or even $250 for the 256 MB modification. Too expensive. On the other hand, it is equipped with very expensive memory, operating at a huge frequency for a mid-range card - 1380 MHz. Besides, the 590 MHz core is not a cheap die. Is there any room for price reduction in such cards? I doubt it. The product is too "tight" (the frequencies are squeezed to the maximum). Besides, the architecture is unbalanced, as we have already written: 12 shader pipelines and just 4 texture units. If the Canadian company hopes that all games will be similar to TR:AoD, which uses shaders and a couple of simple textures and where its products will be up to the mark, it is mistaken. Texture units have plenty of work to do! They should not be slimmed down like this.

The ATI RADEON X1800XT is the new flagship. Can we call it the king of 3D graphics? No, I guess we can't. You can clearly see that it shares first place with the 7800GTX, considering that the market is full of overclocked cards based on that model. But it is surely one of the leaders. The situation with the NV40/R420 repeats itself: again we have two leaders, and the choice comes down to your religion. Both products, from ATI and from NVIDIA, fully support all the new DirectX 9.0c features. (Somebody will definitely dislike the word "fully", being of the opinion that this is not quite the case with the Canadians, but according to Microsoft they offer full-fledged support. NVIDIA is not doing perfectly either: as the two chips have different FP16 capabilities, chances are high that game developers will have to be careful with both of them.) I hope the prices will not be set too high initially, considering that 7800GTX prices have already dropped significantly.

The ATI RADEON X1800XL is the younger brother of the flagship, which closes the High-End sector at the bottom. It obviously competes with the GeForce 7800GT in price, but its performance is controversial. So the choice will again come down to your sympathies (religion), provided that the prices for the new product are not set too high.

The ATI RADEON X1300 PRO is approximately on a level with the GeForce 6600, sometimes higher, sometimes lower... But again its characteristics are "tight": it is equipped with expensive DDR2 memory (have you ever seen 2.5 ns memory on a $100 video card?). However, this product obviously does not qualify for $150, hence the controversial impressions.
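To put numbers on the X1600 XT balance argument above, here is a small sketch that derives a few paper figures from the specifications quoted in the conclusions (12 shader pipelines, 4 texture units, 590 MHz core, 1380 MHz memory). Several things in it are our assumptions and not statements from this article: that each texture unit delivers one texel per clock, that 1380 MHz is the effective data rate, and that the memory bus is 128 bits wide. The class and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CardSpec:
    shader_pipes: int
    texture_units: int
    core_mhz: float
    memory_mhz_effective: float  # assumed to be the effective (DDR) data rate
    bus_width_bits: int          # assumption: not stated in the article

    def shader_to_texture_ratio(self) -> float:
        """How many shader pipelines share one texture unit."""
        return self.shader_pipes / self.texture_units

    def peak_texel_rate_mtexels(self) -> float:
        """Paper texel rate, assuming one texel per texture unit per clock."""
        return self.texture_units * self.core_mhz

    def peak_bandwidth_gb_s(self) -> float:
        """Paper memory bandwidth in GB/s (1 GB = 1e9 bytes)."""
        bytes_per_transfer = self.bus_width_bits / 8
        return self.memory_mhz_effective * 1e6 * bytes_per_transfer / 1e9


# Figures from the conclusions above; the bus width is our assumption.
X1600XT = CardSpec(shader_pipes=12, texture_units=4, core_mhz=590,
                   memory_mhz_effective=1380, bus_width_bits=128)

if __name__ == "__main__":
    print(f"Shader:texture ratio  {X1600XT.shader_to_texture_ratio():.1f}:1")
    print(f"Peak texel rate       {X1600XT.peak_texel_rate_mtexels():.0f} Mtexels/s")
    print(f"Peak memory bandwidth {X1600XT.peak_bandwidth_gb_s():.2f} GB/s")
```

The 3:1 ratio is the point of the argument: however high the core clock, only four units fetch textures, so texture-heavy games cannot benefit from the twelve shader pipelines to the same degree as shader-heavy ones.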

On the whole, I can say the following: though not without problems, and though not that brilliant, the new line has finally been launched and will soon hit the stores. Then we shall learn the real market prices, which will rank the products and determine the popularity of the new cards. At least there is hope. These are not final conclusions, as we have yet to analyze the other aspects of the new accelerators.

You can find more detailed comparisons of various video cards in our 3Digest.

Theoretical materials and reviews of video cards, which concern functional properties of the GPU ATI RADEON X800 (R420)/X850 (R480)/X700 (RV410) and NVIDIA GeForce 6800 (NV40/45)/6600 (NV43)


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.