
Hi-End video cards are traditionally much more interesting to review, because they are the engine of this industry: they either promote new technologies or expand the performance horizon with increasingly heavy AA and AF modes at high resolutions. But from the point of view of a regular consumer they have a major drawback: a very high price. That's why many users read such reviews only out of curiosity, or just to dream a little about buying such a device in the distant future.

Why do reviewers pay so little attention to budget models (priced below $100)? Only because they are boring, because they are simply halves, quarters, or eighths of Hi-End models? Not just that, and it's not even the main reason. The fact is that new cards below $100 are usually on a par with former Middle-End cards that have become cheaper. That segment is overcrowded as well! Judge for yourself: there were the GeForce 6600 and 6600 GT. Now we have the 7300 GS, 7300 GT, and 7600 GS (which are steadily going down to the sacred $100 mark). These are just modifications of THE SAME CARD that come from different production lines and offer different frequencies! They bring essentially nothing new in 3D. Yes, they offer improvements, they feature Dual Link DVI, etc. But that's not the point.

Manufacturers understand, as do we, that as the process technology gets finer, opportunities appear to raise core clocks and to equip budget cards with unprecedentedly fast local memory, which keeps getting cheaper. Hence all those new numbers in card names. It pays off for manufacturers. But do users benefit from it as well? They are already confused by the multitude of solutions, each one claimed to be the best. Here is a question right off the bat: which card is better, the GeForce 7300 GT or the GeForce 6600 GT? The latter is even more expensive. It's impossible to answer without analyzing their architectures, frequencies, and test results.
So, as there is some lull in launches of new expensive solutions, we should look at budget cards, which can be very advantageous in terms of price/performance. So, the GeForce 7300 GT. We have already reviewed the GeForce 7300 GS: its core contains only four pixel shader units, it operates at 550 MHz, and it has a 64-bit memory bus. Logically, you would expect the 7300 GT to be an overclocked modification of the 7300 GS, with the manufacturer raising frequencies and equipping it with a 128-bit bus. Nothing of the kind. The gap is even larger! The 7300 GT is not based on G72, the small core of the GeForce 7300 GS, but on G73, the chip used in the GeForce 7600 series. Its GPU is cut down from 12 to 8 pixel units, and it loses one vertex unit. That is 8 and 4, instead of 12 and 5 in the 7600 GT/GS. I don't know why this card is not called 7600 LE or XT (in NVIDIA's old fashion). But the fact remains: the difference between the 7300 GS and the GT is huge. Well, it's not twofold, but close to it: the reference 7300 GT operates at 350/666 MHz, while the core clock of the 7300 GS is 550 MHz.

Ironically, the first 7300 GT in our lab is a Galaxy card, whose frequencies differ greatly from those of the reference card. It must be noted that the GeForce 7300 GT comes in TWO modifications: with DDR2 and with GDDR3 memory. I repeat, you must always CHECK what memory is USED in such a card when you buy it. If it's DDR2, you'll get low frequencies (350/666 MHz). If it's GDDR3, frequencies may reach 700 (1400!!!) MHz for memory and up to 500 MHz for the core. Fancy that! The cards have the same name, but their frequencies differ like heaven and earth, like the 6600 and the 6600 GT. Again we see this ugly situation, when customers are taken for fools and different cards are sold under the same name. That's too bad. We'll analyze other 7300 GT cards as well, to get a general idea of such "floating frequencies".
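
To put the figures above side by side, here is a rough sketch in Python. It multiplies pixel units by core clock to get a theoretical peak, and derives memory bandwidth from bus width and effective memory clock. The unit counts and clocks are the ones quoted above; the 128-bit bus for the 7300 GT is an assumption that this review does not state, one pixel per unit per clock is a simplification, and the function names are purely illustrative.

```python
def pixel_rate_mpix(pixel_units: int, core_mhz: float) -> float:
    """Theoretical peak in Mpixels/s, assuming one pixel per unit per clock."""
    return pixel_units * core_mhz


def memory_bandwidth_gbs(bus_bits: int, effective_mhz: float) -> float:
    """Peak memory bandwidth in GB/s (decimal) for a given bus width and effective clock."""
    bytes_per_transfer = bus_bits / 8
    return bytes_per_transfer * effective_mhz * 1e6 / 1e9


# (pixel units, core clock in MHz), as quoted in the review
cards = {
    "7300 GS": (4, 550),
    "7300 GT, DDR2 (reference)": (8, 350),
    "7300 GT, GDDR3 (up to)": (8, 500),
}
rates = {name: pixel_rate_mpix(units, mhz) for name, (units, mhz) in cards.items()}

# ASSUMPTION: 128-bit bus on the 7300 GT; not stated in the review.
ASSUMED_GT_BUS_BITS = 128
ddr2_bandwidth = memory_bandwidth_gbs(ASSUMED_GT_BUS_BITS, 666)
gddr3_bandwidth = memory_bandwidth_gbs(ASSUMED_GT_BUS_BITS, 1400)

for name, rate in rates.items():
    print(f"{name}: {rate:.0f} Mpix/s")
print(f"DDR2 variant:  {ddr2_bandwidth:.1f} GB/s")
print(f"GDDR3 variant: {gddr3_bandwidth:.1f} GB/s")
```

If the bus-width assumption holds, the two cards sold under the same 7300 GT name differ by roughly a factor of two in memory bandwidth alone, which is the "floating frequencies" problem in numbers.
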
Of course, this product and its frequencies may be put down to Galaxy and its own initiative. But I think that NVIDIA must not hush such things up. I can understand a difference of 20-30 MHz: it's fashionable to manufacture overclocked cards (Golden-something or Extreme-to-the-skies products). But it's too much when the difference between the reference card (350 MHz) and the production-line product (500 MHz) reaches 150 MHz. Let manufacturers use different names and not confuse our readers. Yes, a label on the box may say that the card is equipped with GDDR3, but it does not answer the question of what the frequencies are and how much they are raised above the nominal values. Plenty of such products are sold as OEM, where you cannot find out the frequencies or the memory type of a card.

So, let's proceed to the examination of the GeForce 7300 GT from Galaxy, equipped with GDDR3 memory and running at frequencies much higher than nominal.

Video card

The photos clearly show that the Galaxy card has its own design, which only distantly resembles the reference design of the 7600 GT. The video card is equipped with very fast 1.2 ns memory, which runs at frequencies below its nominal value. Considering that the GPU is already overclocked and is equipped with a huge cooler from Zalman (a nod to overclockers), the card is clearly intended for overclockers.

The card has TV-Out with a unique jack. You will need special bundled adapters to output video to a TV-set via S-Video or RCA. You can read about the TV-Out in more detail here. Analog monitors with d-Sub (VGA) interface are connected with special DVI-to-d-Sub adapters. Maximum resolutions and frequencies:

As for the cooler, the Galaxy product is equipped with a good, modern, and efficient solution from ZALMAN. You can read about this cooler at the link above.

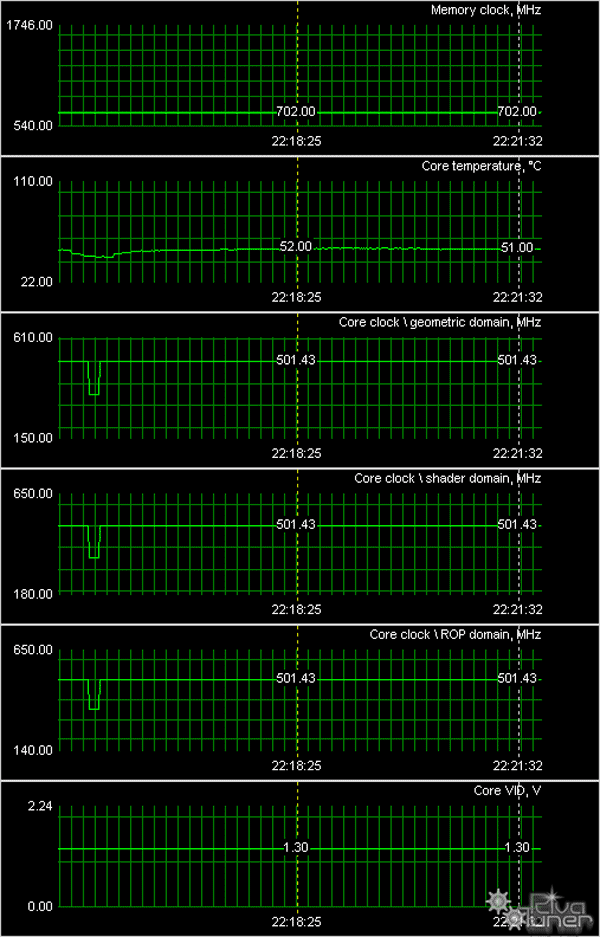

The device efficiently cools the overclocked core, remaining noiseless:

Memory chips are also equipped with heatsinks. Jumping ahead a little, I can tell you that we managed to overclock the card to 1680 MHz (memory) and 560 MHz (core).
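
The overclocking result can be related to the 1.2 ns memory rating and to the stock clocks with a little arithmetic. The sketch below assumes the Galaxy card ships at 500 MHz (core) and 700 (1400) MHz (memory), the upper GDDR3 figures quoted earlier, and uses the common rule of thumb that a memory chip's rated clock in MHz is 1000 divided by its cycle time in nanoseconds. Function names are illustrative only.

```python
def rated_clock_mhz(cycle_time_ns: float) -> float:
    """Rule-of-thumb rated clock for a memory chip with the given cycle time."""
    return 1000.0 / cycle_time_ns


def headroom_percent(stock_mhz: float, overclocked_mhz: float) -> float:
    """Relative clock gain of an overclock over the stock frequency, in percent."""
    return (overclocked_mhz - stock_mhz) / stock_mhz * 100.0


# 1.2 ns memory, as stated in the review
memory_rated = rated_clock_mhz(1.2)

# ASSUMPTION: stock clocks of 500 MHz core and 700 MHz (1400 effective) memory
core_gain = headroom_percent(500, 560)
memory_gain = headroom_percent(700, 840)  # 840 MHz physical = 1680 MHz effective

print(f"1.2 ns memory is rated for about {memory_rated:.0f} MHz")
print(f"Core overclock:   about {core_gain:.0f}%")
print(f"Memory overclock: about {memory_gain:.0f}%")
```

Under these assumptions the memory ends up running slightly above its nominal rating after overclocking, which fits with the chips being clocked below nominal out of the box.
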

Bundle

Box.

Installation and Drivers

Testbed configuration:

VSync is disabled.

Test results: performance comparison

We used the following test applications:

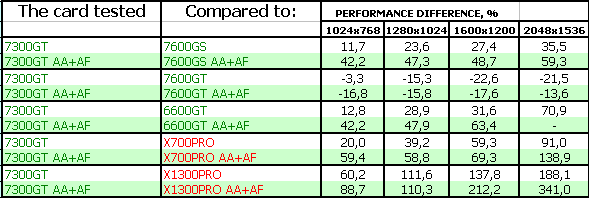

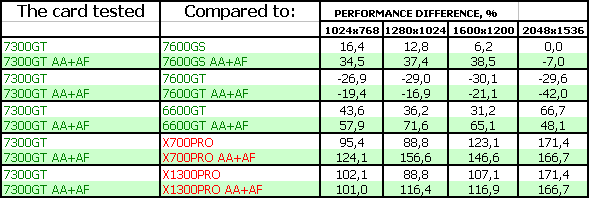

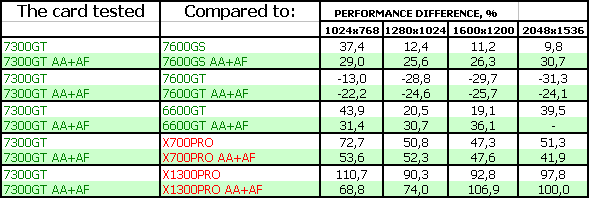

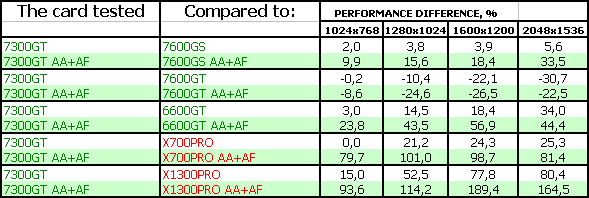

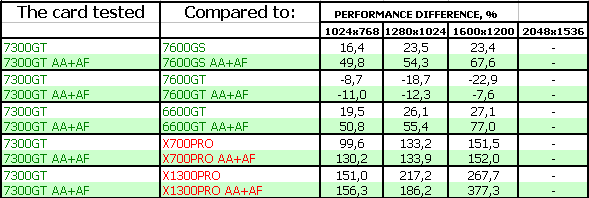

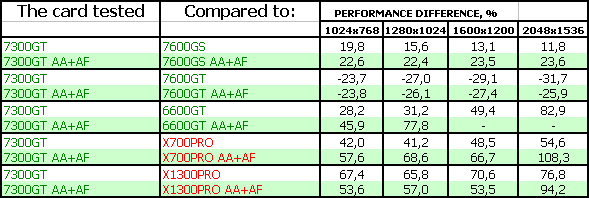

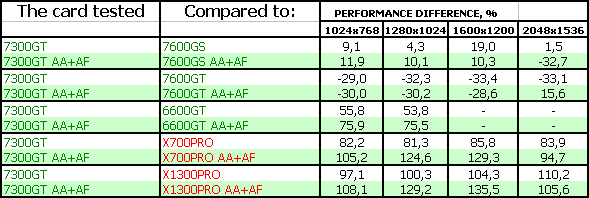

Summary performance diagrams

Game tests that heavily load vertex shaders, mixed pixel shaders 1.1 and 2.0, active multitexturing.

FarCry, Research

Game tests that heavily load vertex shaders, pixel shaders 2.0, active multitexturing.

F.E.A.R.

Splinter Cell Chaos Theory

Call Of Duty 2 DEMO

Half-Life2: ixbt01 demo

Game tests that heavily load pixel pipelines with texturing, active operations of the stencil buffer and shader units

DOOM III High mode

Chronicles of Riddick, demo 44

Synthetic tests that heavily load shader units

3DMark05: MARKS

3DMark06: Shader 2.0 MARKS

3DMark06: Shader 3.0 MARKS

You can find our comments in the conclusions.

Conclusions

Galaxy GeForce 7300 GT 256MB GDDR3 PCI-E. There is no need to comment on each test, everything is crystal clear. The accelerator copes brilliantly with its task and outperforms all competitors. Moreover, it's sometimes faster than the 7600 GS! (The combination of 500 MHz and 8/4 pipes turned out faster than 400 MHz and 12/5 pipes.) If the card sells for less than $100, it will evidently be an excellent accelerator for its level! Of course, we have some concern that the price of such cards will be much higher than that of regular 7300 GT cards.
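
The parenthetical comparison with the 7600 GS can be checked with simple arithmetic. The sketch below multiplies the unit counts by the core clocks given in this review. It treats each unit as doing one operation per clock, which is a simplification, and it leaves memory bandwidth out because the review does not state the 7600 GS memory clock. The class and method names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    pixel_units: int
    vertex_units: int
    core_mhz: int

    def pixel_rate(self) -> int:
        """Pixel units times core clock (simplified theoretical peak)."""
        return self.pixel_units * self.core_mhz

    def vertex_rate(self) -> int:
        """Vertex units times core clock (simplified theoretical peak)."""
        return self.vertex_units * self.core_mhz


galaxy_7300gt = Gpu("Galaxy 7300 GT GDDR3", pixel_units=8, vertex_units=4, core_mhz=500)
geforce_7600gs = Gpu("GeForce 7600 GS", pixel_units=12, vertex_units=5, core_mhz=400)

for gpu in (galaxy_7300gt, geforce_7600gs):
    print(f"{gpu.name}: pixel {gpu.pixel_rate()}, vertex {gpu.vertex_rate()}")
```

On these raw products the 7600 GS is level on vertex throughput and ahead on pixel throughput, so the Galaxy card's wins in some tests presumably come from elsewhere, with its fast GDDR3 memory being a plausible candidate.
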

As for the card itself, it worked great, we have no gripes with its operation, and it demonstrated excellent 2D quality! I'll remind you that we managed to overclock the card to 560 MHz (core) and 840 (1680) MHz (memory).

I repeat: if the price of this product is just a tad higher than that of a regular 7300 GT card, it will certainly be a phenomenal success. The card put in an excellent performance.

You can find more detailed comparisons of various video cards in our 3Digest.

Galaxy GeForce 7300 GT 256MB GDDR3 PCI-E gets the Original Design award (July).

Theoretical materials and reviews of video cards, which concern functional properties of the GPU ATI RADEON X800 (R420)/X850 (R480)/X700 (RV410) and NVIDIA GeForce 6800 (NV40/45)/6600 (NV43)


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.