
All our readers know well that at the end of January ATI announced a new series of desktop video accelerators: RADEON X1900. The series contains three cards: RADEON X1900 XTX (the most powerful accelerator), RADEON X1900 XT (a tad slower), and RADEON X1900 XT CrossFire Edition. We have already examined the first two products. This article is devoted to CrossFire based on the X1900.

The technology itself has already been reviewed, so we shall not repeat ourselves. In brief, it's a counterpart of NVIDIA SLI: two accelerators work together to process 3D graphics, and ideally the final result is obtained twice as fast (in real life the gain is lower for a number of reasons: game peculiarities, CPU and other system resource limits, etc.). There are different methods of sharing scene rendering between the cards; it's all up to the driver. NVIDIA SLI either slices a scene horizontally into two pieces, whose sizes can vary depending on the video requirements of a game, or has each accelerator process an entire frame (a complete scene) on its own, taking turns: even frames go to one accelerator, odd frames to the other. CrossFire adds a third mode: dividing a scene into tiles in staggered rows (a checkerboard pattern) and sharing them between the two video cards. That's just an outline. You can read the details in this article about CrossFire.

SLI has a number of advantages. Firstly, it appeared earlier and has had time to take root: its drivers have been updated and SLI support has grown stronger. Secondly, SLI does not need a special card; any two video cards with identical GPUs will do (from GeForce 6600LE and higher). All these SLI advantages automatically turn into disadvantages for CrossFire (CF). The technology arrived too late, was poorly supported, and was represented by outdated X850 cards. On top of that, it needed a mandatory master card, the CF Edition. But that was back in summer and early autumn 2005. Now the situation has changed.
First of all, the new X1900 series includes a CF Edition, and these cards are already available in stores together with regular X1900 XT/XTX cards. So you can build a tandem of a CF Edition and a regular X1900 card even now. There is no point in buying the XTX model, as the tandem will operate at XT frequencies anyway (the CF Edition works at these frequencies). For this reason, by the way, the X1900 CF is formally a duet of accelerators that are not ATI's most powerful. But we'll speak of that later.

There is also another issue: the motherboard. CF currently requires a motherboard based on ATI RD480 or i975. Besides, the late launch and slow distribution of such boards are a problem in themselves. It's already obvious that if you have a system based on nForce4 or i955, you'll hardly want to switch to another motherboard for the sake of CF. But if you plan to upgrade an old AGP platform to something new and want the most powerful gaming system, that's when you should think it all over. CF may not be the worst choice. However, it's up to users to decide. Prices and availability play a great role. Neither SLI nor CF has features so striking that they justify paying extra for the more expensive of the two.

Our mission is to demonstrate a new card. So, CF is a bundle: a motherboard, two video cards, and software. The software is just the usual drivers, nothing special. Of the two video cards, one must be a CF Edition model. Today we'll examine this very card. The motherboard will also be briefly reviewed below.
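To make the three load-sharing modes outlined in the introduction more concrete, here is a minimal Python sketch of how work could be divided between two accelerators. It is only an illustration under assumptions: the function names, the 32-pixel tile size, and the `top_share` parameter are hypothetical and do not come from the ATI or NVIDIA drivers, which make these decisions internally.

```python
def split_frame(height, top_share=0.5):
    """Split-frame mode: GPU 0 renders the top band of scanlines, GPU 1 the rest.

    top_share is the (hypothetical) fraction of the frame given to GPU 0;
    a real driver may shift it depending on the load of each half.
    """
    cut = round(height * top_share)
    return {0: range(0, cut), 1: range(cut, height)}


def alternate_frame(frame_index):
    """Alternate-frame mode: even frames go to GPU 0, odd frames to GPU 1."""
    return frame_index % 2


def tiled(width, height, tile=32):
    """Tiled mode: square tiles are handed out in a checkerboard pattern.

    The tile size of 32 pixels is an assumption made for this sketch.
    Returns a mapping from the tile's top-left corner to the owning GPU.
    """
    owner = {}
    for ty in range(0, height, tile):
        for tx in range(0, width, tile):
            owner[(tx, ty)] = ((tx // tile) + (ty // tile)) % 2
    return owner


if __name__ == "__main__":
    bands = split_frame(1200, top_share=0.5)
    print("split frame:", {gpu: (r.start, r.stop) for gpu, r in bands.items()})
    print("alternate frame:", [alternate_frame(i) for i in range(6)])
    print("tiles:", tiled(64, 64))
```

In the tiled sketch, neighbouring tiles always belong to different cards, which is why this mode tends to balance the load without the driver having to guess where the "heavy" part of the frame is.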

Video card

So we can see that the X1900 XT CFE is actually a regular X1900 XT, stripped of VIVO and even TV-out for the sake of CF. It is just a bare video card without the usual extras. Is that a disadvantage? I think not. If you plan to install CF later, that is, to buy the second video card after the first, the first card you buy should be a regular X1900 XT with VIVO. Only then should you buy a CF Edition product.

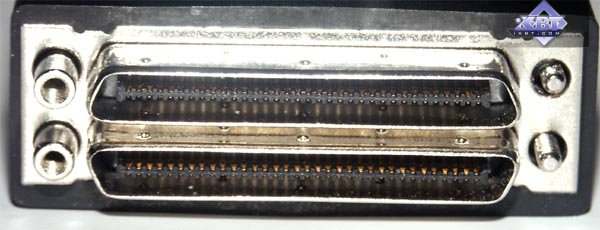

The card is equipped with two DVI jacks (Dual-Link, of course). One of them is special:

A special adapter-composer is plugged into it. One of its ends goes to the second video card (DVI), the other is used to connect a monitor.

This is how it looks assembled (without a PC case). The CF Edition should be inserted into the slot farthest from the CPU. By the way, this is already the second time I've noticed that ATI does everything the other way round: the slots are not counted from the CPU, and all GPUs on the video cards are upside down. What does it all mean? :)

That's how CF works:

I guess there is no point in examining the cooling system: it is the standard cooler, which I have already described before.

To conclude the CF Edition examination, let's have a look at the GPU:

It's an engineering sample of the R580, exactly like those installed in all X1900 XT/XTX cards. The difference between the CFE and regular cards comes down to the PCB and its additional components, which assemble the pieces into a whole 3D scene.

Installation and Drivers

Testbed configurations:

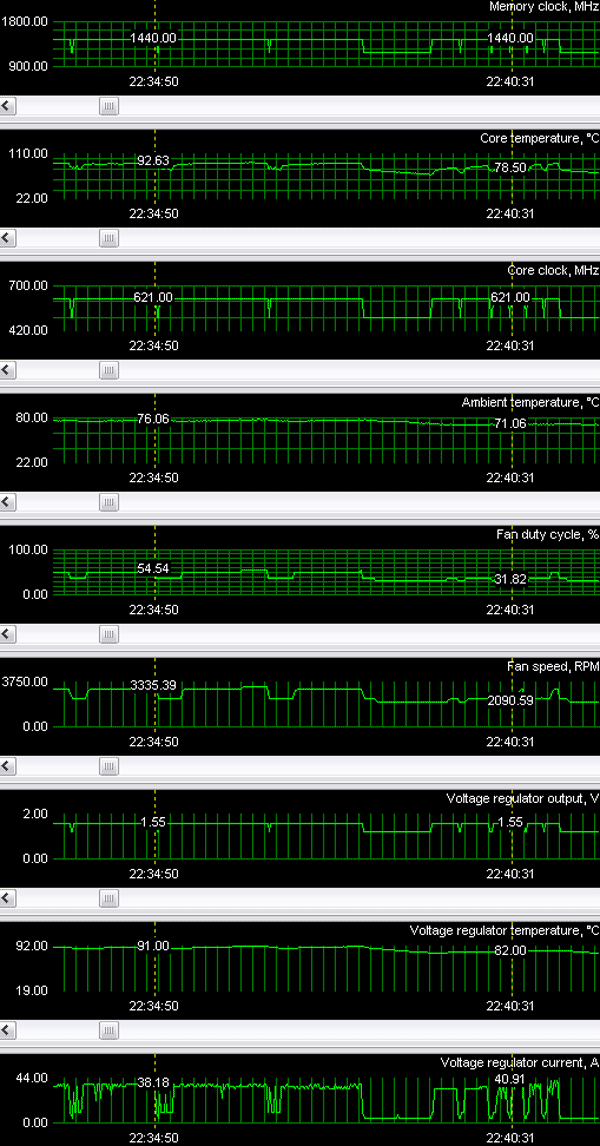

According to system monitoring, the video card runs very hot: over 90°C under load! But I repeat that the main advantage of this cooling system is that it drives hot air out of the PC case, so you shouldn't worry too much. Besides, the fan speed does not rise much even at maximum temperature (the noise is hardly audible).

Test results: performance comparison

It's no secret that many games have already reached the limits of the CPU and the system as a whole, so we don't see the potential of top video cards even in AA 4x and AF 16x modes. That's why we introduced a new HQ (High Quality) mode, which means:

In fact, that's the maximum the latest-generation accelerators from ATI can demonstrate; that is, their capacity is used at nearly 100%. NVIDIA GeForce 7800 GTX 512 SLI is represented by Golden Sample cards from Gainward, which is why the frequencies are 580/1760 MHz instead of 550/1700 MHz. We used the following test applications:

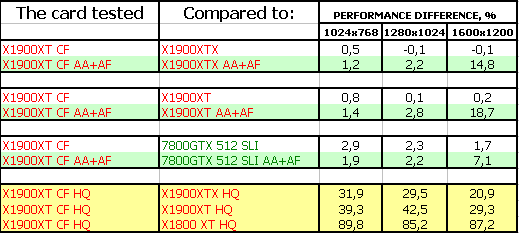

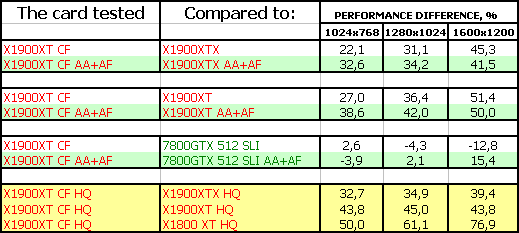

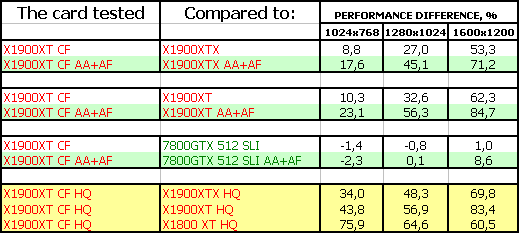

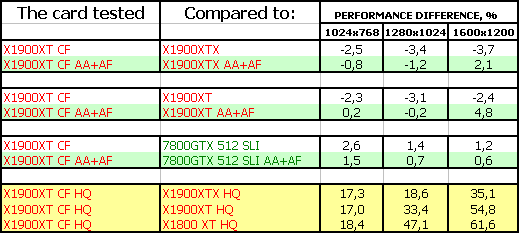

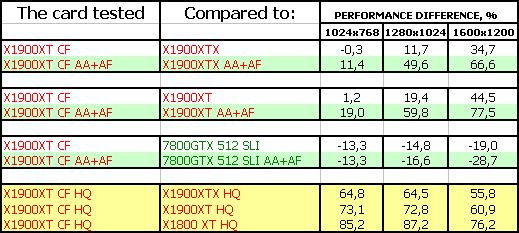

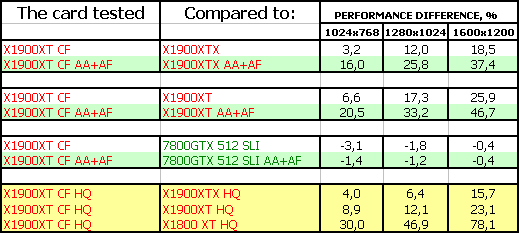

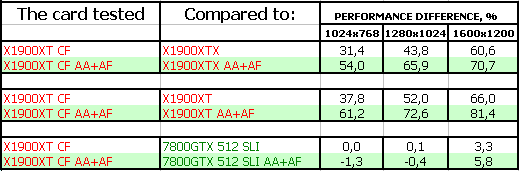

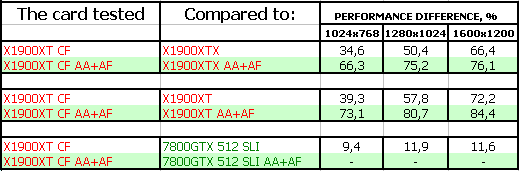

Test results of ATI CrossFire on RADEON X1900 XT, 2x512MB

Game tests that heavily load vertex shaders, mixed pixel shaders 1.1 and 2.0, active multitexturing.

FarCry, Research

The game is heavily limited by system resources, so the gain is not large even in AA+AF mode. Only the HQ mode demonstrated the highest gain from CF. However, that's without HDR. As soon as Patch 1.40 supporting this technology on ATI cards is out, we shall carry out additional tests.

Game tests that heavily load vertex shaders, pixel shaders 2.0, active multitexturing.

F.E.A.R.

The CF gain is quite good, but it still does not exceed 50%. As for the battle with the 7800GTX 512 SLI, the picture is mixed. But CF still comes out ahead, as this SLI setup will obviously be more expensive.

Splinter Cell Chaos Theory

CF demonstrates a very good performance gain, but SLI is still not defeated: we've got parity here. But we should keep in mind that CF may be cheaper.

Call Of Duty 2 DEMO

It's a similar situation; we can even say that SLI is victorious.

Half-Life2: ixbt01 demo

The game depends heavily on the CPU, so only the HQ mode allowed this tandem to reveal its potential.

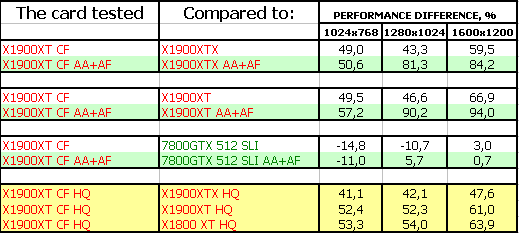

Game tests that heavily load pixel pipelines with texturing, active operations of the stencil buffer and shader units

DOOM III High mode

Chronicles of Riddick, demo 44

The CF gain is very high, but OpenGL, NVIDIA's strongest API, makes itself felt. While we can see parity between SLI and CF in DOOM III, Chronicles of Riddick favours the NVIDIA solution. Alas, OpenGL games will remain a headache for the Canadian company to the very end.

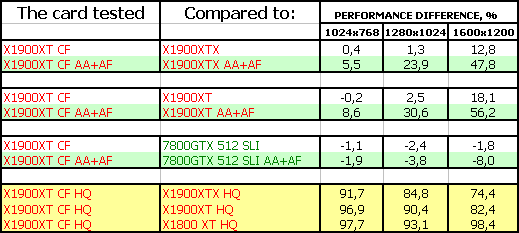

Synthetic tests that heavily load shader units

3DMark05: MARKS

Surprisingly, the CF gain is not as high as we might have expected. SLI demonstrates higher performance gains. That's why the duets are on a par here, even though a single X1900 XT outperforms a 7800GTX 512.

3DMark06: MARKS

Shader Model 2.0

Shader Model 3.0

CF pays good dividends in the SM2.0 tests, but SLI again scales more efficiently. Thus we have parity here. SM3.0 brings success to CF, as even a single X1900 XT card is stronger than its competitor from NVIDIA. Besides, GeForce cards cannot use HDR together with AA.

Conclusions

ATI CrossFire based on RADEON X1900 XT, 2x512MB is currently the fastest tandem of 3D accelerators for games. It's sometimes outperformed by a similar duet of two GeForce 7800 GTX 512MB cards, but on the whole it's a tad more powerful. Besides, the SLI pair we tested operates at raised frequencies, so CF will certainly look like a winner versus a regular 7800 GTX 512MB SLI.

But is it justified to spend so much money on two accelerators to get an average performance gain of 65-70%? There is no point in answering this question, as a regular user can hardly afford to spend nearly $1500 on such a video system. Only an enthusiast or a hardcore gamer can go for it, and such people will not be stopped by percentages. All the pros and cons have been mentioned above; everything is crystal clear. As for me, if I faced this choice and had to change my platform completely, I would not find it easy to decide even if the two cards cost the same (for now we can expect the X1900 XT to be cheaper than the 7800GTX 512MB, even though it is only starting to appear on the shelves, because the latter cards are in short supply). So it's up to our readers to decide. We've told everything we could about the new technology and its implementations.

And don't forget how power-hungry such cards are! A single X1900 XT card consumes over 120 Watts, so CF requires very powerful PSUs (certainly more powerful than 500-600 Watts).

When this article was nearly completed, I suddenly had an idea: would it have been too hard to make all X1900 cards CrossFire Editions? It's quite possible to fit both VIVO and the composing logic on one PCB. The upper Dual-DVI jack can be used even without CF: a monitor can be connected via the adapter. It would not raise the cost by more than $20. That's nothing compared to $600. In return, there would be no problems with buying so-called master cards; any two X1900 cards would be able to form CF. Moreover, the price of the X1900 XT CFE, which is $50 higher than that of the X1900 XT, seems too high to me. The card does not contain any super expensive elements! Besides, we should deduct the cost of Rage Theater, which is not used in the CFE. That's why this high price is just a marketing move. We may end up in a situation where CFE cards become cheaper than regular X1900 XT cards due to lack of demand. Many people use TV-out, and the X1900 XT CFE does not have this feature.
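The value question raised in the conclusions can be put into numbers with a rough sketch. It assumes, for simplicity, that the tandem costs exactly twice as much as a single card (ignoring the CFE premium and the motherboard) and takes the 65-70% average gain quoted above. The function name `relative_value` is ours, introduced for illustration only.

```python
def relative_value(gain, cards=2):
    """Performance per unit of money for a multi-card setup,
    relative to a single card (which scores 1.0).

    gain  - average performance gain over one card, e.g. 0.65 for 65%
    cards - number of cards; the cost is ASSUMED to scale linearly with it
    """
    return (1.0 + gain) / cards


if __name__ == "__main__":
    for gain in (0.65, 0.70):
        value = relative_value(gain)
        print(f"gain {gain:.0%}: {value:.3f} of single-card performance per dollar")
```

Under these assumptions the tandem delivers roughly 0.83 to 0.85 of a single card's performance per dollar spent, which is another way of saying that the second card is bought for absolute speed rather than for value.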

You can find more detailed comparisons of various video cards in our 3Digest.

Theoretical materials and reviews of video cards, which concern functional properties of the GPU ATI RADEON X1800 (R520) / RADEON X1900 (R580) / X1600 (RV530) / X1300 (RV515) and NVIDIA GeForce 6800 (NV40/45) / 7800 (G70) / 6600 (NV43)


Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.