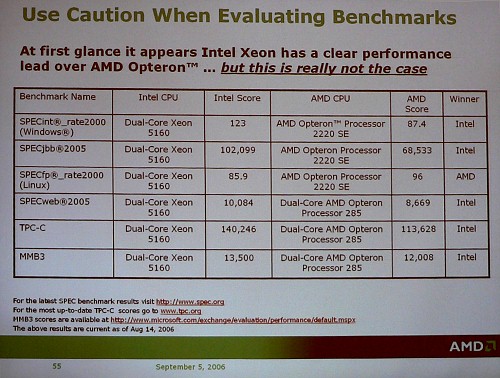

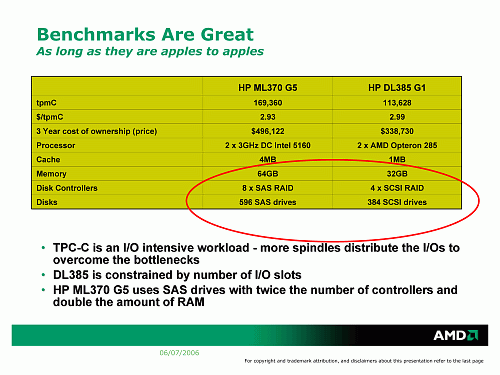

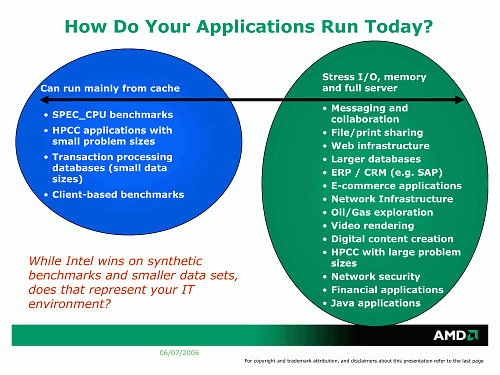

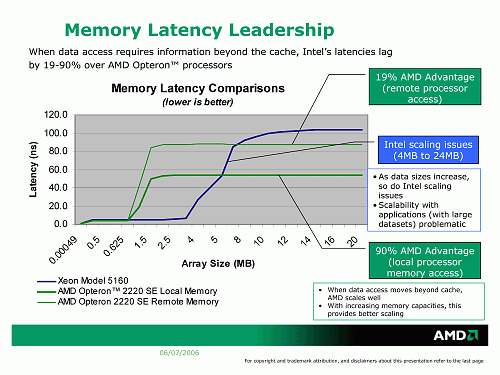

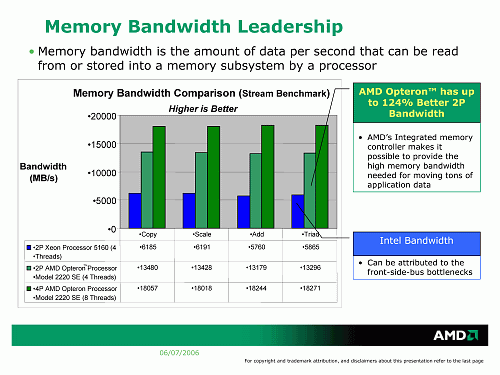

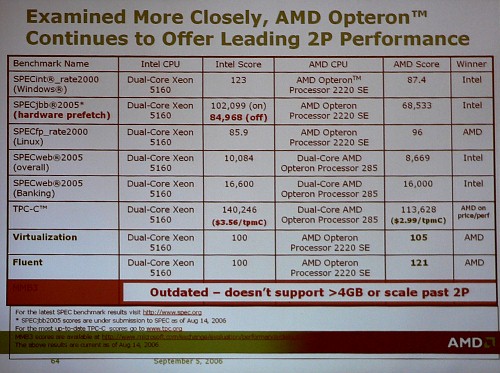

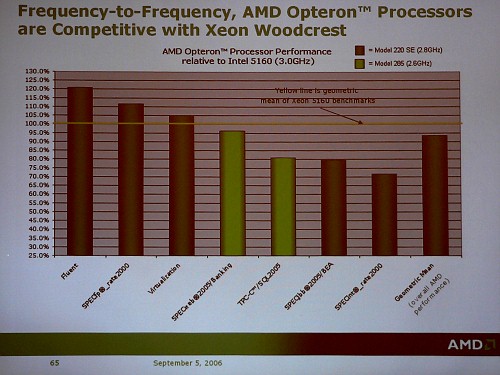

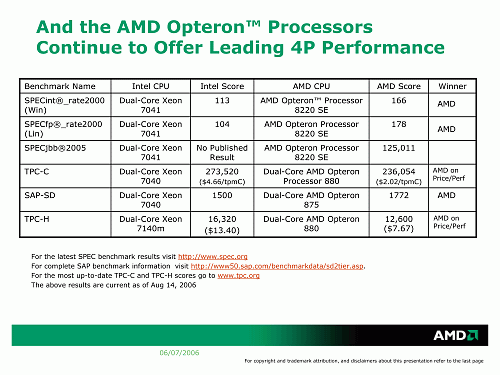

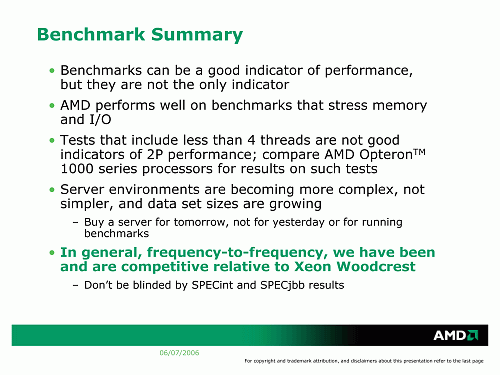

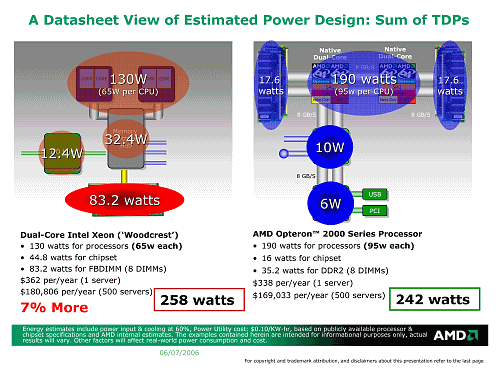

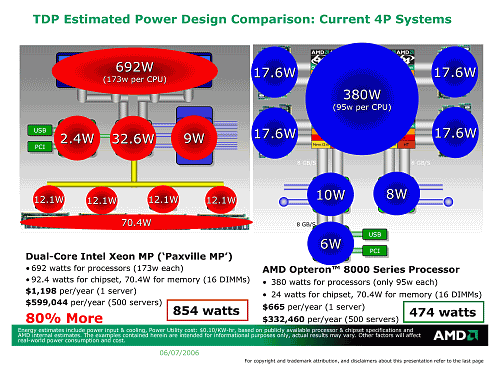

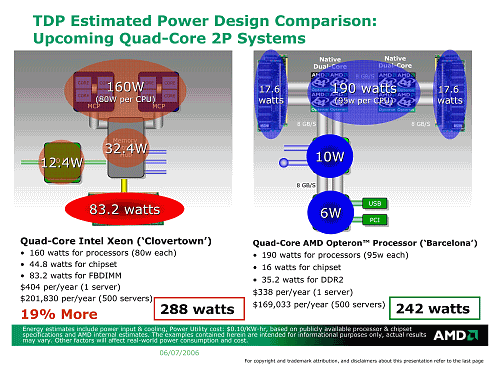

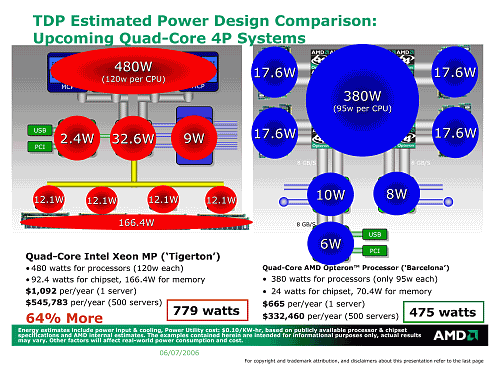

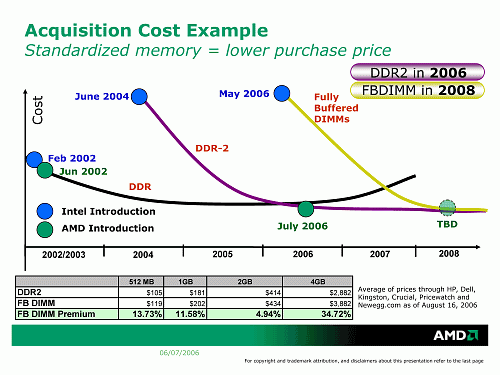

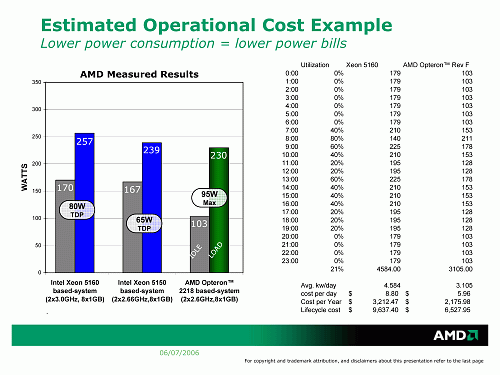

Part I. On Quad-Core Processors and Microarchitectural Innovations

Guiseppe Amato started the second part of the event with a slide that was very unpleasant for AMD (it was later removed from the PDF version of the presentation, which explains its "pirate" quality in our article):

He promised to correct it in the course of the presentation to make it fair. First of all, the configurations have to be straightened out, so that we compare "apples to apples".

The next step was to prioritize applications. Unlike in the home desktop segment, server applications may fail to fit into cache, even one as large as in the new Xeon.

That's where memory access speed comes into play. The "bailout" integrated memory controller together with Direct Connect Architecture provides a nearly twofold advantage in local memory latency and up to a 19% advantage for remote access to the memory arrays of other processors in a multi-processor system.

Memory bandwidth on the AMD platform is also higher. It scales up as the number of processors grows, which cannot be said of the Intel platform.

As a result, one can "pick" an impressive list of tests, especially from the HPCC category, in which the Opteron family outscores the updated Xeon series thanks to its platform features, even though it is outperformed in pure core performance. Opteron's advantage in servers running in virtualization mode is explained by hardware support for memory sharing in the integrated controller. The new slide, "corrected and updated", looks like this:

In fact, it has as much right to exist as any "comparison from Intel" (or from any other company).
It's a reminder to users: even if a company wants to recover its fortunes and launches a product that is really powerful in some respects (in this case, a more advanced microarchitecture), it does not mean that its end products magically become the best ones for everybody :) However, if we stop comparing by the "binary" principle and calculate the geomean of test results instead, the new Intel platform actually outscores the AMD platform ("presented three years ago!", adds Guiseppe Amato).

Yes, by the seven percent mentioned in the title of the article. But AMD wins them back in the comparison of 4P servers (hey, that's the reason for such "care" for the multi-processor segment in the first part of the presentation).

So what does AMD offer for a correct performance comparison if we need "mean" results?

To use an extended set of tests, if possible. It should include synthetic tests that measure the pure performance of the processor microarchitecture (optimized to run from cache for this purpose) as well as application-based tests that imitate real server operations and realistic load. This makes sense, though the position is naturally opportunistic (it is profitable for AMD right now).

The next point was power consumption. As already mentioned, the FBDIMM memory standard that Intel selected for the new platform is of invaluable help to AMD marketing here. Compared to DDR2, it has higher memory access latencies and high power consumption (the fault of the control circuitry in each module). Installing eight such modules is enough to cover the TDP difference between two Opteron processors and two Woodcrest processors. Note that here, as always, AMD representatives do not make an issue of the ways TDP is measured, which differ, as you may know. These do not favor Intel either. The TDP level of AMD processors corresponds to the maximum level of the worst production-line samples (all processors that fail to qualify for the TDP level are rejected).
Intel allows this level to be exceeded under maximum load on a processor. Considering that real power consumption (and heat dissipation) is an individual property of each sample, like its overclocking potential, this position means that a user may buy a processor that will consume more power (and get hotter) under maximum load than its TDP level. Of course, only Intel knows what the chances are for each CPU model and stepping. But having analyzed the official definition of "TDP by Intel", we may assume that they are about 5 to 20%.

Guiseppe Amato couldn't help comparing power consumption in modern multi-processor systems, where Intel is represented by one of the surviving Netburst processors, the Xeon MP on the Paxville core. As expected, it's a heart-rending sight :)

However, Intel will hardly allow its competitor to pull that far ahead in this parameter in the future. For example, the comparison of future quad-core processors shows just an advantage, no Waterloo. Of course, we should take into account that the 80 W TDP will be demonstrated only by low-clock models. Top models will reach 120 W (that's the point AMD makes about the growing TDP level of Intel quad-core processors). But as is well known, users are more concerned with the comparison of models from the middle of the series.

The difference in the multi-processor segment is significant again. And that's where AMD seems to be in no danger from Intel for the coming year, in power-saving terms as well.

By the way, the AMD platform will surely move to FBDIMM modules, which for now heat up only servers with the latest-generation Intel processors. But that will happen only in 2008, when they get cheap and their characteristics are at least no worse than those of DDR2.

Another illustration of the power consumption issue shows a typical server with a varied daily load. It concludes our tour of the situation on the server market as perceived by AMD.

Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.