
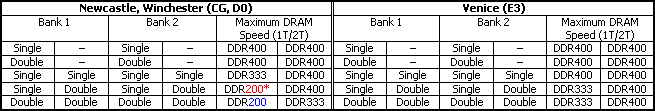

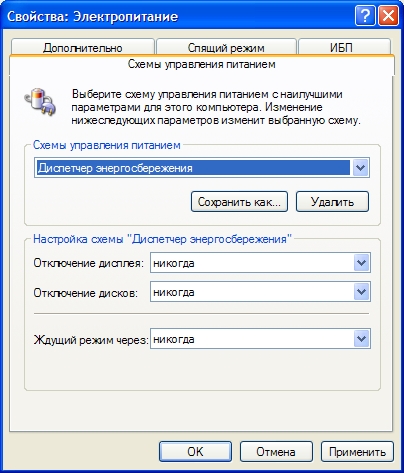

Configuring Integrated Options

It's no secret that the performance of modern processors, even low-end models, to say nothing of high-end ones, is more than sufficient for most consumer applications. A noticeable difference between top models can be detected only in games, in programs for processing bitmap and 3D graphics, and (much rarer in home use) in audio editors. Of course, there are always professional applications, whose requirements (and features) grow along with the hardware. "Professional" is meant here in the broad sense of the word: most users who need computers for their work can tell stories of even a top configuration responding more slowly than a human can react, so such performance cannot be called excessive.

But it's quite clear that performance alone is not enough to attract the average consumer. Having browsed through some tests, this consumer may find out that even an old-generation processor is sufficient for his or her tasks. In this case, only "third-party" reasons can persuade him or her to keep up with the times and buy a new model. To create such reasons, both main manufacturers of x86 processors integrate proprietary technologies into their chips. These do not necessarily serve the traditional goal of making a processor faster, but they make the product more attractive to users. Of course, if a processor copes well with its main responsibilities, such bonuses are only welcome.

However, these features share one unpleasant property: they must be supported in both hardware and software, by the motherboard, its BIOS, and the OS. If you buy a processor as part of a system unit, you can certainly expect all these settings to be configured by the manufacturer. Still, it won't hurt to make sure that these technologies not only exist formally but actually work. From this article you will learn how to fine-tune the most feature-rich processors, the Athlon 64.
Mind you, this is certainly not thorough theoretical research. Pure practice.

Integrated memory controller (and a few words about Venice)

Let's start with a K8 core feature that affects performance yet can still be configured by users: the integrated memory controller. Besides, the most noticeable changes in the updated E3 stepping (Venice core) concern precisely the memory controller. (They are noticeable for users who are going to install A LOT OF memory; for everyone else, choosing a processor on the new stepping can be justified only by its large overclocking potential.) The second new feature, SSE3 support, should be counted among the marketing bonuses: there are still few optimized programs, and those few demonstrate only a moderate gain from the additional instructions. Software developers will hardly start optimizing their products just because AMD processors have joined the ranks of SSE3 supporters.

AMD engineers have obviously concentrated on improving the memory controller so that increasing the memory capacity (the number of occupied banks) causes the smallest possible clock reduction and/or timings increase. This phenomenon is inevitable for integrated and discrete controllers alike: the frequency has to be lowered or the timings relaxed in order to reduce the electrical load on the controller. Considering that the vast majority of desktop motherboards take no more than 4 modules (8 banks), the nearly ideal situation is one where all the banks can be filled without introducing extra latencies.

On motherboards with the first revisions of Athlon 64 for Socket 939, only a pair of modules (single- or double-sided) could be installed and still operate at the maximum frequency (DDR400). In practice, this meant a total capacity of no more than 2 GB. For example, if you needed to install 3 GB (to preserve the dual-channel mode, that means a pair of double-sided 1 GB modules plus a pair of single-sided 512 MB modules), you had to adjust the multiplier, setting the memory to DDR200.
*If a motherboard supports a corresponding multiplier in BIOS, DDR266 mode is also possible.

All the above-mentioned limitations apply to each memory bank separately. If, intentionally or by chance, you neglect the dual-channel mode and install, for example, a pair of single-sided modules into one bank, you will also have to reduce the frequency or increase the latency, even if the second bank remains empty. This situation is purely hypothetical, of course, because there are simply no real conditions under which you should avoid the dual-channel mode.

Proceeding to the practical part, we should admit that the controller limitations cannot be considered critical even in previous revisions. The majority of applications that require more than 2 GB of memory are not very sensitive to the nuances of its performance. On the contrary, servers and workstations, which work with really large memory capacities, actively use DDR266-DDR333, mostly registered. Such asceticism is dictated by increased reliability requirements rather than by economy: the more memory a program actively uses, the higher the chance of a memory failure, while a lower frequency naturally increases the safety margin. Finally, it would be unfair not to mention that, even with the 2T parameter, the integrated memory controller retains faster access (lower latencies) than any existing discrete chipset controller with the same memory modules.

Laboratory practice

We still have to answer three practical questions to clear up the situation completely. What timings should we strive for to obtain optimal interaction between the controller and memory? Is there any reason to spend a lot of money on elite memory, which can operate at the standard frequency with aggressive timings, down to the currently minimal 2-2-2-5, if your memory consists of two modules?
Which option (a higher frequency with 2T latency or a lower frequency with 1T) should you choose if you have to install memory in all the slots?

Let's not digress into the physical meaning of each timing; this information is not difficult to find. The general recommendation for the first three parameters, tCAS (CAS# Latency), tRCD (RAS# to CAS# Delay), and tRP (Row Precharge), is not original: the lower the value, the higher the performance in all cases. CAS# Latency has the strongest influence on overall performance. Only the last parameter, tRAS (Activate to Precharge), has some nuances. In some BIOS versions it is called Minimal RAS Active Time. Increasing this parameter raises the maximum memory throughput at the cost of higher random access latency (the effect on sequential and pseudo-random access is minimal).

The tests were carried out on the following configuration: MSI K8N Neo4 Platinum motherboard (nForce4 Ultra), DDR400 Corsair XMS CMX512-3200XL memory (two 512 MB modules with standard 2-2-2-5 timings), Athlon 64 3500+ (D0) processor, ASUS EAX800XL/2DTV video card. The first two rows of the table are tests with artificially increased latencies, typical of low-end Value models and modern mainstream modules respectively.

So, according to our tests, the optimal balance is obtained with 2-2-2-10 timings (2-2-2-8 takes second place). Further tests demonstrated that tRAS should be increased even for non-elite memory that has no 2's in its main timings. But if you look at the spread between the results in different tests, you will see that it is anything but critical, even in WinRAR, which is extremely sensitive to memory nuances, and in the most demanding games. It's hardly noticeable at all! However, the objective of any tuning is not to pull unsatisfactory performance up to a decent level, but to squeeze the maximum from an initially powerful system. That's what we aimed for.
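To put the timing figures in perspective, it helps to convert them from clock cycles to nanoseconds. The following is only an illustrative sketch: the function name `timing_ns` is our own, and it relies on nothing more than the fact that DDR memory transfers data twice per clock, so DDR400 runs at a 200 MHz clock.

```python
def timing_ns(cycles: float, data_rate_mts: float) -> float:
    """Convert a timing given in memory clock cycles to nanoseconds.

    DDR transfers data twice per clock, so the clock in MHz is half
    the data rate (DDR400 -> 200 MHz -> 5 ns per cycle).
    """
    clock_mhz = data_rate_mts / 2
    return cycles * 1000.0 / clock_mhz


# CAS latency for timing sets of the kind discussed in the text (illustrative).
examples = [
    ("DDR400, CL2", 2, 400),
    ("DDR400, CL2.5", 2.5, 400),
    ("DDR400, CL3", 3, 400),
    ("DDR2-533, CL4", 4, 533),
]
for label, cl, rate in examples:
    print(f"{label}: {timing_ns(cl, rate):.1f} ns")
```

At DDR400, relaxing CAS latency from 2 to 3 cycles adds 5 ns to the accesses that pay it, a small absolute difference. The same conversion shows that DDR2-533 at CL4 has roughly the same CAS latency in nanoseconds as DDR400 at CL3.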
Another consequence follows from the same observation: if you need to save money, you may rest assured that even low-end memory with ordinary timings will not sink system performance; it will be only slightly slower than "elite" memory. That's the answer to the second question posed above.

All that remains is to clear up the side issue of equipping a computer with an extra large memory capacity. In our tests we used DDR400 Kingston ValueRAM HyperX KHX3200K2/2G: two sets of 2 GB with the official 2.5-3-3-7 timings (the specifications stress that a 1T delay is possible). Our motherboard (the same as in the previous test, with two Athlon 64 3500+ processors, revisions D0 and E3) set the timings to 2.5-2-4-8 by default, and we kept them. For comparison, we assembled a testbed with a discrete controller (MSI 925X Neo motherboard on i925X, Pentium 4 550 processor) and two memory configurations: 4 x 1 GB DDR2-533 KingMax KMX128DD64-533, and two sets of 2 x 1 GB DDR2-667 EB DC Platinum OCZ26672048EBDCPE-K. The standard timings in the first case are the 4-4-4-12 typical of the popular DDR2-533 (and specified in the standard). In the second case the SPD value is 4-2-2-8, but the modules were also stable at 3-2-2-7, which we used in the tests to get "maximum" results.

The traditional counterpart of 1T/2T Memory Timings for discrete controllers is 1T/2T Command Rate. These days it has nearly disappeared from BIOS versions for Intel chipsets, where it is locked at 2T by default. By the way, this parameter reappears in BIOS for motherboards on nForce4 SLI IE, but it can be set to 1T only for elite memory with the minimum number of banks occupied (a good example is Corsair XMS2-5400 with official 3-2-2-8-1T timings).

The results need no extensive comments. It's quite obvious that you should choose the option with the maximum clock, regardless of the core stepping.
Besides the performance advantage, this option is also notable for its higher robustness. As an experiment, we installed two 1 GB Kingston ValueRAM modules together with a pair of 512 MB Corsair XMS modules. This strange blend gave no cause for complaint at 2.5-2-4-8 timings on both the new and the old core in the 2T modes. It also worked fine at 333 MHz with 1T latency on the system with the Venice core. But to make the 200 MHz, 1T mode stable on the Winchester core, we had to relax the timings to 3-3-3-8. However, if you install identical modules (as all systems with a dual-channel controller require), there will be no problems in any mode.

There is a practical reason behind the recommendation to buy specially selected "dual channel" pairs. According to RMMA tests, there is a distinct difference in latencies (especially for random access), ranging from "nearly zero" for four well-matched modules to 10-15% for modules from different manufacturers, even with the same "production" timings, capacity, and number of chips. How closely the modules are related seems to have no effect on the results of bandwidth tests. As there are no sets of four modules on sale, the best option is to install one module from each two-module set into each bank.

As for the results of the DDR2 testbed, they are published only "for illustration" (fortunately, detailed iXBT analyses of memory modules with various chipsets are plentiful). Note that the integrated controller retains the latency advantage regardless of the 1T/2T setting; even DDR2-667 memory with its timings tightened to the limit cannot change the situation. As for bandwidth, the situation is the opposite and favors DDR2. Besides, if you buy a Pentium 4 for the 1066 MHz bus (unfortunately, still expensive and rare) or experiment with the multiplier, you can squeeze another 1000-1500 MB/s from DDR2-667 by eliminating the system bus bottleneck.
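The system bus bottleneck mentioned above can be illustrated with simple arithmetic on theoretical peak figures. This is a rough sketch under stated assumptions: 64-bit (8-byte) memory channels and system bus, nominal data rates, and a helper name (`peak_mb_s`) of our own. Measured throughput is always lower than these ceilings.

```python
def peak_mb_s(data_rate_mts: float, bus_bytes: int = 8, channels: int = 1) -> float:
    """Theoretical peak bandwidth in MB/s: transfers/s x bytes per transfer x channels."""
    return data_rate_mts * bus_bytes * channels


ddr400_dual = peak_mb_s(400, channels=2)     # Athlon 64, dual-channel DDR400
ddr2_667_dual = peak_mb_s(667, channels=2)   # i925X, dual-channel DDR2-667
fsb_800 = peak_mb_s(800)                     # Pentium 4 bus at 800 MT/s
fsb_1066 = peak_mb_s(1066)                   # Pentium 4 bus at 1066 MT/s

# With a discrete controller, the CPU cannot see more than the system bus delivers.
for name, fsb in [("FSB 800", fsb_800), ("FSB 1066", fsb_1066)]:
    ceiling = min(ddr2_667_dual, fsb)
    print(f"{name}: memory {ddr2_667_dual:.0f} MB/s, CPU-visible ceiling {ceiling:.0f} MB/s")
print(f"Athlon 64, dual-channel DDR400 ceiling: {ddr400_dual:.0f} MB/s")
```

With the 800 MT/s bus the ceiling seen by the processor equals that of dual-channel DDR400, even though DDR2-667 itself could deliver far more. A faster bus raises that ceiling, which is where the additional bandwidth comes from.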
But if we limit ourselves to comparing performance on low-end (but branded!) modules, which are the most typical choice for large memory capacities, we can say that memory performance will generally be the same regardless of the memory type, while overall computer performance will depend on other factors.

Cool & Quiet

Let's proceed to the most infrastructure-dependent technology integrated into Athlon 64. The essence of this mechanism hardly needs a lengthy introduction, as it has existed for quite a long time. In brief, it works like this: the Cool & Quiet driver (power saving manager) counts the idle cycles of the processor and determines how far it can reduce the clock and the power consumption without degrading performance. The minimum values, depending on the processor type, are 800-1000 MHz and 0.8-1.0 V respectively. Under a normal load, heat dissipation drops so much that the processor does fine with passive cooling in a spacious PC case.

We may argue about how vital this technology is, but its usefulness is obvious. It is helpful if only because it reduces system noise without any experiments with liquid cooling, other expensive extravagances, or modifications of the standard cooling system. And it does so when it is needed most: when the user is thinking and performance is limited by the user's abilities (which also depend on a comfortable workplace), not by CPU capacity. Another indisputable fact: minimizing CPU heat dissipation creates more comfortable conditions for the other components of the system unit, the video card in particular, and in the long run lets you manage with a less powerful case fan.

This description sounds great, but C&Q must be supported not only by the processor, but also by the motherboard and its BIOS. Fortunately, "theoretical C&Q non-support" has become a thing of the past, together with early revisions of motherboards, mostly on Socket 754.
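To see why lowering both clock and voltage cuts heat dissipation so sharply, one can use the common approximation that dynamic power is proportional to the clock multiplied by the square of the voltage. The sketch below is illustrative only: the operating points are hypothetical examples (the nominal clock and voltage are assumptions, not figures from the tests), and leakage power is ignored.

```python
def relative_dynamic_power(f_mhz: float, volts: float,
                           f_nominal_mhz: float, v_nominal: float) -> float:
    """Dynamic power relative to nominal, using the P ~ C * V^2 * f approximation."""
    return (volts / v_nominal) ** 2 * (f_mhz / f_nominal_mhz)


# Hypothetical operating points chosen for illustration only.
NOMINAL = (2200, 1.4)   # MHz, V: assumed full-speed state
IDLE = (1000, 1.0)      # MHz, V: within the minimum range quoted in the text

ratio = relative_dynamic_power(IDLE[0], IDLE[1], NOMINAL[0], NOMINAL[1])
print(f"Dynamic power in the reduced state: about {ratio:.0%} of nominal")
```

Because the voltage enters squared, reducing it together with the clock saves considerably more than reducing the clock alone, which is why the processor needs motherboard support for both adjustments.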
Here is the objective reality in mid-2005: all Socket 939 motherboards can reduce clock and voltage regardless of the CPU model and the number of memory modules. Socket 754 still requires that no more than two memory modules be installed. There is no practical point in fighting this limitation: as the Sempron line expands towards lower clocks, Socket 754 is migrating to the low-budget sector, and Cool & Quiet support is relevant only for Athlon 64 (all models) and Sempron (3000+ and higher). As for low-end models, two memory modules are usually excessive; one module is quite enough.

The situation with intelligent fan rpm control is not as rosy. It is the second constituent of Cool & Quiet, and it depends only on the motherboard. Clearly, AMD has nothing to do with its implementation: it can only make its processors run cooler, while reducing the rotational speed of coolers is up to the motherboard. This issue narrows down the choice of motherboards with impeccable functionality. In fact, this support is guaranteed only by Socket 939 motherboards from the Group of Three (ASUS, MSI, and Gigabyte), plus ABIT with its m-Guru technology, which offers rich settings for this purpose. Unlike the majority of motherboards from "second echelon" brands, their BIOS has a simple corresponding option (Q-Fan, Smart Fan, etc.).

Manufacturers' neglect of smart modes is easy to understand: until now they were of almost no use. Modern chips running at the standard clock and voltage regardless of their load reach temperatures that make it impossible not only to stop the box cooler, but even to reduce its rotational speed. Moreover, smart control in this situation may have the opposite effect. If a cooler is rather noisy even at an average rotational speed, periodic changes in speed will certainly be more irritating than a loud but monotonous hum.
However, none of the above excuses motherboard designers, who have had enough time to grasp the positive differences between the "advanced 64-bit number crunchers" and the rest. We shall not point out the guilty. We just recommend products from the "Group of Three" if you want to get the "Quiet" part for sure. Perhaps the others will catch up, as in the majority of cases it is only a matter of adding the corresponding support to BIOS. The hardware logic on motherboards is already there; the proof is SpeedFan and similar utilities that control the rotational speed of coolers.

Meanwhile, AMD is thinking about helping its slow partners. Boxed processors have long included coolers with thermistors. Of course, this is only indirect control and cannot affect rpm radically (temperatures are read from the heatsink, not from the processor core, and the safety margin is very large to avoid overheating). However, even this control method accomplishes the main task: switching the box cooler to low-noise rpm.

OK, let's agree that we have met the hardware requirements! So it's high time to activate Cool & Quiet according to all the rules. Let's enable the Cool & Quiet option in BIOS (except on Gigabyte motherboards, where this option is locked in the enabled state and is not accessible in BIOS Setup). If you wish, you can set the maximum and minimum clocks and voltages in the FID and VID options (however, if you want to interfere with the Cool & Quiet mechanics, it is much more convenient to use RightMark CPU Clock Utility and leave the BIOS settings at their defaults, which correspond to the maximum range). Let's also enable Q-Fan/Smart Fan. Some motherboards let you choose a control algorithm, that is, specify the balance between cooler activity and the admissible operating temperature of the processor.
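The idea behind such a control algorithm can be sketched as a simple temperature-to-speed curve. This is a hypothetical illustration, not the algorithm of any particular motherboard: the function name, the linear ramp, and the default thresholds are all assumptions chosen for the example.

```python
def fan_duty(temp_c: float, t_idle: float = 40.0, t_max: float = 60.0,
             min_duty: float = 0.0) -> float:
    """Map a CPU temperature to a fan duty cycle between min_duty and 1.0.

    At or below t_idle the fan runs at min_duty (0.0 means stopped); at or
    above t_max it runs at full speed; in between the duty rises linearly.
    """
    if temp_c <= t_idle:
        return min_duty
    if temp_c >= t_max:
        return 1.0
    fraction = (temp_c - t_idle) / (t_max - t_idle)
    return min_duty + fraction * (1.0 - min_duty)


# Print the curve for a few sample temperatures.
for temp in (35, 45, 50, 55, 60, 65):
    print(f"{temp} C -> {fan_duty(temp):.0%} duty")
```

Raising `t_max` keeps the fan quieter for longer at the cost of a hotter processor, while a non-zero `min_duty` suits coolers that should never stop completely. That trade-off is what the BIOS option exposes.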
From our experience we know that if you set the maximum temperature within 55-60°C, the box cooler will have to run at maximum rpm only during lengthy operations under maximum load, regardless of the PC case type and other details. If the air temperature inside the PC case does not exceed 35-40°C (which is important for stable operation of the other components anyway), and the CPU clock stays at the minimum 800-1000 MHz and rises only for short periods of time (typical work with text, graphics, and other office/home applications), the fan will indeed be able to remain idle during your entire work session. Core temperature in this mode exceeds the air temperature inside the PC case by no more than 10-15°C. That holds true even for processors based on 0.13 micron steppings and for dual-core models. Our observations are backed up by Athlon 64 systems assembled in various PC cases, including tight Mini-Towers whose only fan is in the PSU.

When we are finished with BIOS, let's boot into Windows (Windows XP SP1 or higher is advisable) and install the Cool & Quiet driver, which can be found on the CD with motherboard drivers or downloaded separately (select the processor in the Device Manager and update its driver). This operation is not necessary for Windows XP SP2. As a result, we get a new power management scheme, "Power saving manager", which must be activated.

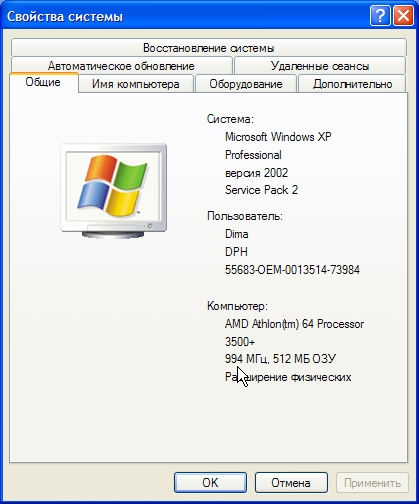

To open this window, go to Display Properties -> Screen Saver -> Power...

Everything is ready! You can make sure that Cool & Quiet is active by opening the main System window: the clock shown there will be the current one. To make sure that the cooler control is all right, leave your computer idle for several minutes. The rotational speed of the cooler should at least go down significantly.

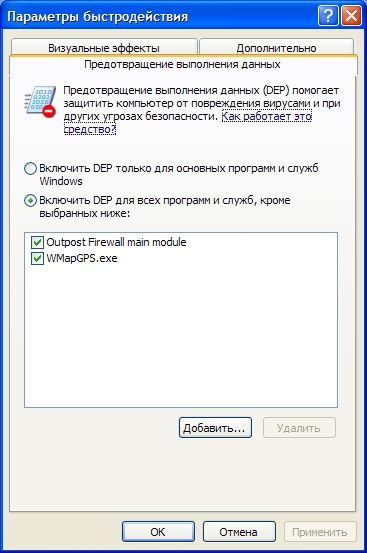

System Properties window

What should you do if everything is configured correctly, but the clock does not go down, or, on the contrary, it goes down when idle but does not return to the maximum automatically? Or if the motherboard increases the voltage, but the processor still gets hotter? Our first recommendation (sufficient in most cases!) is to update BIOS to the latest version, especially if you have a processor on one of the 0.09 micron steppings and your motherboard was not manufactured yesterday. The second step is our RightMark CPU Clock Utility, which can often help organize automatic management even for old motherboards from the Socket 754 black book. Besides, the minimum clock and voltage available via this utility are usually lower than those available via the official driver. So it is reasonable to use this utility in any case if you want to perfect the power saving idea.

NX-bit, AMD64, SSE2, etc.

Strictly speaking, support for all existing extensions must be written into BIOS (though there still may be no corresponding options in BIOS Setup). The AMD64 extension proper does not require any user intervention: its support has been written into BIOS since the first versions, and there are absolutely no options to enable or block it in BIOS Setup. So you can install the freshly baked Windows XP Professional x64 on such a computer straight away. But, strange as it may seem, an SSE2 option appears even in modern motherboards. Of course, it must be enabled.

It takes a long path to enable the antivirus DEP feature in Windows XP SP2, that is, hardware protection from executing code written into a data area (such areas are marked with a special No eXecution bit, and an execution attempt results in a standard memory protection exception): open System Properties, on the Advanced tab, under Performance, click Settings, then on the Data Execution Prevention tab click "Turn on DEP for all programs and services except those I select".

To open this window, go to System Properties -> Advanced -> Performance -> Settings -> Data Execution Prevention

Don't select anything in the list below: the system will offer to add programs (firewalls, antivirus, etc.) to this list when the protection is triggered and the exception is raised for the first time. Of course, Windows may fail to "process" some of your programs correctly. In these programs, code is executed from data memory for peaceful purposes, as a programming technique (possible, even if not quite correct), so they will crash without any warning from the protection system. They will have to be added to the list manually (so that they are still allowed to execute code from the data area). Better still, find out whether the problem has been fixed in a more natural way (a patch or a new program version).

Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.