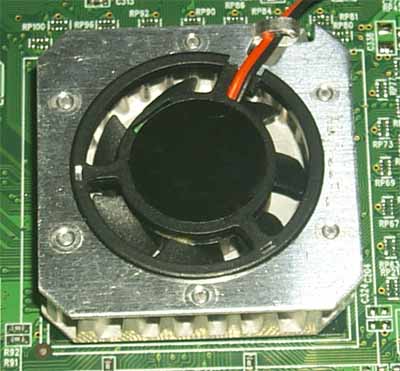

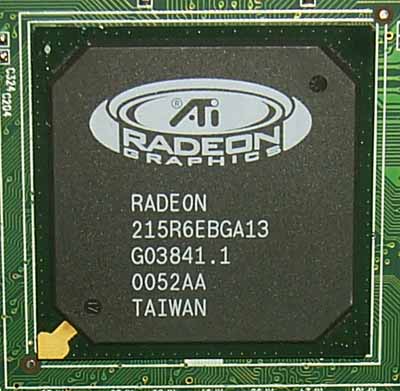

We have not reviewed RADEON video cards (as opposed to the RADEON VE) for a long time, because such cards are produced only by ATI; you will hardly find any other company making them. Although ATI announced its new strategy back in the spring, Taiwanese and Chinese firms still manufacture nothing but cheap RADEON VE cards. Moreover, the RADEON LE cards made by one of ATI's Chinese partners on defective chips are not worth a separate article; it is quite enough to publish their results in the 3Digest.

The RADEON's architecture is closer to the GeForce2 MX than to the GeForce2 GTS: it has 2 pixel pipelines, not 4. The fact that each pipeline drives 3 texture units instead of 2 hardly benefits the RADEON, as almost no games can exploit this advantage over the GeForce2 MX. Only Serious Sam and a couple of little-known games can use all 6 texture units of the RADEON.

It might seem that the RADEON should be on a par with the GeForce2 MX, yet it is mainly compared with the GeForce2 GTS, which has the more powerful architecture. The point is that NVIDIA has held back the GeForce2 MX cards by equipping them with SDR memory, which does not let even a 2-pipeline chip work at its full potential. The normal RADEON has DDR memory, which lifts it a level up. Why "normal"? Because the RADEON family is large, and it includes some video cards with SDR memory.

But still, how can a dual-pipeline RADEON, even with DDR memory, compete against the 4-pipeline GeForce2 GTS? When the competitors were released, the RADEON 32/64 MBytes DDR and the GeForce2 GTS 32/64 MBytes DDR were both equipped with 166 (333) MHz DDR memory. The RADEON in the Retail package had even higher frequencies: 183 (366) MHz. These clock speeds suited the RADEON excellently and the card was well balanced, whereas for the 4-pipeline GeForce2 GTS such memory was a bottleneck that limited performance, above all in 32-bit color.
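The balance argument above can be checked with some rough arithmetic. The following is only a sketch under stated assumptions, not a measurement: the 128-bit memory bus, the 200 MHz GeForce2 GTS core clock, and the 175 MHz core / 166 MHz SDR clocks for the GeForce2 MX are assumed figures that the text above does not give, and the 12-byte cost per 32-bit pixel (color write, Z read, Z write) is a simplified model that ignores texture traffic. The `Card` class and its method names are hypothetical helpers made up for this illustration.

```python
from dataclasses import dataclass


@dataclass
class Card:
    name: str
    pipelines: int            # pixel pipelines, as quoted in the article
    core_mhz: float           # core clock (ASSUMED for the NVIDIA cards)
    mem_effective_mhz: float  # effective memory clock (DDR counted twice)
    bus_bits: int = 128       # ASSUMPTION: 128-bit memory bus on every card

    def bandwidth_mb_s(self) -> float:
        """Peak memory bandwidth in MB/s: bus width in bytes times clock."""
        return self.bus_bits / 8 * self.mem_effective_mhz

    def peak_mpixels_s(self) -> float:
        """Peak fill rate in Mpixels/s: one pixel per pipeline per clock."""
        return self.pipelines * self.core_mhz

    def bytes_per_pixel(self) -> float:
        """Memory bandwidth available per pixel at peak fill rate."""
        return self.bandwidth_mb_s() / self.peak_mpixels_s()


# Simplified 32-bit color cost per pixel: color write + Z read + Z write.
BYTES_NEEDED_32BIT = 4 + 4 + 4

cards = [
    # 166/166 (333) MHz is the OEM RADEON figure quoted in the article.
    Card(name="RADEON DDR (OEM)", pipelines=2, core_mhz=166, mem_effective_mhz=333),
    # ASSUMED core clock of 200 MHz; memory clock as quoted in the article.
    Card(name="GeForce2 GTS", pipelines=4, core_mhz=200, mem_effective_mhz=333),
    # ASSUMED clocks: 175 MHz core, 166 MHz SDR memory.
    Card(name="GeForce2 MX (SDR)", pipelines=2, core_mhz=175, mem_effective_mhz=166),
]

for card in cards:
    ratio = card.bytes_per_pixel() / BYTES_NEEDED_32BIT
    print(f"{card.name:18s} {card.bandwidth_mb_s():6.0f} MB/s  "
          f"{card.bytes_per_pixel():5.2f} bytes/pixel  "
          f"({ratio:.2f}x of the 12-byte budget)")
```

Under these assumptions the RADEON has roughly 16 bytes of bandwidth for every pixel it can draw at peak rate, which is above the 12-byte budget, while the GeForce2 GTS has fewer than 7 and the SDR GeForce2 MX fewer than 8. That is consistent with the point made above: the same memory that keeps a 2-pipeline chip fed starves a 4-pipeline one in 32-bit color.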
If you take into account ATI's HyperZ technology, which optimizes work with the Z-buffer, you will understand how a dual-pipeline chip can outscore a 4-pipeline one in 32-bit color at high resolutions. The RADEON had every prospect of success, but ATI's clumsy pricing policy and the talentless programmers of its software department reduced all the advantages to zero. The price was 30% higher than that of the NVIDIA GeForce2 GTS cards! Moreover, you might remember what a flood of problems the drivers had.

The Canadian company, however, improved. The new drivers had fewer and fewer bugs. The programmers reacted very quickly to problems in games, and the new versions, which were released quite often, pleased us with high quality and performance. Besides, the cards dropped in price and thus became much more popular.

Unfortunately, not all the problems were eliminated. The Chinese RADEON LE cards, equipped with the same 6 ns DDR memory as the normal cards, were 1.5-2 times cheaper. That made these cards popular: they had good overclocking potential and could replace the usual RADEON 32 MBytes DDR. But these cards are sold at cost price, and ATI made almost no income on them. Besides, the RADEON LE cards held back sales of normal RADEON 32 MBytes DDR cards in Russia and China. The LE market was limited: the cards could not be found in the USA or Europe, but they glutted the Chinese and other Asian markets. The RADEON 32 MBytes DDR cards have now been selling at $125-130 for half a year, and the LE cards are extremely cheap ($75-80). Bear in mind that I mean cards whose production is connected with ATI; even the LE cards made by the Chinese company have a PCB from ATI.

After ATI lost to NVIDIA in the gaming video card market, it understood that it should sell its chips and establish relationships with Asian card makers, who could bring products to end users much faster. Some major companies have already started producing video cards on ATI processors.
Jetway, which is known for its motherboards, has launched a normal 64 MB RADEON-based card with DDR memory. Such a card costs only $100 against $150-160 for a similar card from ATI. So today we are going to examine the Jetway Fantasy RADEON-A1 64 MBytes DDR card.

Card

The Jetway Fantasy RADEON-A1 64 MBytes DDR has an AGP x2/x4 interface and 64 MBytes of DDR SDRAM in 8 chips located on the right and back sides of the PCB.

The memory chips are produced by Hyundai (Hynix) and have a 5.5 ns access time, which corresponds to 183 (366) MHz. The card works at 169/169 (338) MHz. That is very close to the OEM versions of RADEON-based cards from ATI, at 166/166 (333) MHz, and a little lower than the retail versions clocked at 183/183 (366) MHz. That is why we should expect performance on the level of the well-known RADEON 64 MBytes DDR from ATI.

This card is based on ATI's design. Jetway Fantasy RADEON-A1 64 MBytes DDR (on the left) and ATI RADEON 64 MBytes DDR (on the right):

While the right parts of both cards are identical, the left ones are different. First of all, the Jetway Fantasy RADEON-A1 64 MBytes DDR has a different layout because it lacks the RAGE Theater coprocessor, which implements multimedia functions. The PCB of both cards is bright green.

The cooler of the Jetway card has nothing in common with ATI's one. The last time I saw such a design was in 1998, on the Hercules Dynamite TNT card. However, the cooler on ATI's cards was rather a decorative part: the chip could work flawlessly at 183 MHz.

If we take the cooler off, we can look at the GPU:

The card has neither TV-out nor DVI.

The Retail package contains:

Overclocking

The Jetway Fantasy RADEON-A1 64 MBytes DDR reached 210/210 (420) MHz. Since the chip and memory frequencies are synchronized, we previously could not get more than 200 MHz on ATI's own cards, where the processor was the limiting factor.

Note:

Installation and drivers

Test system:

During the tests we used ATI drivers v.7.189; VSync was off and S3TC technology was enabled. For the comparative analysis we used the following cards:

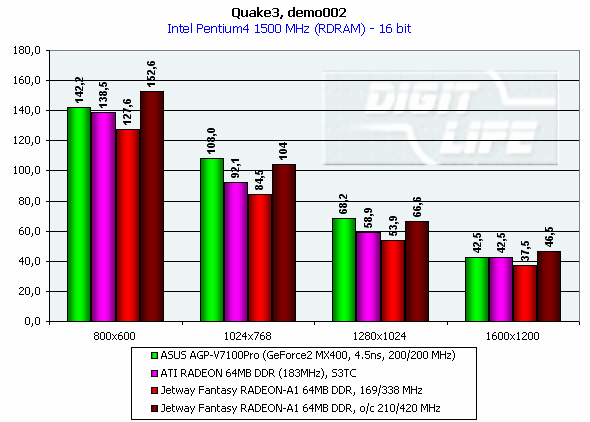

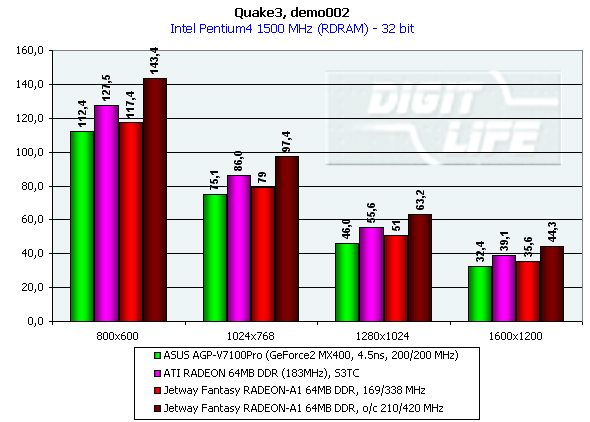

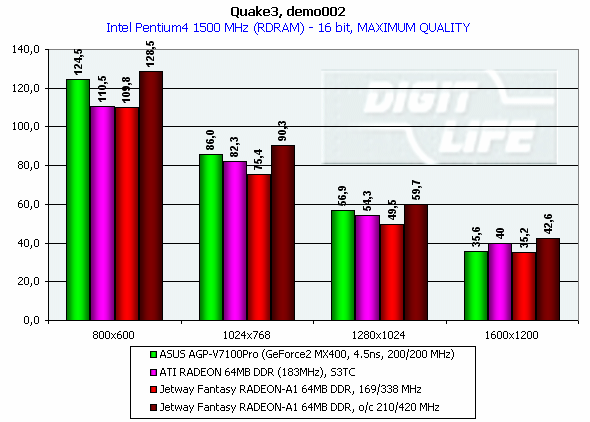

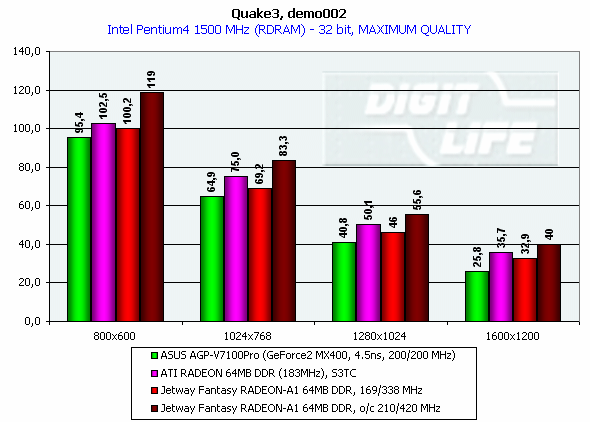

We chose the MX400 card because this notable card from ASUS, with 4.5 ns memory, is equal in price to the Jetway Fantasy RADEON-A1 64 MBytes DDR (as of October 20, 2001, it is $90-95).

Test results

The 2D quality of RADEON-based cards has always been excellent. The Jetway Fantasy RADEON-A1 64 MBytes DDR performs quite well, and you can work without any problems at 1600x1200 at 75 Hz.

For estimation of 3D quality we used id Software's Quake3 v.1.17, a game test which demonstrates the operation of a card in OpenGL, with the standard benchmark demo002.

Quake3 Arena

demo002, standard modes

The tests were carried out in two modes: Fast (16-bit color) and High Quality (32-bit color).

The performance of our hero is expectedly lower than that of the faster ATI card. And while in 16-bit color the RADEON cards lose to NVIDIA's card, in 32-bit color the Jetway Fantasy RADEON-A1 64 MBytes DDR thrives (even overclocked, the GeForce2 MX400 falls far behind).

demo002, Highest Quality

The tests were again carried out in 16- and 32-bit color, this time at the highest level of detail. The scenes were made more complex by dividing the objects into a greater number of polygons: r_lodCurveError "30000" r_subdivisions "1".

Well, the situation is very similar.

Conclusion

Today we have studied a unique product, a pioneer in its field. As I have already mentioned, ATI started selling its graphics processors to third-party companies not so long ago, and this is the first time we can look at a card on the normal RADEON with 64 MBytes of DDR memory produced by Jetway. As you can see, producing relatively cheap video cards on the RADEON is justified. Moreover, this card is equipped to match the chipset's capabilities: 64 MBytes of fast DDR memory. Besides, the card does not yield to its ATI brothers in frequencies. The complete characteristics of video cards of this and other classes can be found in our 3Digest.

Highs:

Lows:

Copyright © Byrds Research & Publishing, Ltd., 1997–2011. All rights reserved.